Shun Li

ALIGN: Adversarial Learning for Generalizable Speech Neuroprosthesis

Mar 18, 2026

Abstract:Intracortical brain-computer interfaces (BCIs) can decode speech from neural activity with high accuracy when trained on data pooled across recording sessions. In realistic deployment, however, models must generalize to new sessions without labeled data, and performance often degrades due to cross-session nonstationarities (e.g., electrode shifts, neural turnover, and changes in user strategy). In this paper, we propose ALIGN, a session-invariant learning framework based on multi-domain adversarial neural networks for semi-supervised cross-session adaptation. ALIGN trains a feature encoder jointly with a phoneme classifier and a domain classifier operating on the latent representation. Through adversarial optimization, the encoder is encouraged to preserve task-relevant information while suppressing session-specific cues. We evaluate ALIGN on intracortical speech decoding and find that it generalizes consistently better to previously unseen sessions, improving both phoneme error rate and word error rate relative to baselines. These results indicate that adversarial domain alignment is an effective approach for mitigating session-level distribution shift and enabling robust longitudinal BCI decoding.
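
One common way to set up this kind of adversarial objective is a gradient reversal step between the encoder and the domain classifier. The abstract does not say whether ALIGN uses this mechanism, so the following is only a minimal sketch under that assumption. The class `GradientReversal`, the helper `encoder_gradient`, the weight `lam`, and all numeric values are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class GradientReversal:
    """Identity in the forward pass; multiplies gradients by -lam going backward."""
    lam: float = 1.0  # hypothetical trade-off weight, not taken from the paper

    def forward(self, features):
        # The domain classifier sees the latent features unchanged.
        return list(features)

    def backward(self, grad_from_domain_classifier):
        # The sign flip pushes the encoder to make sessions harder to tell apart.
        return [-self.lam * g for g in grad_from_domain_classifier]


def encoder_gradient(task_grad, domain_grad, grl):
    """Sum the phoneme-task gradient and the reversed domain gradient."""
    reversed_grad = grl.backward(domain_grad)
    return [t + d for t, d in zip(task_grad, reversed_grad)]


grl = GradientReversal(lam=0.5)
latent = [0.2, -1.0, 3.0]          # made-up latent representation
task_grad = [1.0, 0.0, -2.0]       # made-up gradient from the phoneme classifier
domain_grad = [0.4, 0.4, 0.4]      # made-up gradient from the domain classifier
combined = encoder_gradient(task_grad, domain_grad, grl)
print(combined)
```

The domain classifier itself is still trained to identify the session; only the gradient reaching the encoder is flipped, which is what makes the two objectives adversarial.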

Traditional Transformation Theory Guided Model for Learned Image Compression

Feb 24, 2024

Abstract:Recently, many deep image compression methods have been proposed and have achieved remarkable performance. However, these methods focus on optimizing compression performance and speed at medium and high bitrates, while research on ultra-low bitrates is limited. In this work, we propose an invertible encoding network, enhanced for ultra-low bitrates and guided by traditional transformation theory. Experiments show that our codec outperforms existing methods in both compression and reconstruction performance. Specifically, we introduce the Block Discrete Cosine Transformation to model the sparsity of features and employ the traditional Haar transformation to improve the reconstruction performance of the model without increasing the bitstream cost.
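
For readers unfamiliar with the Haar transformation, the sketch below shows a one-level orthonormal Haar transform on a 1-D signal and its exact inverse. It illustrates the textbook transform only; how the paper integrates Haar into its network is not described in the abstract. The function names and sample values are hypothetical.

```python
import math


def haar_forward(x):
    """One-level orthonormal Haar transform: pairwise sums and differences."""
    if len(x) % 2 != 0:
        raise ValueError("input length must be even")
    s = math.sqrt(2.0)
    approx = [(x[i] + x[i + 1]) / s for i in range(0, len(x), 2)]
    detail = [(x[i] - x[i + 1]) / s for i in range(0, len(x), 2)]
    return approx, detail


def haar_inverse(approx, detail):
    """Invert haar_forward, recovering the original samples."""
    s = math.sqrt(2.0)
    x = []
    for a, d in zip(approx, detail):
        x.extend([(a + d) / s, (a - d) / s])
    return x


signal = [4.0, 4.0, 2.0, 6.0]  # made-up sample values
approx, detail = haar_forward(signal)
restored = haar_inverse(approx, detail)
print(approx, detail, restored)
```

Because the transform is invertible, no information is lost in the split into approximation and detail coefficients, and flat regions produce zero detail coefficients.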

Identifying Emotion from Natural Walking

Sep 10, 2015

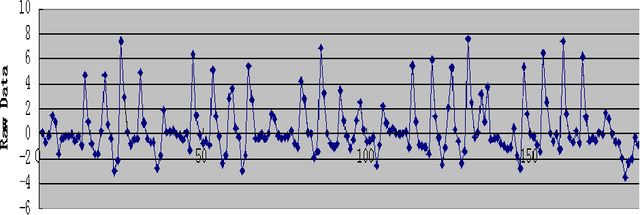

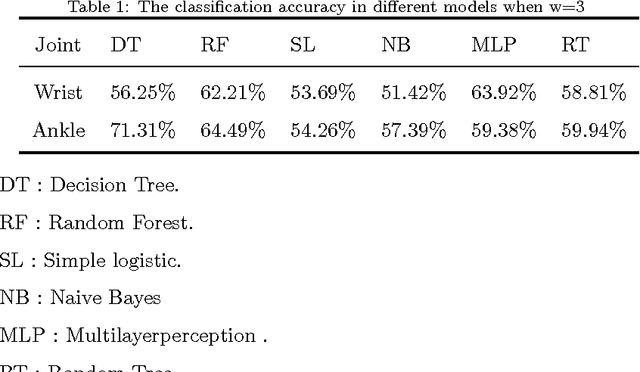

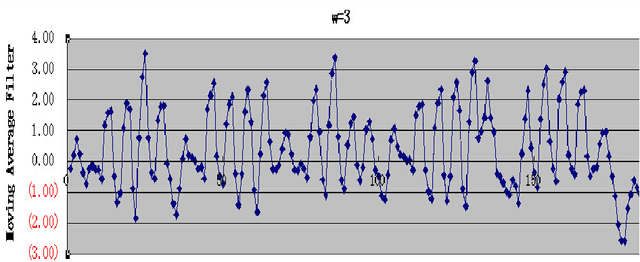

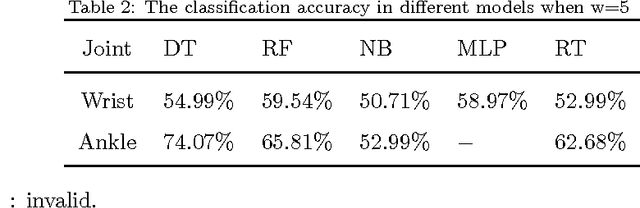

Abstract:Emotion identification from gait aims to automatically determine a person's affective state. It has attracted a great deal of interest and offers immense potential value in action tendency, health care, psychological detection and human-computer (robot) interaction. In this paper, we propose a new method of identifying emotion from natural walking, and analyze the relevance between the traits of walking and affective states. After obtaining the pure acceleration data of the wrist and ankle, we apply a moving average filter with different window sizes w, then extract 114 features, including time-domain, frequency-domain, power and distribution features, from each data slice, and run principal component analysis (PCA) to reduce the dimension. In experiments, we train SVM, Decision Tree, multilayer perceptron, Random Tree and Random Forest classification models, and compare the classification accuracy on wrist and ankle data with respect to different w. Emotion identification performs better on ankle acceleration data than on wrist data. Among the classification models, SVM has the best accuracy: identifying anger and happy achieves 90.31% and 89.76% respectively, and the identification ratio of anger-happy is 87.10%. The anger-neutral-happy classification reaches 85%-78%-78%. The results show that personal emotional states can be identified from walking gait.
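
The first two steps of this pipeline, smoothing with a moving average of window size w and computing per-slice features, can be sketched as follows. This is a minimal illustration, not the authors' implementation: the three features shown are a small stand-in for the 114 features mentioned in the abstract, and the function names and acceleration values are hypothetical.

```python
from statistics import mean, pstdev


def moving_average(signal, w):
    """Smooth a 1-D signal with a length-w moving average (valid positions only)."""
    if w < 1 or w > len(signal):
        raise ValueError("w must satisfy 1 <= w <= len(signal)")
    return [sum(signal[i:i + w]) / w for i in range(len(signal) - w + 1)]


def time_domain_features(data_slice):
    """A few illustrative time-domain features; not the paper's 114-feature set."""
    return {
        "mean": mean(data_slice),
        "std": pstdev(data_slice),
        "range": max(data_slice) - min(data_slice),
    }


accel = [1.0, 2.0, 3.0, 4.0, 5.0]  # made-up acceleration samples
smoothed = moving_average(accel, w=3)
features = time_domain_features(smoothed)
print(smoothed, features)
```

In a full pipeline, the feature vectors from all slices would then go through PCA and a classifier such as an SVM; those stages are omitted here to keep the sketch dependency-free.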

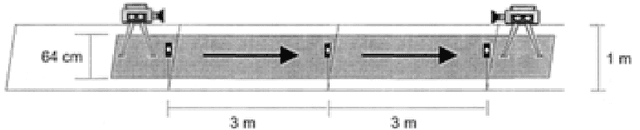

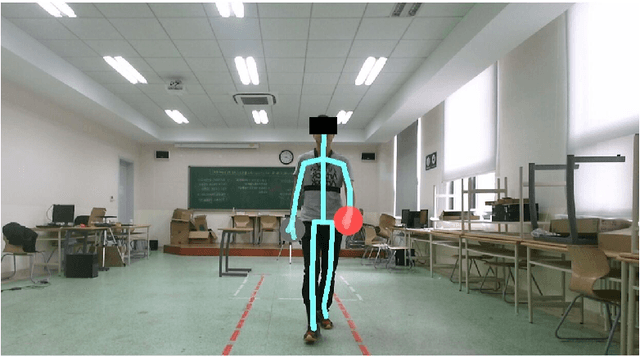

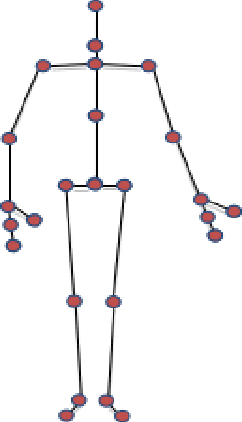

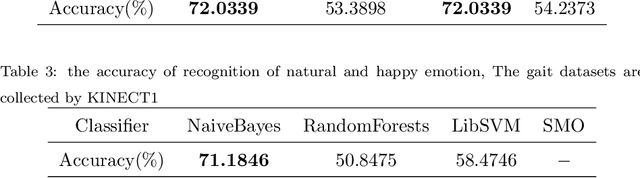

Recognition of Emotions using Kinects

Aug 04, 2015

Abstract:Psychological studies indicate that emotional states are expressed in the way people walk, and human gait has been investigated in terms of its ability to reveal a person's emotional state. Microsoft Kinect is a rapidly developing, inexpensive, portable, markerless motion capture system. This paper presents a new method for emotion recognition that uses Microsoft Kinect for gait pattern analysis, which has not been reported before. In this study, 59 subjects were recruited and their gait patterns were recorded by two Kinect cameras. Data preprocessing consists of significant joint selection, coordinate system transformation, sliding-window Gaussian filtering, differential operation, and data segmentation. Feature extraction is based on the Fourier transform. Using the NaiveBayes, RandomForests, libSVM and SMO classifiers, the recognition rate of natural and unnatural emotions can exceed 70%. We conclude that the Kinect system offers a new method for emotion recognition.
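
Three of the steps named above (sliding-window Gaussian filtering, the differential operation, and Fourier-based features) can be sketched on a single joint coordinate as follows. The window size, sigma, edge handling, and the use of raw DFT magnitudes as features are assumptions made for illustration; the abstract does not specify them, and all function names and values are hypothetical.

```python
import cmath
import math


def gaussian_smooth(signal, window=5, sigma=1.0):
    """Sliding-window Gaussian filter; weights are renormalized at the edges."""
    half = window // 2  # an odd window is assumed
    offsets = range(-half, half + 1)
    weights = [math.exp(-(k * k) / (2.0 * sigma * sigma)) for k in offsets]
    out = []
    for i in range(len(signal)):
        num = den = 0.0
        for k, wgt in zip(offsets, weights):
            j = i + k
            if 0 <= j < len(signal):
                num += wgt * signal[j]
                den += wgt
        out.append(num / den)
    return out


def first_difference(signal):
    """Differential operation: change between consecutive frames."""
    return [b - a for a, b in zip(signal, signal[1:])]


def dft_magnitudes(signal):
    """Magnitude of each discrete Fourier coefficient (naive O(n^2) DFT)."""
    n = len(signal)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(signal)))
        for k in range(n)
    ]


joint_y = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0, 0.0]  # made-up trajectory
features = dft_magnitudes(first_difference(gaussian_smooth(joint_y)))
print(features)
```

The naive DFT keeps the sketch free of external dependencies; for real recordings a fast Fourier transform routine from a numerical library would be the usual choice.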
