Shumpei Hatanaka

Nearest Neighbor Future Captioning: Generating Descriptions for Possible Collisions in Object Placement Tasks

Jul 18, 2024

Abstract: Domestic service robots (DSRs) that support people in everyday environments have been widely investigated. However, their ability to predict and describe future risks resulting from their own actions remains insufficient. In this study, we focus on the linguistic explainability of DSRs. Most existing methods do not explicitly model the region of possible collisions; thus, they do not properly generate descriptions of these regions. In this paper, we propose the Nearest Neighbor Future Captioning Model, which introduces the Nearest Neighbor Language Model for future captioning of possible collisions and enhances the model output with a nearest-neighbor retrieval mechanism. Furthermore, we introduce the Collision Attention Module, which attends to regions of possible collisions and enables our model to generate descriptions that adequately reflect the objects associated with possible collisions. To validate our method, we constructed a new dataset containing samples of collisions that can occur when a DSR places an object in a simulation environment. The experimental results demonstrated that our method outperformed baseline methods on standard metrics. In particular, on CIDEr-D, the baseline method obtained 25.09 points, whereas our method obtained 33.08 points.
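The nearest-neighbor retrieval idea mentioned in the abstract can be illustrated with a minimal sketch of the general kNN language model interpolation, in which a base next-token distribution is blended with a distribution built from retrieved neighbors. This is not the authors' implementation: the function name `knn_lm_interpolate`, the datastore layout (`keys`, `values`), and the parameters `k`, `lam`, and `temperature` are all assumptions made for illustration.

```python
import numpy as np

def knn_lm_interpolate(p_lm, query, keys, values, k=4, lam=0.25, temperature=1.0):
    """Blend a base next-token distribution with a kNN-retrieved one.

    p_lm   : (vocab,) base model probabilities for the next token
    query  : (dim,) context embedding at the current decoding step
    keys   : (n, dim) stored context embeddings (hypothetical datastore)
    values : (n,) integer token ids that followed each stored context
    """
    vocab_size = p_lm.shape[0]
    # Squared L2 distance from the query to every stored key.
    dists = np.sum((keys - query) ** 2, axis=1)
    k = min(k, len(keys))
    nn_idx = np.argsort(dists)[:k]
    # Softmax over negative distances: closer neighbors get more weight.
    logits = -dists[nn_idx] / temperature
    weights = np.exp(logits - logits.max())
    weights /= weights.sum()
    # Accumulate neighbor weights onto the tokens they point to.
    p_knn = np.zeros(vocab_size)
    np.add.at(p_knn, values[nn_idx], weights)
    # Linear interpolation between the retrieved and base distributions.
    return lam * p_knn + (1.0 - lam) * p_lm

# Toy usage with a 3-token vocabulary and a 2-entry datastore.
p_lm = np.full(3, 1.0 / 3.0)
keys = np.array([[0.0, 0.0], [10.0, 10.0]])
values = np.array([2, 0])
p = knn_lm_interpolate(p_lm, np.array([0.0, 0.0]), keys, values, k=1, lam=0.5)
```

In this toy call the single nearest neighbor points to token 2, so the blended distribution shifts probability mass toward that token while still summing to one.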

Multimodal Diffusion Segmentation Model for Object Segmentation from Manipulation Instructions

Jul 17, 2023

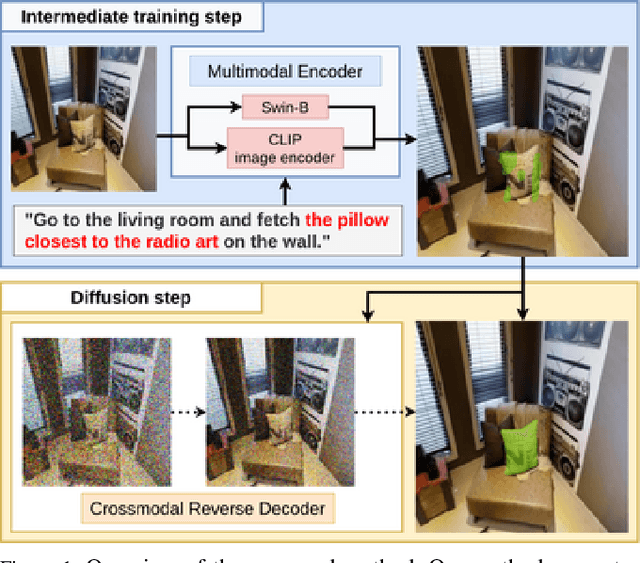

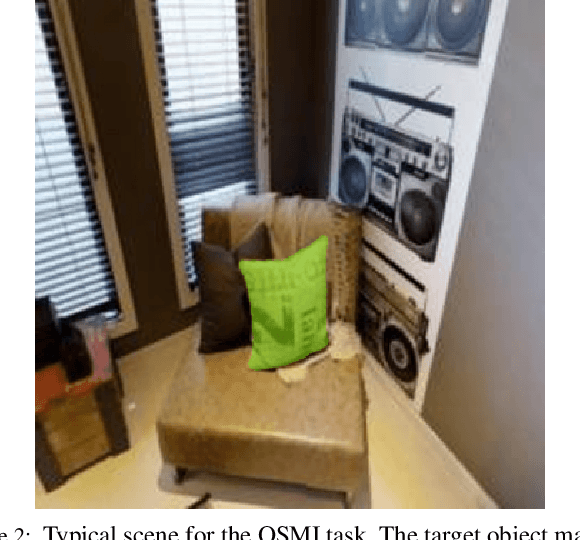

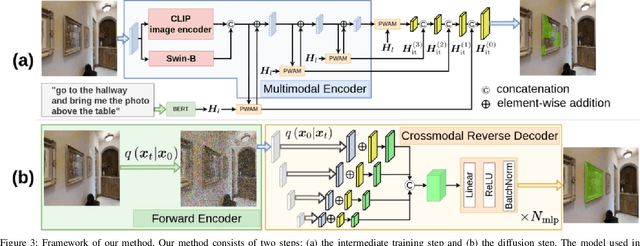

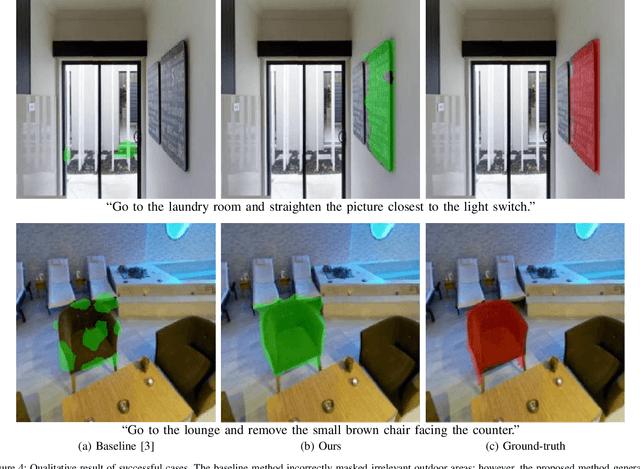

Abstract: In this study, we aim to develop a model that comprehends a natural language instruction (e.g., "Go to the living room and get the nearest pillow to the radio art on the wall") and generates a segmentation mask for the target everyday object. The task is challenging because it requires (1) the understanding of the referring expressions for multiple objects in the instruction, (2) the prediction of the target phrase of the sentence among the multiple phrases, and (3) the generation of pixel-wise segmentation masks rather than bounding boxes. Studies have been conducted on language-based segmentation methods; however, they sometimes mask irrelevant regions for complex sentences. In this paper, we propose the Multimodal Diffusion Segmentation Model (MDSM), which generates a mask in the first stage and refines it in the second stage. We introduce a crossmodal parallel feature extraction mechanism and extend diffusion probabilistic models to handle crossmodal features. To validate our model, we built a new dataset based on the well-known Matterport3D and REVERIE datasets. This dataset consists of instructions with complex referring expressions accompanied by real indoor environmental images that feature various target objects, in addition to pixel-wise segmentation masks. The performance of MDSM surpassed that of the baseline method by a large margin of +10.13 mean IoU.
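For readers unfamiliar with the reported metric, the following is a minimal sketch of one common way to compute mean IoU over binary segmentation masks: the per-sample intersection over union, averaged across samples. The paper may define the metric differently (for example, averaged over thresholds or classes), so the helper names `mask_iou` and `mean_iou` and the empty-mask convention are assumptions.

```python
import numpy as np

def mask_iou(pred, target):
    """Intersection over union of two binary masks of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        # Assumed convention: two empty masks count as a perfect match.
        return 1.0
    return np.logical_and(pred, target).sum() / union

def mean_iou(preds, targets):
    """Average per-sample IoU over paired lists of masks."""
    return float(np.mean([mask_iou(p, t) for p, t in zip(preds, targets)]))

# Toy usage: one half-overlapping pair and one identical pair.
preds = [np.array([[1, 1], [0, 0]]), np.array([[0, 1], [0, 1]])]
targets = [np.array([[1, 0], [0, 0]]), np.array([[0, 1], [0, 1]])]
score = mean_iou(preds, targets)
```

Multiplying the result by 100 gives a value on the points scale typically used when reporting gains such as the one in the abstract.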
