Shukai Wang

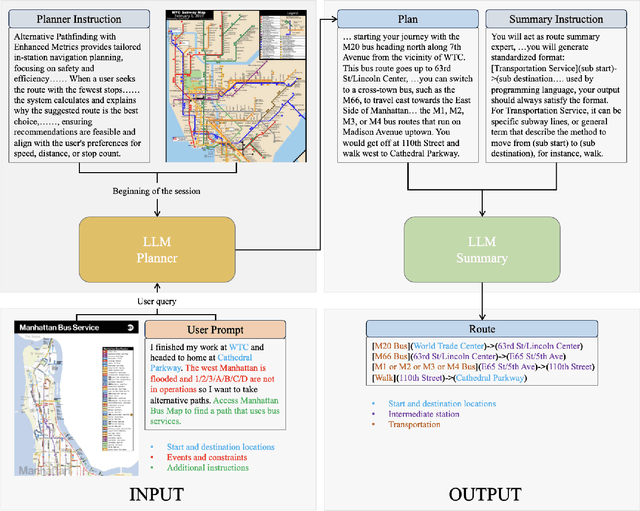

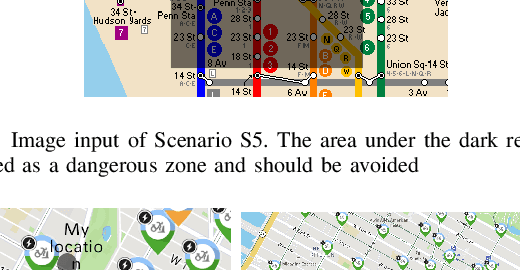

TraveLLM: Could you plan my new public transit route in face of a network disruption?

Jul 20, 2024

Abstract: Imagine there is a disruption on train 1 near the Times Square metro station. You try to find an alternative subway route to JFK airport on Google Maps, but the app fails to provide a suitable recommendation that takes into account the disruption and your preference to avoid crowded stations. We find that in many such situations, current navigation apps may fall short and fail to give a reasonable recommendation. To fill this gap, we develop a prototype, TraveLLM, that relies on Large Language Models (LLMs) to plan public transit routes in the face of disruptions. LLMs have shown remarkable capabilities in reasoning and planning across various domains. Here we investigate the potential of LLMs to incorporate multi-modal, user-specific queries and constraints into public transit route recommendations. We design test cases under different scenarios, including varying weather conditions, emergency events, and the introduction of new transportation services. We then compare the performance of state-of-the-art LLMs, including GPT-4, Claude 3, and Gemini, in generating accurate routes. Our comparative analysis demonstrates the effectiveness of LLMs, particularly GPT-4, in providing navigation plans. Our findings suggest that LLMs have the potential to enhance existing navigation systems and provide a more flexible and intelligent method for addressing diverse user needs in the face of disruptions.

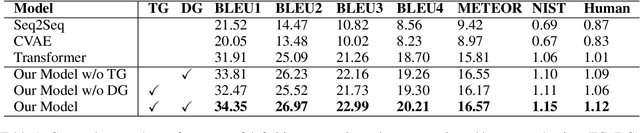

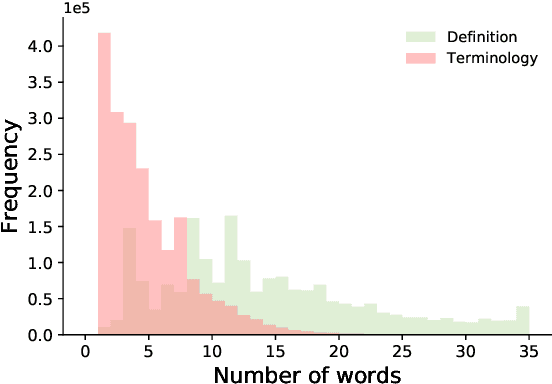

Graphine: A Dataset for Graph-aware Terminology Definition Generation

Sep 09, 2021

Abstract: Precisely defining terminology is the first step in scientific communication. Developing neural text generation models for definition generation can circumvent labor-intensive curation, further accelerating scientific discovery. Unfortunately, the lack of a large-scale terminology definition dataset hinders progress toward definition generation. In this paper, we present Graphine, a large-scale terminology definition dataset covering 2,010,648 terminology definition pairs and spanning 227 biomedical subdisciplines. The terminologies in each subdiscipline further form a directed acyclic graph, opening up new avenues for developing graph-aware text generation models. We then propose Graphex, a novel graph-aware definition generation model that integrates a transformer with a graph neural network. Our model outperforms existing text generation models by exploiting the graph structure of terminologies. We further demonstrate how Graphine can be used to evaluate pretrained language models, compare graph representation learning methods, and predict sentence granularity. We envision Graphine as a unique resource for definition generation and many other NLP tasks in biomedicine.
