Shengpei Wang

Human-like object concept representations emerge naturally in multimodal large language models

Jul 01, 2024

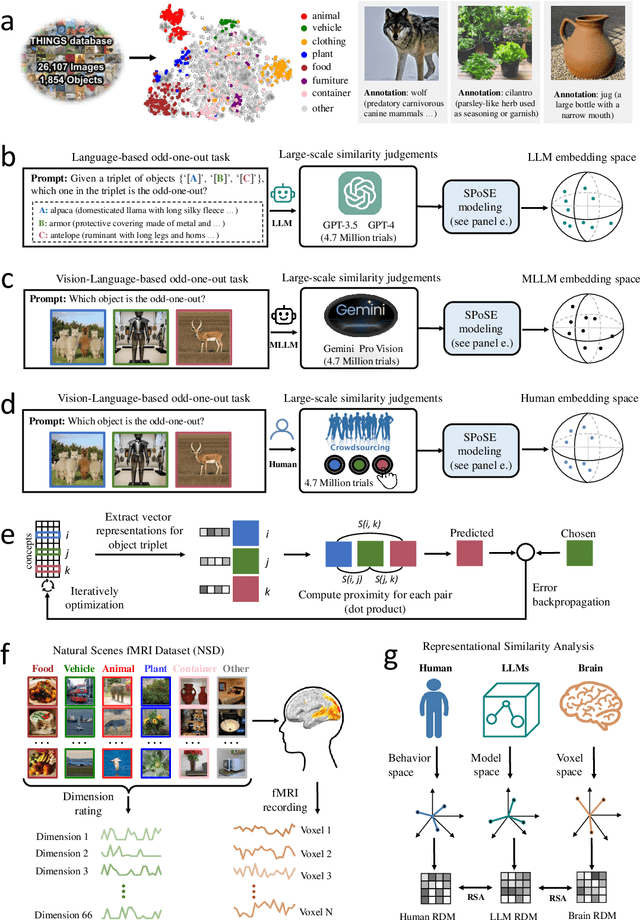

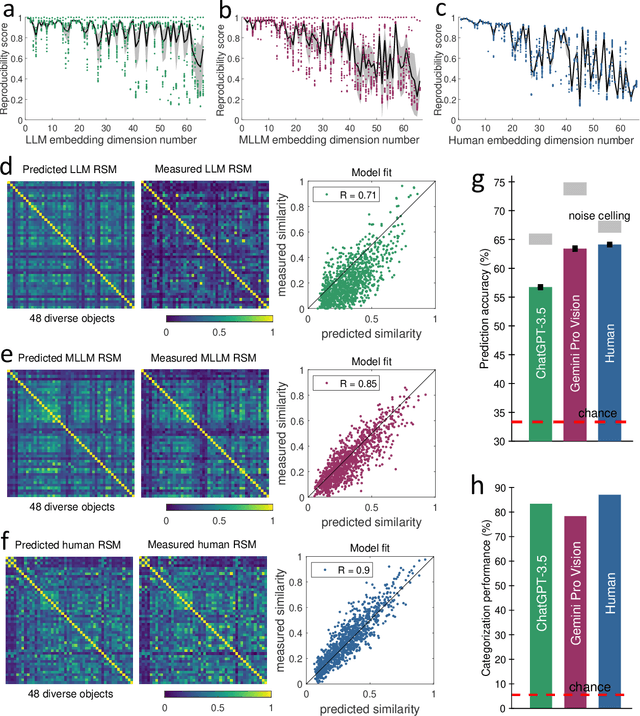

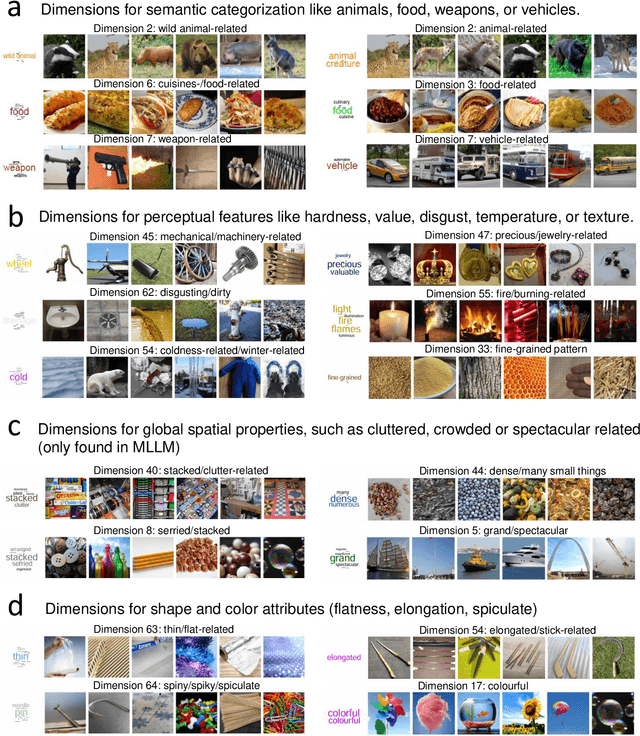

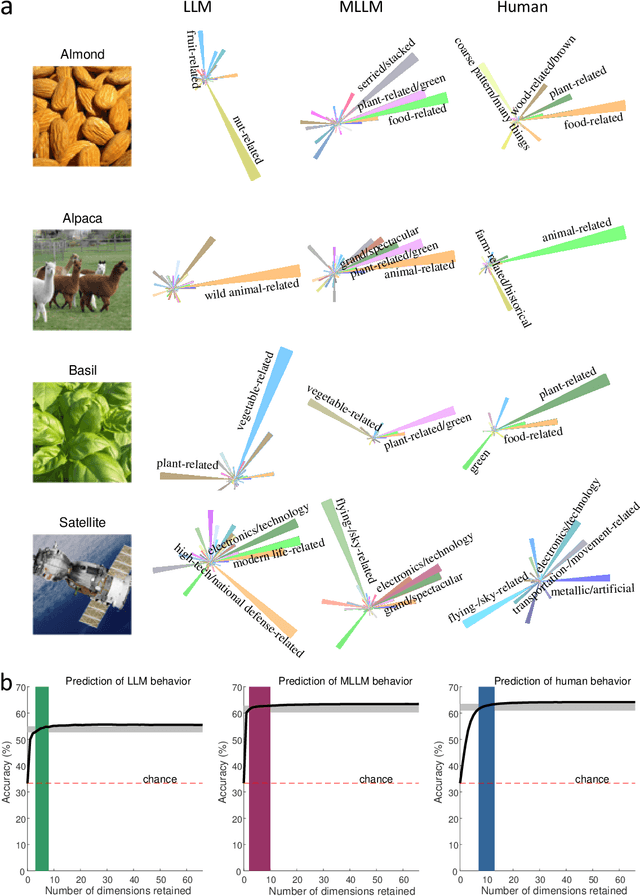

Abstract: The conceptualization and categorization of natural objects in the human mind have long intrigued cognitive scientists and neuroscientists, offering crucial insights into human perception and cognition. Recently, the rapid development of large language models (LLMs) has raised the intriguing question of whether these models can also develop human-like object representations through exposure to vast amounts of linguistic and multimodal data. In this study, we combined behavioral and neuroimaging analysis methods to uncover how the object concept representations in LLMs correlate with those of humans. By collecting large-scale datasets of 4.7 million triplet judgments from an LLM and a multimodal LLM (MLLM), we derived low-dimensional embeddings that capture the underlying similarity structure of 1,854 natural objects. The resulting 66-dimensional embeddings were found to be highly stable and predictive, and exhibited semantic clustering akin to human mental representations. Interestingly, the interpretability of the dimensions underlying these embeddings suggests that the LLM and MLLM have developed human-like conceptual representations of natural objects. Further analysis demonstrated strong alignment between the identified model embeddings and neural activity patterns in many functionally defined brain ROIs (e.g., EBA, PPA, RSC and FFA). This provides compelling evidence that the object representations in LLMs, while not identical to those in humans, share fundamental commonalities that reflect key schemas of human conceptual knowledge. This study advances our understanding of machine intelligence and informs the development of more human-like artificial cognitive systems.
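To illustrate how a low-dimensional embedding can account for triplet judgments, here is a minimal sketch. It assumes an odd-one-out task in which the two items with the highest dot-product similarity are paired and the third is rejected; the abstract does not state the task format or the similarity rule, so both are assumptions, and the function name and toy 3-dimensional vectors are hypothetical.

```python
from itertools import combinations


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def predict_odd_one_out(embeddings, triplet):
    """Return the item left out of the most similar pair in `triplet`.

    Assumption: similarity is the dot product of the item embeddings.
    """
    best_pair = max(
        combinations(triplet, 2),
        key=lambda pair: dot(embeddings[pair[0]], embeddings[pair[1]]),
    )
    return next(item for item in triplet if item not in best_pair)


# Hypothetical toy embeddings (the study reports 66 dimensions for 1,854 objects).
toy_embeddings = {
    "dog": [0.9, 0.1, 0.0],
    "cat": [0.8, 0.2, 0.0],
    "spoon": [0.0, 0.1, 0.9],
}

odd = predict_odd_one_out(toy_embeddings, ("dog", "cat", "spoon"))
print(odd)
```

Under this assumed rule, an embedding is predictive to the extent that its odd-one-out choices agree with the collected judgments.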

CLIP-MUSED: CLIP-Guided Multi-Subject Visual Neural Information Semantic Decoding

Feb 14, 2024

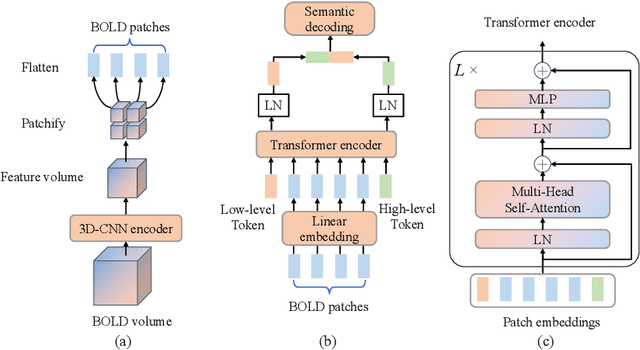

Abstract: The study of decoding visual neural information faces challenges in generalizing single-subject decoding models to multiple subjects, due to individual differences. Moreover, the limited availability of data from a single subject constrains model performance. Although prior multi-subject decoding methods have made significant progress, they still suffer from several limitations, including difficulty in extracting global neural response features, linear scaling of model parameters with the number of subjects, and inadequate characterization of the relationship between neural responses of different subjects to various stimuli. To overcome these limitations, we propose a CLIP-guided Multi-sUbject visual neural information SEmantic Decoding (CLIP-MUSED) method. Our method consists of a Transformer-based feature extractor to effectively model global neural representations. It also incorporates learnable subject-specific tokens that facilitate the aggregation of multi-subject data without a linear increase in parameters. Additionally, we employ representational similarity analysis (RSA) to guide token representation learning based on the topological relationship of visual stimuli in the representation space of CLIP, enabling full characterization of the relationship between neural responses of different subjects under different stimuli. Finally, token representations are used for multi-subject semantic decoding. Our proposed method outperforms single-subject decoding methods and achieves state-of-the-art performance among the existing multi-subject methods on two fMRI datasets. Visualization results provide insights into the effectiveness of our proposed method. Code is available at https://github.com/CLIP-MUSED/CLIP-MUSED.
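The RSA step mentioned above can be sketched generically as follows. This is not the CLIP-MUSED training objective, which the abstract does not specify; it is a plain RSA comparison that assumes correlation distance (1 minus Pearson r) for the dissimilarity matrices and Pearson correlation between their upper triangles. All function names and the toy data are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (norm_x * norm_y)


def rdm_upper(features):
    """Upper triangle of the representational dissimilarity matrix.

    `features` holds one feature vector per stimulus; dissimilarity is
    1 - Pearson r between the vectors of each stimulus pair.
    """
    n = len(features)
    return [
        1.0 - pearson(features[i], features[j])
        for i in range(n)
        for j in range(i + 1, n)
    ]


def rsa_score(features_a, features_b):
    """Similarity of two representation spaces over the same stimuli."""
    return pearson(rdm_upper(features_a), rdm_upper(features_b))


# Hypothetical responses to four stimuli in two representation spaces.
neural = [[1.0, 2.0, 3.0], [1.0, 2.0, 4.0], [3.0, 2.0, 1.0], [2.0, 3.0, 1.0]]
# Rescaling every pattern leaves the correlation-based geometry unchanged.
reference = [[2.0 * v + 1.0 for v in row] for row in neural]

score = rsa_score(neural, reference)
print(round(score, 6))
```

Because RSA compares pairwise geometry rather than raw features, the two spaces may have different dimensionalities, which is what makes it usable for relating neural responses to a model's representation space.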

Multi-view Multi-label Fine-grained Emotion Decoding from Human Brain Activity

Oct 26, 2022

Abstract: Decoding emotional states from human brain activity plays an important role in brain-computer interfaces. Existing emotion decoding methods still have two main limitations. First, they decode only a single, coarse-grained emotion category from a brain activity pattern, which is inconsistent with the complexity of human emotional expression. Second, they ignore the discrepancy in emotion expression between the left and right hemispheres of the human brain. In this paper, we propose a novel multi-view multi-label hybrid model for fine-grained emotion decoding (up to 80 emotion categories) that can learn expressive neural representations and predict multiple emotional states simultaneously. Specifically, the generative component of our hybrid model is parametrized by a multi-view variational auto-encoder, in which we regard the brain activity of the left and right hemispheres and their difference as three distinct views, and use the product-of-experts mechanism in its inference network. The discriminative component of our hybrid model is implemented by a multi-label classification network with an asymmetric focal loss. For more accurate emotion decoding, we first adopt a label-aware module for emotion-specific neural representation learning and then model the dependency of emotional states with a masked self-attention mechanism. Extensive experiments on two visually evoked emotional datasets show the superiority of our method.
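As a rough illustration of the product-of-experts mechanism, the sketch below fuses three Gaussian experts for a single latent dimension. It assumes each view (left hemisphere, right hemisphere, their difference) yields a Gaussian posterior with its own mean and variance; the abstract does not give these details, and the function name and numbers are hypothetical. The product of Gaussians is again Gaussian, with precisions (inverse variances) that add and a mean that is the precision-weighted average.

```python
def product_of_experts(means, variances):
    """Fuse 1-D Gaussian experts into a single Gaussian.

    Returns the (mean, variance) of the normalized product of the
    expert densities: precisions add, and the fused mean is the
    precision-weighted average of the expert means.
    """
    precisions = [1.0 / v for v in variances]
    total_precision = sum(precisions)
    fused_mean = sum(m * p for m, p in zip(means, precisions)) / total_precision
    return fused_mean, 1.0 / total_precision


# Hypothetical per-view posteriors for one latent dimension:
# left hemisphere, right hemisphere, and their difference.
view_means = [0.0, 2.0, 4.0]
view_variances = [1.0, 1.0, 0.5]

fused_mean, fused_variance = product_of_experts(view_means, view_variances)
print(fused_mean, fused_variance)
```

The most confident view (smallest variance) pulls the fused mean toward itself, and the fused variance is never larger than that of any single expert.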
