Shashank Jaiswal

xTrace: A Facial Expressive Behaviour Analysis Tool for Continuous Affect Recognition

May 08, 2025

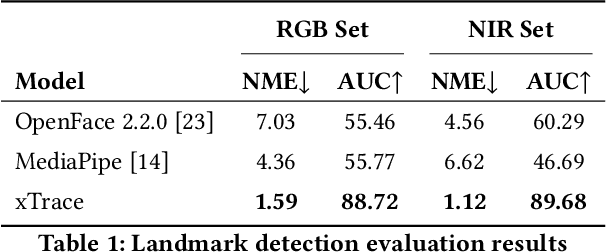

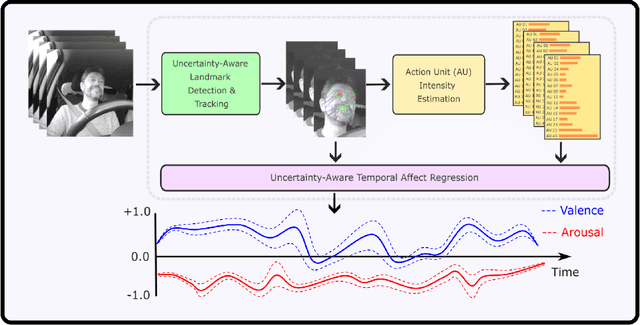

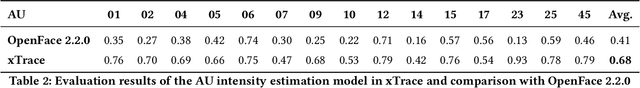

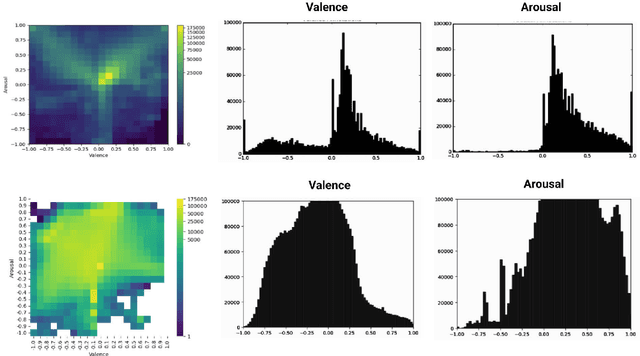

Abstract: Recognising expressive behaviours in face videos is a long-standing challenge in Affective Computing. Despite significant advances in recent years, building a robust and reliable system for real-time analysis of naturalistic, in-the-wild facial expressive behaviour remains difficult. This paper addresses two key challenges in building such a system: (1) the paucity of large-scale labelled facial affect video datasets with extensive coverage of the 2D emotion space, and (2) the difficulty of extracting facial video features that are discriminative, interpretable, robust, and computationally efficient. To address these challenges, we introduce xTrace, a robust tool for facial expressive behaviour analysis and for predicting continuous values of dimensional emotions, namely valence and arousal, from in-the-wild face videos. To address challenge (1), our affect recognition model is trained on the largest facial affect video dataset, containing ~450k videos that cover most emotion zones in the dimensional emotion space, making xTrace highly versatile in analysing a wide spectrum of naturalistic expressive behaviours. To address challenge (2), xTrace uses facial affect descriptors that are not only explainable but also achieve a high degree of accuracy and robustness with low computational complexity. The key components of xTrace are benchmarked against three existing tools: MediaPipe, OpenFace, and Augsburg Affect Toolbox. On an in-the-wild validation set composed of 50k videos, xTrace achieves a mean concordance correlation coefficient (CCC) of 0.86 and a mean absolute error of 0.13. We present a detailed error analysis of affect predictions from xTrace, illustrating (a) its ability to recognise emotions with high accuracy across most bins in the 2D emotion space, (b) its robustness to non-frontal head pose angles, and (c) a strong correlation between its uncertainty estimates and its accuracy.
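The two metrics reported in the abstract above, CCC and mean absolute error, can be illustrated with a minimal sketch. It uses the standard definition of Lin's concordance correlation coefficient with population moments. The function names and the toy valence values are hypothetical, and the abstract does not say how xTrace aggregates scores (for example per video or pooled, or how valence and arousal are averaged), so this should not be read as the paper's evaluation code.

```python
from statistics import fmean


def concordance_cc(y_true, y_pred):
    """Lin's concordance correlation coefficient, using population moments.

    CCC = 2 * cov(t, p) / (var(t) + var(p) + (mean(t) - mean(p)) ** 2)
    """
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mu_t, mu_p = fmean(y_true), fmean(y_pred)
    var_t = fmean([(t - mu_t) ** 2 for t in y_true])
    var_p = fmean([(p - mu_p) ** 2 for p in y_pred])
    cov = fmean([(t - mu_t) * (p - mu_p) for t, p in zip(y_true, y_pred)])
    denom = var_t + var_p + (mu_t - mu_p) ** 2
    if denom == 0.0:
        # Both sequences are constant and equal. Treating this as perfect
        # agreement is a convention chosen for this sketch.
        return 1.0
    return 2.0 * cov / denom


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between labels and predictions."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return fmean([abs(t - p) for t, p in zip(y_true, y_pred)])


# Toy example: hypothetical valence labels and predictions for one clip.
labels = [0.0, 0.5, 1.0]
predictions = [0.5, 1.0, 1.5]
ccc = concordance_cc(labels, predictions)
mae = mean_absolute_error(labels, predictions)
```

Unlike Pearson correlation, CCC penalises a constant offset between predictions and labels: in the toy example the predictions are perfectly correlated with the labels but shifted, so the CCC falls below 1.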

Learning Graph Representation of Person-specific Cognitive Processes from Audio-visual Behaviours for Automatic Personality Recognition

Oct 27, 2021

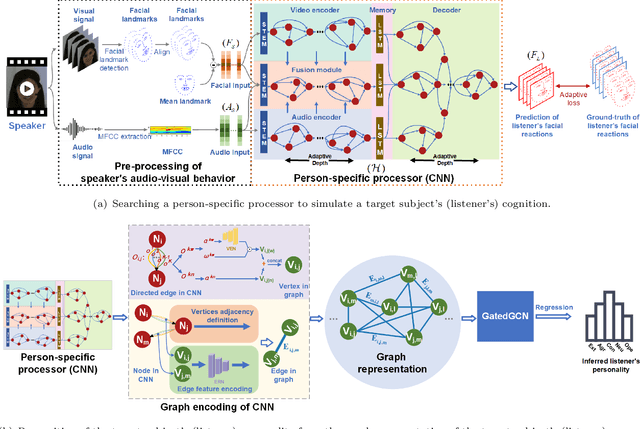

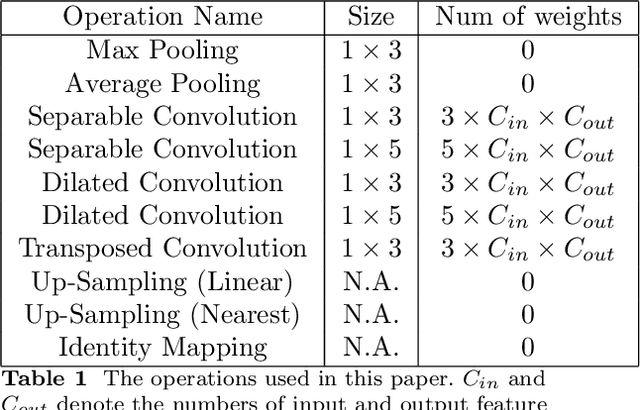

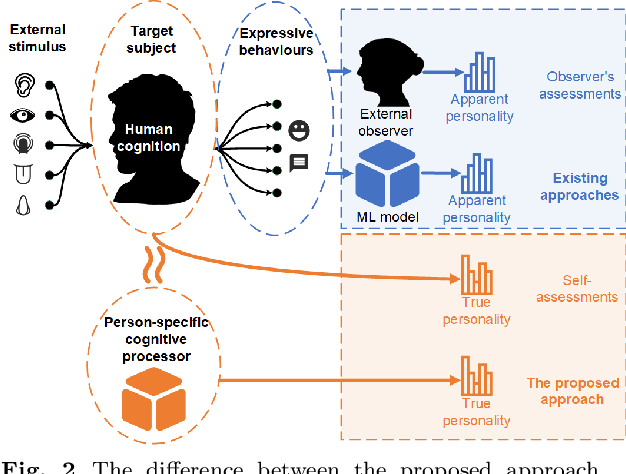

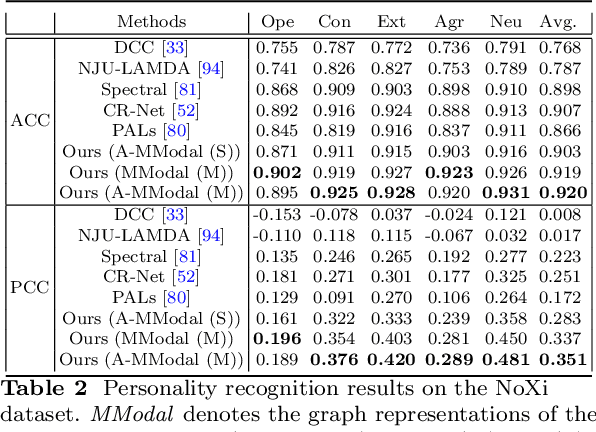

Abstract: This approach builds on the following two findings in cognitive science: (i) human cognition partially determines expressed behaviour and is directly linked to true personality traits; and (ii) in dyadic interactions, individuals' nonverbal behaviours are influenced by their conversational partner's behaviours. In this context, we hypothesise that during a dyadic interaction, a target subject's facial reactions are driven by two main factors: their internal (person-specific) cognitive process, and the externalised nonverbal behaviours of their conversational partner. Consequently, we propose to represent the person-specific cognition of the target subject (defined as the listener) in the form of a person-specific CNN architecture that has unique architectural parameters and depth, takes the audio-visual non-verbal cues displayed by the conversational partner (defined as the speaker) as input, and is able to reproduce the target subject's facial reactions. Each person-specific CNN is found using Neural Architecture Search (NAS) with a novel adaptive loss function, and is then encoded as a graph representation for recognising the target subject's true personality. Experimental results show that the produced graph representations are well associated with target subjects' personality traits in both human-human and human-machine interaction scenarios, and that they outperform existing approaches by a significant margin. They also demonstrate that the proposed novel strategies, such as the adaptive loss and the end-to-end vertex/edge feature learning, help the proposed approach learn more reliable personality representations.

Automatic Detection of ADHD and ASD from Expressive Behaviour in RGBD Data

Dec 07, 2016

Abstract: Attention Deficit Hyperactivity Disorder (ADHD) and Autism Spectrum Disorder (ASD) are neurodevelopmental conditions which affect a significant number of children and adults. Currently, such disorders are diagnosed by experts who employ standard questionnaires and look for certain behavioural markers through manual observation. Such diagnostic methods are not only subjective, difficult to repeat, and costly, but also extremely time-consuming. In this work, we present a novel methodology to aid diagnostic predictions about the presence or absence of ADHD and ASD by automatic visual analysis of a person's behaviour. To do so, we conduct the questionnaires in a computer-mediated way while recording participants with modern RGBD (Colour+Depth) sensors. In contrast to previous automatic approaches, which have focussed only on detecting certain behavioural markers, our approach provides a fully automatic end-to-end system for directly predicting ADHD and ASD in adults. Using state-of-the-art facial expression analysis based on Dynamic Deep Learning and 3D analysis of behaviour, we attain classification rates of 96% for the Controls vs Condition (ADHD/ASD) groups and 94% for the Comorbid (ADHD+ASD) vs ASD-only groups. We show that our system is a potentially useful, time-saving contribution to the diagnostic field of ADHD and ASD.
