Shaohuan Zhou

Enhancing the vocal range of single-speaker singing voice synthesis with melody-unsupervised pre-training

Sep 01, 2023

Abstract: Single-speaker singing voice synthesis (SVS) usually underperforms at pitch values that are outside the singer's vocal range or that have few training samples. Building on our previous work, this paper proposes a melody-unsupervised multi-speaker pre-training method, conducted on a multi-singer dataset, that enhances the vocal range of a single speaker without degrading timbre similarity. The pre-training method can be applied to a large-scale multi-singer dataset that contains only audio-and-lyrics pairs, with no phonemic timing information or pitch annotation. Specifically, in the pre-training step, we design a phoneme predictor that produces frame-level phoneme probability vectors as the phonemic timing information and a speaker encoder that models the timbre variations of different singers, and we estimate the frame-level f0 values directly from the audio to provide the pitch information. The pre-trained model parameters are passed to the fine-tuning step as prior knowledge to enhance the single speaker's vocal range. This work also improves the sound quality and rhythm naturalness of the synthesized singing voices: it is the first to introduce a differentiable duration regulator to improve rhythm naturalness, and a bi-directional flow model to improve sound quality. Experimental results verify that the proposed SVS system outperforms the baseline on both sound quality and naturalness.

Towards Improving the Expressiveness of Singing Voice Synthesis with BERT Derived Semantic Information

Aug 31, 2023

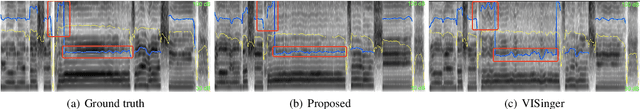

Abstract: This paper presents an end-to-end, high-quality singing voice synthesis (SVS) system that uses semantic embeddings derived from Bidirectional Encoder Representations from Transformers (BERT) to improve the expressiveness of the synthesized singing voice. Building on the main architecture of the recently proposed VISinger, we put forward several designs specific to expressive singing voice synthesis. First, unlike previous SVS models, we use a text representation of the lyrics extracted from pre-trained BERT as an additional input to the model. This representation carries information about the semantics of the lyrics, which can help the SVS system produce a more expressive and natural voice. Second, we introduce an energy predictor to stabilize the synthesized voice and to model the wider range of energy variations that also contribute to the expressiveness of the singing voice. Last but not least, to attenuate off-key issues, the pitch predictor is redesigned to predict the real-to-note pitch ratio. Both objective and subjective experimental results indicate that the proposed SVS system produces higher-quality singing voices than VISinger.
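As a rough illustration of what a real-to-note pitch ratio could look like, the sketch below divides a measured f0 by the frequency of the score note. The abstract does not give the exact formulation, so everything here is an assumption: the function names are hypothetical, notes are taken to be MIDI numbers, and the tuning is assumed to be 12-tone equal temperament with A4 = 440 Hz.

```python
import math

# Assumed tuning reference: MIDI note 69 (A4) = 440 Hz, equal temperament.
A4_MIDI = 69
A4_HZ = 440.0


def midi_to_hz(midi_note: float) -> float:
    """Convert a MIDI note number to its nominal frequency in Hz."""
    return A4_HZ * 2.0 ** ((midi_note - A4_MIDI) / 12.0)


def pitch_ratio(f0_hz: float, midi_note: float) -> float:
    """Hypothetical target: ratio of the sung f0 to the score note's frequency."""
    return f0_hz / midi_to_hz(midi_note)


def ratio_to_f0(ratio: float, midi_note: float) -> float:
    """Recover an f0 value in Hz from a predicted ratio and the score note."""
    return ratio * midi_to_hz(midi_note)


def ratio_in_cents(ratio: float) -> float:
    """Express a ratio as a deviation from the note in cents (100 cents = 1 semitone)."""
    return 1200.0 * math.log2(ratio)
```

Under these assumptions, a ratio of 1.0 means the singer is exactly on the note, and a predictor that outputs values close to 1.0 can only move the f0 a short distance from the score pitch, which is one plausible reading of how such a target would reduce off-key output.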
