Shanchieh Jay Yang

Guided Reasoning in LLM-Driven Penetration Testing Using Structured Attack Trees

Sep 09, 2025Abstract:Recent advances in Large Language Models (LLMs) have driven interest in automating cybersecurity penetration testing workflows, offering the promise of faster and more consistent vulnerability assessment for enterprise systems. Existing LLM agents for penetration testing primarily rely on self-guided reasoning, which can produce inaccurate or hallucinated procedural steps. As a result, the LLM agent may undertake unproductive actions, such as exploiting unused software libraries or generating cyclical responses that repeat prior tactics. In this work, we propose a guided reasoning pipeline for penetration testing LLM agents that incorporates a deterministic task tree built from the MITRE ATT&CK Matrix, a proven penetration testing kll chain, to constrain the LLM's reaoning process to explicitly defined tactics, techniques, and procedures. This anchors reasoning in proven penetration testing methodologies and filters out ineffective actions by guiding the agent towards more productive attack procedures. To evaluate our approach, we built an automated penetration testing LLM agent using three LLMs (Llama-3-8B, Gemini-1.5, and GPT-4) and applied it to navigate 10 HackTheBox cybersecurity exercises with 103 discrete subtasks representing real-world cyberattack scenarios. Our proposed reasoning pipeline guided the LLM agent through 71.8\%, 72.8\%, and 78.6\% of subtasks using Llama-3-8B, Gemini-1.5, and GPT-4, respectively. Comparatively, the state-of-the-art LLM penetration testing tool using self-guided reasoning completed only 13.5\%, 16.5\%, and 75.7\% of subtasks and required 86.2\%, 118.7\%, and 205.9\% more model queries. This suggests that incorporating a deterministic task tree into LLM reasoning pipelines can enhance the accuracy and efficiency of automated cybersecurity assessments

LLM Embedding-based Attribution (LEA): Quantifying Source Contributions to Generative Model's Response for Vulnerability Analysis

Jun 12, 2025Abstract:Security vulnerabilities are rapidly increasing in frequency and complexity, creating a shifting threat landscape that challenges cybersecurity defenses. Large Language Models (LLMs) have been widely adopted for cybersecurity threat analysis. When querying LLMs, dealing with new, unseen vulnerabilities is particularly challenging as it lies outside LLMs' pre-trained distribution. Retrieval-Augmented Generation (RAG) pipelines mitigate the problem by injecting up-to-date authoritative sources into the model context, thus reducing hallucinations and increasing the accuracy in responses. Meanwhile, the deployment of LLMs in security-sensitive environments introduces challenges around trust and safety. This raises a critical open question: How to quantify or attribute the generated response to the retrieved context versus the model's pre-trained knowledge? This work proposes LLM Embedding-based Attribution (LEA) -- a novel, explainable metric to paint a clear picture on the 'percentage of influence' the pre-trained knowledge vs. retrieved content has for each generated response. We apply LEA to assess responses to 100 critical CVEs from the past decade, verifying its effectiveness to quantify the insightfulness for vulnerability analysis. Our development of LEA reveals a progression of independency in hidden states of LLMs: heavy reliance on context in early layers, which enables the derivation of LEA; increased independency in later layers, which sheds light on why scale is essential for LLM's effectiveness. This work provides security analysts a means to audit LLM-assisted workflows, laying the groundwork for transparent, high-assurance deployments of RAG-enhanced LLMs in cybersecurity operations.

ProveRAG: Provenance-Driven Vulnerability Analysis with Automated Retrieval-Augmented LLMs

Oct 22, 2024

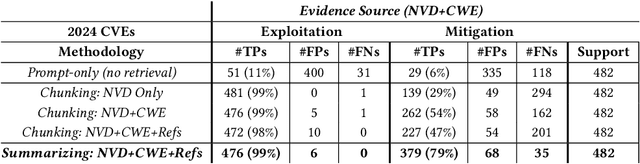

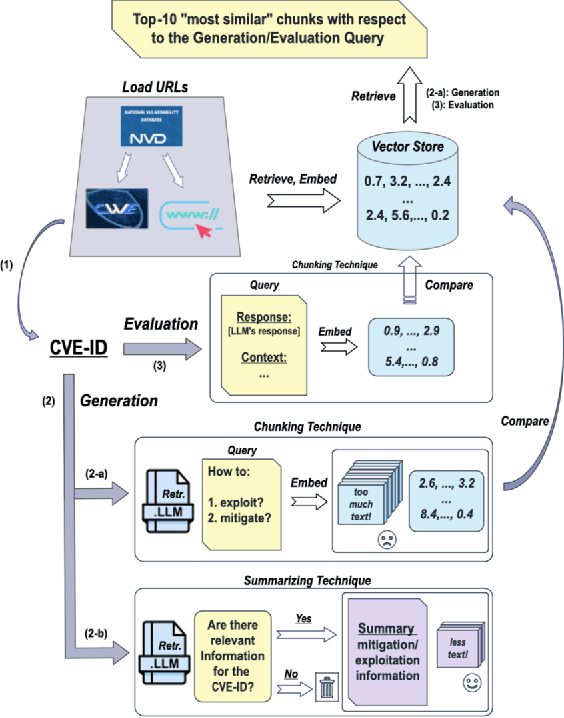

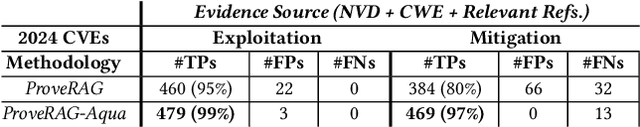

Abstract:In cybersecurity, security analysts face the challenge of mitigating newly discovered vulnerabilities in real-time, with over 300,000 Common Vulnerabilities and Exposures (CVEs) identified since 1999. The sheer volume of known vulnerabilities complicates the detection of patterns for unknown threats. While LLMs can assist, they often hallucinate and lack alignment with recent threats. Over 25,000 vulnerabilities have been identified so far in 2024, which are introduced after popular LLMs' (e.g., GPT-4) training data cutoff. This raises a major challenge of leveraging LLMs in cybersecurity, where accuracy and up-to-date information are paramount. In this work, we aim to improve the adaptation of LLMs in vulnerability analysis by mimicking how analysts perform such tasks. We propose ProveRAG, an LLM-powered system designed to assist in rapidly analyzing CVEs with automated retrieval augmentation of web data while self-evaluating its responses with verifiable evidence. ProveRAG incorporates a self-critique mechanism to help alleviate omission and hallucination common in the output of LLMs applied in cybersecurity applications. The system cross-references data from verifiable sources (NVD and CWE), giving analysts confidence in the actionable insights provided. Our results indicate that ProveRAG excels in delivering verifiable evidence to the user with over 99% and 97% accuracy in exploitation and mitigation strategies, respectively. This system outperforms direct prompting and chunking retrieval in vulnerability analysis by overcoming temporal and context-window limitations. ProveRAG guides analysts to secure their systems more effectively while documenting the process for future audits.

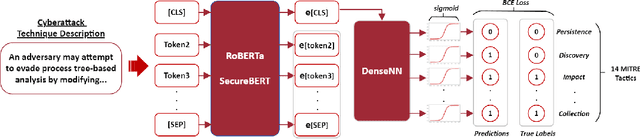

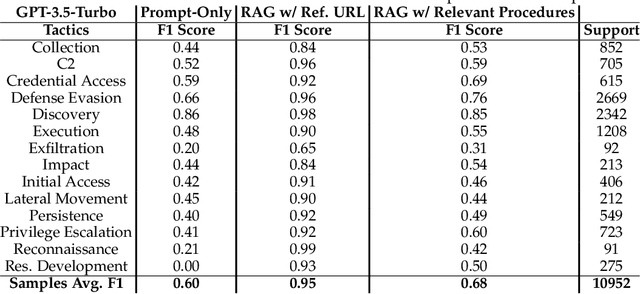

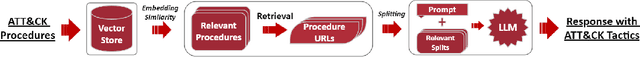

Advancing TTP Analysis: Harnessing the Power of Encoder-Only and Decoder-Only Language Models with Retrieval Augmented Generation

Jan 12, 2024

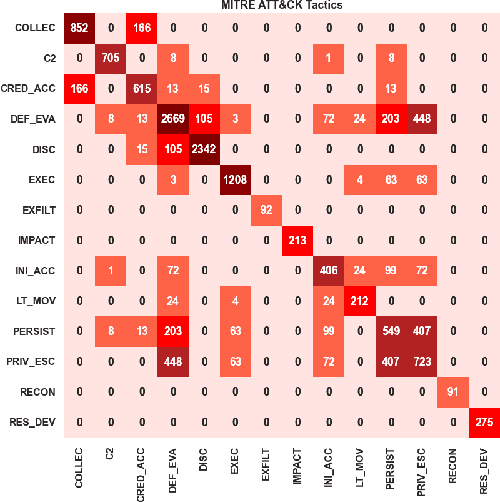

Abstract:Tactics, Techniques, and Procedures (TTPs) outline the methods attackers use to exploit vulnerabilities. The interpretation of TTPs in the MITRE ATT&CK framework can be challenging for cybersecurity practitioners due to presumed expertise, complex dependencies, and inherent ambiguity. Meanwhile, advancements with Large Language Models (LLMs) have led to recent surge in studies exploring its uses in cybersecurity operations. This leads us to question how well encoder-only (e.g., RoBERTa) and decoder-only (e.g., GPT-3.5) LLMs can comprehend and summarize TTPs to inform analysts of the intended purposes (i.e., tactics) of a cyberattack procedure. The state-of-the-art LLMs have shown to be prone to hallucination by providing inaccurate information, which is problematic in critical domains like cybersecurity. Therefore, we propose the use of Retrieval Augmented Generation (RAG) techniques to extract relevant contexts for each cyberattack procedure for decoder-only LLMs (without fine-tuning). We further contrast such approach against supervised fine-tuning (SFT) of encoder-only LLMs. Our results reveal that both the direct-use of decoder-only LLMs (i.e., its pre-trained knowledge) and the SFT of encoder-only LLMs offer inaccurate interpretation of cyberattack procedures. Significant improvements are shown when RAG is used for decoder-only LLMs, particularly when directly relevant context is found. This study further sheds insights on the limitations and capabilities of using RAG for LLMs in interpreting TTPs.

Are Existing Out-Of-Distribution Techniques Suitable for Network Intrusion Detection?

Aug 28, 2023

Abstract:Machine learning (ML) has become increasingly popular in network intrusion detection. However, ML-based solutions always respond regardless of whether the input data reflects known patterns, a common issue across safety-critical applications. While several proposals exist for detecting Out-Of-Distribution (OOD) in other fields, it remains unclear whether these approaches can effectively identify new forms of intrusions for network security. New attacks, not necessarily affecting overall distributions, are not guaranteed to be clearly OOD as instead, images depicting new classes are in computer vision. In this work, we investigate whether existing OOD detectors from other fields allow the identification of unknown malicious traffic. We also explore whether more discriminative and semantically richer embedding spaces within models, such as those created with contrastive learning and multi-class tasks, benefit detection. Our investigation covers a set of six OOD techniques that employ different detection strategies. These techniques are applied to models trained in various ways and subsequently exposed to unknown malicious traffic from the same and different datasets (network environments). Our findings suggest that existing detectors can identify a consistent portion of new malicious traffic, and that improved embedding spaces enhance detection. We also demonstrate that simple combinations of certain detectors can identify almost 100% of malicious traffic in our tested scenarios.

On the Uses of Large Language Models to Interpret Ambiguous Cyberattack Descriptions

Jun 24, 2023Abstract:The volume, variety, and velocity of change in vulnerabilities and exploits have made incident threat analysis challenging with human expertise and experience along. The MITRE AT&CK framework employs Tactics, Techniques, and Procedures (TTPs) to describe how and why attackers exploit vulnerabilities. However, a TTP description written by one security professional can be interpreted very differently by another, leading to confusion in cybersecurity operations or even business, policy, and legal decisions. Meanwhile, advancements in AI have led to the increasing use of Natural Language Processing (NLP) algorithms to assist the various tasks in cyber operations. With the rise of Large Language Models (LLMs), NLP tasks have significantly improved because of the LLM's semantic understanding and scalability. This leads us to question how well LLMs can interpret TTP or general cyberattack descriptions. We propose and analyze the direct use of LLMs as well as training BaseLLMs with ATT&CK descriptions to study their capability in predicting ATT&CK tactics. Our results reveal that the BaseLLMs with supervised training provide a more focused and clearer differentiation between the ATT&CK tactics (if such differentiation exists). On the other hand, LLMs offer a broader interpretation of cyberattack techniques. Despite the power of LLMs, inherent ambiguity exists within their predictions. We thus summarize the existing challenges and recommend research directions on LLMs to deal with the inherent ambiguity of TTP descriptions.

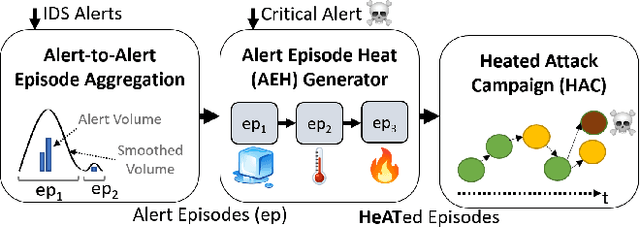

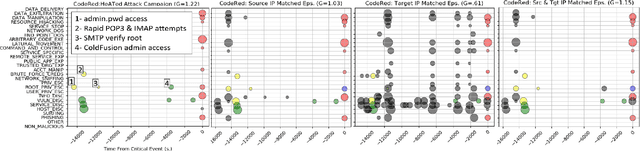

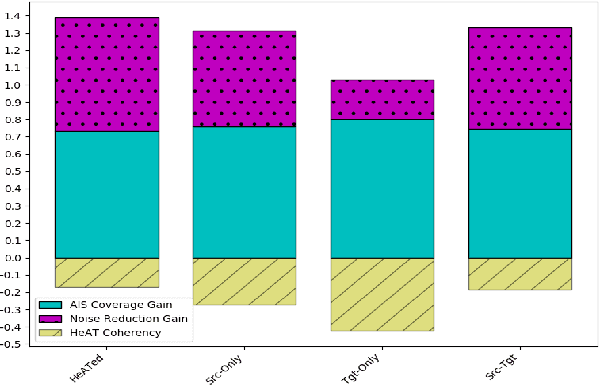

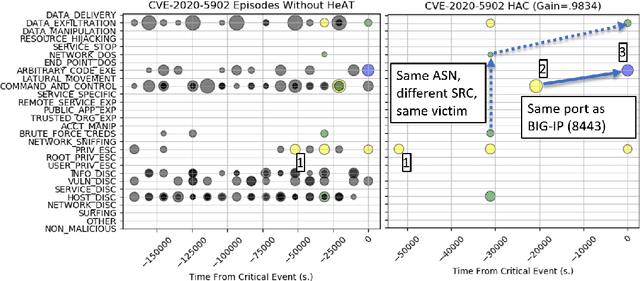

HeATed Alert Triage (HeAT): Transferrable Learning to Extract Multistage Attack Campaigns

Dec 28, 2022

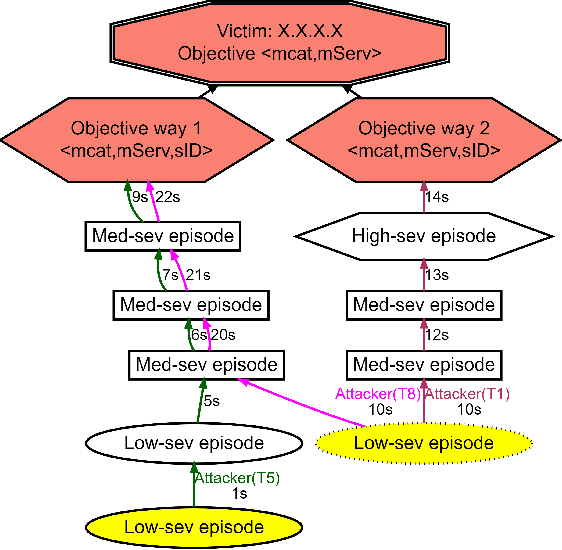

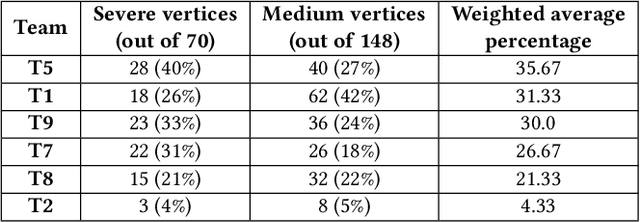

Abstract:With growing sophistication and volume of cyber attacks combined with complex network structures, it is becoming extremely difficult for security analysts to corroborate evidences to identify multistage campaigns on their network. This work develops HeAT (Heated Alert Triage): given a critical indicator of compromise (IoC), e.g., a severe IDS alert, HeAT produces a HeATed Attack Campaign (HAC) depicting the multistage activities that led up to the critical event. We define the concept of "Alert Episode Heat" to represent the analysts opinion of how much an event contributes to the attack campaign of the critical IoC given their knowledge of the network and security expertise. Leveraging a network-agnostic feature set, HeAT learns the essence of analyst's assessment of "HeAT" for a small set of IoC's, and applies the learned model to extract insightful attack campaigns for IoC's not seen before, even across networks by transferring what have been learned. We demonstrate the capabilities of HeAT with data collected in Collegiate Penetration Testing Competition (CPTC) and through collaboration with a real-world SOC. We developed HeAT-Gain metrics to demonstrate how analysts may assess and benefit from the extracted attack campaigns in comparison to common practices where IP addresses are used to corroborate evidences. Our results demonstrates the practical uses of HeAT by finding campaigns that span across diverse attack stages, remove a significant volume of irrelevant alerts, and achieve coherency to the analyst's original assessments.

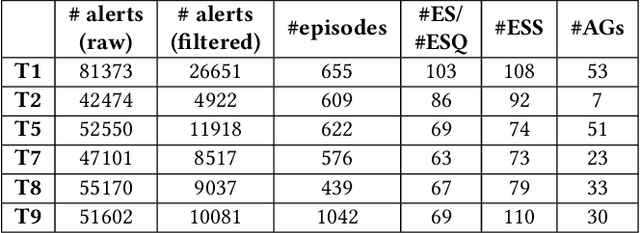

SAGE: Intrusion Alert-driven Attack Graph Extractor

Jul 06, 2021

Abstract:Attack graphs (AG) are used to assess pathways availed by cyber adversaries to penetrate a network. State-of-the-art approaches for AG generation focus mostly on deriving dependencies between system vulnerabilities based on network scans and expert knowledge. In real-world operations however, it is costly and ineffective to rely on constant vulnerability scanning and expert-crafted AGs. We propose to automatically learn AGs based on actions observed through intrusion alerts, without prior expert knowledge. Specifically, we develop an unsupervised sequence learning system, SAGE, that leverages the temporal and probabilistic dependence between alerts in a suffix-based probabilistic deterministic finite automaton (S-PDFA) -- a model that accentuates infrequent severe alerts and summarizes paths leading to them. AGs are then derived from the S-PDFA. Tested with intrusion alerts collected through Collegiate Penetration Testing Competition, SAGE produces AGs that reflect the strategies used by participating teams. The resulting AGs are succinct, interpretable, and enable analysts to derive actionable insights, e.g., attackers tend to follow shorter paths after they have discovered a longer one.

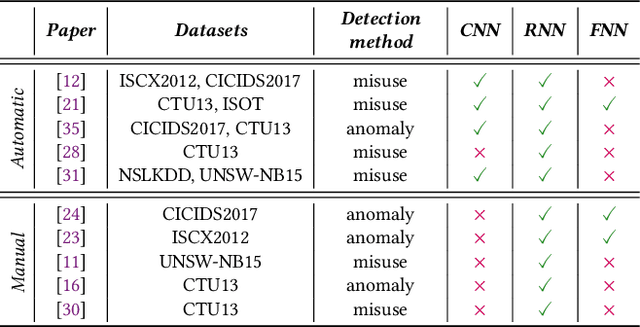

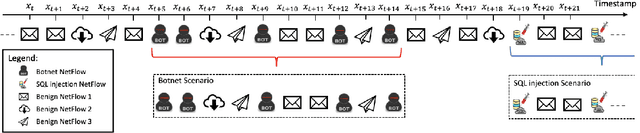

On the Evaluation of Sequential Machine Learning for Network Intrusion Detection

Jun 15, 2021

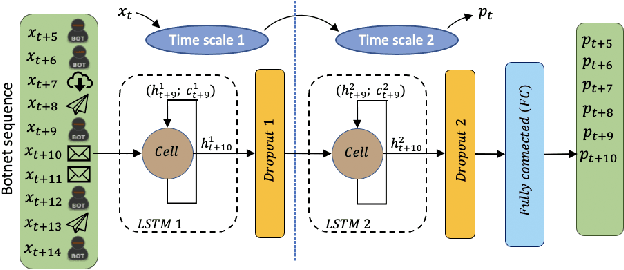

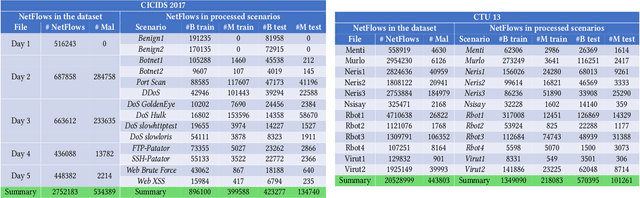

Abstract:Recent advances in deep learning renewed the research interests in machine learning for Network Intrusion Detection Systems (NIDS). Specifically, attention has been given to sequential learning models, due to their ability to extract the temporal characteristics of Network traffic Flows (NetFlows), and use them for NIDS tasks. However, the applications of these sequential models often consist of transferring and adapting methodologies directly from other fields, without an in-depth investigation on how to leverage the specific circumstances of cybersecurity scenarios; moreover, there is a lack of comprehensive studies on sequential models that rely on NetFlow data, which presents significant advantages over traditional full packet captures. We tackle this problem in this paper. We propose a detailed methodology to extract temporal sequences of NetFlows that denote patterns of malicious activities. Then, we apply this methodology to compare the efficacy of sequential learning models against traditional static learning models. In particular, we perform a fair comparison of a `sequential' Long Short-Term Memory (LSTM) against a `static' Feedforward Neural Networks (FNN) in distinct environments represented by two well-known datasets for NIDS: the CICIDS2017 and the CTU13. Our results highlight that LSTM achieves comparable performance to FNN in the CICIDS2017 with over 99.5\% F1-score; while obtaining superior performance in the CTU13, with 95.7\% F1-score against 91.5\%. This paper thus paves the way to future applications of sequential learning models for NIDS.

On the Veracity of Cyber Intrusion Alerts Synthesized by Generative Adversarial Networks

Aug 03, 2019

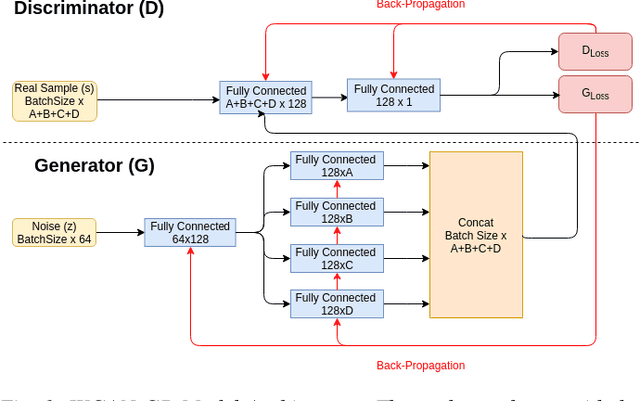

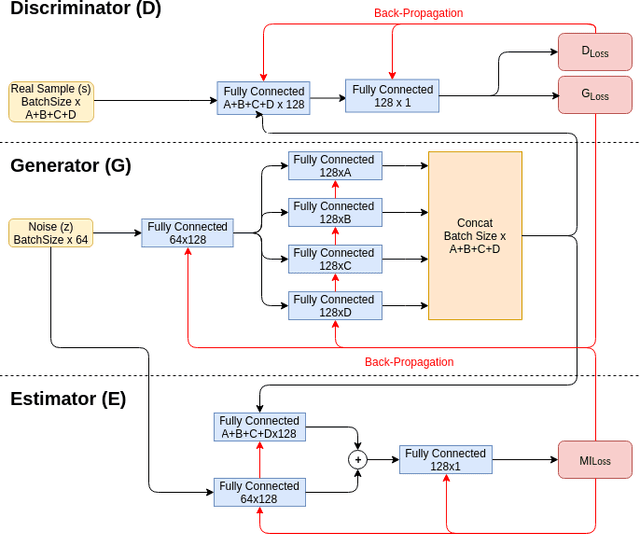

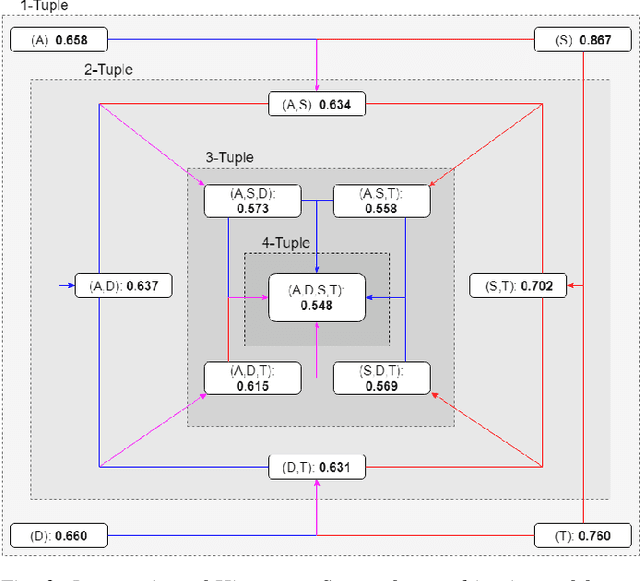

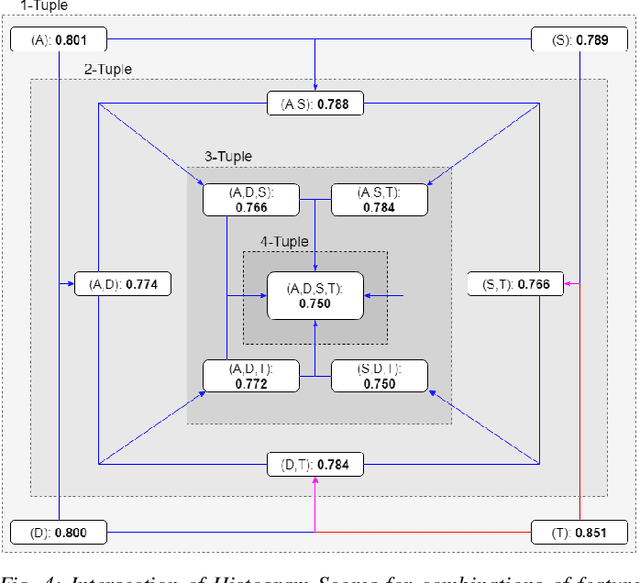

Abstract:Recreating cyber-attack alert data with a high level of fidelity is challenging due to the intricate interaction between features, non-homogeneity of alerts, and potential for rare yet critical samples. Generative Adversarial Networks (GANs) have been shown to effectively learn complex data distributions with the intent of creating increasingly realistic data. This paper presents the application of GANs to cyber-attack alert data and shows that GANs not only successfully learn to generate realistic alerts, but also reveal feature dependencies within alerts. This is accomplished by reviewing the intersection of histograms for varying alert-feature combinations between the ground truth and generated datsets. Traditional statistical metrics, such as conditional and joint entropy, are also employed to verify the accuracy of these dependencies. Finally, it is shown that a Mutual Information constraint on the network can be used to increase the generation of low probability, critical, alert values. By mapping alerts to a set of attack stages it is shown that the output of these low probability alerts has a direct contextual meaning for Cyber Security analysts. Overall, this work provides the basis for generating new cyber intrusion alerts and provides evidence that synthesized alerts emulate critical dependencies from the source dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge