Seydou Ba

Bine Trees: Enhancing Collective Operations by Optimizing Communication Locality

Aug 24, 2025

Abstract: Communication locality plays a key role in the performance of collective operations on large HPC systems, especially on oversubscribed networks where groups of nodes are fully connected internally but sparsely linked through global connections. We present Bine (binomial negabinary) trees, a family of collective algorithms that improve communication locality. Bine trees maintain the generality of binomial trees and butterflies while cutting global-link traffic by up to 33%. We implement eight Bine-based collectives and evaluate them on four large-scale supercomputers with Dragonfly, Dragonfly+, oversubscribed fat-tree, and torus topologies, achieving up to 5x speedups and consistent reductions in global-link traffic across different vector sizes and node counts.
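The "negabinary" in the name refers to base -2 number representation. As a minimal sketch of that number system only (the abstract does not describe how Bine trees use it, so this is not the paper's tree construction), the hypothetical helpers `to_negabinary` and `from_negabinary` below convert between Python integers and base -2 digit strings:

```python
def to_negabinary(n: int) -> str:
    """Return the base -2 digits of n, most significant digit first."""
    if n == 0:
        return "0"
    digits = []
    while n != 0:
        # Python's divmod with a negative divisor yields a remainder of 0 or -1.
        n, r = divmod(n, -2)
        if r < 0:
            # Shift the remainder into {0, 1} and adjust the quotient to match.
            n, r = n + 1, r + 2
        digits.append(str(r))
    return "".join(reversed(digits))


def from_negabinary(s: str) -> int:
    """Evaluate a base -2 digit string back to an integer."""
    return sum(int(d) * (-2) ** i for i, d in enumerate(reversed(s)))


if __name__ == "__main__":
    for value in range(-4, 5):
        print(value, to_negabinary(value))
```

One property worth noting is that base -2 represents both positive and negative integers without a sign bit, which is why every integer in the loop above gets a plain string of 0s and 1s.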

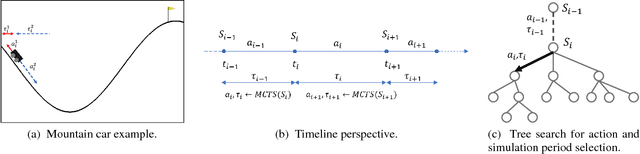

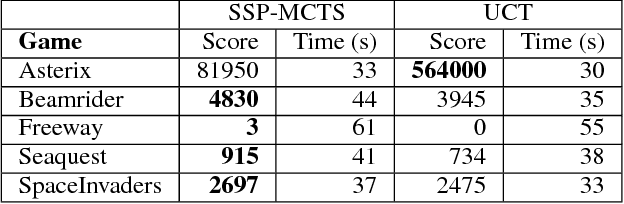

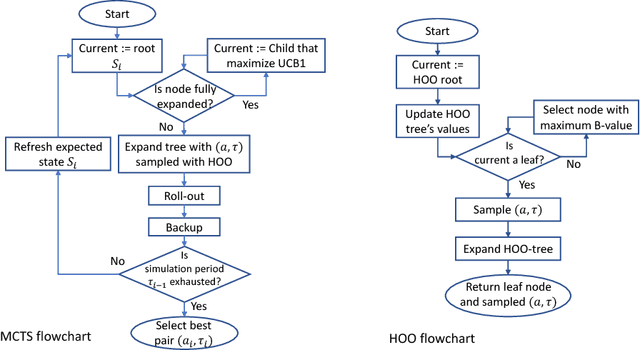

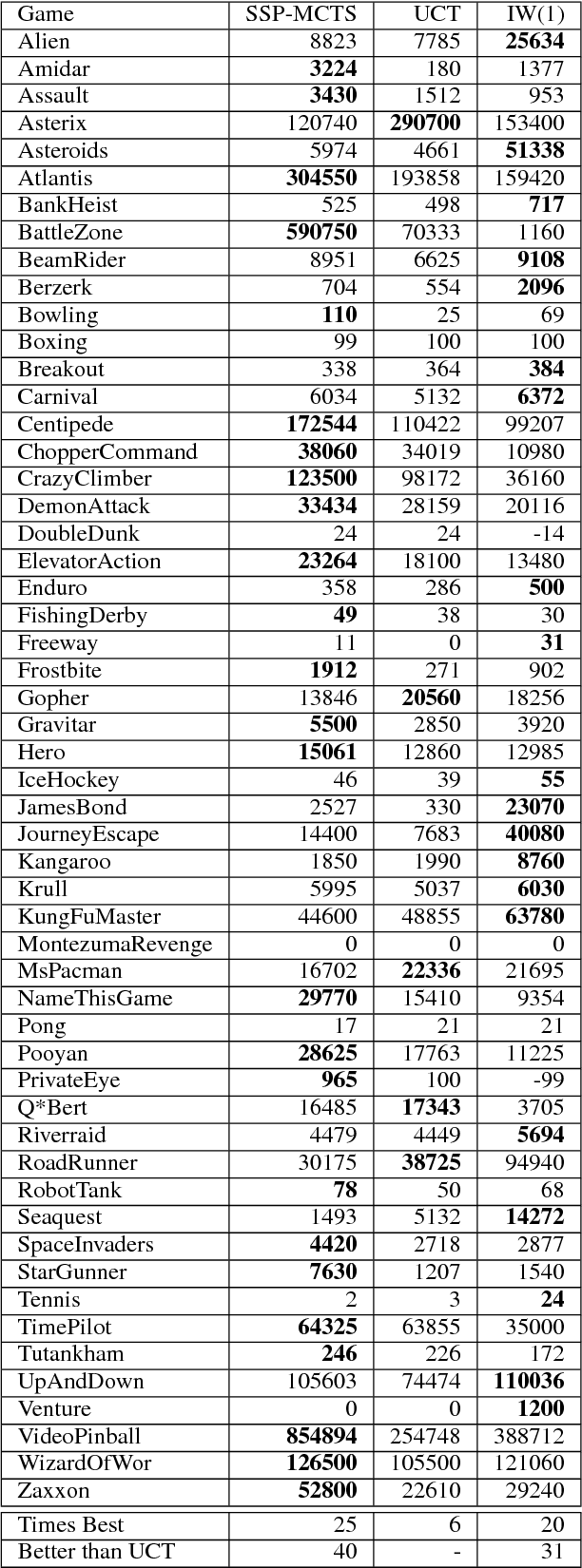

Monte Carlo Tree Search with Scalable Simulation Periods for Continuously Running Tasks

Sep 07, 2018

Abstract: Monte Carlo Tree Search (MCTS) is particularly well suited to domains where the potential actions can be represented as a tree of sequential decisions. For effective action selection, MCTS performs many simulations to build a reliable tree representation of the decision space. A bottleneck therefore arises when enough simulations cannot be performed between action selections. This is especially visible in continuously running tasks, where the time available for simulations between actions tends to be limited because the environment's state is constantly changing. In this paper, we present an approach that takes advantage of the anytime characteristic of MCTS to increase the simulation time when allowed. Our approach balances the prospect of selecting an action against the time that can be spared to perform MCTS simulations before the next action selection. To that end, we treat the simulation time as a decision variable to be selected alongside an action. We extended the Hierarchical Optimistic Optimization applied to Tree (HOOT) method to adapt our approach to environments with a continuous decision space. We evaluated our approach for environments with a continuous decision space through OpenAI Gym's Pendulum and Continuous Mountain Car environments, and for environments with a discrete action space through the Arcade Learning Environment (ALE) platform. The evaluation results show that, with variable simulation times, the proposed approach outperforms conventional MCTS in the evaluated continuous decision space tasks and improves the performance of MCTS in most of the ALE tasks.
