Sebastian Stabinger

ANLS* -- A Universal Document Processing Metric for Generative Large Language Models

Feb 06, 2024Abstract:Traditionally, discriminative models have been the predominant choice for tasks like document classification and information extraction. These models make predictions that fall into a limited number of predefined classes, facilitating a binary true or false evaluation and enabling the direct calculation of metrics such as the F1 score. However, recent advancements in generative large language models (GLLMs) have prompted a shift in the field due to their enhanced zero-shot capabilities, which eliminate the need for a downstream dataset and computationally expensive fine-tuning. However, evaluating GLLMs presents a challenge as the binary true or false evaluation used for discriminative models is not applicable to the predictions made by GLLMs. This paper introduces a new metric for generative models called ANLS* for evaluating a wide variety of tasks, including information extraction and classification tasks. The ANLS* metric extends existing ANLS metrics as a drop-in-replacement and is still compatible with previously reported ANLS scores. An evaluation of 7 different datasets and 3 different GLLMs using the ANLS* metric is also provided, demonstrating the importance of the proposed metric. We also benchmark a novel approach to generate prompts for documents, called SFT, against other prompting techniques such as LATIN. In 15 out of 21 cases, SFT outperforms other techniques and improves the state-of-the-art, sometimes by as much as $15$ percentage points. Sources are available at https://github.com/deepopinion/anls_star_metric

Improving the Trainability of Deep Neural Networks through Layerwise Batch-Entropy Regularization

Aug 01, 2022

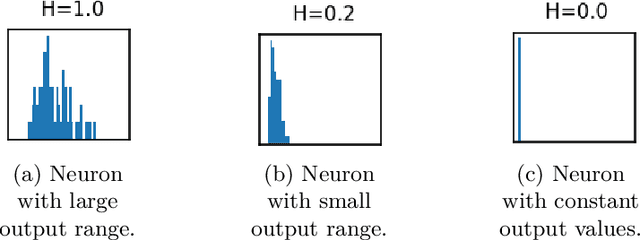

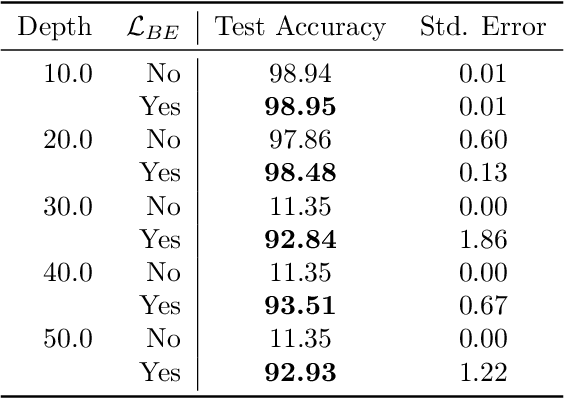

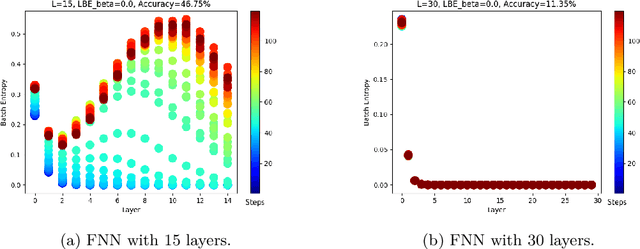

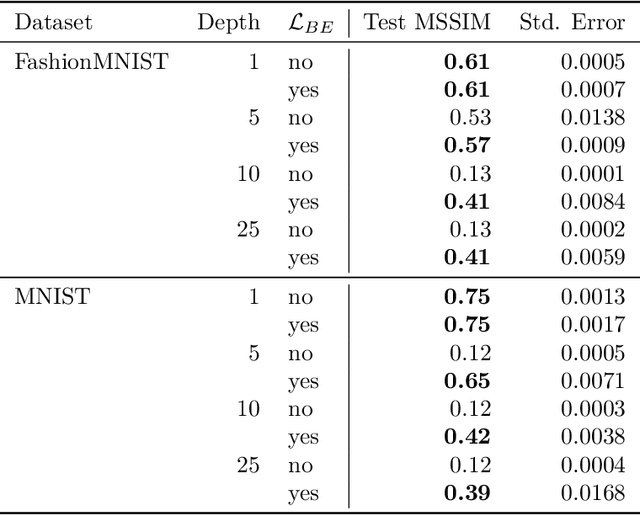

Abstract:Training deep neural networks is a very demanding task, especially challenging is how to adapt architectures to improve the performance of trained models. We can find that sometimes, shallow networks generalize better than deep networks, and the addition of more layers results in higher training and test errors. The deep residual learning framework addresses this degradation problem by adding skip connections to several neural network layers. It would at first seem counter-intuitive that such skip connections are needed to train deep networks successfully as the expressivity of a network would grow exponentially with depth. In this paper, we first analyze the flow of information through neural networks. We introduce and evaluate the batch-entropy which quantifies the flow of information through each layer of a neural network. We prove empirically and theoretically that a positive batch-entropy is required for gradient descent-based training approaches to optimize a given loss function successfully. Based on those insights, we introduce batch-entropy regularization to enable gradient descent-based training algorithms to optimize the flow of information through each hidden layer individually. With batch-entropy regularization, gradient descent optimizers can transform untrainable networks into trainable networks. We show empirically that we can therefore train a "vanilla" fully connected network and convolutional neural network -- no skip connections, batch normalization, dropout, or any other architectural tweak -- with 500 layers by simply adding the batch-entropy regularization term to the loss function. The effect of batch-entropy regularization is not only evaluated on vanilla neural networks, but also on residual networks, autoencoders, and also transformer models over a wide range of computer vision as well as natural language processing tasks.

Greedy Layer Pruning: Decreasing Inference Time of Transformer Models

May 31, 2021

Abstract:Fine-tuning transformer models after unsupervised pre-training reaches a very high performance on many different NLP tasks. Unfortunately, transformers suffer from long inference times which greatly increases costs in production and is a limiting factor for the deployment into embedded devices. One possible solution is to use knowledge distillation, which solves this problem by transferring information from large teacher models to smaller student models, but as it needs an additional expensive pre-training phase, this solution is computationally expensive and can be financially prohibitive for smaller academic research groups. Another solution is to use layer-wise pruning methods, which reach high compression rates for transformer models and avoids the computational load of the pre-training distillation stage. The price to pay is that the performance of layer-wise pruning algorithms is not on par with state-of-the-art knowledge distillation methods. In this paper, greedy layer pruning (GLP) is introduced to (1) outperform current state-of-the-art for layer-wise pruning (2) close the performance gap when compared to knowledge distillation, while (3) using only a modest budget. More precisely, with the methodology presented it is possible to prune and evaluate competitive models on the whole GLUE benchmark with a budget of just $\$300$. Our source code is available on https://github.com/deepopinion/greedy-layer-pruning.

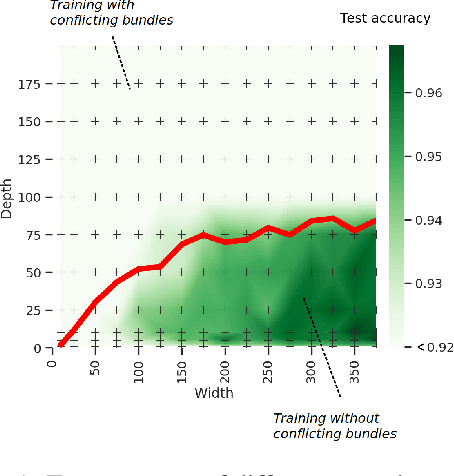

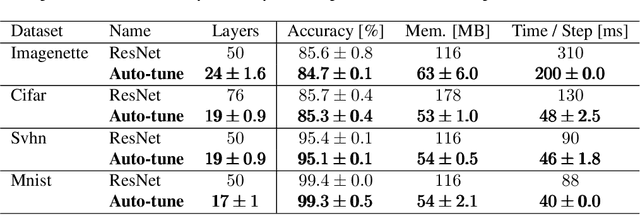

Auto-tuning of Deep Neural Networks by Conflicting Layer Removal

Mar 07, 2021

Abstract:Designing neural network architectures is a challenging task and knowing which specific layers of a model must be adapted to improve the performance is almost a mystery. In this paper, we introduce a novel methodology to identify layers that decrease the test accuracy of trained models. Conflicting layers are detected as early as the beginning of training. In the worst-case scenario, we prove that such a layer could lead to a network that cannot be trained at all. A theoretical analysis is provided on what is the origin of those layers that result in a lower overall network performance, which is complemented by our extensive empirical evaluation. More precisely, we identified those layers that worsen the performance because they would produce what we name conflicting training bundles. We will show that around 60% of the layers of trained residual networks can be completely removed from the architecture with no significant increase in the test-error. We will further present a novel neural-architecture-search (NAS) algorithm that identifies conflicting layers at the beginning of the training. Architectures found by our auto-tuning algorithm achieve competitive accuracy values when compared against more complex state-of-the-art architectures, while drastically reducing memory consumption and inference time for different computer vision tasks. The source code is available on https://github.com/peerdavid/conflicting-bundles

Arguments for the Unsuitability of Convolutional Neural Networks for Non--Local Tasks

Feb 23, 2021

Abstract:Convolutional neural networks have established themselves over the past years as the state of the art method for image classification, and for many datasets, they even surpass humans in categorizing images. Unfortunately, the same architectures perform much worse when they have to compare parts of an image to each other to correctly classify this image. Until now, no well-formed theoretical argument has been presented to explain this deficiency. In this paper, we will argue that convolutional layers are of little use for such problems, since comparison tasks are global by nature, but convolutional layers are local by design. We will use this insight to reformulate a comparison task into a sorting task and use findings on sorting networks to propose a lower bound for the number of parameters a neural network needs to solve comparison tasks in a generalizable way. We will use this lower bound to argue that attention, as well as iterative/recurrent processing, is needed to prevent a combinatorial explosion.

Conflicting Bundles: Adapting Architectures Towards the Improved Training of Deep Neural Networks

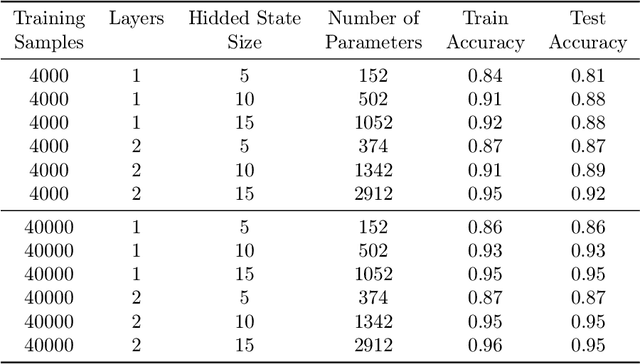

Nov 05, 2020

Abstract:Designing neural network architectures is a challenging task and knowing which specific layers of a model must be adapted to improve the performance is almost a mystery. In this paper, we introduce a novel theory and metric to identify layers that decrease the test accuracy of the trained models, this identification is done as early as at the beginning of training. In the worst-case, such a layer could lead to a network that can not be trained at all. More precisely, we identified those layers that worsen the performance because they produce conflicting training bundles as we show in our novel theoretical analysis, complemented by our extensive empirical studies. Based on these findings, a novel algorithm is introduced to remove performance decreasing layers automatically. Architectures found by this algorithm achieve a competitive accuracy when compared against the state-of-the-art architectures. While keeping such high accuracy, our approach drastically reduces memory consumption and inference time for different computer vision tasks.

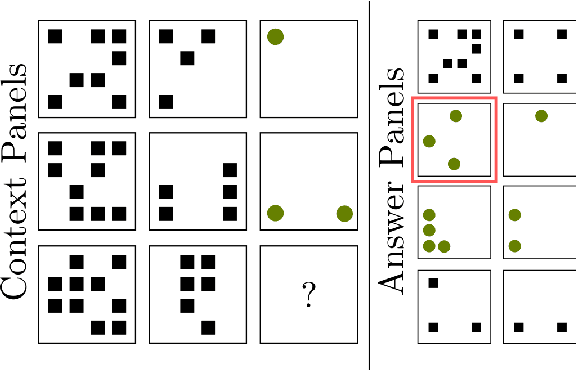

Evaluating the Progress of Deep Learning for Visual Relational Concepts

Jan 29, 2020

Abstract:Convolutional Neural Networks (CNNs) have become the state of the art method for image classification in the last 7 years, but despite the fact that they achieve super human performance on many classification datasets, there are lesser known datasets where they almost fail completely and perform much worse than humans. We will show that these problems correspond to relational concepts as defined by the field of concept learning. Therefore, we will present current deep learning research for visual relational concepts. Analyzing the current literature, we will hypothesise that iterative processing of the input, together with shifting attention between the iterations will be needed to efficiently and reliably solve real world relational concept learning. In addition, we will conclude that many current datasets overestimate the performance of tested systems by providing data in an already pre-attended form.

Adapt or Get Left Behind: Domain Adaptation through BERT Language Model Finetuning for Aspect-Target Sentiment Classification

Aug 30, 2019

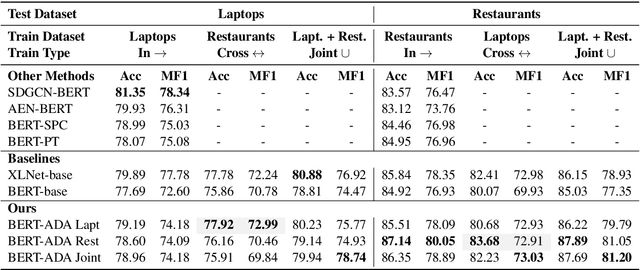

Abstract:Aspect-Target Sentiment Classification (ATSC) is a subtask of Aspect-Based Sentiment Analysis (ABSA), which has many applications e.g. in e-commerce, where data and insights from reviews can be leveraged to create value for businesses and customers. Recently, deep transfer-learning methods have been applied successfully to a myriad of Natural Language Processing (NLP) tasks, including ATSC. Building on top of the prominent the BERT language model, we approach ATSC by using a two-step procedure: Self-supervised domain-specific BERT language model finetuning, followed by supervised task-specific finetuning. Our findings on how to best exploit domain-specific language model finetuning enables us to produce new state-of-the-art performance on the SemEval 2014 Task 4 restaurants dataset. In addition, to explore the real-world robustness of our models, we perform cross-domain evaluation. We show that a cross-domain adapted BERT language model performs significantly better compared to strong baseline models like vanilla BERT-base and XLNet-base.

Limitations of routing-by-agreement based capsule networks

May 21, 2019

Abstract:Classical neural networks add a bias term to the sum of all weighted inputs. For capsule networks, the routing-by-agreement algorithm, which is commonly used to route vectors from lower level capsules to upper level capsules, calculates activations without a bias term. In this paper we show that such a term is also necessary for routing-by-agreement. We will proof that for every input there exists a symmetric input that cannot be distinguished correctly by capsules without a bias term. We show that this limitation impacts the training of deeper capsule networks negatively and that adding a bias term allows for the training of deeper capsule networks. An alternative to a bias is also presented in this paper. This novel method does not introduce additional parameters and is directly encoded in the activation vector of capsules.

Evaluating CNNs on the Gestalt Principle of Closure

Mar 30, 2019

Abstract:Deep convolutional neural networks (CNNs) are widely known for their outstanding performance in classification and regression tasks over high-dimensional data. This made them a popular and powerful tool for a large variety of applications in industry and academia. Recent publications show that seemingly easy classifaction tasks (for humans) can be very challenging for state of the art CNNs. An attempt to describe how humans perceive visual elements is given by the Gestalt principles. In this paper we evaluate AlexNet and GoogLeNet regarding their performance on classifying the correctness of the well known Kanizsa triangles, which heavily rely on the Gestalt principle of closure. Therefore we created various datasets containing valid as well as invalid variants of the Kanizsa triangle. Our findings suggest that perceiving objects by utilizing the principle of closure is very challenging for the applied network architectures but they appear to adapt to the effect of closure.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge