Roderich Gross

Design for One, Deploy for Many: Navigating Tree Mazes with Multiple Agents

Oct 30, 2025

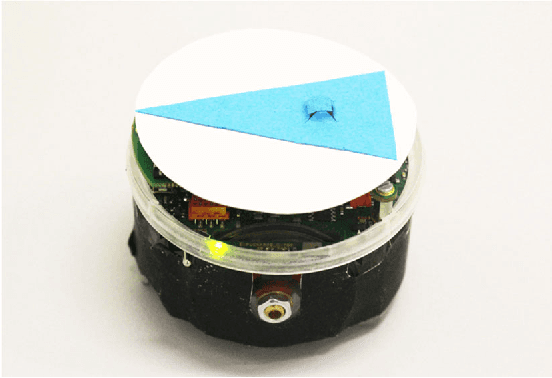

Abstract:Maze-like environments, such as cave and pipe networks, pose unique challenges for multiple robots to coordinate, including communication constraints and congestion. To address these challenges, we propose a distributed multi-agent maze traversal algorithm for environments that can be represented by acyclic graphs. It uses a leader-switching mechanism where one agent, assuming a head role, employs any single-agent maze solver while the other agents each choose an agent to follow. The head role gets transferred to neighboring agents where necessary, ensuring it follows the same path as a single agent would. The multi-agent maze traversal algorithm is evaluated in simulations with groups of up to 300 agents, various maze sizes, and multiple single-agent maze solvers. It is compared against strategies that are na\"ive, or assume either global communication or full knowledge of the environment. The algorithm outperforms the na\"ive strategy in terms of makespan and sum-of-fuel. It is superior to the global-communication strategy in terms of makespan but is inferior to it in terms of sum-of-fuel. The findings suggest it is asymptotically equivalent to the full-knowledge strategy with respect to either metric. Moreover, real-world experiments with up to 20 Pi-puck robots confirm the feasibility of the approach.

Ready, Bid, Go! On-Demand Delivery Using Fleets of Drones with Unknown, Heterogeneous Energy Storage Constraints

Apr 11, 2025

Abstract:Unmanned Aerial Vehicles (UAVs) are expected to transform logistics, reducing delivery time, costs, and emissions. This study addresses an on-demand delivery , in which fleets of UAVs are deployed to fulfil orders that arrive stochastically. Unlike previous work, it considers UAVs with heterogeneous, unknown energy storage capacities and assumes no knowledge of the energy consumption models. We propose a decentralised deployment strategy that combines auction-based task allocation with online learning. Each UAV independently decides whether to bid for orders based on its energy storage charge level, the parcel mass, and delivery distance. Over time, it refines its policy to bid only for orders within its capability. Simulations using realistic UAV energy models reveal that, counter-intuitively, assigning orders to the least confident bidders reduces delivery times and increases the number of successfully fulfilled orders. This strategy is shown to outperform threshold-based methods which require UAVs to exceed specific charge levels at deployment. We propose a variant of the strategy which uses learned policies for forecasting. This enables UAVs with insufficient charge levels to commit to fulfilling orders at specific future times, helping to prioritise early orders. Our work provides new insights into long-term deployment of UAV swarms, highlighting the advantages of decentralised energy-aware decision-making coupled with online learning in real-world dynamic environments.

On the Benefits of Robot Platooning for Navigating Crowded Environments

Oct 18, 2024

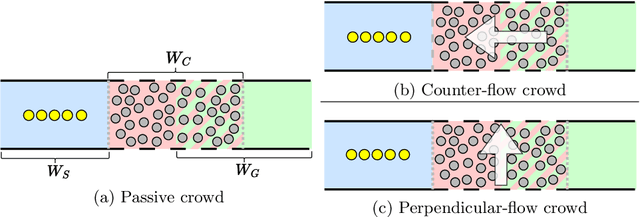

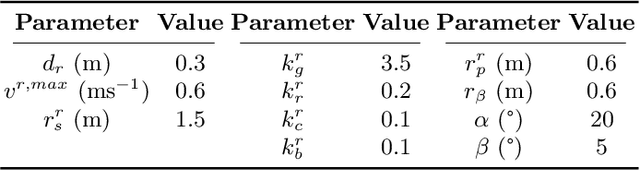

Abstract:This paper studies how groups of robots can effectively navigate through a crowd of agents. It quantifies the performance of platooning and less constrained, greedy strategies, and the extent to which these strategies disrupt the crowd agents. Three scenarios are considered: (i) passive crowds, (ii) counter-flow crowds, and (iii) perpendicular-flow crowds. Through simulations consisting of up to 200 robots, we show that for navigating passive and counter-flow crowds, the platooning strategy is less disruptive and more effective in dense crowds than the greedy strategy, whereas for navigating perpendicular-flow crowds, the greedy strategy outperforms the platooning strategy in either aspect. Moreover, we propose an adaptive strategy that can switch between platooning and greedy behavioral states, and demonstrate that it combines the strengths of both strategies in all the scenarios considered.

GANGs: Generative Adversarial Network Games

Dec 17, 2017

Abstract:Generative Adversarial Networks (GAN) have become one of the most successful frameworks for unsupervised generative modeling. As GANs are difficult to train much research has focused on this. However, very little of this research has directly exploited game-theoretic techniques. We introduce Generative Adversarial Network Games (GANGs), which explicitly model a finite zero-sum game between a generator ($G$) and classifier ($C$) that use mixed strategies. The size of these games precludes exact solution methods, therefore we define resource-bounded best responses (RBBRs), and a resource-bounded Nash Equilibrium (RB-NE) as a pair of mixed strategies such that neither $G$ or $C$ can find a better RBBR. The RB-NE solution concept is richer than the notion of `local Nash equilibria' in that it captures not only failures of escaping local optima of gradient descent, but applies to any approximate best response computations, including methods with random restarts. To validate our approach, we solve GANGs with the Parallel Nash Memory algorithm, which provably monotonically converges to an RB-NE. We compare our results to standard GAN setups, and demonstrate that our method deals well with typical GAN problems such as mode collapse, partial mode coverage and forgetting.

Turing learning: a metric-free approach to inferring behavior and its application to swarms

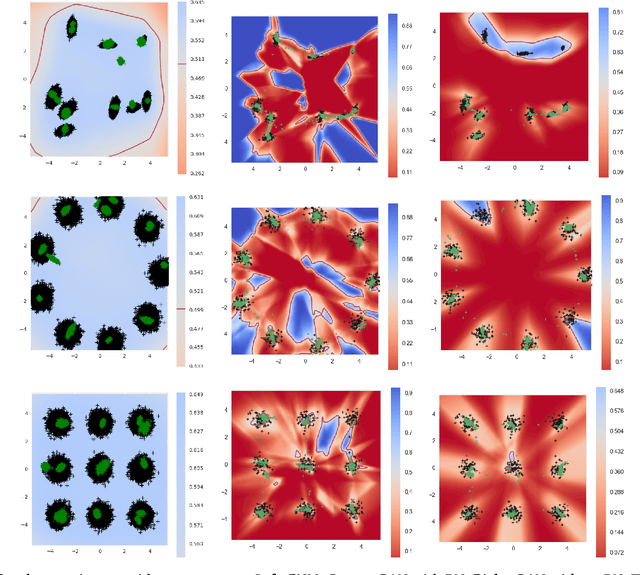

Sep 30, 2016

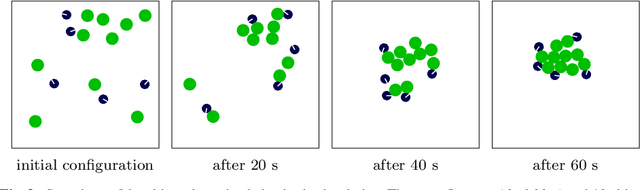

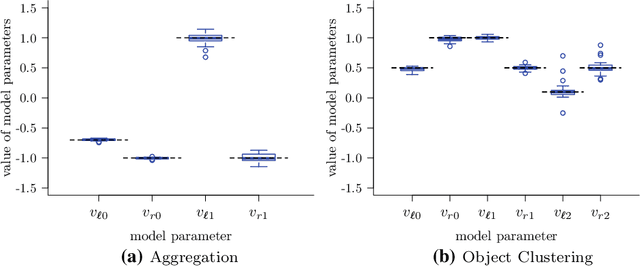

Abstract:We propose Turing Learning, a novel system identification method for inferring the behavior of natural or artificial systems. Turing Learning simultaneously optimizes two populations of computer programs, one representing models of the behavior of the system under investigation, and the other representing classifiers. By observing the behavior of the system as well as the behaviors produced by the models, two sets of data samples are obtained. The classifiers are rewarded for discriminating between these two sets, that is, for correctly categorizing data samples as either genuine or counterfeit. Conversely, the models are rewarded for 'tricking' the classifiers into categorizing their data samples as genuine. Unlike other methods for system identification, Turing Learning does not require predefined metrics to quantify the difference between the system and its models. We present two case studies with swarms of simulated robots and prove that the underlying behaviors cannot be inferred by a metric-based system identification method. By contrast, Turing Learning infers the behaviors with high accuracy. It also produces a useful by-product - the classifiers - that can be used to detect abnormal behavior in the swarm. Moreover, we show that Turing Learning also successfully infers the behavior of physical robot swarms. The results show that collective behaviors can be directly inferred from motion trajectories of individuals in the swarm, which may have significant implications for the study of animal collectives. Furthermore, Turing Learning could prove useful whenever a behavior is not easily characterizable using metrics, making it suitable for a wide range of applications.

* camera-ready version

Why 'GSA: A Gravitational Search Algorithm' Is Not Genuinely Based on the Law of Gravity

Jun 30, 2011Abstract:Why 'GSA: A Gravitational Search Algorithm' Is Not Genuinely Based on the Law of Gravity

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge