Reza Shirvany

ALiSNet: Accurate and Lightweight Human Segmentation Network for Fashion E-Commerce

Apr 15, 2023

Abstract:Accurately estimating human body shape from photos can enable innovative applications in fashion, from mass customization to size and fit recommendations and virtual try-on. Body silhouettes calculated from user pictures are effective representations of the body shape for downstream tasks. Smartphones provide a convenient way for users to capture images of their body, and on-device image processing allows predicting body segmentation while protecting users' privacy. Existing off-the-shelf methods for human segmentation are closed source and cannot be specialized for our application of body shape and measurement estimation. Therefore, we create a new segmentation model by simplifying Semantic FPN with PointRend, an existing accurate model. We finetune this model on a high-quality dataset of humans in a restricted set of poses relevant for our application. We obtain our final model, ALiSNet, with a size of 4MB and $97.6 \pm 1.0\%$ mIoU, compared to Apple Person Segmentation, which has an accuracy of $94.4 \pm 5.7\%$ mIoU on our dataset.
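For readers unfamiliar with the mIoU metric quoted in this abstract, the following is a minimal sketch of how intersection over union can be computed for binary person masks and averaged over a set of images. It is an illustration only, not the authors' evaluation code: the names `iou` and `mean_iou` are hypothetical, and averaging the foreground IoU per image is an assumption about how the reported figure was aggregated.

```python
from typing import Sequence

# A 2D binary mask: 1 marks a person pixel, 0 marks background.
Mask = Sequence[Sequence[int]]


def iou(pred: Mask, target: Mask) -> float:
    """Intersection over union of two same-sized binary masks."""
    intersection = 0
    union = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p and t:
                intersection += 1
            if p or t:
                union += 1
    # Assumption: two empty masks are treated as a perfect match.
    return 1.0 if union == 0 else intersection / union


def mean_iou(preds: Sequence[Mask], targets: Sequence[Mask]) -> float:
    """Average the per-image IoU over a dataset (assumed aggregation)."""
    scores = [iou(p, t) for p, t in zip(preds, targets)]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    predicted = [[[1, 1], [0, 0]], [[1, 0], [0, 0]]]
    ground_truth = [[[1, 0], [0, 0]], [[1, 0], [0, 0]]]
    print(mean_iou(predicted, ground_truth))
```

In the toy example, the first prediction overlaps the ground truth on one of two labelled pixels and the second matches exactly, so the two per-image scores are averaged.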

FitGAN: Fit- and Shape-Realistic Generative Adversarial Networks for Fashion

Jun 23, 2022

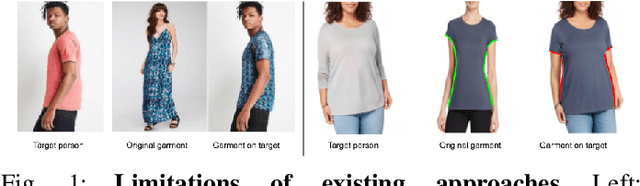

Abstract:Amidst the rapid growth of fashion e-commerce, remote fitting of fashion articles remains a complex and challenging problem and a main driver of customers' frustration. Despite the recent advances in 3D virtual try-on solutions, such approaches still remain limited to a very narrow - if not only a handful - selection of articles, and often for only one size of those fashion items. Other state-of-the-art approaches that aim to help customers find what fits them online mostly require a high level of customer engagement and privacy-sensitive data (such as height, weight, age, gender, belly shape, etc.), or alternatively need images of customers' bodies in tight clothing. They also often lack the ability to produce fit- and shape-aware visual guidance at scale, coming up short by simply advising which size to order that would best match a customer's physical body attributes, without providing any information on how the garment may fit and look. To move beyond the limitations of current approaches, we present FitGAN, a generative adversarial model that explicitly accounts for garments' entangled size and fit characteristics of online fashion at scale. Conditioned on the fit and shape of the articles, our model learns disentangled item representations and generates realistic images reflecting the true fit and shape properties of fashion articles. Through experiments on real-world data at scale, we demonstrate how our approach is capable of synthesizing visually realistic and diverse fits of fashion items and explore its ability to control fit and shape of images for thousands of online garments.

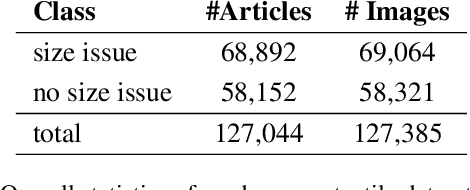

SizeFlags: Reducing Size and Fit Related Returns in Fashion E-Commerce

Jun 07, 2021

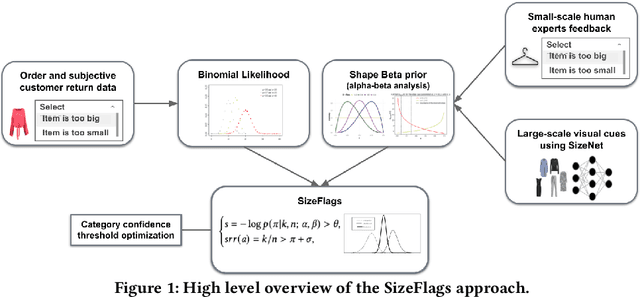

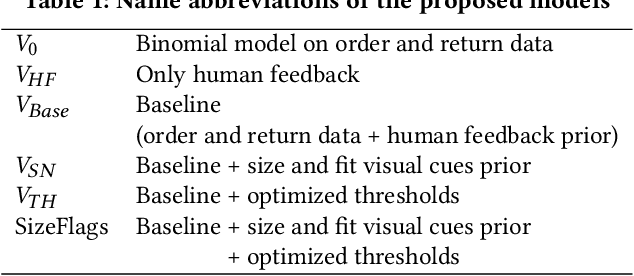

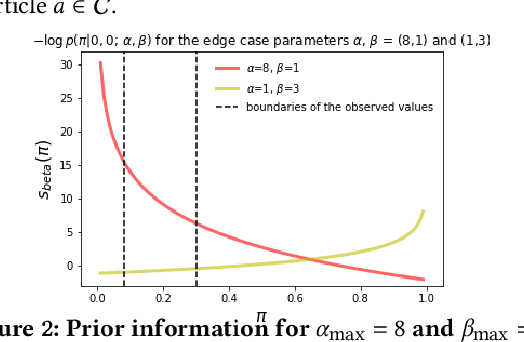

Abstract:E-commerce is growing at an unprecedented rate and the fashion industry has recently witnessed a noticeable shift in customers' order behaviour towards more online shopping. However, fashion articles ordered online do not always find their way to a customer's wardrobe. In fact, a large share of them end up being returned. Finding clothes that fit online is very challenging and accounts for one of the main drivers of increased return rates in fashion e-commerce. Size and fit related returns severely impact 1. the customer experience and satisfaction with online shopping, 2. the environment through an increased carbon footprint, and 3. the profitability of online fashion platforms. Due to poor fit, customers often end up returning articles that they like but that do not fit them, which they have to re-order in a different size. To tackle this issue we introduce SizeFlags, a probabilistic Bayesian model based on weakly annotated large-scale data from customers. Leveraging the advantages of the Bayesian framework, we extend our model to successfully integrate rich priors from human experts' feedback and computer vision intelligence. Through extensive experimentation, large-scale A/B testing and continuous evaluation of the model in production, we demonstrate the strong impact of the proposed approach in robustly reducing size-related returns in online fashion over 14 countries.

A Hierarchical Bayesian Model for Size Recommendation in Fashion

Aug 02, 2019

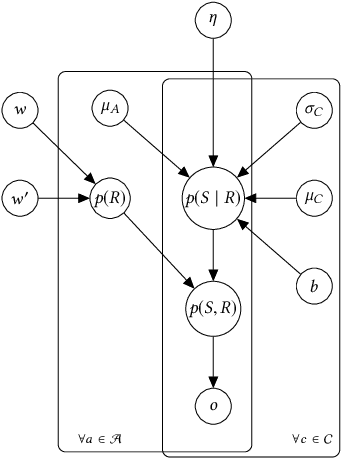

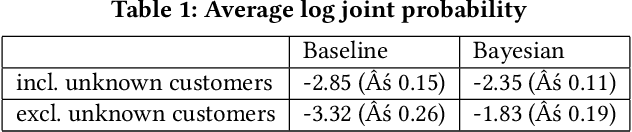

Abstract:We introduce a hierarchical Bayesian approach to tackle the challenging problem of size recommendation in e-commerce fashion. Our approach jointly models a size purchased by a customer and its possible return event: 1. no return, 2. returned too small, and 3. returned too big. Those events are drawn following a multinomial distribution parameterized on the joint probability of each event, built following a hierarchy combining priors. Such a model allows us to incorporate extended domain expertise and article characteristics as prior knowledge, which in turn makes it possible for the underlying parameters to emerge thanks to sufficient data. Experiments are presented on real (anonymized) data from millions of customers along with a detailed discussion on the efficiency of such an approach within a large scale production system.
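To make the idea of a multinomial over return events with a prior more concrete, here is a deliberately simplified sketch: a single-level Dirichlet-multinomial conjugate update over the three events named in the abstract. It is not the paper's hierarchical model. The function `posterior_mean`, the event labels, and the prior pseudo-counts are all hypothetical, chosen only to show how prior knowledge and observed counts can combine.

```python
from typing import Dict

# The three outcomes named in the abstract (labels are illustrative).
EVENTS = ("no_return", "too_small", "too_big")


def posterior_mean(prior: Dict[str, float], counts: Dict[str, int]) -> Dict[str, float]:
    """Posterior mean of multinomial event probabilities under a Dirichlet prior.

    With a Dirichlet(prior) prior and multinomial observations, the posterior
    is Dirichlet(prior + counts); its mean is the normalised sum.
    """
    posterior = {event: prior[event] + counts.get(event, 0) for event in EVENTS}
    total = sum(posterior.values())
    return {event: value / total for event, value in posterior.items()}


if __name__ == "__main__":
    # Hypothetical prior pseudo-counts, e.g. encoding expert belief
    # that most orders of this article in this size are kept.
    prior = {"no_return": 2.0, "too_small": 1.0, "too_big": 1.0}
    # Hypothetical observed outcomes for one article and size.
    observed = {"no_return": 6, "too_small": 9, "too_big": 1}
    for event, probability in posterior_mean(prior, observed).items():
        print(f"{event}: {probability:.2f}")
```

With few observations the prior dominates the estimate, and as counts accumulate the data takes over, which mirrors the abstract's remark that the underlying parameters emerge given sufficient data.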

A Deep Learning System for Predicting Size and Fit in Fashion E-Commerce

Jul 23, 2019

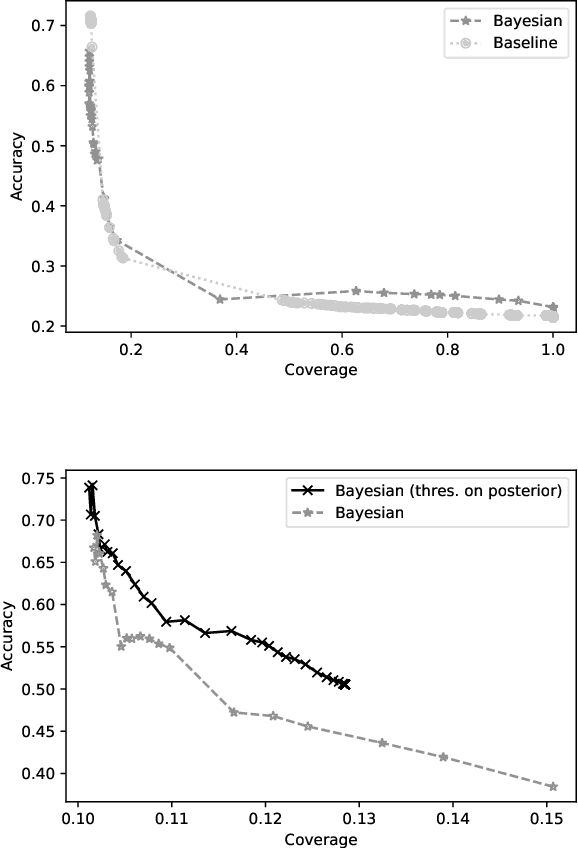

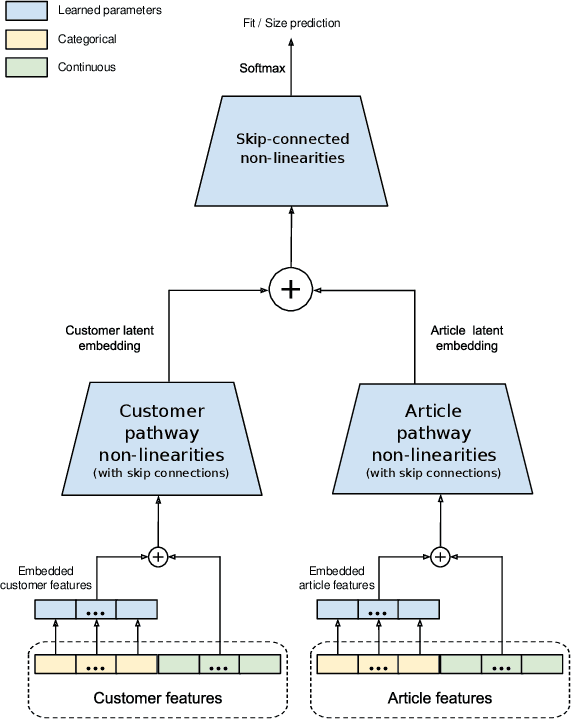

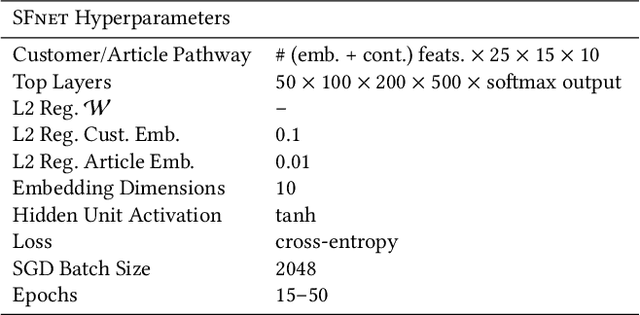

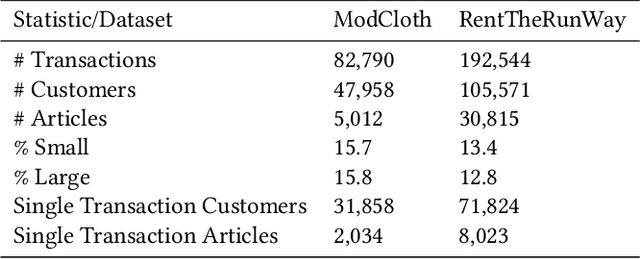

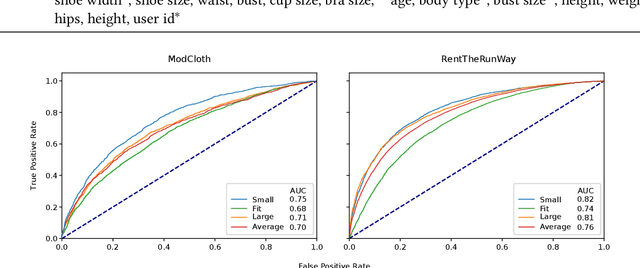

Abstract:Personalized size and fit recommendations bear crucial significance for any fashion e-commerce platform. Predicting the correct fit drives customer satisfaction and benefits the business by reducing costs incurred due to size-related returns. Traditional collaborative filtering algorithms seek to model customer preferences based on their previous orders. A typical challenge for such methods stems from extreme sparsity of customer-article orders. To alleviate this problem, we propose a deep-learning-based content-collaborative methodology for personalized size and fit recommendation. Our proposed method can ingest arbitrary customer and article data and can model multiple individuals or intents behind a single account. The method optimizes a global set of parameters to learn population-level abstractions of size- and fit-relevant information from observed customer-article interactions. It further employs customer- and article-specific embedding variables to learn their properties. Together with learned entity embeddings, the method maps additional customer and article attributes into a latent space to derive personalized recommendations. Application of our method to two publicly available datasets demonstrates an improvement over the state-of-the-art published results. On two proprietary datasets, one containing fit feedback from fashion experts and the other involving customer purchases, we further outperform comparable methodologies, including a recent Bayesian approach for size recommendation.

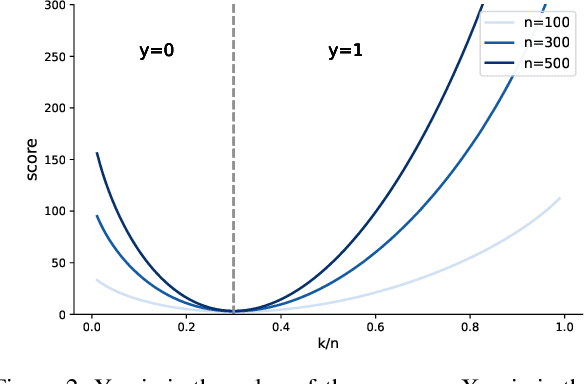

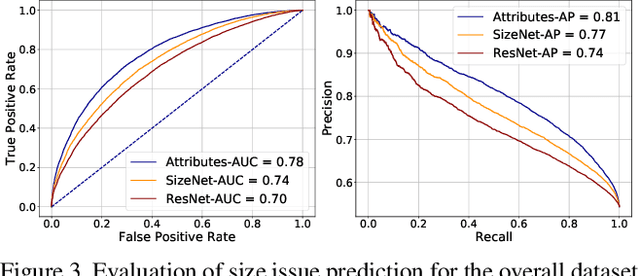

SizeNet: Weakly Supervised Learning of Visual Size and Fit in Fashion Images

May 28, 2019

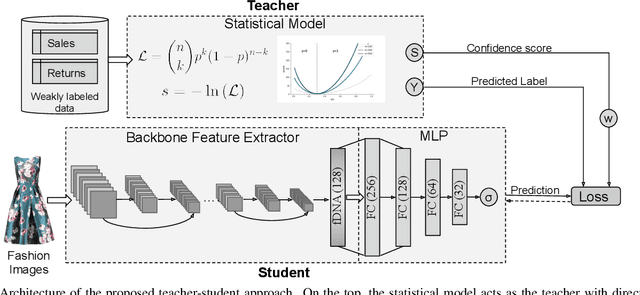

Abstract:Finding clothes that fit is a hot topic in the e-commerce fashion industry. Most approaches addressing this problem are based on statistical methods relying on historical data of articles purchased and returned to the store. Such approaches suffer from the cold start problem for the thousands of articles appearing on the shopping platforms every day, for which no prior purchase history is available. We propose to employ visual data to infer size and fit characteristics of fashion articles. We introduce SizeNet, a weakly-supervised teacher-student training framework that leverages the power of statistical models combined with the rich visual information from article images to learn visual cues for size and fit characteristics, capable of tackling the challenging cold start problem. Detailed experiments are performed on thousands of textile garments, including dresses, trousers, knitwear, tops, etc. from hundreds of different brands.
