Reiner Schmid

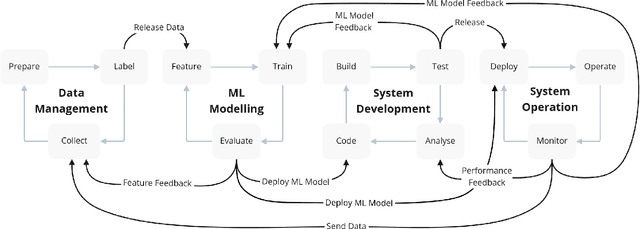

Towards a safe MLOps Process for the Continuous Development and Safety Assurance of ML-based Systems in the Railway Domain

Jul 06, 2023Abstract:Traditional automation technologies alone are not sufficient to enable driverless operation of trains (called Grade of Automation (GoA) 4) on non-restricted infrastructure. The required perception tasks are nowadays realized using Machine Learning (ML) and thus need to be developed and deployed reliably and efficiently. One important aspect to achieve this is to use an MLOps process for tackling improved reproducibility, traceability, collaboration, and continuous adaptation of a driverless operation to changing conditions. MLOps mixes ML application development and operation (Ops) and enables high frequency software releases and continuous innovation based on the feedback from operations. In this paper, we outline a safe MLOps process for the continuous development and safety assurance of ML-based systems in the railway domain. It integrates system engineering, safety assurance, and the ML life-cycle in a comprehensive workflow. We present the individual stages of the process and their interactions. Moreover, we describe relevant challenges to automate the different stages of the safe MLOps process.

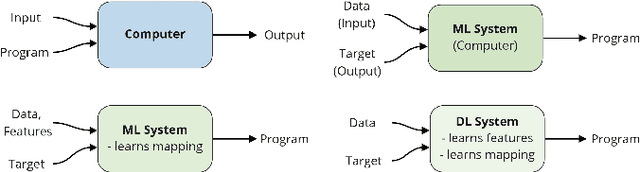

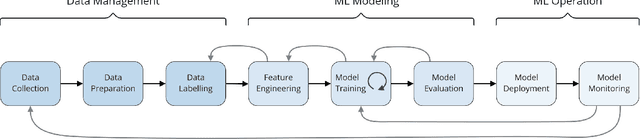

Capturing Dependencies within Machine Learning via a Formal Process Model

Aug 10, 2022

Abstract:The development of Machine Learning (ML) models is more than just a special case of software development (SD): ML models acquire properties and fulfill requirements even without direct human interaction in a seemingly uncontrollable manner. Nonetheless, the underlying processes can be described in a formal way. We define a comprehensive SD process model for ML that encompasses most tasks and artifacts described in the literature in a consistent way. In addition to the production of the necessary artifacts, we also focus on generating and validating fitting descriptions in the form of specifications. We stress the importance of further evolving the ML model throughout its life-cycle even after initial training and testing. Thus, we provide various interaction points with standard SD processes in which ML often is an encapsulated task. Further, our SD process model allows to formulate ML as a (meta-) optimization problem. If automated rigorously, it can be used to realize self-adaptive autonomous systems. Finally, our SD process model features a description of time that allows to reason about the progress within ML development processes. This might lead to further applications of formal methods within the field of ML.

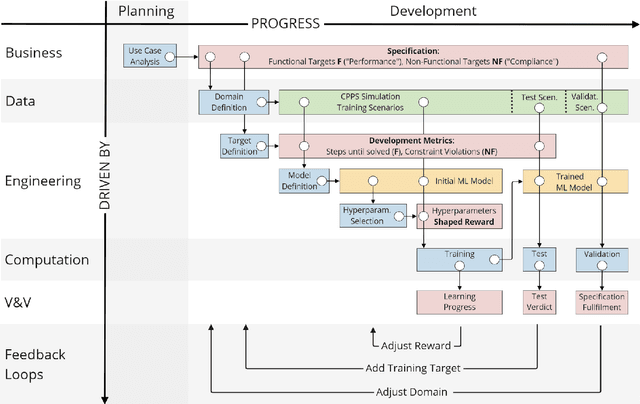

SAT-MARL: Specification Aware Training in Multi-Agent Reinforcement Learning

Dec 14, 2020

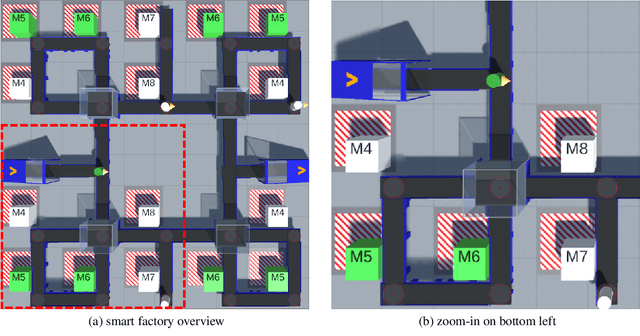

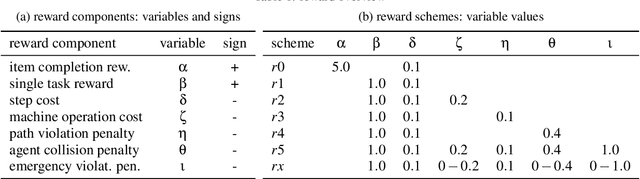

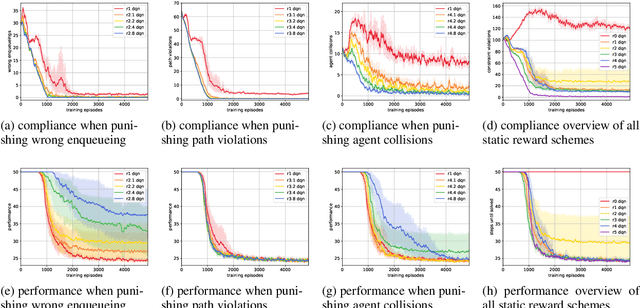

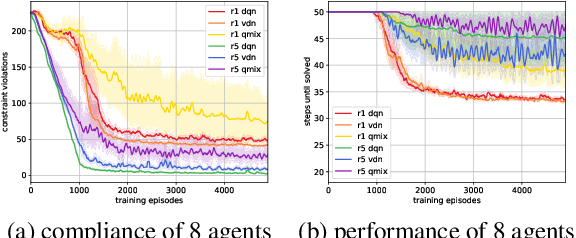

Abstract:A characteristic of reinforcement learning is the ability to develop unforeseen strategies when solving problems. While such strategies sometimes yield superior performance, they may also result in undesired or even dangerous behavior. In industrial scenarios, a system's behavior also needs to be predictable and lie within defined ranges. To enable the agents to learn (how) to align with a given specification, this paper proposes to explicitly transfer functional and non-functional requirements into shaped rewards. Experiments are carried out on the smart factory, a multi-agent environment modeling an industrial lot-size-one production facility, with up to eight agents and different multi-agent reinforcement learning algorithms. Results indicate that compliance with functional and non-functional constraints can be achieved by the proposed approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge