Raymond H. Cuijpers

Bayesian Active Object Recognition and 6D Pose Estimation from Multimodal Contact Sensing

Mar 22, 2026

Abstract:We present an active tactile exploration framework for joint object recognition and 6D pose estimation. The proposed method integrates wrist force/torque sensing, GelSight tactile sensing, and free-space constraints within a Bayesian inference framework that maintains a belief over object class and pose during active tactile exploration. By combining contact and non-contact evidence, the framework reduces ambiguity and improves robustness in the joint class-pose estimation problem. To enable efficient inference in the large hypothesis space, we employ a customized particle filter that progressively samples particles based on new observations. The inferred belief is further used to guide active exploration by selecting informative next touches under reachability constraints. For effective data collection, a motion planning and control framework is developed to plan and execute feasible paths for tactile exploration, handle unexpected contacts and GelSight-surface alignment with tactile servoing. We evaluate the framework in simulation and on a Franka Panda robot using 11 YCB objects. Results show that incorporating tactile and free-space information substantially improves recognition and pose estimation accuracy and stability, while reducing the number of action cycles compared with force/torque-only baselines. Code, dataset, and supplementary material will be made available online.

Designing Persuasive Social Robots for Health Behavior Change: A Systematic Review of Behavior Change Strategies and Evaluation Methods

Jan 13, 2026

Abstract:Social robots are increasingly applied as health behavior change interventions, yet actionable knowledge to guide their design and evaluation remains limited. This systematic review synthesizes (1) the behavior change strategies used in existing HRI studies employing social robots to promote health behavior change, and (2) the evaluation methods applied to assess behavior change outcomes. Relevant literature was identified through systematic database searches and hand searches. Analysis of 39 studies revealed four overarching categories of behavior change strategies: coaching strategies, counseling strategies, social influence strategies, and persuasion-enhancing strategies. These strategies highlight the unique affordances of social robots as behavior change interventions and offer valuable design heuristics. The review also identified key characteristics of current evaluation practices, including study designs, settings, durations, and outcome measures, on the basis of which we propose several directions for future HRI research.

Robot-Initiated Social Control of Sedentary Behavior: Comparing the Impact of Relationship- and Target-Focused Strategies

Feb 12, 2025

Abstract:To design social robots to effectively promote health behavior change, it is essential to understand how people respond to various health communication strategies employed by these robots. This study examines the effectiveness of two types of social control strategies from a social robot, relationship-focused strategies (emphasizing relational consequences) and target-focused strategies (emphasizing health consequences), in encouraging people to reduce sedentary behavior. A two-session lab experiment was conducted (n = 135), where participants first played a game with a robot, followed by the robot persuading them to stand up and move using one of the strategies. Half of the participants joined a second session to have a repeated interaction with the robot. Results showed that relationship-focused strategies motivated participants to stay active longer. Repeated sessions did not strengthen participants' relationship with the robot, but those who felt more attached to the robot responded more actively to the target-focused strategies. These findings offer valuable insights for designing persuasive strategies for social robots in health communication contexts.

A Bayesian framework for active object recognition, pose estimation and shape transfer learning through touch

Sep 10, 2024

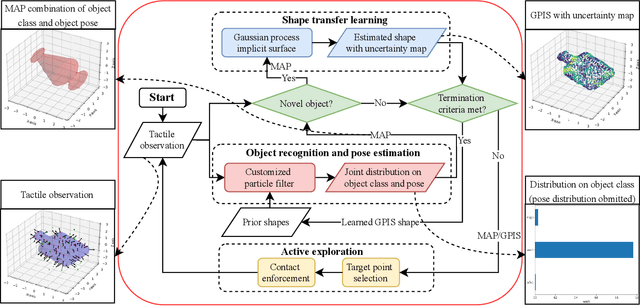

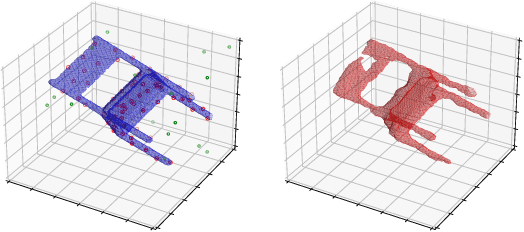

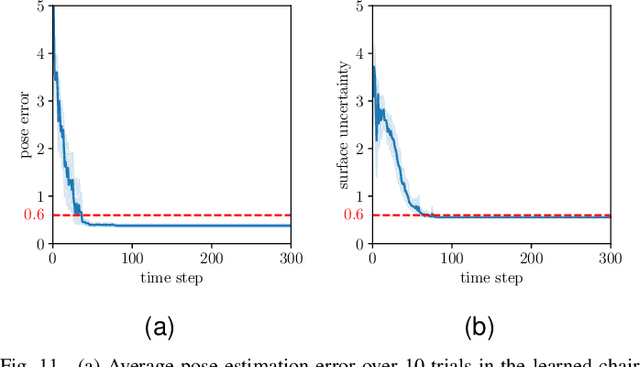

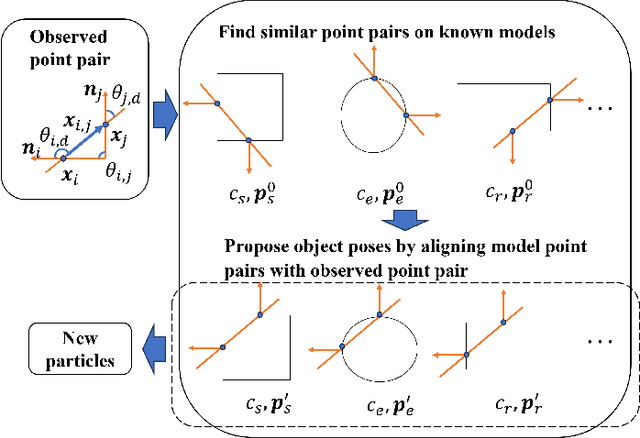

Abstract:As humans can explore and understand the world through the sense of touch, tactile sensing is also an important aspect of robotic perception. In unstructured environments, robots can encounter both known and novel objects, which calls for a method that handles both. In this study, we combine a particle filter (PF) and Gaussian process implicit surface (GPIS) in a unified Bayesian framework. The framework can differentiate between known and novel objects, perform object recognition, estimate pose for known objects, and reconstruct shapes for unknown objects, in an active learning fashion. By grounding the selection of the GPIS prior with the maximum-likelihood-estimation (MLE) shape from the PF, the knowledge about known objects' shapes can be transferred to learn novel shapes. An exploration procedure with global shape estimation is proposed to guide active data acquisition and conclude the exploration when sufficient information is obtained. The performance of the proposed Bayesian framework is evaluated through simulations on known and novel objects, initialized with random poses, and is compared with a rapidly-exploring random tree (RRT). The results show that the proposed exploration procedure, utilizing global shape estimation, achieves faster exploration than the RRT-based local exploration procedure. Overall, results indicate that the proposed framework is effective and efficient in object recognition, pose estimation and shape reconstruction. Moreover, we show that a learned shape can be included as a new prior and used effectively for future object recognition and pose estimation of novel objects.

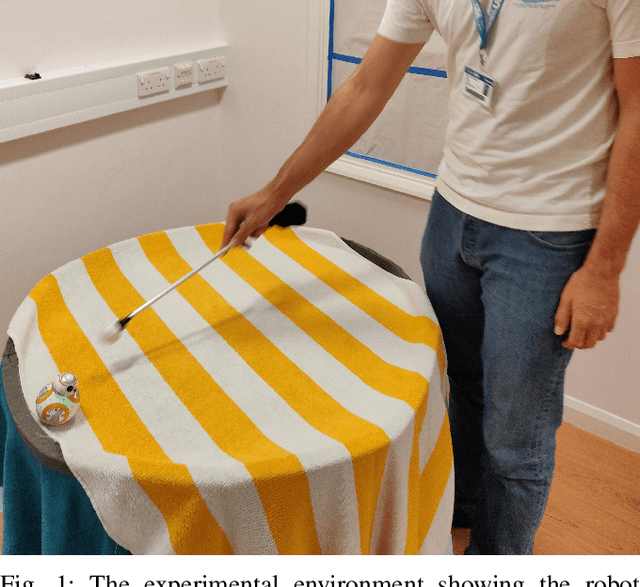

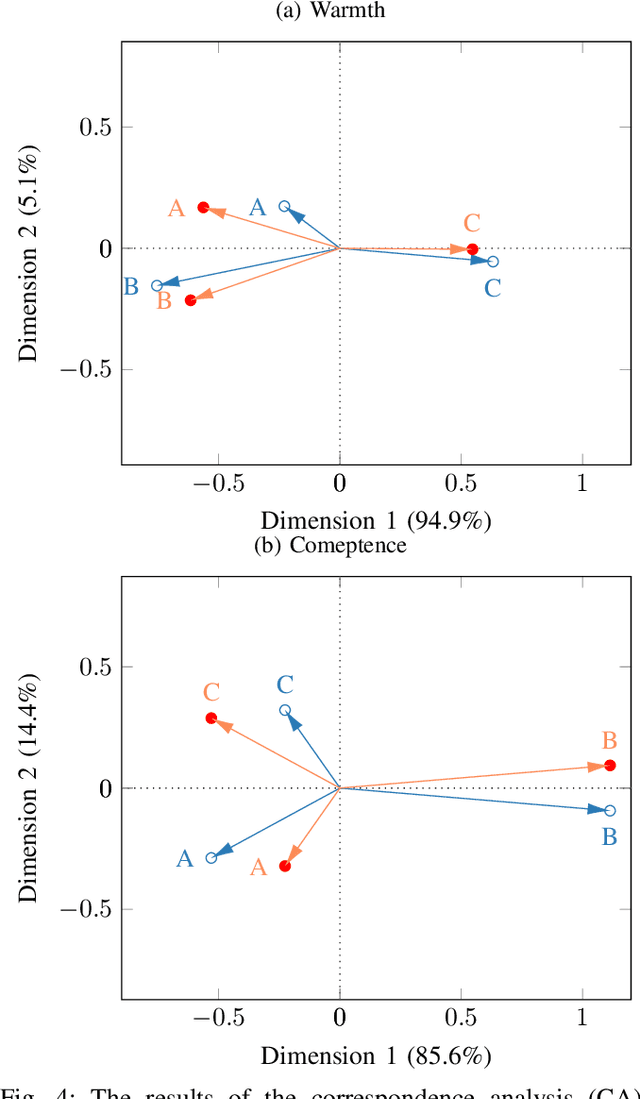

Warmth and Competence to Predict Human Preference of Robot Behavior in Physical Human-Robot Interaction

Aug 13, 2020

Abstract:A solid methodology to understand human perception and preferences in human-robot interaction (HRI) is crucial in designing real-world HRI. Social cognition posits that the dimensions Warmth and Competence are central and universal dimensions characterizing other humans. The Robotic Social Attribute Scale (RoSAS) proposes items for those dimensions suitable for HRI and validated them in a visual observation study. In this paper we complement the validation by showing the usability of these dimensions in a behavior-based, physical HRI study with a fully autonomous robot. We compare the findings with the popular Godspeed dimensions Animacy, Anthropomorphism, Likeability, Perceived Intelligence and Perceived Safety. We found that Warmth and Competence, among all RoSAS and Godspeed dimensions, are the most important predictors for human preferences between different robot behaviors. This predictive power holds even when there is no clear consensus preference or significant factor difference between conditions.
