Ramasubramanian Sundararajan

Double Ramp Loss Based Reject Option Classifier

Dec 08, 2014

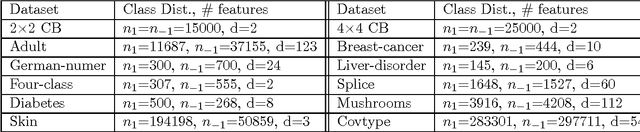

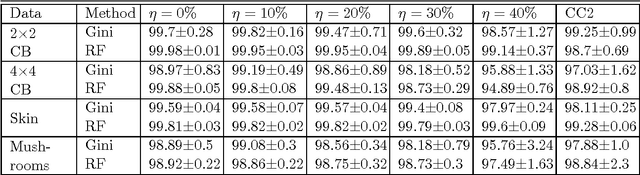

Abstract:We consider the problem of learning reject option classifiers. The goodness of a reject option classifier is quantified using $0-d-1$ loss function wherein a loss $d \in (0,.5)$ is assigned for rejection. In this paper, we propose {\em double ramp loss} function which gives a continuous upper bound for $(0-d-1)$ loss. Our approach is based on minimizing regularized risk under the double ramp loss using {\em difference of convex (DC) programming}. We show the effectiveness of our approach through experiments on synthetic and benchmark datasets. Our approach performs better than the state of the art reject option classification approaches.

An SVM Based Approach for Cardiac View Planning

Jul 11, 2014

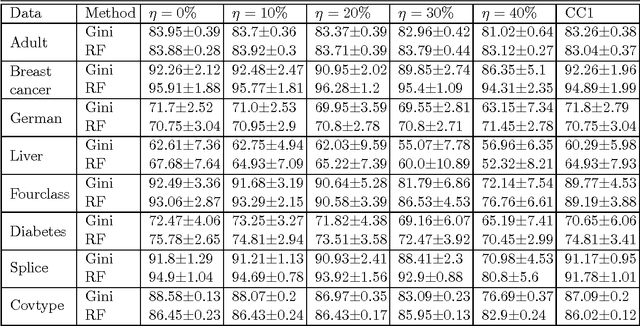

Abstract:We consider the problem of automatically prescribing oblique planes (short axis, 4 chamber and 2 chamber views) in Cardiac Magnetic Resonance Imaging (MRI). A concern with technologist-driven acquisitions of these planes is the quality and time taken for the total examination. We propose an automated solution incorporating anatomical features external to the cardiac region. The solution uses support vector machine regression models wherein complexity and feature selection are optimized using multi-objective genetic algorithms. Additionally, we examine the robustness of our approach by training our models on images with additive Rician-Gaussian mixtures at varying Signal to Noise (SNR) levels. Our approach has shown promising results, with an angular deviation of less than 15 degrees on 90% cases across oblique planes, measured in terms of average 6-fold cross validation performance -- this is generally within acceptable bounds of variation as specified by clinicians.

A multi-instance learning algorithm based on a stacked ensemble of lazy learners

Jul 10, 2014

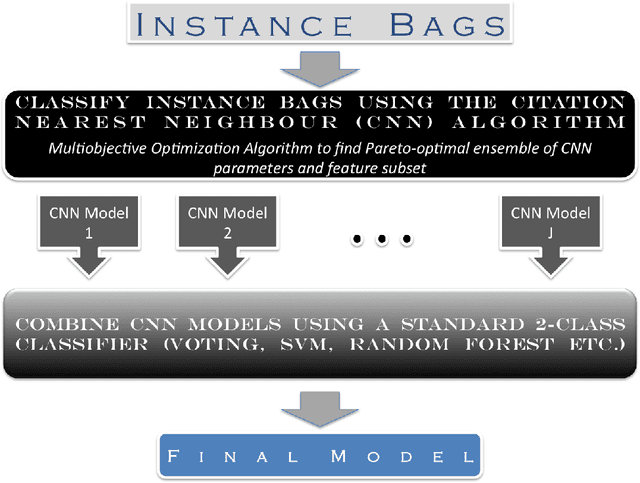

Abstract:This document describes a novel learning algorithm that classifies "bags" of instances rather than individual instances. A bag is labeled positive if it contains at least one positive instance (which may or may not be specifically identified), and negative otherwise. This class of problems is known as multi-instance learning problems, and is useful in situations where the class label at an instance level may be unavailable or imprecise or difficult to obtain, or in situations where the problem is naturally posed as one of classifying instance groups. The algorithm described here is an ensemble-based method, wherein the members of the ensemble are lazy learning classifiers learnt using the Citation Nearest Neighbour method. Diversity among the ensemble members is achieved by optimizing their parameters using a multi-objective optimization method, with the objectives being to maximize Class 1 accuracy and minimize false positive rate. The method has been found to be effective on the Musk1 benchmark dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge