Qingfeng Yao

Imitation and Adaptation Based on Consistency: A Quadruped Robot Imitates Animals from Videos Using Deep Reinforcement Learning

Mar 02, 2022

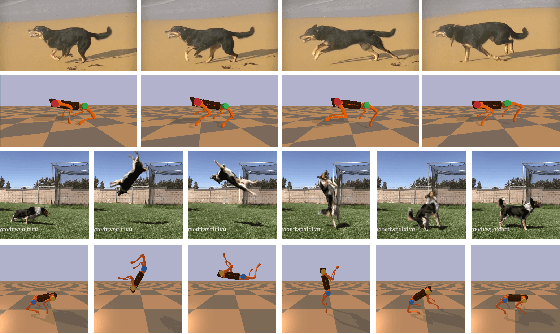

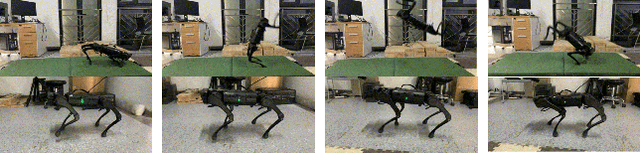

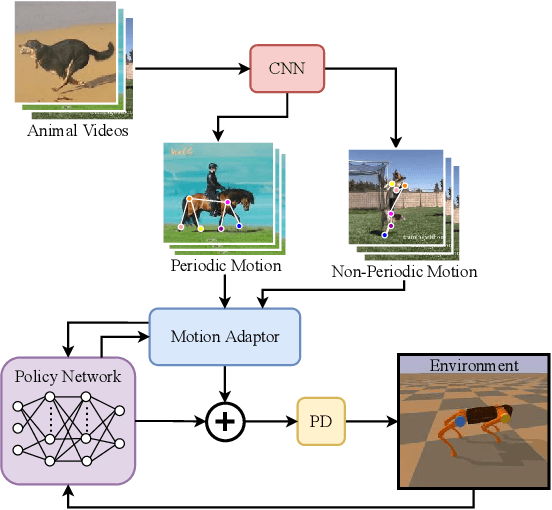

Abstract: The essence of a quadruped's movement is the movement of its center of gravity, which follows a pattern during locomotion. However, gait motion planning for quadruped robots is time-consuming. Animals in nature can provide a large amount of gait information for robots to learn and imitate. Common methods learn animal posture with a motion capture system or from numerous motion data points. In this paper, we propose a video imitation adaptation network (VIAN) that can imitate the actions of animals and adapt them to the robot from a few seconds of video. A deep learning model extracts key points of the animal's motion from videos. The VIAN eliminates noise and extracts key motion information with a motion adaptor, and then feeds the extracted movement function into deep reinforcement learning (DRL) as the motion pattern. To ensure similarity between the learning result and the animal motion in the video, we introduce rewards that are based on the consistency of the motion. DRL explores and learns to maintain balance from the movement patterns in the videos and imitates the actions of animals. Eventually, this allows the model to learn gaits or skills from short motion videos of different animals and to transfer the motion pattern to the real robot.
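The abstract does not give the form of the consistency rewards, so the following is only a minimal sketch of the general idea, not the paper's implementation. It assumes a one-dimensional reference trajectory (for example, center-of-gravity height extracted from video key points), uses a moving average as a stand-in for the motion adaptor's denoising, and scores the robot's trajectory with an exponential of the mean squared error. The names `smooth` and `consistency_reward` and the `scale` parameter are hypothetical.

```python
import math


def smooth(signal, window=3):
    """Moving-average filter; an assumed stand-in for key-point denoising."""
    half = window // 2
    out = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        out.append(sum(signal[lo:hi]) / (hi - lo))
    return out


def consistency_reward(robot_traj, reference_traj, scale=5.0):
    """Return exp(-scale * MSE), which lies in (0, 1].

    The reward is 1.0 when the robot trajectory matches the reference
    exactly and decays toward 0 as the two trajectories diverge.
    This functional form is an assumption, not taken from the paper.
    """
    if not robot_traj or len(robot_traj) != len(reference_traj):
        raise ValueError("trajectories must be non-empty and of equal length")
    mse = sum((r - q) ** 2 for r, q in zip(robot_traj, reference_traj))
    mse /= len(robot_traj)
    return math.exp(-scale * mse)


# Hypothetical noisy reference extracted from video, then denoised.
reference = smooth([0.30, 0.36, 0.29, 0.35, 0.30])
robot = [0.31, 0.32, 0.33, 0.32, 0.31]
reward = consistency_reward(robot, reference)
```

In a DRL loop, a term like this would typically be added to balance and task rewards at each step, so that the policy is encouraged to stay close to the video-derived motion pattern while still learning to remain stable.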

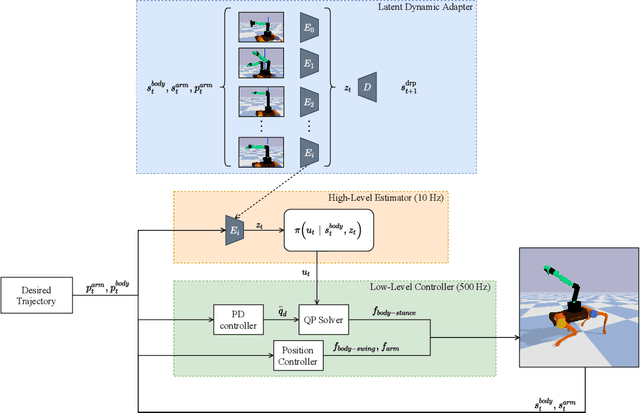

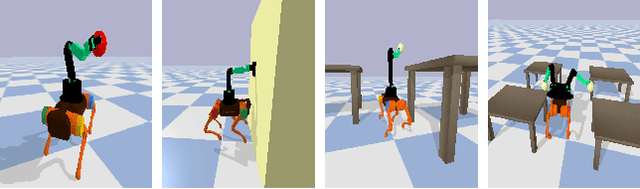

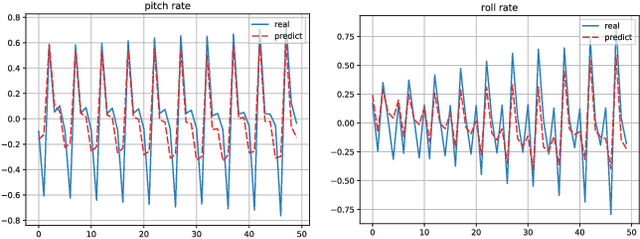

A Transferable Legged Mobile Manipulation Framework Based on Disturbance Predictive Control

Mar 02, 2022

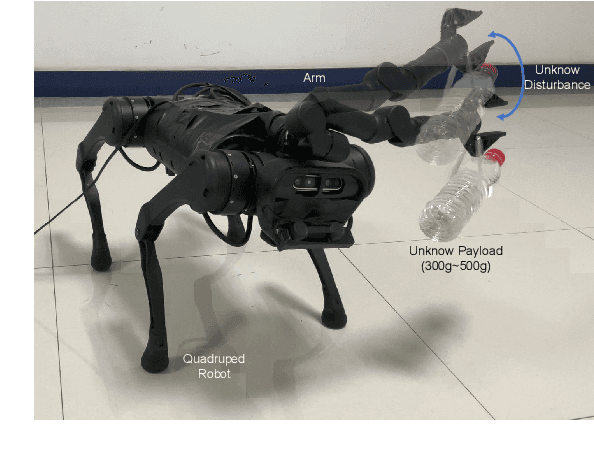

Abstract: Due to their ability to adapt to different terrains, quadruped robots have drawn much attention in the research field of robot learning. Legged mobile manipulation, where a quadruped robot is equipped with a robotic arm, can greatly enhance the performance of the robot in diverse manipulation tasks. Several prior works have investigated legged mobile manipulation from the viewpoint of control theory. However, modeling a unified structure for various robotic arms and quadruped robots is a challenging task. In this paper, we propose a unified framework, disturbance predictive control, in which a reinforcement learning scheme with a latent dynamic adapter is embedded into our proposed low-level controller. Our method adapts well to various types of robotic arms with only a few random motion samples, and the experimental results demonstrate its effectiveness.
