Puneet Batra

Latent Space Explorer: Visual Analytics for Multimodal Latent Space Exploration

Dec 01, 2023Abstract:Machine learning models built on training data with multiple modalities can reveal new insights that are not accessible through unimodal datasets. For example, cardiac magnetic resonance images (MRIs) and electrocardiograms (ECGs) are both known to capture useful information about subjects' cardiovascular health status. A multimodal machine learning model trained from large datasets can potentially predict the onset of heart-related diseases and provide novel medical insights about the cardiovascular system. Despite the potential benefits, it is difficult for medical experts to explore multimodal representation models without visual aids and to test the predictive performance of the models on various subpopulations. To address the challenges, we developed a visual analytics system called Latent Space Explorer. Latent Space Explorer provides interactive visualizations that enable users to explore the multimodal representation of subjects, define subgroups of interest, interactively decode data with different modalities with the selected subjects, and inspect the accuracy of the embedding in downstream prediction tasks. A user study was conducted with medical experts and their feedback provided useful insights into how Latent Space Explorer can help their analysis and possible new direction for further development in the medical domain.

Patient Contrastive Learning: a Performant, Expressive, and Practical Approach to ECG Modeling

Apr 09, 2021

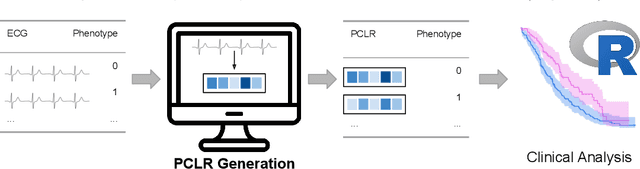

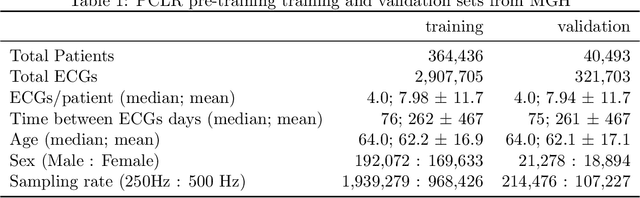

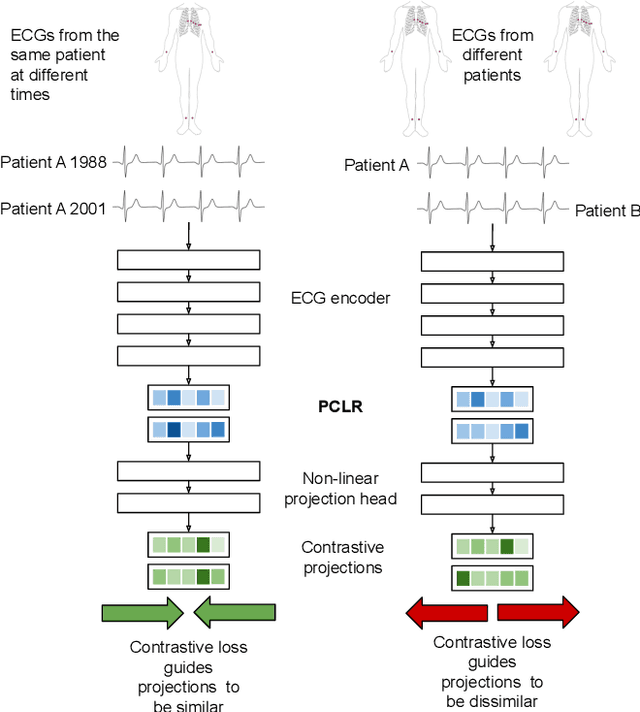

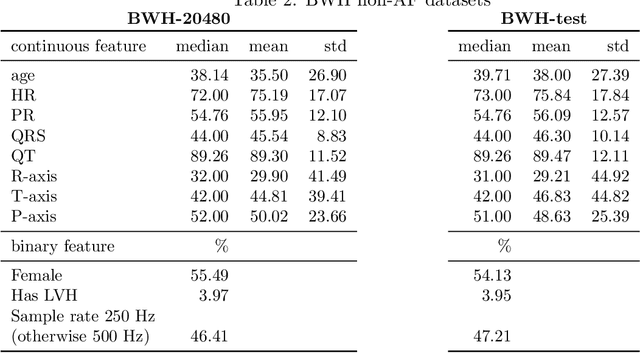

Abstract:Supervised machine learning applications in health care are often limited due to a scarcity of labeled training data. To mitigate this effect of small sample size, we introduce a pre-training approach, Patient Contrastive Learning of Representations (PCLR), which creates latent representations of ECGs from a large number of unlabeled examples. The resulting representations are expressive, performant, and practical across a wide spectrum of clinical tasks. We develop PCLR using a large health care system with over 3.2 million 12-lead ECGs, and demonstrate substantial improvements across multiple new tasks when there are fewer than 5,000 labels. We release our model to extract ECG representations at https://github.com/broadinstitute/ml4h/tree/master/model_zoo/PCLR.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge