Patient Contrastive Learning: a Performant, Expressive, and Practical Approach to ECG Modeling


Apr 09, 2021

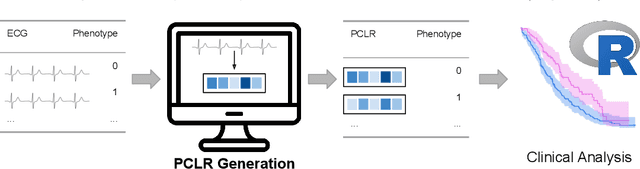

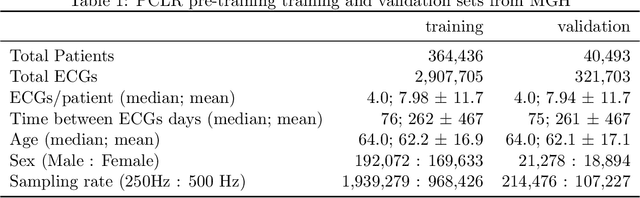

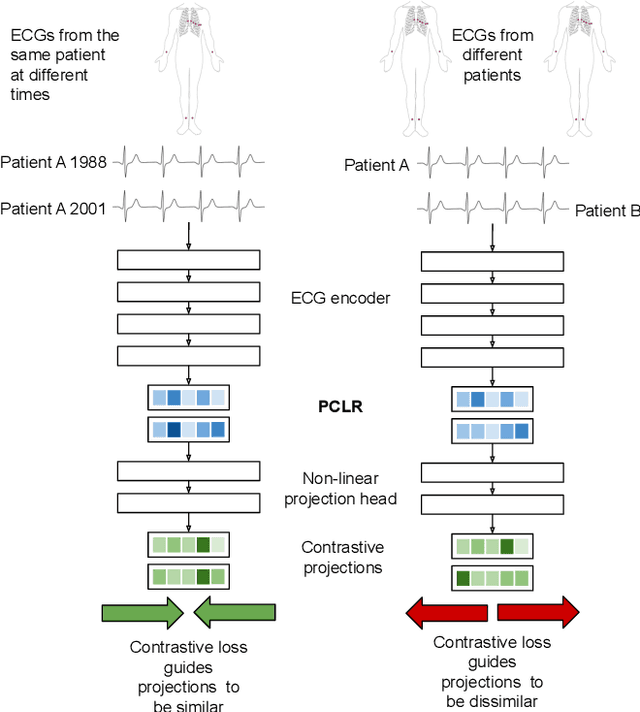

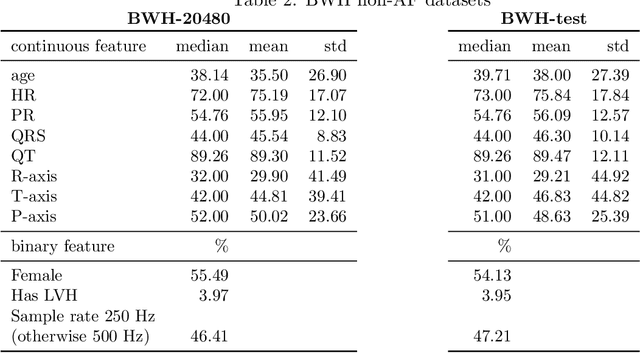

Supervised machine learning applications in health care are often limited by a scarcity of labeled training data. To mitigate the effect of small sample sizes, we introduce a pre-training approach, Patient Contrastive Learning of Representations (PCLR), which creates latent representations of ECGs from a large number of unlabeled examples. The resulting representations are expressive, performant, and practical across a wide spectrum of clinical tasks. We develop PCLR using data from a large health care system with over 3.2 million 12-lead ECGs, and demonstrate substantial improvements across multiple new tasks when fewer than 5,000 labels are available. We release our model for extracting ECG representations at https://github.com/broadinstitute/ml4h/tree/master/model_zoo/PCLR.
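To make the idea of patient-level contrastive pre-training more concrete, here is a minimal sketch of one possible objective. The abstract does not state the loss, so this is an assumption: an NT-Xent (SimCLR-style) loss in which two ECG embeddings from the same patient form a positive pair and all other embeddings in the batch act as negatives. The function name `patient_contrastive_loss`, the `temperature` value, and the random stand-in embeddings are hypothetical and are not taken from the released model.

```python
import numpy as np


def patient_contrastive_loss(z_a, z_b, temperature=0.1):
    """NT-Xent-style loss (assumed objective, not confirmed by the abstract).

    z_a, z_b: arrays of shape (n_patients, dim). Row i of z_a and row i of
    z_b are embeddings of two different ECGs from the same patient.
    """
    n = z_a.shape[0]
    z = np.concatenate([z_a, z_b], axis=0)
    # L2-normalize so the dot product is cosine similarity.
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    sim = (z @ z.T) / temperature
    # An embedding must not be compared with itself.
    np.fill_diagonal(sim, -np.inf)
    # For row i the positive is row i + n, and vice versa.
    pos = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])
    # Numerically stable log-softmax over each row.
    sim_max = sim.max(axis=1, keepdims=True)
    shifted = sim - sim_max
    log_prob = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_prob[np.arange(2 * n), pos].mean())


# Hypothetical stand-in embeddings: 8 patients, 16-dimensional vectors.
rng = np.random.default_rng(0)
patient_signal = rng.normal(size=(8, 16))
ecg_first = patient_signal + 0.01 * rng.normal(size=(8, 16))
ecg_second = patient_signal + 0.01 * rng.normal(size=(8, 16))

aligned = patient_contrastive_loss(ecg_first, ecg_second)
# Reversing the rows pairs each ECG with a different patient's ECG.
mismatched = patient_contrastive_loss(ecg_first, ecg_second[::-1])
print(f"aligned pairs: {aligned:.4f}, mismatched pairs: {mismatched:.4f}")
```

In this sketch the loss is low when ECGs from the same patient map to nearby embeddings and high when the pairing is wrong, which is the property a pre-trained encoder would be optimized for before its representations are reused on small labeled tasks.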
