Podshara Chanrungmaneekul

ManipulationNet: An Infrastructure for Benchmarking Real-World Robot Manipulation with Physical Skill Challenges and Embodied Multimodal Reasoning

Mar 04, 2026

Abstract: Dexterous manipulation enables robots to purposefully alter the physical world, transforming them from passive observers into active agents in unstructured environments. This capability is the cornerstone of physical artificial intelligence. Despite decades of advances in hardware, perception, control, and learning, progress toward general manipulation systems remains fragmented due to the absence of widely adopted standard benchmarks. The central challenge lies in reconciling the variability of the real world with the reproducibility and authenticity required for rigorous scientific evaluation. To address this, we introduce ManipulationNet, a global infrastructure that hosts real-world benchmark tasks for robotic manipulation. ManipulationNet delivers reproducible task setups through standardized hardware kits and enables distributed performance evaluation via a unified software client that provides real-time task instructions and collects benchmarking results. As a persistent and scalable infrastructure, ManipulationNet organizes benchmark tasks into two complementary tracks: 1) the Physical Skills Track, which evaluates low-level physical interaction skills, and 2) the Embodied Reasoning Track, which tests high-level reasoning and multimodal grounding abilities. This design fosters the systematic growth of an interconnected network of real-world abilities and skills, paving the path toward general robotic manipulation. By enabling comparable manipulation research in the real world at scale, this infrastructure establishes a sustainable foundation for measuring long-term scientific progress and identifying capabilities ready for real-world deployment.

ARC-Calib: Autonomous Markerless Camera-to-Robot Calibration via Exploratory Robot Motions

Mar 18, 2025

Abstract: Camera-to-robot (also known as eye-to-hand) calibration is a critical component of vision-based robot manipulation. Traditional marker-based methods often require human intervention for system setup. Furthermore, existing autonomous markerless calibration methods typically rely on pre-trained robot tracking models that impede their application on edge devices and require fine-tuning for novel robot embodiments. To address these limitations, this paper proposes a model-based markerless camera-to-robot calibration framework, ARC-Calib, that is fully autonomous and generalizable across diverse robots and scenarios without requiring extensive data collection or learning. First, exploratory robot motions are introduced to generate easily trackable trajectory-based visual patterns in the camera's image frames. Then, a geometric optimization framework is proposed to exploit the coplanarity and collinearity constraints from the observed motions to iteratively refine the estimated calibration result. Our approach eliminates the need for extra effort in either environmental marker setup or data collection and model training, rendering it highly adaptable across a wide range of real-world autonomous systems. Extensive experiments are conducted in both simulation and the real world to validate its robustness and generalizability.
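
The core geometric idea, that straight-line exploratory motions leave traces whose shape constrains the calibration, can be illustrated with a simplified sketch. Unlike ARC-Calib, it assumes a depth camera that reports 3D end-effector positions in the camera frame. Each traced motion is fitted with a line by SVD (the off-line residual serves as a collinearity check), and the camera-from-robot rotation is then recovered from the paired motion directions with the Kabsch algorithm. This closed-form step is a swapped-in technique rather than the paper's iterative optimization, translation is not estimated, and all function names and poses are hypothetical.

```python
import numpy as np

def fit_line_direction(points):
    """Fit a 3D line by SVD; return (unit direction oriented start->end, RMS off-line residual)."""
    pts = np.asarray(points, dtype=float)
    centered = pts - pts.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    direction = vt[0]
    if direction @ (pts[-1] - pts[0]) < 0:  # resolve the SVD sign ambiguity
        direction = -direction
    along = centered @ direction
    off_line = centered - np.outer(along, direction)
    rms = float(np.sqrt(np.mean(np.sum(off_line**2, axis=1))))
    return direction, rms

def kabsch_rotation(robot_dirs, camera_dirs):
    """Rotation R minimizing sum ||R @ robot_dir - camera_dir||^2 (Kabsch algorithm)."""
    H = np.asarray(robot_dirs, float).T @ np.asarray(camera_dirs, float)
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))  # guard against returning a reflection
    return Vt.T @ np.diag([1.0, 1.0, d]) @ U.T

def rot(axis, angle):
    """Rotation matrix about the x, y, or z axis (index 0, 1, 2)."""
    c, s = np.cos(angle), np.sin(angle)
    i, j = [(1, 2), (2, 0), (0, 1)][axis]
    R = np.eye(3)
    R[i, i], R[i, j], R[j, i], R[j, j] = c, -s, s, c
    return R

# Hypothetical ground truth, used only to synthesize what the camera would observe.
R_true = rot(2, 0.5) @ rot(0, 0.3)
t_true = np.array([0.2, -0.1, 1.0])  # not estimated in this sketch

robot_dirs = np.eye(3)  # exploratory motions along the robot's x, y, z axes
camera_dirs, residuals = [], []
for u in robot_dirs:
    pts_robot = np.outer(np.linspace(0.0, 0.3, 10), u)  # straight 30 cm motion
    pts_camera = pts_robot @ R_true.T + t_true          # observed trajectory
    d, rms = fit_line_direction(pts_camera)
    camera_dirs.append(d)
    residuals.append(rms)

R_est = kabsch_rotation(robot_dirs, camera_dirs)
```

With noise-free synthetic data the recovered rotation matches the ground truth; with real tracking noise, more and longer exploratory motions would be needed to average out the error.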

Wearable Roller Rings to Enable Robot Dexterous In-Hand Manipulation through Active Surfaces

Mar 19, 2024

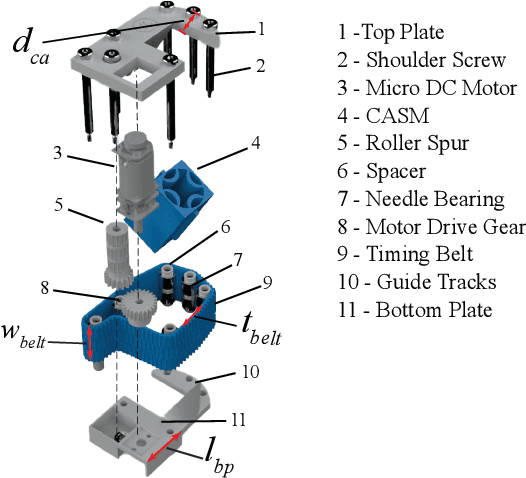

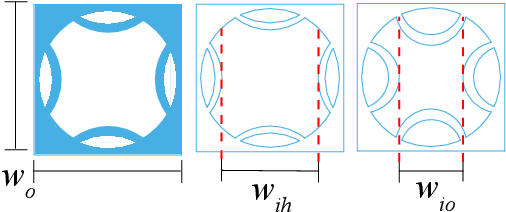

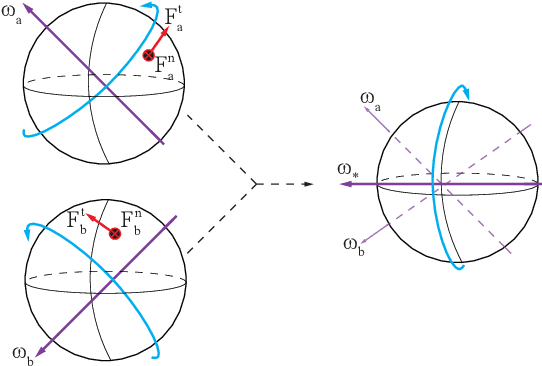

Abstract: In-hand manipulation is a crucial ability for reorienting and repositioning objects within grasps. The main challenges are not only the complexity of the computational models but also the risk of grasp instability caused by active finger motions, such as rolling, sliding, breaking, and remaking contacts. Based on the idea of manipulation without lifting a finger, this paper presents the development of Roller Rings (RR), a modular robotic attachment with active surfaces that is wearable by both robot and human hands. By installing and angling the RRs on grasping systems such that their spatial motions are not collinear, we derive a general differential motion model for the object actuated by the active surfaces. Our motion model shows that a complete in-hand manipulation skill set can be provided by as few as 2 RRs through non-holonomic object motions, while more RRs enable enhanced manipulation dexterity with fewer motion constraints. Through extensive experiments, we wear RRs on both a robot hand and a human hand to evaluate their manipulation capabilities, and show that the RRs can be employed to manipulate objects of arbitrary shape to provide dexterous in-hand manipulation.
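
A generic rigid-body sketch can make the idea of a differential motion model concrete. It assumes, without taking details from the paper, that each RR touches the object at a known point p_i and drives it without slip along a unit rolling direction t_i, so the roller surface speed equals the object's velocity along t_i at that point: s_i = t_i · (v + ω × p_i) = [t_i, p_i × t_i] · [v; ω]. Stacking one row per RR gives a linear map from the object twist [v; ω] to roller speeds. The contact points, tilt angle, and function name are hypothetical.

```python
import numpy as np

def roller_jacobian(contact_points, rolling_dirs):
    """Rows [t_i, p_i x t_i], so that roller speeds s = J @ [v; w] under no-slip along t_i."""
    rows = []
    for p, t in zip(contact_points, rolling_dirs):
        p, t = np.asarray(p, float), np.asarray(t, float)
        t = t / np.linalg.norm(t)
        # t . (w x p) == w . (p x t) by the scalar triple product
        rows.append(np.concatenate([t, np.cross(p, t)]))
    return np.array(rows)

# Hypothetical setup: two RRs on opposite sides of an object, 3 cm from its center,
# tilted by +/-30 degrees so that their rolling directions are not collinear.
r, a = 0.03, np.pi / 6
points = [(0.0, r, 0.0), (0.0, -r, 0.0)]
dirs = [(np.cos(a), 0.0, np.sin(a)), (np.cos(a), 0.0, -np.sin(a))]
J = roller_jacobian(points, dirs)

spin_z = np.array([0.0, 0.0, 0.0, 0.0, 0.0, 1.0])   # 1 rad/s about the z axis
slide_x = np.array([0.01, 0.0, 0.0, 0.0, 0.0, 0.0])  # 1 cm/s translation along x
speeds_spin = J @ spin_z    # opposite roller speeds: [-r cos a, +r cos a]
speeds_slide = J @ slide_x  # equal roller speeds: [0.01 cos a, 0.01 cos a]
```

Driving the two rollers at opposite speeds corresponds to spinning the object, and equal speeds to sliding it, much like a differential drive. With two RRs the map has rank 2, so at any instant the rollers prescribe only two independent components of the object's 6D twist, which is one way to see why a minimal two-RR setup relies on sequenced, non-holonomic motions.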

Interactive Robot-Environment Self-Calibration via Compliant Exploratory Actions

Mar 19, 2024

Abstract: Calibrating robots into their workspaces is crucial for manipulation tasks. Existing calibration techniques often rely on sensors external to the robot (cameras, laser scanners, etc.) or specialized tools. This reliance complicates the calibration process and increases cost and time requirements. Furthermore, the associated setup and measurement procedures require significant human intervention, which makes them more challenging to operate. Using the built-in force-torque sensors, which are nowadays a default component in collaborative robots, this work proposes a self-calibration framework in which robot-environment spatial relations are automatically estimated through compliant exploratory actions by the robot itself. The self-calibration approach converges, verifies its own accuracy, and terminates upon completion autonomously, relying purely on interactive exploration of the environment's geometry. Extensive experiments validate the effectiveness of our self-calibration approach in accurately establishing the robot-environment spatial relationships without the need for additional sensing equipment or any human intervention.
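
A minimal sketch of the converge, verify, and terminate loop, under assumptions of ours rather than the paper's: the environment feature is a single planar surface, a simulated spring contact stands in for the robot's force-torque sensor, and each compliant probe descends vertically until the measured force exceeds a threshold. Contact points are fitted with an SVD plane fit, and probing stops once the normal estimate stabilizes and the fit residual is small. The surface, thresholds, probe locations, and function names are hypothetical.

```python
import numpy as np

# Hypothetical ground-truth surface (unknown to the robot): a slightly tilted table.
N_TRUE = np.array([0.02, -0.01, 1.0]) / np.linalg.norm([0.02, -0.01, 1.0])
P_TRUE = np.array([0.5, 0.0, 0.1])

def surface_z(x, y):
    """Height of the true plane at (x, y)."""
    return P_TRUE[2] - (N_TRUE[0] * (x - P_TRUE[0]) + N_TRUE[1] * (y - P_TRUE[1])) / N_TRUE[2]

def probe(x, y, z_start=0.3, step=1e-4, stiffness=2000.0, f_thresh=2.0):
    """Guarded compliant move: descend until the simulated contact force exceeds f_thresh."""
    z = z_start
    while z > -1.0:
        force = stiffness * max(surface_z(x, y) - z, 0.0)  # spring contact model
        if force > f_thresh:
            # Penetration of ~f_thresh/stiffness biases the offset, not the normal.
            return np.array([x, y, z])
        z -= step
    raise RuntimeError("no contact detected")

def fit_plane(points):
    """Least-squares plane: returns (upward unit normal, centroid, RMS residual)."""
    pts = np.asarray(points, float)
    centroid = pts.mean(axis=0)
    _, _, vt = np.linalg.svd(pts - centroid)
    normal = vt[-1] if vt[-1][2] >= 0 else -vt[-1]
    rms = float(np.sqrt(np.mean(((pts - centroid) @ normal) ** 2)))
    return normal, centroid, rms

def self_calibrate(xy_candidates, angle_tol=2e-3, rms_tol=5e-4):
    contacts, prev = [], None
    normal = centroid = rms = None
    for x, y in xy_candidates:
        contacts.append(probe(x, y))
        if len(contacts) < 3:
            continue
        normal, centroid, rms = fit_plane(contacts)
        # Verify: terminate once the estimate has stabilized and the residual is small.
        if prev is not None:
            change = np.arccos(np.clip(normal @ prev, -1.0, 1.0))
            if change < angle_tol and rms < rms_tol:
                break
        prev = normal
    return normal, centroid, rms, len(contacts)

# The first three probes are deliberately non-collinear so the first fit is well posed.
candidates = [(0.4, -0.1), (0.6, -0.1), (0.5, 0.1), (0.4, 0.1), (0.6, 0.1), (0.5, 0.0)]
normal, centroid, rms, n_probes = self_calibrate(candidates)
```

Checking the stability of successive estimates, rather than probing a fixed number of points, is what lets the loop decide on its own when it has converged.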

Non-Parametric Self-Identification and Model Predictive Control of Dexterous In-Hand Manipulation

Jul 14, 2023

Abstract: Building hand-object models for dexterous in-hand manipulation remains a crucial and open problem. Major challenges include the difficulty of obtaining the geometric and dynamical models of the hand, object, and time-varying contacts, as well as the inevitable physical and perception uncertainties. Instead of building accurate models to map between the actuation inputs and the object motions, this work proposes to enable hand-object systems to continuously approximate their local models via a self-identification process, in which the underlying manipulation model is estimated online through a small number of exploratory actions and non-parametric learning. Unlike most data-driven methods, this process requires only a very small number of data points. By integrating the self-identified hand-object model into a model predictive control framework, the proposed system closes the control loop to provide high-accuracy in-hand manipulation. Furthermore, the proposed self-identification adaptively triggers online updates through additional exploratory actions as soon as the self-identified local models show large discrepancies from the observed manipulation outcomes. We implemented the proposed approach on a sensorless underactuated Yale Model O hand with a single external camera to observe the object's motion. With extensive experiments, we show that the proposed self-identification approach enables accurate and robust dexterous manipulation without requiring an accurate system model or a large amount of data for offline training.
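
The self-identification and control loop can be sketched on a toy problem. Everything here is an assumption for illustration: a hypothetical two-input "hand" whose map from actuator increments to 2D object displacement is hidden from the controller; locally weighted linear regression (a simple non-parametric regressor standing in for whatever learner the authors use) identified from nine exploratory actions, assuming zero actuation gives zero motion; a one-step horizon in place of a full MPC horizon; and a fixed discrepancy threshold that triggers an online model update.

```python
import numpy as np

A_HIDDEN = np.array([[0.8, 0.3], [-0.2, 1.1]])

def hand(action):
    """Hypothetical plant, unknown to the controller: actuator increments -> object displacement."""
    return A_HIDDEN @ action + 0.1 * np.sin(action)

def identify(actions, displacements, center, length_scale=1.0):
    """Locally weighted least squares around `center`; returns a local Jacobian J
    with displacement ~= J @ action (no intercept: zero actuation, zero motion)."""
    X = np.asarray(actions, float)
    Y = np.asarray(displacements, float)
    w = np.exp(-0.5 * np.sum((X - center) ** 2, axis=1) / length_scale**2)
    sw = np.sqrt(w)[:, None]
    coef, *_ = np.linalg.lstsq(X * sw, Y * sw, rcond=None)
    return coef.T

def mpc_step(J, error, u_max=1.0, reg=1e-3):
    """One-step horizon: argmin ||error - J a||^2 + reg ||a||^2, then clip to actuator limits."""
    a = np.linalg.solve(J.T @ J + reg * np.eye(J.shape[1]), J.T @ error)
    return np.clip(a, -u_max, u_max)

# Self-identification from a handful of exploratory actions (3 x 3 grid).
actions = [np.array([u, v]) for u in (-0.5, 0.0, 0.5) for v in (-0.5, 0.0, 0.5)]
displacements = [hand(a) for a in actions]
J = identify(actions, displacements, center=np.zeros(2))

target, position, updates = np.array([1.5, -1.0]), np.zeros(2), 0
for _ in range(30):
    error = target - position
    if np.linalg.norm(error) < 1e-4:
        break
    a = mpc_step(J, error)
    observed = hand(a)
    position = position + observed
    # Re-identify online when the local model disagrees with the observed outcome.
    if np.linalg.norm(observed - J @ a) > 0.01:
        actions.append(a)
        displacements.append(observed)
        J = identify(actions, displacements, center=np.zeros(2))
        updates += 1
```

Because the model is only trusted locally, the update rule adds the observed action-outcome pair whenever the prediction misses by more than the threshold, mirroring the abstract's adaptive re-identification; the threshold value here is arbitrary.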

Kinodynamic Rapidly-exploring Random Forest for Rearrangement-Based Nonprehensile Manipulation

Feb 08, 2023

Abstract: Rearrangement-based nonprehensile manipulation remains a challenging problem due to the high-dimensional problem space and the complex physical uncertainties it entails. We formulate this class of problems as a coupled problem of local rearrangement and global action optimization by incorporating free-space transit motions between constrained rearranging actions. We propose a forest-based kinodynamic planning framework that searches concurrently in multiple problem regions, enabling global exploration of the most task-relevant subspaces while facilitating effective switches between local rearranging actions. By interleaving dynamic-horizon planning and action execution, our framework can adaptively handle real-world uncertainties. With extensive experiments, we show that our framework significantly improves planning efficiency and manipulation effectiveness while remaining robust against various uncertainties.
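
The forest idea can be illustrated with a deliberately simplified toy: several rapidly-exploring random trees rooted in different hypothetical regions of a 2D unit square are grown round-robin, and each extension propagates a few random bounded-speed controls and keeps the one that lands closest to the sample (a common kinodynamic RRT extension). A circular obstacle stands in for the task constraints. The sketch omits the paper's pushing physics, free-space transit motions, and interleaved execution; the roots, obstacle, and parameters are assumptions.

```python
import numpy as np

OBSTACLE_CENTER = np.array([0.5, 0.5])  # hypothetical circular obstacle
OBSTACLE_RADIUS = 0.2

def edge_free(a, b, checks=5):
    """Check sampled points along segment a->b against the obstacle and the unit-square bounds."""
    for s in np.linspace(0.0, 1.0, checks):
        p = a + s * (b - a)
        if np.linalg.norm(p - OBSTACLE_CENTER) <= OBSTACLE_RADIUS or np.any(p < 0.0) or np.any(p > 1.0):
            return False
    return True

def grow_forest(roots, goal, goal_tol=0.05, step=0.05, n_controls=8, iters=5000, seed=0):
    """Grow one tree per root, round-robin; return (tree index, path) of the first tree to reach goal."""
    rng = np.random.default_rng(seed)
    goal = np.asarray(goal, float)
    trees = [{"nodes": [np.asarray(r, float)], "parent": [-1]} for r in roots]
    for i in range(iters):
        k = i % len(trees)  # round-robin: every region keeps being explored
        tree = trees[k]
        sample = goal if rng.random() < 0.1 else rng.uniform(0.0, 1.0, size=2)  # 10% goal bias
        nodes = np.array(tree["nodes"])
        near = int(np.argmin(np.linalg.norm(nodes - sample, axis=1)))
        # Propagate several random bounded-speed controls; keep the one closest to the sample.
        headings = rng.uniform(0.0, 2.0 * np.pi, size=n_controls)
        candidates = nodes[near] + step * np.stack([np.cos(headings), np.sin(headings)], axis=1)
        best = candidates[np.argmin(np.linalg.norm(candidates - sample, axis=1))]
        if not edge_free(nodes[near], best):
            continue
        tree["nodes"].append(best)
        tree["parent"].append(near)
        if np.linalg.norm(best - goal) < goal_tol:
            path, j = [], len(tree["nodes"]) - 1
            while j != -1:  # walk parent pointers back to the root
                path.append(tree["nodes"][j])
                j = tree["parent"][j]
            return k, path[::-1]
    return None, None

roots = [(0.1, 0.1), (0.9, 0.1)]  # hypothetical region seeds
goal = (0.9, 0.9)
tree_index, path = grow_forest(roots, goal)
```

Growing the trees round-robin keeps every region explored even when one tree is blocked (here, the straight route from the lower-left root is cut by the obstacle), which is the intuition behind searching several problem regions concurrently.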