Pedro Herruzo

Traffic4cast at NeurIPS 2021 -- Temporal and Spatial Few-Shot Transfer Learning in Gridded Geo-Spatial Processes

Apr 01, 2022

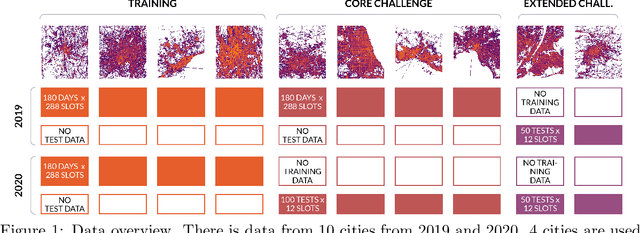

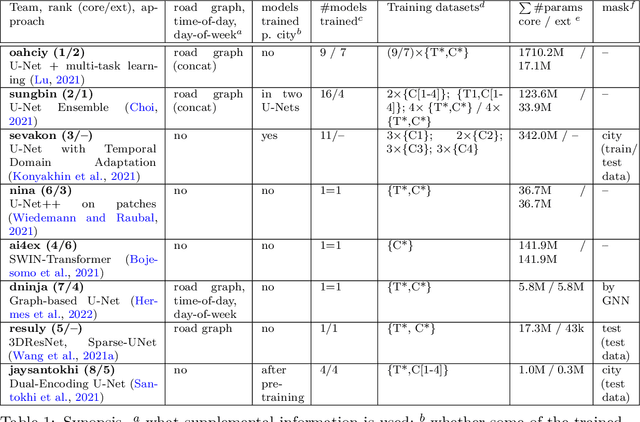

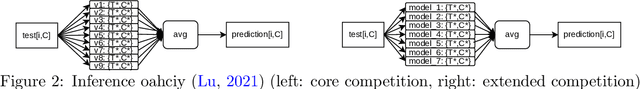

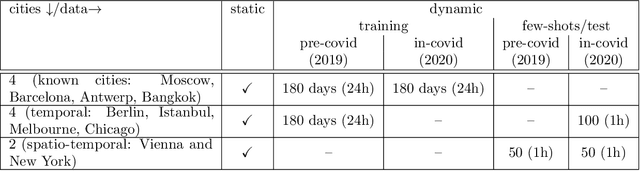

Abstract:The IARAI Traffic4cast competitions at NeurIPS 2019 and 2020 showed that neural networks can successfully predict future traffic conditions 1 hour into the future on simply aggregated GPS probe data in time and space bins. We thus reinterpreted the challenge of forecasting traffic conditions as a movie completion task. U-Nets proved to be the winning architecture, demonstrating an ability to extract relevant features in this complex real-world geo-spatial process. Building on the previous competitions, Traffic4cast 2021 now focuses on the question of model robustness and generalizability across time and space. Moving from one city to an entirely different city, or moving from pre-COVID times to times after COVID hit the world thus introduces a clear domain shift. We thus, for the first time, release data featuring such domain shifts. The competition now covers ten cities over 2 years, providing data compiled from over 10^12 GPS probe data. Winning solutions captured traffic dynamics sufficiently well to even cope with these complex domain shifts. Surprisingly, this seemed to require only the previous 1h traffic dynamic history and static road graph as input.

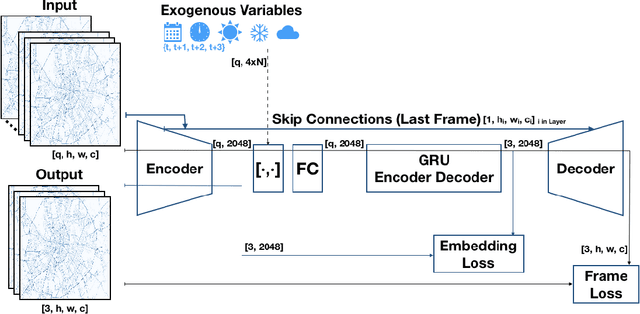

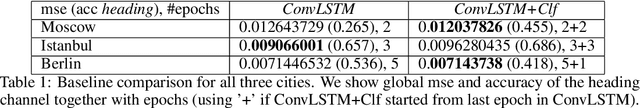

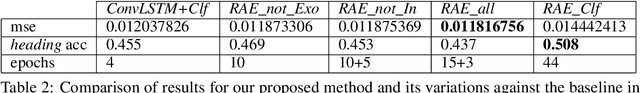

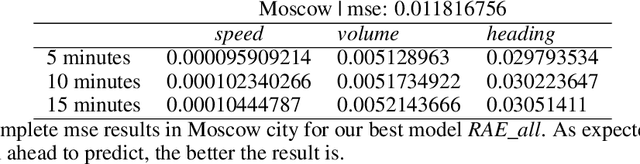

Recurrent Autoencoder with Skip Connections and Exogenous Variables for Traffic Forecasting

Oct 28, 2019

Abstract:The increasing complexity of mobility plus the growing population in cities, together with the importance of privacy when sharing data from vehicles or any device, makes traffic forecasting that uses data from infrastructure and citizens an open and challenging task. In this paper, we introduce a novel approach to deal with predictions of speed, volume, and main traffic direction, in a new aggregated way of traffic data presented as videos. The approach leverages the continuity in a sequence of frames and its dynamics, learning to predict changing areas in a low dimensional space and then, recovering static features when reconstructing the original space. Exogenous variables like weather, time and calendar are also added in the model. Furthermore, we introduce a novel sampling approach for sequences that ensures diversity when creating batches, running in parallel to the optimization process.

Analyzing First-Person Stories Based on Socializing, Eating and Sedentary Patterns

Jul 25, 2017

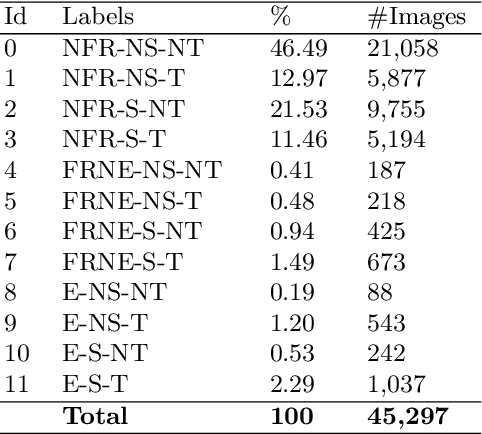

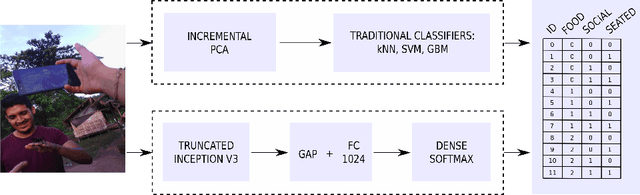

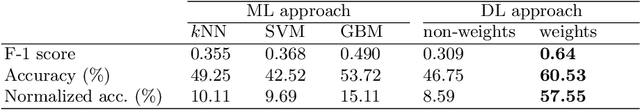

Abstract:First-person stories can be analyzed by means of egocentric pictures acquired throughout the whole active day with wearable cameras. This manuscript presents an egocentric dataset with more than 45,000 pictures from four people in different environments such as working or studying. All the images were manually labeled to identify three patterns of interest regarding people's lifestyle: socializing, eating and sedentary. Additionally, two different approaches are proposed to classify egocentric images into one of the 12 target categories defined to characterize these three patterns. The approaches are based on machine learning and deep learning techniques, including traditional classifiers and state-of-art convolutional neural networks. The experimental results obtained when applying these methods to the egocentric dataset demonstrated their adequacy for the problem at hand.

Can a CNN Recognize Catalan Diet?

Jul 29, 2016

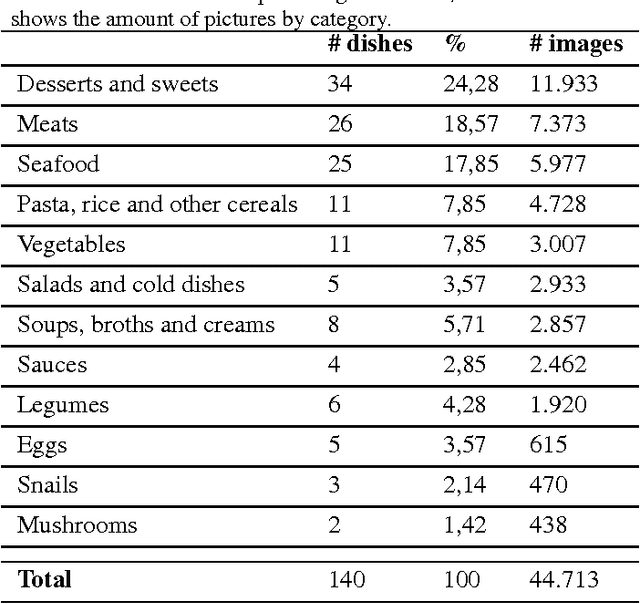

Abstract:Nowadays, we can find several diseases related to the unhealthy diet habits of the population, such as diabetes, obesity, anemia, bulimia and anorexia. In many cases, these diseases are related to the food consumption of people. Mediterranean diet is scientifically known as a healthy diet that helps to prevent many metabolic diseases. In particular, our work focuses on the recognition of Mediterranean food and dishes. The development of this methodology would allow to analise the daily habits of users with wearable cameras, within the topic of lifelogging. By using automatic mechanisms we could build an objective tool for the analysis of the patient's behaviour, allowing specialists to discover unhealthy food patterns and understand the user's lifestyle. With the aim to automatically recognize a complete diet, we introduce a challenging multi-labeled dataset related to Mediterranean diet called FoodCAT. The first type of label provided consists of 115 food classes with an average of 400 images per dish, and the second one consists of 12 food categories with an average of 3800 pictures per class. This dataset will serve as a basis for the development of automatic diet recognition. In this context, deep learning and more specifically, Convolutional Neural Networks (CNNs), currently are state-of-the-art methods for automatic food recognition. In our work, we compare several architectures for image classification, with the purpose of diet recognition. Applying the best model for recognising food categories, we achieve a top-1 accuracy of 72.29\%, and top-5 of 97.07\%. In a complete diet recognition of dishes from Mediterranean diet, enlarged with the Food-101 dataset for international dishes recognition, we achieve a top-1 accuracy of 68.07\%, and top-5 of 89.53\%, for a total of 115+101 food classes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge