Panyu Chen

Models That Are Interpretable But Not Transparent

Feb 26, 2025

Abstract: Faithful explanations are essential for machine learning models in high-stakes applications. Inherently interpretable models are well suited to these applications because they naturally provide faithful explanations by revealing their decision logic. However, model designers often need to keep these models proprietary to preserve their value. This creates a tension: we need models that are interpretable, allowing human decision-makers to understand and justify predictions, yet not transparent, so that attackers cannot easily replicate the model's decision boundary. Shielding the decision boundary is particularly challenging when explanations must also be completely faithful, since such explanations reveal the model's true logic over an entire subspace around each query point. This work presents FaithfulDefense, an approach that generates completely faithful explanations for logical models while revealing as little as possible about the decision boundary. FaithfulDefense is based on a maximum set cover formulation, for which we provide multiple formulations that take advantage of submodularity.
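The abstract names a maximum set cover formulation and submodularity but gives no further detail. As a generic illustration only, and not FaithfulDefense itself, the classic greedy algorithm for maximum coverage picks, at each step, the candidate set that covers the most not-yet-covered elements. Because coverage is a monotone submodular function, this greedy rule is known to achieve a (1 - 1/e) approximation under a budget of `k` sets. The function name `greedy_max_coverage`, the budget `k`, and the toy data are hypothetical; how the paper maps explanations to sets and decision-boundary information to elements is not specified here.

```python
from typing import Dict, Hashable, List, Set


def greedy_max_coverage(candidates: Dict[Hashable, Set[Hashable]], k: int) -> List[Hashable]:
    """Hypothetical sketch: greedily choose up to k candidate sets
    to maximize the number of distinct elements covered."""
    chosen: List[Hashable] = []
    covered: Set[Hashable] = set()
    remaining = dict(candidates)  # copy so the caller's dict is untouched
    for _ in range(k):
        best, best_gain = None, 0
        # Marginal gain = elements this set would newly cover.
        for name, elems in remaining.items():
            gain = len(elems - covered)
            if gain > best_gain:
                best, best_gain = name, gain
        if best is None:  # no remaining set adds anything new
            break
        chosen.append(best)
        covered |= remaining.pop(best)
    return chosen


if __name__ == "__main__":
    toy = {"a": {1, 2, 3}, "b": {3, 4}, "c": {4, 5, 6, 7}}
    print(greedy_max_coverage(toy, 2))  # ['c', 'a']
```

On the toy data, "c" is picked first (4 new elements), then "a" (3 new elements, versus 1 for "b"). Diminishing marginal gains, the defining property of submodularity, are what make this simple rule provably near-optimal.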

Kuaiji: the First Chinese Accounting Large Language Model

Feb 24, 2024

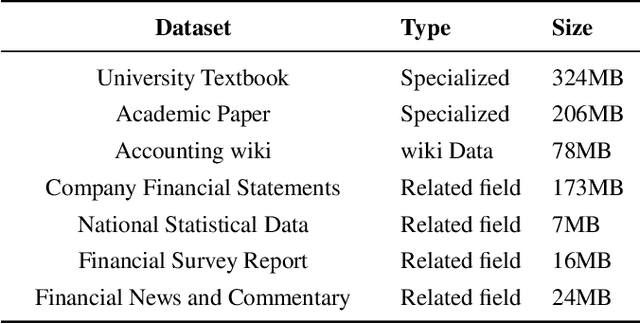

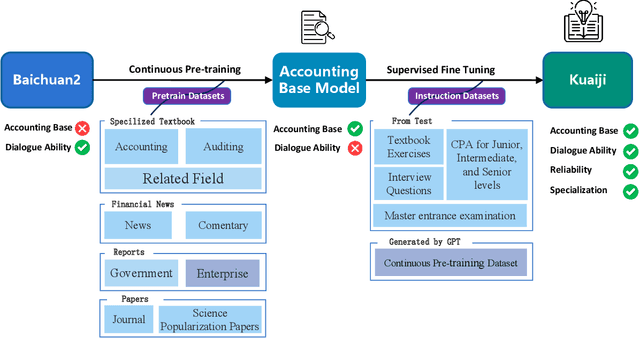

Abstract: Large Language Models (LLMs) such as ChatGPT and GPT-4 have demonstrated impressive proficiency in comprehending and generating natural language. However, they struggle to adapt to specialized domains such as accounting. To address this challenge, we introduce Kuaiji, a tailored Accounting Large Language Model. Kuaiji is meticulously fine-tuned using the Baichuan framework, which encompasses continuous pre-training and supervised fine-tuning. Supported by CAtAcctQA, a large dataset of genuine accountant-client dialogues, Kuaiji exhibits exceptional accuracy and response speed. Our contributions include the creation of the first Chinese accounting dataset, the establishment of Kuaiji as a leading open-source Chinese accounting LLM, and the validation of its efficacy in real-world accounting scenarios.
