Pablo Marin

Anomaly Detection in Video Data Based on Probabilistic Latent Space Models

Mar 17, 2020

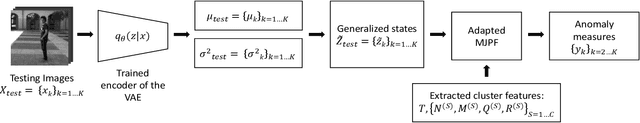

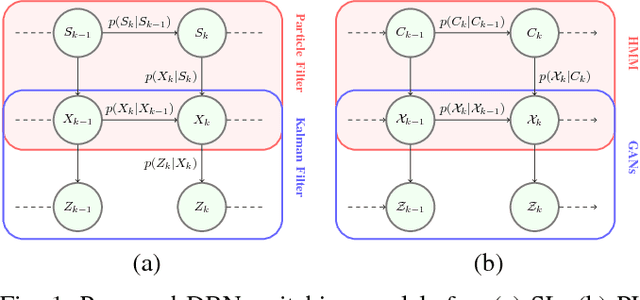

Abstract: This paper proposes a method for detecting anomalies in video data. A Variational Autoencoder (VAE) is used to reduce the dimensionality of video frames, generating latent space information that is comparable to low-dimensional sensory data (e.g., positioning, steering angle), which makes it feasible to develop a consistent multi-modal architecture for autonomous vehicles. An Adapted Markov Jump Particle Filter defined by discrete and continuous inference levels is employed to predict the following frames and detect anomalies in new video sequences. Our method is evaluated on different video scenarios where a semi-autonomous vehicle performs a set of tasks in a closed environment.
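The predict-then-compare idea in this abstract can be illustrated with a minimal sketch. It is not the paper's method: the Adapted Markov Jump Particle Filter is replaced here by a plain constant-velocity predictor, and the latent vectors are assumed to have already been produced by some VAE encoder. All function names (`predict_next`, `innovation_scores`, `fit_threshold`, `flag_anomalies`) and the `k`-sigma threshold rule are hypothetical choices made for illustration.

```python
import math

def predict_next(prev, curr):
    """Constant-velocity guess for the next latent vector (an assumed stand-in predictor)."""
    return [c + (c - p) for p, c in zip(prev, curr)]

def innovation_scores(latents):
    """Distance between each predicted and observed latent vector, from the third frame on."""
    scores = []
    for t in range(2, len(latents)):
        pred = predict_next(latents[t - 2], latents[t - 1])
        scores.append(math.dist(pred, latents[t]))
    return scores

def fit_threshold(train_scores, k=3.0):
    """Assumed rule: mean plus k standard deviations of scores seen on normal data."""
    mean = sum(train_scores) / len(train_scores)
    var = sum((s - mean) ** 2 for s in train_scores) / len(train_scores)
    return mean + k * math.sqrt(var)

def flag_anomalies(latents, threshold):
    """True wherever the prediction error exceeds the threshold."""
    return [s > threshold for s in innovation_scores(latents)]

# Toy 2-D latent trajectory with a sudden jump at frame 6.
frames = [[float(x), 0.0] for x in (0, 1, 2, 3, 4, 5, 11, 12, 13, 14)]
flags = flag_anomalies(frames, threshold=1.0)
```

In this toy trajectory the jump disturbs two consecutive predictions, because the constant-velocity guess relies on the two previous frames. A filter that tracks uncertainty, as the abstract describes, would handle this differently.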

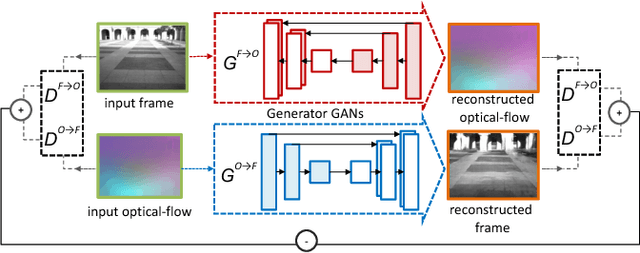

Hierarchy of GANs for learning embodied self-awareness model

Jun 08, 2018

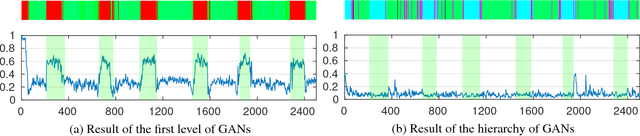

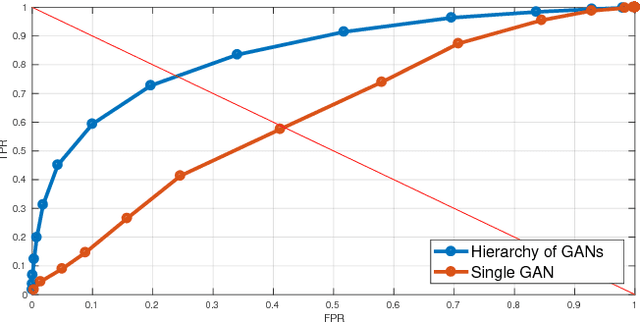

Abstract: In recent years, several architectures have been proposed to learn complex self-awareness models for embodied agents. In this paper, dynamic incremental self-awareness (SA) models are proposed that allow the experiences of an agent to be modeled hierarchically, starting from simpler situations and moving to more structured ones. Each situation is learned from subsets of the agent's private perception data as a model capable of predicting normal behaviors and detecting abnormalities. Hierarchical SA models have already been proposed using low-dimensional sensory inputs. In this work, a hierarchical model is introduced by means of cross-modal Generative Adversarial Networks (GANs) that process high-dimensional visual data. Different levels of the GANs are detected in a self-supervised manner using the decision boundaries of the GAN discriminators. Real experiments on semi-autonomous ground vehicles are presented.

Learning Multi-Modal Self-Awareness Models for Autonomous Vehicles from Human Driving

Jun 07, 2018

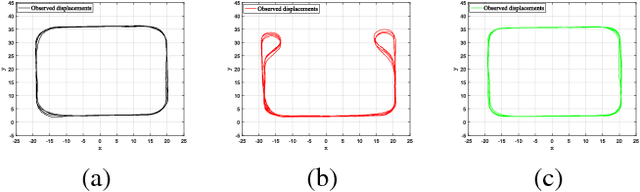

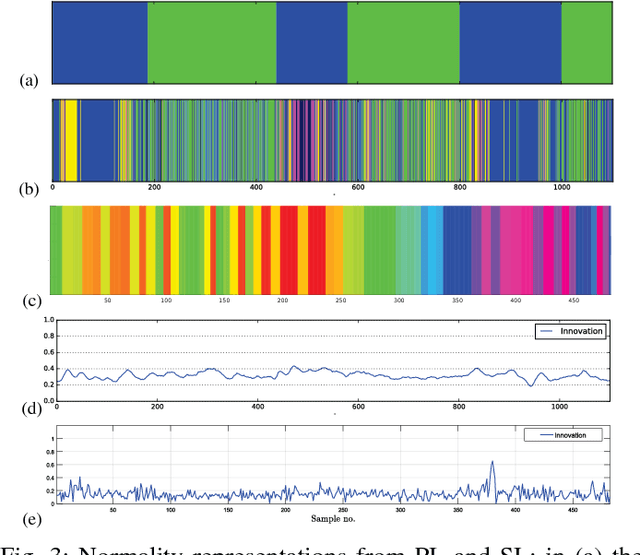

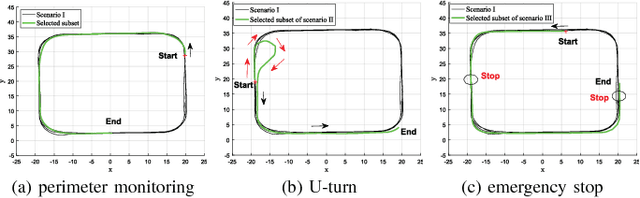

Abstract: This paper presents a novel approach for learning self-awareness models for autonomous vehicles. The proposed technique is based on the availability of synchronized multi-sensor dynamic data related to different maneuvering tasks performed by a human operator. It is shown that different machine learning approaches can be used to first learn single-modality models using coupled Dynamic Bayesian Networks (DBNs); such models are then correlated at the event level to discover contextual multi-modal concepts. In the presented case, visual perception and localization are used as modalities. Cross-correlations among modalities over time are discovered from data and described as probabilistic links connecting shared and private multi-modal DBNs at the event (discrete) level. Results are presented for experiments performed on an autonomous vehicle, highlighting the potential of the proposed approach to enable anomaly detection and autonomous decision making based on learned self-awareness models.
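As a rough illustration of probabilistic links between modalities at the discrete event level, the sketch below estimates conditional probabilities from two synchronized sequences of event labels by simple co-occurrence counting. This is an assumption made for illustration only: the abstract does not say how the links between DBNs are estimated, and the function name `link_probabilities` and the event labels are hypothetical.

```python
from collections import Counter, defaultdict

def link_probabilities(events_a, events_b):
    """Estimate P(event in modality B | event in modality A) by co-occurrence counts.

    Both inputs are assumed to be time-synchronized lists of discrete event labels.
    """
    if len(events_a) != len(events_b):
        raise ValueError("event sequences must be synchronized (same length)")
    joint = Counter(zip(events_a, events_b))   # counts of (a, b) pairs per time step
    marginal = Counter(events_a)               # counts of each event in modality A
    links = defaultdict(dict)
    for (a, b), n in joint.items():
        links[a][b] = n / marginal[a]
    return dict(links)

# Hypothetical labels: localization events paired with visual events.
localization = ["straight", "straight", "turn", "turn"]
visual = ["corridor", "corridor", "corner", "corridor"]
links = link_probabilities(localization, visual)
```

A pair of events that never co-occurred during training has no entry in `links`, so observing such a pair at test time could be treated as a cross-modal anomaly under this simplified scheme.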

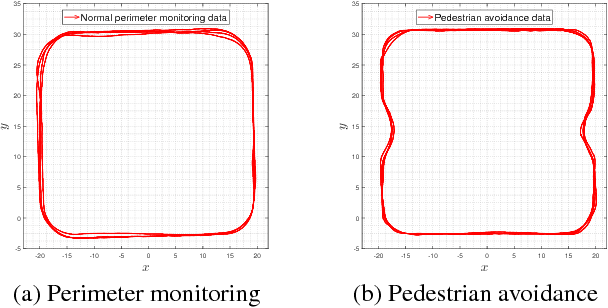

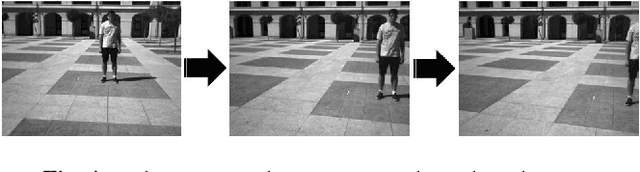

A Multi-perspective Approach To Anomaly Detection For Self-aware Embodied Agents

Mar 17, 2018

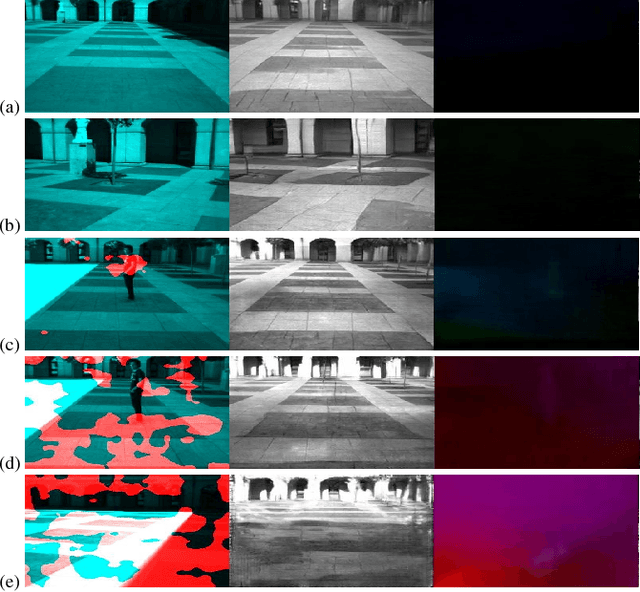

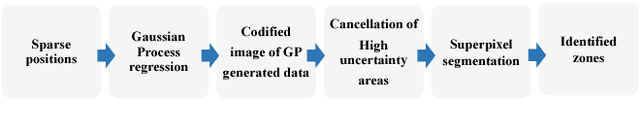

Abstract: This paper focuses on multi-sensor anomaly detection for moving cognitive agents using both external and private first-person visual observations. Both observation types are used to characterize agents' motion in a given environment. The proposed method generates locally uniform motion models by dividing a Gaussian process that approximates agents' displacements in the scene, and provides a Shared Level (SL) self-awareness based on Environment Centered (EC) models. These models are then used to train, in a semi-unsupervised way, a set of Generative Adversarial Networks (GANs) that estimate external and internal parameters of moving agents. The results obtained illustrate the feasibility of using multi-perspective data for predicting and analyzing trajectory information.
