Pablo Garcia-Bringas

On the Inherent Robustness of One-Stage Object Detection against Out-of-Distribution Data

Nov 07, 2024

Abstract:Robustness is a fundamental aspect for developing safe and trustworthy models, particularly when they are deployed in the open world. In this work we analyze the inherent capability of one-stage object detectors to robustly operate in the presence of out-of-distribution (OoD) data. Specifically, we propose a novel detection algorithm for detecting unknown objects in image data, which leverages the features extracted by the model from each sample. Differently from other recent approaches in the literature, our proposal does not require retraining the object detector, thereby allowing for the use of pretrained models. Our proposed OoD detector exploits the application of supervised dimensionality reduction techniques to mitigate the effects of the curse of dimensionality on the features extracted by the model. Furthermore, it utilizes high-resolution feature maps to identify potential unknown objects in an unsupervised fashion. Our experiments analyze the Pareto trade-off between the performance detecting known and unknown objects resulting from different algorithmic configurations and inference confidence thresholds. We also compare the performance of our proposed algorithm to that of logits-based post-hoc OoD methods, as well as possible fusion strategies. Finally, we discuss on the competitiveness of all tested methods against state-of-the-art OoD approaches for object detection models over the recently published Unknown Object Detection benchmark. The obtained results verify that the performance of avant-garde post-hoc OoD detectors can be further improved when combined with our proposed algorithm.

Resilience to the Flowing Unknown: an Open Set Recognition Framework for Data Streams

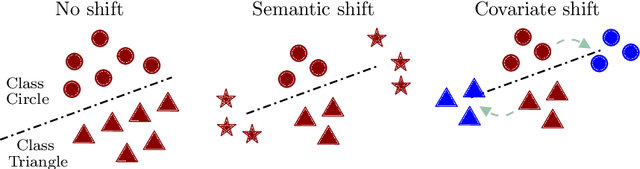

Oct 31, 2024Abstract:Modern digital applications extensively integrate Artificial Intelligence models into their core systems, offering significant advantages for automated decision-making. However, these AI-based systems encounter reliability and safety challenges when handling continuously generated data streams in complex and dynamic scenarios. This work explores the concept of resilient AI systems, which must operate in the face of unexpected events, including instances that belong to patterns that have not been seen during the training process. This is an issue that regular closed-set classifiers commonly encounter in streaming scenarios, as they are designed to compulsory classify any new observation into one of the training patterns (i.e., the so-called \textit{over-occupied space} problem). In batch learning, the Open Set Recognition research area has consistently confronted this issue by requiring models to robustly uphold their classification performance when processing query instances from unknown patterns. In this context, this work investigates the application of an Open Set Recognition framework that combines classification and clustering to address the \textit{over-occupied space} problem in streaming scenarios. Specifically, we systematically devise a benchmark comprising different classification datasets with varying ratios of known to unknown classes. Experiments are presented on this benchmark to compare the performance of the proposed hybrid framework with that of individual incremental classifiers. Discussions held over the obtained results highlight situations where the proposed framework performs best, and delineate the limitations and hurdles encountered by incremental classifiers in effectively resolving the challenges posed by open-world streaming environments.

* 12 pages, 3 figures, an updated version of this article is published in LNAI,volume 14857 as part of the conference proceedings HAIS 2024

On the Improvement of Generalization and Stability of Forward-Only Learning via Neural Polarization

Aug 17, 2024

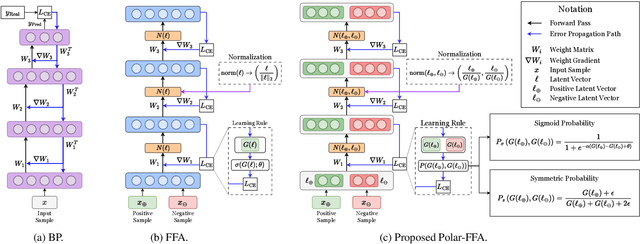

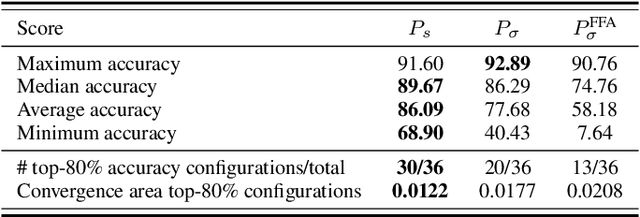

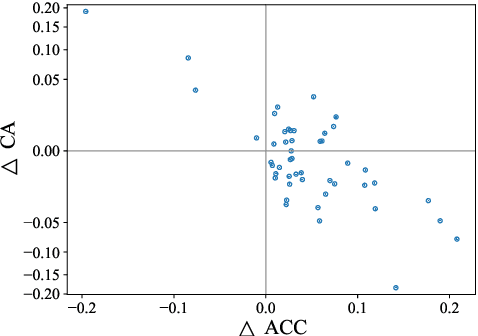

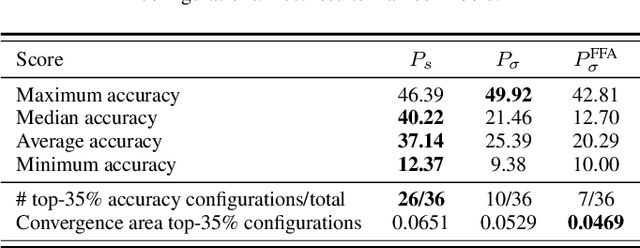

Abstract:Forward-only learning algorithms have recently gained attention as alternatives to gradient backpropagation, replacing the backward step of this latter solver with an additional contrastive forward pass. Among these approaches, the so-called Forward-Forward Algorithm (FFA) has been shown to achieve competitive levels of performance in terms of generalization and complexity. Networks trained using FFA learn to contrastively maximize a layer-wise defined goodness score when presented with real data (denoted as positive samples) and to minimize it when processing synthetic data (corr. negative samples). However, this algorithm still faces weaknesses that negatively affect the model accuracy and training stability, primarily due to a gradient imbalance between positive and negative samples. To overcome this issue, in this work we propose a novel implementation of the FFA algorithm, denoted as Polar-FFA, which extends the original formulation by introducing a neural division (\emph{polarization}) between positive and negative instances. Neurons in each of these groups aim to maximize their goodness when presented with their respective data type, thereby creating a symmetric gradient behavior. To empirically gauge the improved learning capabilities of our proposed Polar-FFA, we perform several systematic experiments using different activation and goodness functions over image classification datasets. Our results demonstrate that Polar-FFA outperforms FFA in terms of accuracy and convergence speed. Furthermore, its lower reliance on hyperparameters reduces the need for hyperparameter tuning to guarantee optimal generalization capabilities, thereby allowing for a broader range of neural network configurations.

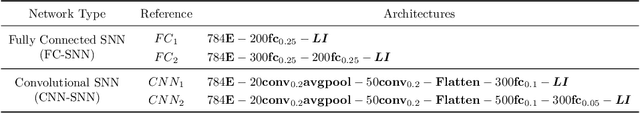

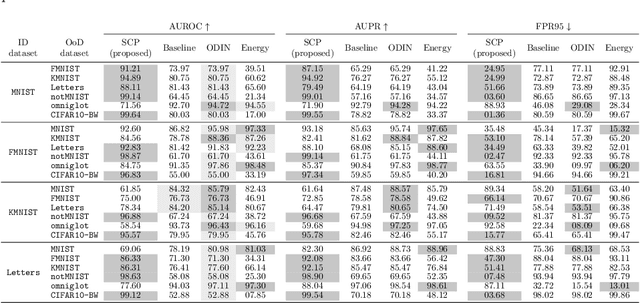

On the Robustness of Fully-Spiking Neural Networks in Open-World Scenarios using Forward-Only Learning Algorithms

Jul 19, 2024Abstract:In the last decade, Artificial Intelligence (AI) models have rapidly integrated into production pipelines propelled by their excellent modeling performance. However, the development of these models has not been matched by advancements in algorithms ensuring their safety, failing to guarantee robust behavior against Out-of-Distribution (OoD) inputs outside their learning domain. Furthermore, there is a growing concern with the sustainability of AI models and their required energy consumption in both training and inference phases. To mitigate these issues, this work explores the use of the Forward-Forward Algorithm (FFA), a biologically plausible alternative to Backpropagation, adapted to the spiking domain to enhance the overall energy efficiency of the model. By capitalizing on the highly expressive topology emerging from the latent space of models trained with FFA, we develop a novel FF-SCP algorithm for OoD Detection. Our approach measures the likelihood of a sample belonging to the in-distribution (ID) data by using the distance from the latent representation of samples to class-representative manifolds. Additionally, to provide deeper insights into our OoD pipeline, we propose a gradient-free attribution technique that highlights the features of a sample pushing it away from the distribution of any class. Multiple experiments using our spiking FFA adaptation demonstrate that the achieved accuracy levels are comparable to those seen in analog networks trained via back-propagation. Furthermore, OoD detection experiments on multiple datasets prove that FF-SCP outperforms avant-garde OoD detectors within the spiking domain in terms of several metrics used in this area. We also present a qualitative analysis of our explainability technique, exposing the precision by which the method detects OoD features, such as embedded artifacts or missing regions.

Managing the unknown: a survey on Open Set Recognition and tangential areas

Dec 14, 2023

Abstract:In real-world scenarios classification models are often required to perform robustly when predicting samples belonging to classes that have not appeared during its training stage. Open Set Recognition addresses this issue by devising models capable of detecting unknown classes from samples arriving during the testing phase, while maintaining a good level of performance in the classification of samples belonging to known classes. This review comprehensively overviews the recent literature related to Open Set Recognition, identifying common practices, limitations, and connections of this field with other machine learning research areas, such as continual learning, out-of-distribution detection, novelty detection, and uncertainty estimation. Our work also uncovers open problems and suggests several research directions that may motivate and articulate future efforts towards more safe Artificial Intelligence methods.

Efficient Object Detection in Autonomous Driving using Spiking Neural Networks: Performance, Energy Consumption Analysis, and Insights into Open-set Object Discovery

Dec 12, 2023

Abstract:Besides performance, efficiency is a key design driver of technologies supporting vehicular perception. Indeed, a well-balanced trade-off between performance and energy consumption is crucial for the sustainability of autonomous vehicles. In this context, the diversity of real-world contexts in which autonomous vehicles can operate motivates the need for empowering perception models with the capability to detect, characterize and identify newly appearing objects by themselves. In this manuscript we elaborate on this threefold conundrum (performance, efficiency and open-world learning) for object detection modeling tasks over image data collected from vehicular scenarios. Specifically, we show that well-performing and efficient models can be realized by virtue of Spiking Neural Networks (SNNs), reaching competitive levels of detection performance when compared to their non-spiking counterparts at dramatic energy consumption savings (up to 85%) and a slightly improved robustness against image noise. Our experiments herein offered also expose qualitatively the complexity of detecting new objects based on the preliminary results of a simple approach to discriminate potential object proposals in the captured image.

A Novel Explainable Out-of-Distribution Detection Approach for Spiking Neural Networks

Sep 30, 2022

Abstract:Research around Spiking Neural Networks has ignited during the last years due to their advantages when compared to traditional neural networks, including their efficient processing and inherent ability to model complex temporal dynamics. Despite these differences, Spiking Neural Networks face similar issues than other neural computation counterparts when deployed in real-world settings. This work addresses one of the practical circumstances that can hinder the trustworthiness of this family of models: the possibility of querying a trained model with samples far from the distribution of its training data (also referred to as Out-of-Distribution or OoD data). Specifically, this work presents a novel OoD detector that can identify whether test examples input to a Spiking Neural Network belong to the distribution of the data over which it was trained. For this purpose, we characterize the internal activations of the hidden layers of the network in the form of spike count patterns, which lay a basis for determining when the activations induced by a test instance is atypical. Furthermore, a local explanation method is devised to produce attribution maps revealing which parts of the input instance push most towards the detection of an example as an OoD sample. Experimental results are performed over several image classification datasets to compare the proposed detector to other OoD detection schemes from the literature. As the obtained results clearly show, the proposed detector performs competitively against such alternative schemes, and produces relevance attribution maps that conform to expectations for synthetically created OoD instances.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge