Noel Teku

Speeding up Speculative Decoding via Approximate Verification

Feb 06, 2025

Abstract: Speculative Decoding (SD) is a recently proposed technique for faster inference using Large Language Models (LLMs). SD uses a smaller draft LLM to autoregressively generate a sequence of tokens and a larger target LLM to verify them in parallel, ensuring statistical consistency. However, the periodic parallel calls to the target LLM for verification prevent SD from achieving even lower latencies. We propose SPRINTER, which uses a low-complexity verifier trained to predict whether tokens generated by a draft LLM would be accepted by the target LLM. Because SPRINTER performs approximate sequential verification, it does not require the target LLM to verify every token; the target LLM is invoked only when a token is deemed unacceptable. This reduces the number of calls to the larger LLM and can yield further speedups. We present a theoretical analysis of SPRINTER, examining the statistical properties of the generated tokens as well as the expected reduction in latency as a function of the verifier. We evaluate SPRINTER on several datasets and model pairs, demonstrating that approximate verification can maintain high-quality generation while further reducing latency. For instance, on the Wiki-Summaries dataset, SPRINTER achieves a 1.7x latency speedup and requires 8.3x fewer FLOPs relative to SD, while still generating high-quality responses when using GPT2-Small and GPT2-XL as the draft/target models.
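
The sequential, verifier-gated loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not SPRINTER itself: the draft model, target model, and verifier are hypothetical toy callables, and the rule that the target's own token replaces a rejected draft token is an assumption made for simplicity.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-ins for the draft LLM, target LLM, and trained verifier.
DraftFn = Callable[[List[int]], int]
TargetFn = Callable[[List[int]], int]
VerifierFn = Callable[[List[int], int], float]


def approx_verified_decode(prefix: List[int], draft: DraftFn, target: TargetFn,
                           verifier: VerifierFn, num_tokens: int,
                           threshold: float = 0.5) -> Tuple[List[int], int]:
    """Sequentially check each draft token with a cheap verifier.

    The target model is called only when the verifier scores a draft token
    below `threshold`; the target's token then replaces the rejected one
    (an assumption). Returns the generated tokens and the number of target calls.
    """
    tokens = list(prefix)
    target_calls = 0
    for _ in range(num_tokens):
        candidate = draft(tokens)                # cheap autoregressive proposal
        if verifier(tokens, candidate) >= threshold:
            tokens.append(candidate)             # approximately accepted, no target call
        else:
            target_calls += 1                    # rejected: fall back to the target model
            tokens.append(target(tokens))
    return tokens[len(prefix):], target_calls


# Toy models over a 10-token vocabulary, for illustration only.
def toy_target(tokens: List[int]) -> int:
    return (tokens[-1] + 1) % 10


def toy_draft(tokens: List[int]) -> int:
    # Deliberately wrong whenever the context length is a multiple of 4.
    step = 2 if len(tokens) % 4 == 0 else 1
    return (tokens[-1] + step) % 10


def toy_verifier(tokens: List[int], candidate: int) -> float:
    # Oracle-like score; a real verifier would be a small trained model.
    return 1.0 if candidate == toy_target(tokens) else 0.0


out, calls = approx_verified_decode([0], toy_draft, toy_target, toy_verifier, 8)
print(out, calls)  # [1, 2, 3, 4, 5, 6, 7, 8] 2
```

Because the toy verifier is perfect, the output matches the target's sequence while the target is called twice instead of eight times. A learned verifier would occasionally accept tokens the target would have rejected; that is the approximation whose statistical effect the abstract says is analyzed.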

Latency-Distortion Tradeoffs in Communicating Classification Results over Noisy Channels

Apr 22, 2024

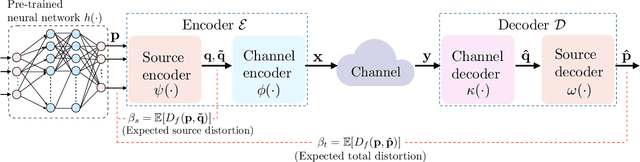

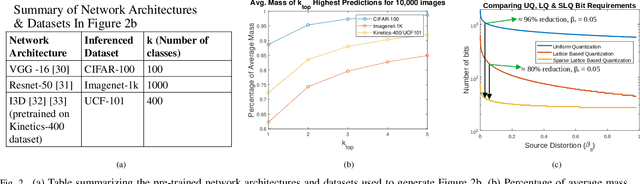

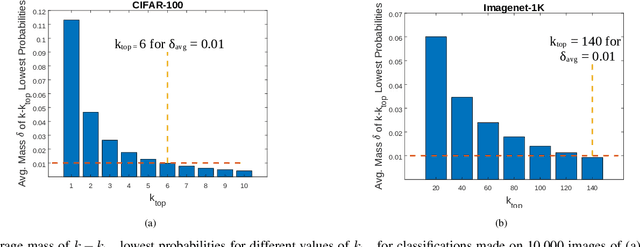

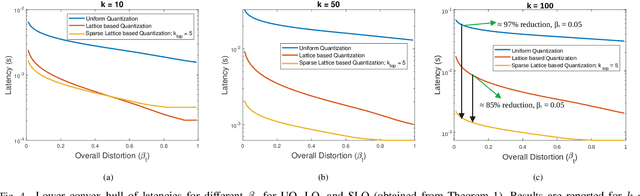

Abstract: In this work, the problem of communicating the decisions of a classifier over a noisy channel is considered. With machine learning based models being used in a variety of time-sensitive applications, transmitting these decisions reliably and in a timely manner is of significant importance. To this end, we study the scenario where a probability vector (representing the decisions of a classifier) at the transmitter needs to be transmitted over a noisy channel. Assuming that the distortion between the original probability vector and the one reconstructed at the receiver is measured via an f-divergence, we study the tradeoff between transmission latency and distortion. We completely analyze this tradeoff using uniform, lattice, and sparse lattice-based quantization techniques to encode the probability vector, by first characterizing the bit budget of each technique given a requirement on the allowed source distortion. These bounds are then combined with results from the finite-blocklength literature to provide a framework for analyzing how both quantization distortion and distortion due to decoding error probability (i.e., channel effects) affect the incurred transmission latency. Our results show an interesting interplay between source distortion (i.e., the distortion of the probability vector measured via f-divergence) and the subsequent channel encoding/decoding parameters, and indicate that jointly designing these parameters is crucial for navigating the latency-distortion tradeoff. We study the impact of varying different parameters (e.g., number of classes, SNR, source distortion) on the latency-distortion tradeoff and perform experiments on AWGN and fading channels. Our results indicate that sparse lattice-based quantization is the most effective at minimizing latency across various regimes and for sparse, high-dimensional probability vectors (i.e., a high number of classes).
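
The pipeline above (quantize the probability vector, measure distortion with an f-divergence, then convert the resulting bit budget into channel uses) can be sketched end to end. The quantizer here is one simple uniform scheme chosen for illustration and may differ from the paper's construction; KL divergence is used as the example f-divergence; and blocklength is estimated with the well-known finite-blocklength normal approximation for the AWGN channel, with the O(log n) term dropped. All function names are hypothetical.

```python
import math
from statistics import NormalDist
from typing import Callable, List


def uniform_quantize(p: List[float], levels: int) -> List[float]:
    """Round each entry to a count in {1, ..., levels}, then renormalize.

    Flooring at one count keeps every q_i > 0 so the KL divergence stays finite.
    """
    counts = [max(round(x * levels), 1) for x in p]
    total = sum(counts)
    return [c / total for c in counts]


def f_divergence(p: List[float], q: List[float], f: Callable[[float], float]) -> float:
    """D_f(p || q) = sum_i q_i * f(p_i / q_i); assumes every q_i > 0."""
    return sum(qi * f(pi / qi) for pi, qi in zip(p, q))


def kl_generator(t: float) -> float:
    # f(t) = t ln t yields the KL divergence (in nats); 0 ln 0 is taken as 0.
    return t * math.log(t) if t > 0 else 0.0


def bit_budget(num_classes: int, levels: int) -> int:
    # Fixed-length index per class: each count takes one of `levels` values.
    return num_classes * math.ceil(math.log2(levels))


def awgn_blocklength(k_bits: int, snr: float, eps: float) -> int:
    """Smallest n with n*C - sqrt(n*V) * Qinv(eps) >= k_bits (assumes eps < 0.5)."""
    c = 0.5 * math.log2(1 + snr)                                          # capacity, bits/use
    v = snr * (snr + 2) / (2 * (snr + 1) ** 2) * math.log2(math.e) ** 2  # dispersion, bits^2
    q_inv = NormalDist().inv_cdf(1 - eps)                                 # Q^{-1}(eps)
    n = 1
    while n * c - math.sqrt(n * v) * q_inv < k_bits:
        n += 1
    return n


p = [0.7, 0.2, 0.1]
for levels in (4, 16, 64):
    q = uniform_quantize(p, levels)
    k = bit_budget(len(p), levels)
    print(levels, round(f_divergence(p, q, kl_generator), 5), k,
          awgn_blocklength(k, snr=1.0, eps=1e-3))
```

Finer quantization lowers the source distortion but raises the bit budget and hence the required blocklength, which is the kind of latency-distortion interplay the abstract describes; the decoding error probability `eps` adds a second, channel-side knob.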

Adversarial Filters for Secure Modulation Classification

Aug 15, 2020

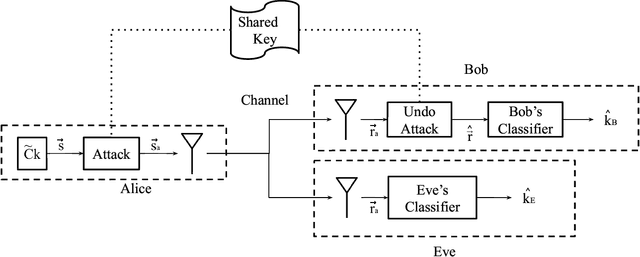

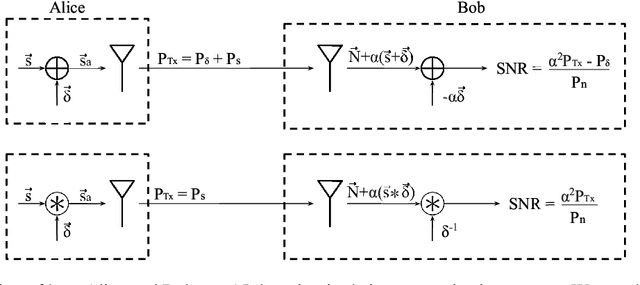

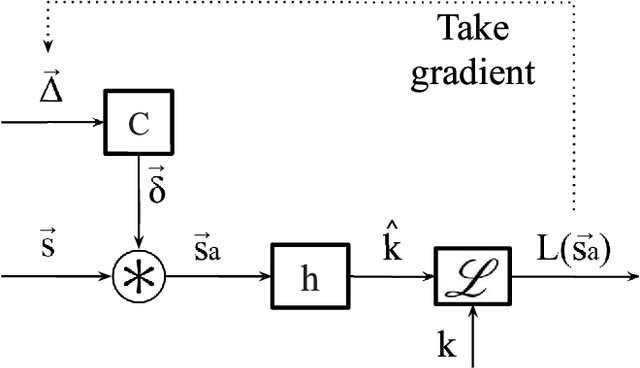

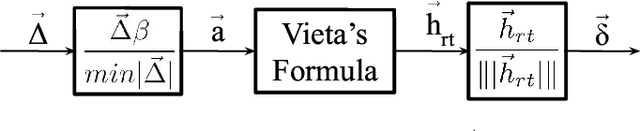

Abstract: Modulation Classification (MC) refers to the problem of identifying the modulation class of a wireless signal. In the wireless communications pipeline, MC is the first operation performed on the received signal and is critical for reliable decoding. This paper considers the problem of secure modulation classification, where a transmitter (Alice) wants to maximize MC accuracy at a legitimate receiver (Bob) while minimizing MC accuracy at an eavesdropper (Eve). The contribution of this work is the design of novel adversarial learning techniques for secure MC. In particular, we present adversarial filtering based algorithms for secure MC, in which Alice uses a carefully designed adversarial filter to mask the transmitted signal so as to maximize MC accuracy at Bob while minimizing MC accuracy at Eve. We present two filtering based algorithms, namely a gradient ascent filter (GAF) and a fast gradient filter method (FGFM), with varying levels of complexity. Our proposed adversarial filtering based approaches significantly outperform additive adversarial perturbations (used in the traditional ML community and in other prior works on secure MC) and also have several other desirable properties. In particular, the GAF and FGFM algorithms are a) computationally efficient (they allow fast decoding at Bob), b) power-efficient (they do not require excessive transmit power at Alice), and c) SNR-efficient (i.e., they perform well even at low SNR values at Bob).
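
The abstract does not spell out GAF or FGFM, so the following is only a generic sketch of the adversarial-filtering idea: Alice passes a real-valued toy signal through a short FIR filter and runs projected gradient ascent on the filter taps to raise a toy eavesdropper classifier's loss while keeping the legitimate receiver's loss low. The linear "classifiers" `w_bob` and `w_eve`, the unit-energy tap constraint (a crude stand-in for a power constraint), and the finite-difference gradient are all assumptions for illustration, not the paper's algorithms.

```python
import math
from typing import List, Sequence


def fir_filter(x: Sequence[float], h: Sequence[float]) -> List[float]:
    """Causal FIR filter y[n] = sum_k h[k] * x[n - k], truncated to len(x)."""
    return [sum(h[k] * x[n - k] for k in range(len(h)) if n - k >= 0)
            for n in range(len(x))]


def logistic_loss(w: Sequence[float], y: Sequence[float], label: int) -> float:
    """Binary cross-entropy of a toy linear classifier with weights w."""
    z = sum(wi * yi for wi, yi in zip(w, y))
    p = 1.0 / (1.0 + math.exp(-z))
    p = min(max(p, 1e-12), 1.0 - 1e-12)  # guard the logarithm
    return -math.log(p) if label == 1 else -math.log(1.0 - p)


def objective(h, x, label, w_bob, w_eve) -> float:
    # Alice wants a high loss at Eve and a low loss at Bob.
    y = fir_filter(x, h)
    return logistic_loss(w_eve, y, label) - logistic_loss(w_bob, y, label)


def unit_energy(h: Sequence[float]) -> List[float]:
    # Project the taps onto unit energy so the filter cannot simply scale up the signal.
    norm = math.sqrt(sum(t * t for t in h))
    return [t / norm for t in h]


def gradient_ascent_filter(x, label, w_bob, w_eve, taps=3, steps=200,
                           lr=0.1, delta=1e-6):
    """Projected gradient ascent on the taps, using a forward-difference gradient."""
    h = unit_energy([1.0] + [0.0] * (taps - 1))  # start from the identity filter
    best_h, best_val = h, objective(h, x, label, w_bob, w_eve)
    for _ in range(steps):
        base = objective(h, x, label, w_bob, w_eve)
        grad = []
        for i in range(taps):
            bumped = list(h)
            bumped[i] += delta
            grad.append((objective(bumped, x, label, w_bob, w_eve) - base) / delta)
        h = unit_energy([hi + lr * gi for hi, gi in zip(h, grad)])
        val = objective(h, x, label, w_bob, w_eve)
        if val > best_val:  # keep the best filter seen so far
            best_h, best_val = h, val
    return best_h, best_val


x = [1.0, 0.5, -0.5, 1.0]        # toy real-valued signal samples
w_bob = [1.0, 1.0, 1.0, 1.0]     # hypothetical classifier weights at Bob
w_eve = [1.0, -1.0, 1.0, -1.0]   # hypothetical classifier weights at Eve
start = objective([1.0, 0.0, 0.0], x, 1, w_bob, w_eve)
h, val = gradient_ascent_filter(x, 1, w_bob, w_eve)
print(start, val, h)
```

Filtering, rather than adding a perturbation, means Alice only reshapes the signal she already sends, which is one intuition for why a filter can mask the modulation without extra transmit power; a realistic version would use complex baseband samples and trained neural classifiers.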