Nicholas Potteiger

Training RL Agents for Multi-Objective Network Defense Tasks

May 28, 2025

Abstract:Open-ended learning (OEL) -- which emphasizes training agents that achieve broad capability over narrow competency -- is emerging as a paradigm to develop artificial intelligence (AI) agents to achieve robustness and generalization. However, despite promising results that demonstrate the benefits of OEL, applying OEL to develop autonomous agents for real-world cybersecurity applications remains a challenge. We propose a training approach, inspired by OEL, to develop autonomous network defenders. Our results demonstrate that like in other domains, OEL principles can translate into more robust and generalizable agents for cyber defense. To apply OEL to network defense, it is necessary to address several technical challenges. Most importantly, it is critical to provide a task representation approach over a broad universe of tasks that maintains a consistent interface over goals, rewards and action spaces. This way, the learning agent can train with varying network conditions, attacker behaviors, and defender goals while being able to build on previously gained knowledge. With our tools and results, we aim to fundamentally impact research that applies AI to solve cybersecurity problems. Specifically, as researchers develop gyms and benchmarks for cyber defense, it is paramount that they consider diverse tasks with consistent representations, such as those we propose in our work.

Out-of-Distribution Detection for Neurosymbolic Autonomous Cyber Agents

Dec 03, 2024

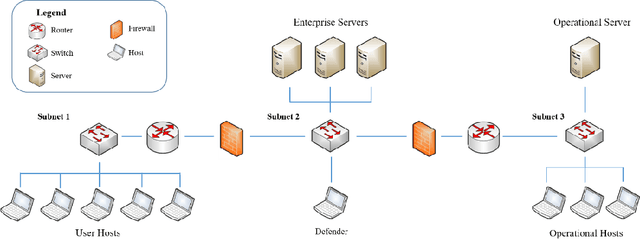

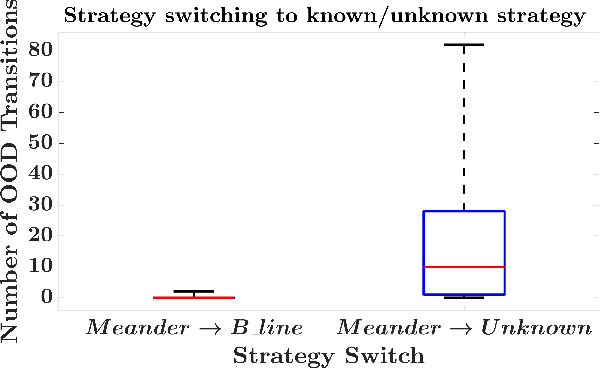

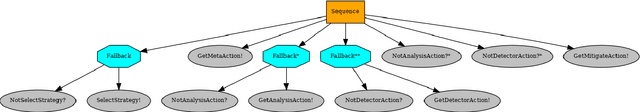

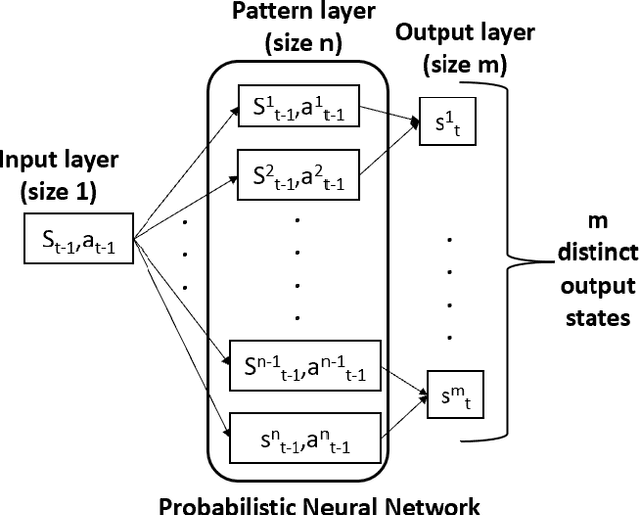

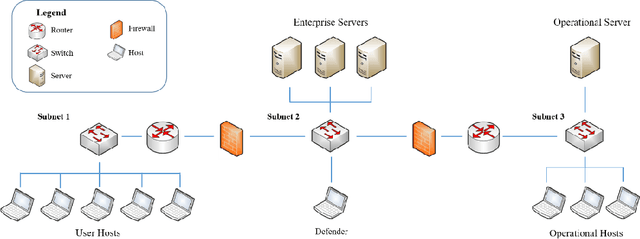

Abstract:Autonomous agents for cyber applications take advantage of modern defense techniques by adopting intelligent agents with conventional and learning-enabled components. These intelligent agents are trained via reinforcement learning (RL) algorithms, and can learn, adapt to, reason about and deploy security rules to defend networked computer systems while maintaining critical operational workflows. However, the knowledge available during training about the state of the operational network and its environment may be limited. The agents should be trustworthy so that they can reliably detect situations they cannot handle, and hand them over to cyber experts. In this work, we develop an out-of-distribution (OOD) Monitoring algorithm that uses a Probabilistic Neural Network (PNN) to detect anomalous or OOD situations of RL-based agents with discrete states and discrete actions. To demonstrate the effectiveness of the proposed approach, we integrate the OOD monitoring algorithm with a neurosymbolic autonomous cyber agent that uses behavior trees with learning-enabled components. We evaluate the proposed approach in a simulated cyber environment under different adversarial strategies. Experimental results over a large number of episodes illustrate the overall efficiency of our proposed approach.

Verification of Behavior Trees with Contingency Monitors

Nov 21, 2024

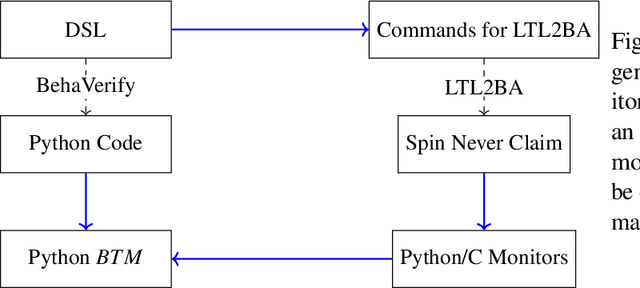

Abstract:Behavior Trees (BTs) are high level controllers that have found use in a wide range of robotics tasks. As they grow in popularity and usage, it is crucial to ensure that the appropriate tools and methods are available for ensuring they work as intended. To that end, we created a new methodology by which to create Runtime Monitors for BTs. These monitors can be used by the BT to correct when undesirable behavior is detected and are capable of handling LTL specifications. We demonstrate that in terms of runtime, the generated monitors are on par with monitors generated by existing tools and highlight certain features that make our method more desirable in various situations. We note that our method allows for our monitors to be swapped out with alternate monitors with fairly minimal user effort. Finally, our method ties in with our existing tool, BehaVerify, allowing for the verification of BTs with monitors.

* In Proceedings FMAS2024, arXiv:2411.13215

Designing Robust Cyber-Defense Agents with Evolving Behavior Trees

Oct 21, 2024

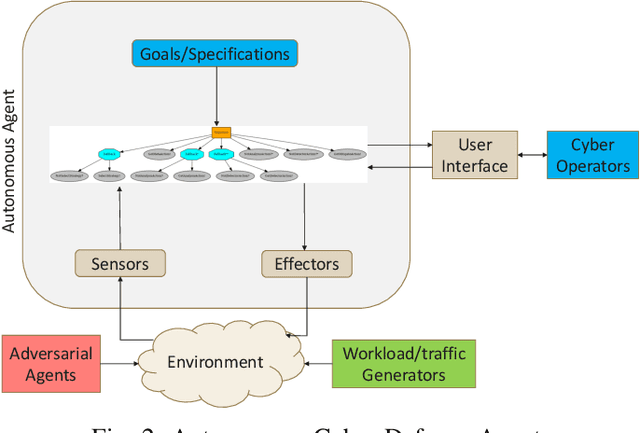

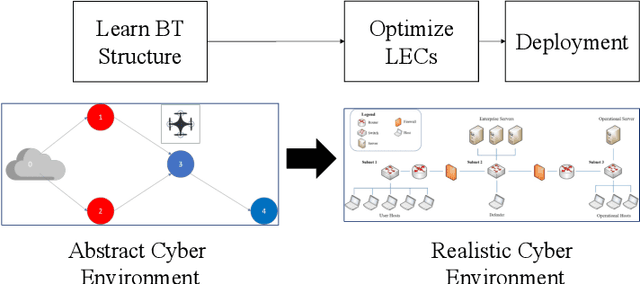

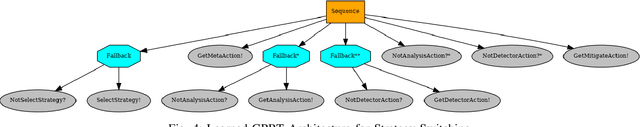

Abstract:Modern network defense can benefit from the use of autonomous systems, offloading tedious and time-consuming work to agents with standard and learning-enabled components. These agents, operating on critical network infrastructure, need to be robust and trustworthy to ensure defense against adaptive cyber-attackers and, simultaneously, provide explanations for their actions and network activity. However, learning-enabled components typically use models, such as deep neural networks, that are not transparent in their high-level decision-making leading to assurance challenges. Additionally, cyber-defense agents must execute complex long-term defense tasks in a reactive manner that involve coordination of multiple interdependent subtasks. Behavior trees are known to be successful in modelling interpretable, reactive, and modular agent policies with learning-enabled components. In this paper, we develop an approach to design autonomous cyber defense agents using behavior trees with learning-enabled components, which we refer to as Evolving Behavior Trees (EBTs). We learn the structure of an EBT with a novel abstract cyber environment and optimize learning-enabled components for deployment. The learning-enabled components are optimized for adapting to various cyber-attacks and deploying security mechanisms. The learned EBT structure is evaluated in a simulated cyber environment, where it effectively mitigates threats and enhances network visibility. For deployment, we develop a software architecture for evaluating EBT-based agents in computer network defense scenarios. Our results demonstrate that the EBT-based agent is robust to adaptive cyber-attacks and provides high-level explanations for interpreting its decisions and actions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge