Moumita Choudhury

Proceedings of 1st Workshop on Advancing Artificial Intelligence through Theory of Mind

Apr 28, 2025

Abstract: This volume includes a selection of papers presented at the Workshop on Advancing Artificial Intelligence through Theory of Mind, held at AAAI 2025 in Philadelphia, US, on 3rd March 2025. The purpose of this volume is to provide an open-access, curated anthology for the ToM and AI research community.

A Particle Swarm Inspired Approach for Continuous Distributed Constraint Optimization Problems

Oct 20, 2020

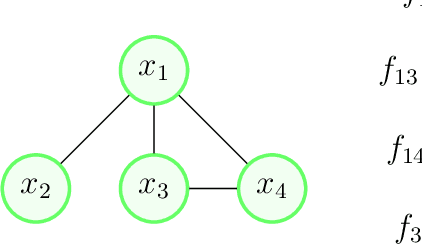

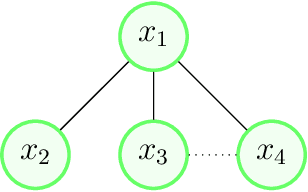

Abstract: Distributed Constraint Optimization Problems (DCOPs) are a widely studied framework for coordinating interactions in cooperative multi-agent systems. In classical DCOPs, variables owned by agents are assumed to be discrete. However, in many applications, such as target tracking or sleep scheduling in sensor networks, continuous-valued variables are more suitable than discrete ones. To better model such applications, researchers have proposed Continuous DCOPs (C-DCOPs), an extension of DCOPs that can explicitly model problems with continuous variables. The state-of-the-art approaches for solving C-DCOPs incur either onerous memory or computation overhead and are unsuitable for non-differentiable optimization problems. To address this issue, we propose a new C-DCOP algorithm, namely Particle Swarm Optimization Based C-DCOP (PCD), which is inspired by Particle Swarm Optimization (PSO), a well-known centralized population-based approach for solving continuous optimization problems. In recent years, population-based algorithms have gained significant attention in classical DCOPs due to their ability to produce high-quality solutions. Nonetheless, to the best of our knowledge, this class of algorithms has not been used to solve C-DCOPs, and no work has evaluated the potential of PSO in solving classical DCOPs or C-DCOPs. In light of this observation, we adapted PSO, a centralized algorithm, to solve C-DCOPs in a decentralized manner. The resulting PCD algorithm not only produces good-quality solutions but also finds them without requiring any derivative calculations. Moreover, we design a crossover operator that PCD can use to further improve the quality of the solutions found. Finally, we theoretically prove that PCD is an anytime algorithm and empirically evaluate PCD against the state-of-the-art C-DCOP algorithms on a wide variety of benchmarks.
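For readers unfamiliar with PSO, the following is a minimal sketch of the textbook centralized algorithm that the abstract names as PCD's inspiration. It is not the PCD algorithm itself: the function name `pso_minimize`, its parameters, and the coefficient values are illustrative assumptions, and nothing here is distributed across agents. It does show the two properties the abstract highlights: the update uses only cost evaluations (no derivatives), and the best cost found so far never gets worse over iterations.

```python
import random

def pso_minimize(cost, bounds, n_particles=20, n_iters=100,
                 inertia=0.7, c_personal=1.5, c_global=1.5, seed=0):
    """Textbook centralized PSO over a box (illustrative, not PCD)."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Random initial positions inside the bounds, zero initial velocities.
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                 # best position seen by each particle
    pbest_cost = [cost(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_cost[i])
    gbest, gbest_cost = pbest[g][:], pbest_cost[g]
    history = [gbest_cost]                      # best-so-far cost after each iteration
    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity mixes momentum, pull to personal best, pull to global best.
                vel[i][d] = (inertia * vel[i][d]
                             + c_personal * r1 * (pbest[i][d] - pos[i][d])
                             + c_global * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            c = cost(pos[i])                    # only function values are needed
            if c < pbest_cost[i]:
                pbest[i], pbest_cost[i] = pos[i][:], c
                if c < gbest_cost:
                    gbest, gbest_cost = pos[i][:], c
        history.append(gbest_cost)
    return gbest, gbest_cost, history

# Usage on a non-differentiable cost (hypothetical example function).
best, best_cost, history = pso_minimize(
    lambda x: abs(x[0] - 1.0) + abs(x[1] + 2.0), [(-5.0, 5.0), (-5.0, 5.0)])
```

Because `history` records the best cost found so far, it is non-increasing by construction, which is the sense of "anytime" this sketch illustrates.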

C-CoCoA: A Continuous Cooperative Constraint Approximation Algorithm to Solve Functional DCOPs

Feb 27, 2020

Abstract: Distributed Constraint Optimization Problems (DCOPs) have been widely used to coordinate interactions (i.e. constraints) in cooperative multi-agent systems. The traditional DCOP model assumes that variables owned by the agents can take only discrete values and that constraints' cost functions are defined for every possible value assignment of a set of variables. While this formulation is often reasonable, there are many applications where the variables are continuous decision variables and the constraints are in functional form. To overcome this limitation, the Functional DCOP (F-DCOP) model has been proposed, which can model problems with continuous variables. The existing F-DCOP algorithms incur huge computation and communication overhead. This paper applies continuous non-linear optimization methods to the Cooperative Constraint Approximation (CoCoA) algorithm. We empirically show that our algorithm provides high-quality solutions with lower communication cost and execution time than the existing F-DCOP algorithms.

Learning Optimal Temperature Region for Solving Mixed Integer Functional DCOPs

Feb 27, 2020

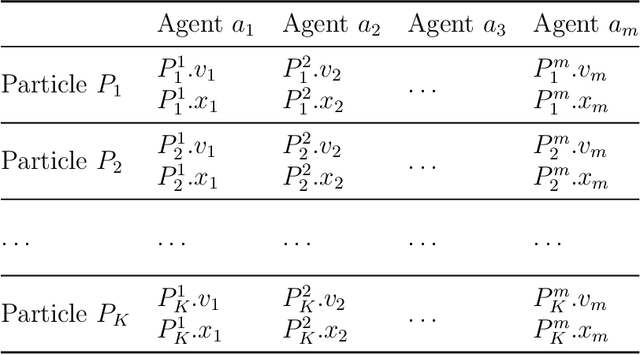

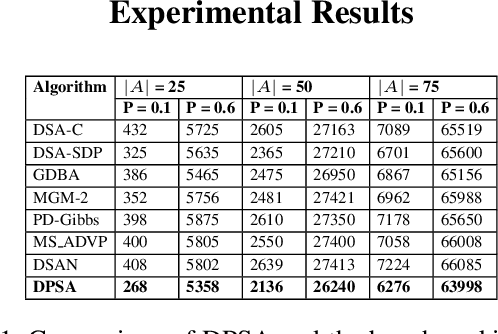

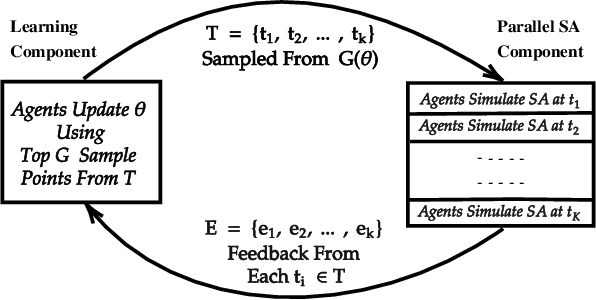

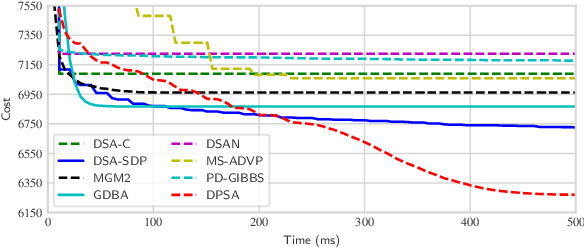

Abstract: Distributed Constraint Optimization Problems (DCOPs) are an important framework for modeling coordinated decision-making problems in multi-agent systems with a set of discrete variables. Later work has extended this framework to model problems with a set of continuous variables (F-DCOPs). In this paper, we combine both of these models into the Mixed Integer Functional DCOP (MIF-DCOP) model, which can handle problems regardless of their variables' types. We then propose a novel algorithm, called Distributed Parallel Simulated Annealing (DPSA), in which agents cooperatively learn the optimal parameter configuration for the algorithm while also solving the given problem using the learned knowledge. Finally, we empirically benchmark our approach in DCOP, F-DCOP and MIF-DCOP settings and show that DPSA produces solutions of significantly better quality than the state-of-the-art non-exact algorithms in each corresponding setting.
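As background for the abstract above, here is a minimal single-process sketch of plain simulated annealing over a mix of integer and continuous variables. It is not DPSA: it is neither distributed nor parallel, and it uses a fixed geometric cooling schedule rather than learning a temperature region. The name `anneal_mixed`, its parameters, and the proposal step size are illustrative assumptions.

```python
import math
import random

def anneal_mixed(cost, int_ranges, real_bounds, n_iters=2000,
                 t_start=1.0, t_end=1e-3, seed=0):
    """Plain simulated annealing on mixed variables (illustrative, not DPSA)."""
    rng = random.Random(seed)
    ints = [rng.randint(lo, hi) for lo, hi in int_ranges]
    reals = [rng.uniform(lo, hi) for lo, hi in real_bounds]
    cur = cost(ints, reals)
    best = (ints[:], reals[:], cur)
    for k in range(n_iters):
        # Fixed geometric cooling from t_start down to t_end (an assumption).
        t = t_start * (t_end / t_start) ** (k / max(1, n_iters - 1))
        new_ints, new_reals = ints[:], reals[:]
        j = rng.randrange(len(ints) + len(reals))   # perturb one variable
        if j < len(ints):
            lo, hi = int_ranges[j]
            new_ints[j] = rng.randint(lo, hi)       # resample a discrete value
        else:
            j -= len(ints)
            lo, hi = real_bounds[j]
            step = 0.1 * (hi - lo)                  # Gaussian step, clipped to bounds
            new_reals[j] = min(max(reals[j] + rng.gauss(0.0, step), lo), hi)
        new = cost(new_ints, new_reals)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if new <= cur or rng.random() < math.exp((cur - new) / t):
            ints, reals, cur = new_ints, new_reals, new
            if cur < best[2]:
                best = (ints[:], reals[:], cur)
    return best

# Usage with one integer and one continuous variable (hypothetical cost).
best_ints, best_reals, best_cost = anneal_mixed(
    lambda i, r: (i[0] - 3) ** 2 + abs(r[0] - 0.5), [(0, 10)], [(0.0, 1.0)])
```

The temperature controls how often worse moves are accepted, so the quality of the result depends on the schedule; the abstract's point is that DPSA has agents learn this kind of parameter configuration rather than fixing it by hand as done here.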

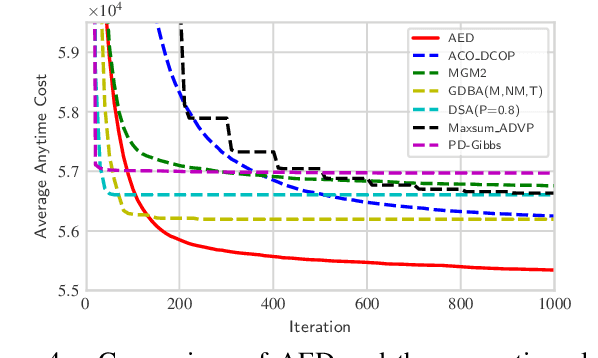

AED: An Anytime Evolutionary DCOP Algorithm

Sep 13, 2019

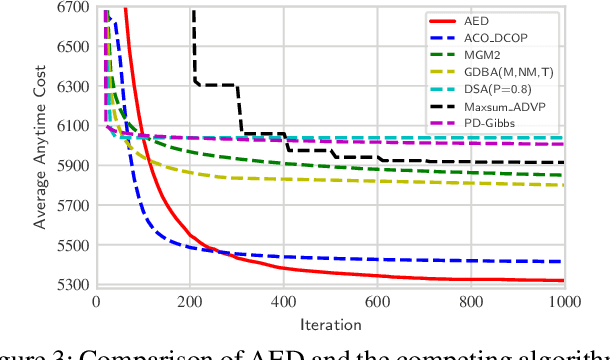

Abstract: Evolutionary optimization is a generic population-based metaheuristic that can be adapted to solve a wide variety of optimization problems and has proven very effective for combinatorial optimization problems. However, the potential of this metaheuristic has not been exploited in Distributed Constraint Optimization Problems (DCOPs), a well-known class of combinatorial optimization problems. In this paper, we present a new population-based algorithm, namely Anytime Evolutionary DCOP (AED), that adapts evolutionary optimization to solve DCOPs. In AED, the agents cooperatively construct an initial set of random solutions and gradually improve them through a new mechanism that considers the optimistic approximation of local benefits. Moreover, we propose a new anytime update mechanism for AED that identifies the best among a distributed set of candidate solutions and notifies all the agents when a new best is found. In our theoretical analysis, we prove that AED is anytime. Finally, we present empirical results indicating that AED outperforms the state-of-the-art DCOP algorithms in terms of solution quality.
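To make the setting concrete, the sketch below runs a generic centralized evolutionary loop on a DCOP-style objective: discrete variables, binary constraints, and a global cost equal to the sum of constraint costs. It is not AED: there are no agents or messages, and selection, crossover, and mutation are standard textbook choices rather than the paper's mechanisms. The name `evolve`, its parameters, and the data layout are illustrative assumptions.

```python
import random

def evolve(domains, constraints, pop_size=20, n_gens=50,
           mutation_rate=0.1, seed=0):
    """Generic evolutionary search on a DCOP-style cost (illustrative, not AED).

    domains: list of value lists, one per variable.
    constraints: dict mapping (i, j) to a function f(vi, vj) -> cost.
    """
    rng = random.Random(seed)

    def total_cost(assign):
        # Global cost is the sum of all binary constraint costs.
        return sum(f(assign[i], assign[j]) for (i, j), f in constraints.items())

    # Initial population of random complete assignments.
    pop = [[rng.choice(d) for d in domains] for _ in range(pop_size)]
    best = min(pop, key=total_cost)[:]
    best_cost = total_cost(best)
    history = [best_cost]                       # best-so-far cost per generation
    for _ in range(n_gens):
        pop.sort(key=total_cost)
        parents = pop[: pop_size // 2]          # truncation selection
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            child = [rng.choice(pair) for pair in zip(a, b)]  # uniform crossover
            for v, d in enumerate(domains):
                if rng.random() < mutation_rate:
                    child[v] = rng.choice(d)    # random-reset mutation
            children.append(child)
        pop = parents + children
        cand = min(pop, key=total_cost)
        c = total_cost(cand)
        if c < best_cost:                       # record only strict improvements
            best, best_cost = cand[:], c
        history.append(best_cost)
    return best, best_cost, history

# Usage: 3-coloring of a triangle, cost 1 for each pair with equal colors.
clash = lambda a, b: 1 if a == b else 0
best, best_cost, history = evolve(
    [[0, 1, 2]] * 3, {(0, 1): clash, (1, 2): clash, (0, 2): clash})
```

Tracking `best` separately from the population is what makes the loop anytime in the informal sense: stopping at any generation returns a solution at least as good as any earlier stopping point. In a distributed setting, agreeing on that global best is the non-trivial part, which is what the abstract's anytime update mechanism addresses.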
