Mohammad Saeid Anwar

HeteroEdge: Addressing Asymmetry in Heterogeneous Collaborative Autonomous Systems

May 05, 2023

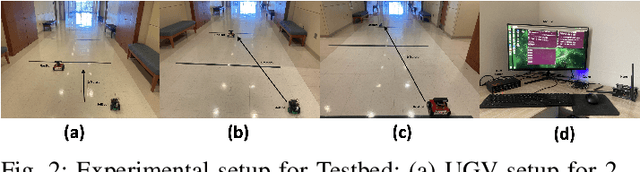

Abstract:Gathering knowledge about surroundings and generating situational awareness for IoT devices is of utmost importance for systems developed for smart urban and uncontested environments. For example, a large-area surveillance system is typically equipped with multi-modal sensors such as cameras and LIDARs and is required to execute deep learning algorithms for action, face, behavior, and object recognition. However, these systems face power and memory constraints due to their ubiquitous nature, making it crucial to optimize data processing, deep learning algorithm input, and model inference communication. In this paper, we propose a self-adaptive optimization framework for a testbed comprising two Unmanned Ground Vehicles (UGVs) and two NVIDIA Jetson devices. This framework efficiently manages multiple tasks (storage, processing, computation, transmission, inference) on heterogeneous nodes concurrently. It involves compressing and masking input image frames, identifying similar frames, and profiling devices to obtain boundary conditions for optimization.. Finally, we propose and optimize a novel parameter split-ratio, which indicates the proportion of the data required to be offloaded to another device while considering the networking bandwidth, busy factor, memory (CPU, GPU, RAM), and power constraints of the devices in the testbed. Our evaluations captured while executing multiple tasks (e.g., PoseNet, SegNet, ImageNet, DetectNet, DepthNet) simultaneously, reveal that executing 70% (split-ratio=70%) of the data on the auxiliary node minimizes the offloading latency by approx. 33% (18.7 ms/image to 12.5 ms/image) and the total operation time by approx. 47% (69.32s to 36.43s) compared to the baseline configuration (executing on the primary node).

A Systematic Study on Object Recognition Using Millimeter-wave Radar

May 03, 2023

Abstract:Due to its light and weather-independent sensing, millimeter-wave (MMW) radar is essential in smart environments. Intelligent vehicle systems and industry-grade MMW radars have integrated such capabilities. Industry-grade MMW radars are expensive and hard to get for community-purpose smart environment applications. However, commercially available MMW radars have hidden underpinning challenges that need to be investigated for tasks like recognizing objects and activities, real-time person tracking, object localization, etc. Image and video data are straightforward to gather, understand, and annotate for such jobs. Image and video data are light and weather-dependent, susceptible to the occlusion effect, and present privacy problems. To eliminate dependence and ensure privacy, commercial MMW radars should be tested. MMW radar's practicality and performance in varied operating settings must be addressed before promoting it. To address the problems, we collected a dataset using Texas Instruments' Automotive mmWave Radar (AWR2944) and reported the best experimental settings for object recognition performance using different deep learning algorithms. Our extensive data gathering technique allows us to systematically explore and identify object identification task problems under cross-ambience conditions. We investigated several solutions and published detailed experimental data.

A Reliable and Low Latency Synchronizing Middleware for Co-simulation of a Heterogeneous Multi-Robot Systems

Nov 10, 2022Abstract:Search and rescue, wildfire monitoring, and flood/hurricane impact assessment are mission-critical services for recent IoT networks. Communication synchronization, dependability, and minimal communication jitter are major simulation and system issues for the time-based physics-based ROS simulator, event-based network-based wireless simulator, and complex dynamics of mobile and heterogeneous IoT devices deployed in actual environments. Simulating a heterogeneous multi-robot system before deployment is difficult due to synchronizing physics (robotics) and network simulators. Due to its master-based architecture, most TCP/IP-based synchronization middlewares use ROS1. A real-time ROS2 architecture with masterless packet discovery synchronizes robotics and wireless network simulations. A velocity-aware Transmission Control Protocol (TCP) technique for ground and aerial robots using Data Distribution Service (DDS) publish-subscribe transport minimizes packet loss, synchronization, transmission, and communication jitters. Gazebo and NS-3 simulate and test. Simulator-agnostic middleware. LOS/NLOS and TCP/UDP protocols tested our ROS2-based synchronization middleware for packet loss probability and average latency. A thorough ablation research replaced NS-3 with EMANE, a real-time wireless network simulator, and masterless ROS2 with master-based ROS1. Finally, we tested network synchronization and jitter using one aerial drone (Duckiedrone) and two ground vehicles (TurtleBot3 Burger) on different terrains in masterless (ROS2) and master-enabled (ROS1) clusters. Our middleware shows that a large-scale IoT infrastructure with a diverse set of stationary and robotic devices can achieve low-latency communications (12% and 11% reduction in simulation and real) while meeting mission-critical application reliability (10% and 15% packet loss reduction) and high-fidelity requirements.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge