Michael Schapira

Unleashing Automated Congestion Control Customization in the Wild

May 18, 2025

Abstract: Congestion control (CC) crucially impacts user experience across Internet services like streaming, gaming, AR/VR, and connected cars. Traditionally, CC algorithm design seeks universal control rules that yield high performance across diverse application domains and networks. However, varying service needs and network conditions challenge this approach. We share operational experience with a system that automatically customizes congestion control logic to service needs and network conditions. We discuss design, deployment challenges, and solutions, highlighting performance benefits through case studies in streaming, gaming, connected cars, and more. Our system leverages PCC Vivace, an online-learning based congestion control protocol developed by researchers. Hence, along with insights from customizing congestion control, we also discuss lessons learned and modifications made to adapt PCC Vivace for real-world deployment.

Verifying the Generalization of Deep Learning to Out-of-Distribution Domains

Jun 04, 2024

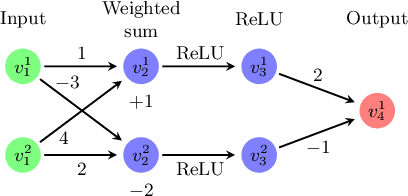

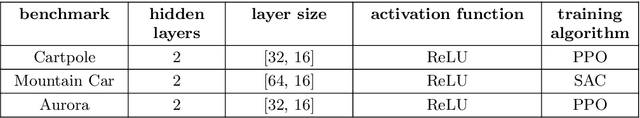

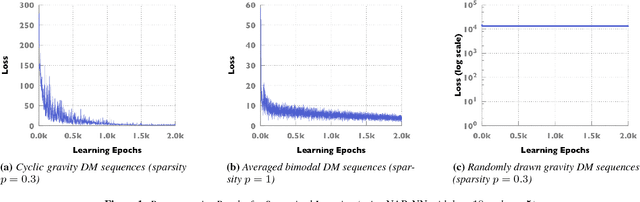

Abstract: Deep neural networks (DNNs) play a crucial role in the field of machine learning, demonstrating state-of-the-art performance across various application domains. However, despite their success, DNN-based models may occasionally exhibit challenges with generalization, i.e., may fail to handle inputs that were not encountered during training. This limitation is a significant challenge when it comes to deploying deep learning for safety-critical tasks, as well as in real-world settings characterized by substantial variability. We introduce a novel approach for harnessing DNN verification technology to identify DNN-driven decision rules that exhibit robust generalization to previously unencountered input domains. Our method assesses generalization within an input domain by measuring the level of agreement between independently trained deep neural networks for inputs in this domain. We also efficiently realize our approach by using off-the-shelf DNN verification engines, and extensively evaluate it on both supervised and unsupervised DNN benchmarks, including a deep reinforcement learning (DRL) system for Internet congestion control -- demonstrating the applicability of our approach for real-world settings. Moreover, our research introduces a fresh objective for formal verification, offering the prospect of mitigating the challenges linked to deploying DNN-driven systems in real-world scenarios.

A Deep Learning Perspective on Network Routing

Mar 05, 2023

Abstract: Routing is, arguably, the most fundamental task in computer networking, and the most extensively studied one. A key challenge for routing in real-world environments is the need to contend with uncertainty about future traffic demands. We present a new approach to routing under demand uncertainty: tackling this challenge as stochastic optimization, and employing deep learning to learn complex patterns in traffic demands. We show that our method provably converges to the global optimum in well-studied theoretical models of multicommodity flow. We exemplify the practical usefulness of our approach by zooming in on the real-world challenge of traffic engineering (TE) on wide-area networks (WANs). Our extensive empirical evaluation on real-world traffic and network topologies establishes that our approach's TE quality almost matches that of an (infeasible) omniscient oracle, outperforming previously proposed approaches, and also substantially lowers runtimes.

Verifying Generalization in Deep Learning

Feb 11, 2023

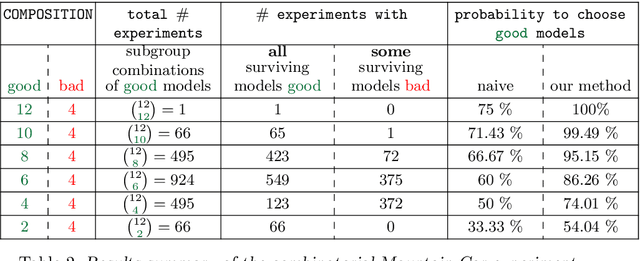

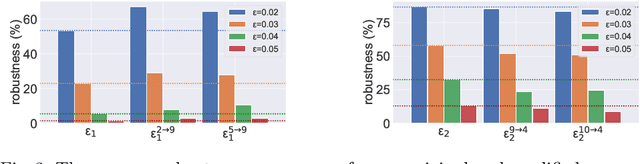

Abstract: Deep neural networks (DNNs) are the workhorses of deep learning, which constitutes the state of the art in numerous application domains. However, DNN-based decision rules are notoriously prone to poor generalization, i.e., may prove inadequate on inputs not encountered during training. This limitation poses a significant obstacle to employing deep learning for mission-critical tasks, and also in real-world environments that exhibit high variability. We propose a novel, verification-driven methodology for identifying DNN-based decision rules that generalize well to new input domains. Our approach quantifies generalization to an input domain by the extent to which decisions reached by independently trained DNNs are in agreement for inputs in this domain. We show how, by harnessing the power of DNN verification, our approach can be efficiently and effectively realized. We evaluate our verification-based approach on three deep reinforcement learning (DRL) benchmarks, including a system for real-world Internet congestion control. Our results establish the usefulness of our approach, and, in particular, its superiority over gradient-based methods. More broadly, our work puts forth a novel objective for formal verification, with the potential for mitigating the risks associated with deploying DNN-based systems in the wild.
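
The agreement idea in this abstract can be illustrated with a small sketch. It is not the paper's method: the paper relies on DNN verification, while the code below swaps in a plain empirical check over a finite list of inputs. The threshold "policies" stand in for independently trained DNNs, and every name here (`make_threshold_policy`, `pairwise_disagreement`, the input lists) is hypothetical.

```python
import itertools


def make_threshold_policy(threshold):
    """Hypothetical stand-in for a trained DNN: outputs 1 if x exceeds the threshold."""
    return lambda x: int(x > threshold)


def pairwise_disagreement(policies, inputs):
    """Average, over all pairs of policies, of the fraction of inputs they disagree on."""
    pairs = list(itertools.combinations(policies, 2))
    if not pairs or not inputs:
        raise ValueError("need at least two policies and one input")
    total = 0.0
    for f, g in pairs:
        total += sum(f(x) != g(x) for x in inputs) / len(inputs)
    return total / len(pairs)


# Three "independently trained" policies that differ slightly (an assumption).
policies = [make_threshold_policy(t) for t in (0.48, 0.50, 0.52)]

# Inputs far from the decision boundary: all policies agree.
familiar_inputs = [i / 100 for i in range(0, 41)]
# Inputs near the boundary: the policies start to disagree.
shifted_inputs = [0.45 + i / 100 for i in range(0, 11)]

familiar_score = pairwise_disagreement(policies, familiar_inputs)
shifted_score = pairwise_disagreement(policies, shifted_inputs)
```

A low score on a domain suggests the policies' decisions are consistent there, while a higher score flags a domain where generalization is doubtful. A sampling check like this can miss disagreements between sampled points, which is the gap formal verification is meant to close.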

Verification-Aided Deep Ensemble Selection

Feb 08, 2022

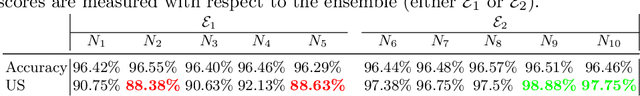

Abstract: Deep neural networks (DNNs) have become the technology of choice for realizing a variety of complex tasks. However, as highlighted by many recent studies, even an imperceptible perturbation to a correctly classified input can lead to misclassification by a DNN. This renders DNNs vulnerable to strategic input manipulations by attackers, and also prone to oversensitivity to environmental noise. To mitigate this phenomenon, practitioners apply joint classification by an ensemble of DNNs. By aggregating the classification outputs of different individual DNNs for the same input, ensemble-based classification reduces the risk of misclassifications due to the specific realization of the stochastic training process of any single DNN. However, the effectiveness of a DNN ensemble is highly dependent on its members not simultaneously erring on many different inputs. In this case study, we harness recent advances in DNN verification to devise a methodology for identifying ensemble compositions that are less prone to simultaneous errors, even when the input is adversarially perturbed -- resulting in more robustly-accurate ensemble-based classification. Our proposed framework uses a DNN verifier as a backend, and includes heuristics that help reduce the high complexity of directly verifying ensembles. More broadly, our work puts forth a novel universal objective for formal verification that can potentially improve the robustness of real-world, deep-learning-based systems across a variety of application domains.

Towards Scalable Verification of RL-Driven Systems

May 25, 2021

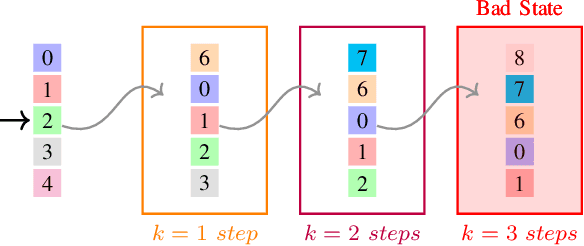

Abstract: Deep neural networks (DNNs) have gained significant popularity in recent years, becoming the state of the art in a variety of domains. In particular, deep reinforcement learning (DRL) has recently been employed to train DNNs that act as control policies for various types of real-world systems. In this work, we present the whiRL 2.0 tool, which implements a new approach for verifying complex properties of interest for such DRL systems. To demonstrate the benefits of whiRL 2.0, we apply it to case studies from the communication networks domain that have recently been used to motivate formal verification of DRL systems, and which exhibit characteristics that are conducive for scalable verification. We propose techniques for performing k-induction and automated invariant inference on such systems, and use these techniques for proving safety and liveness properties of interest that were previously impossible to verify due to the scalability barriers of prior approaches. Furthermore, we show how our proposed techniques provide insights into the inner workings and the generalizability of DRL systems. whiRL 2.0 is publicly available online.

Online Safety Assurance for Deep Reinforcement Learning

Oct 07, 2020

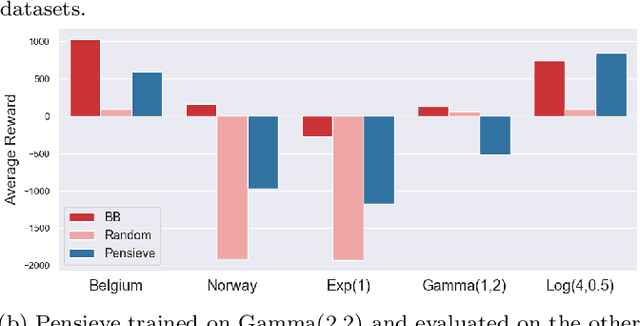

Abstract: Recently, deep learning has been successfully applied to a variety of networking problems. A fundamental challenge is that when the operational environment for a learning-augmented system differs from its training environment, such systems often make badly informed decisions, leading to bad performance. We argue that safely deploying learning-driven systems requires being able to determine, in real time, whether system behavior is coherent, for the purpose of defaulting to a reasonable heuristic when this is not so. We term this the online safety assurance problem (OSAP). We present three approaches to quantifying decision uncertainty that differ in terms of the signal used to infer uncertainty. We illustrate the usefulness of online safety assurance in the context of the proposed deep reinforcement learning (RL) approach to video streaming. While deep RL for video streaming bests other approaches when the operational and training environments match, it is dominated by simple heuristics when the two differ. Our preliminary findings suggest that transitioning to a default policy when decision uncertainty is detected is key to enjoying the performance benefits afforded by leveraging ML without compromising on safety.
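
The fallback pattern described in this abstract can be sketched as follows. The abstract does not say which signals its three approaches use, so the uncertainty signal here (variance across an ensemble of learned policies) is an assumption chosen for illustration, as are the class name `SafeguardedPolicy`, the threshold value, and the toy policies.

```python
from statistics import pvariance


class SafeguardedPolicy:
    """Wraps a learned policy with a fallback to a default heuristic.

    Assumption: uncertainty is estimated as the variance of the actions
    proposed by several learned policies for the same observation.
    """

    def __init__(self, learned_ensemble, default_policy, threshold):
        self.learned_ensemble = learned_ensemble
        self.default_policy = default_policy
        self.threshold = threshold

    def act(self, observation):
        votes = [policy(observation) for policy in self.learned_ensemble]
        uncertainty = pvariance(votes)
        if uncertainty > self.threshold:
            # The learned policies disagree: defer to the heuristic.
            return self.default_policy(observation), "default"
        # The learned policies agree: use the first member's action.
        return votes[0], "learned"


# Toy learned policies that agree for observations up to 1 and diverge beyond it.
ensemble = [
    lambda x: x,
    lambda x: x if x <= 1 else 3 * x,
    lambda x: x if x <= 1 else -x,
]
policy = SafeguardedPolicy(ensemble, default_policy=lambda x: 0.0, threshold=0.1)

in_range_decision = policy.act(0.5)
out_of_range_decision = policy.act(2.0)
```

Here the wrapper uses the learned action while the ensemble members agree and switches to the default heuristic once they diverge. The threshold trades off how often the learned policy is trusted against how quickly the system falls back.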

A Machine Learning Approach to Routing

Nov 11, 2017

Abstract: Can ideas and techniques from machine learning be leveraged to automatically generate "good" routing configurations? We investigate the power of data-driven routing protocols. Our results suggest that applying ideas and techniques from deep reinforcement learning to this context yields high performance, motivating further research along these lines.
