Mhairi Aitken

Data Justice Stories: A Repository of Case Studies

Apr 06, 2022Abstract:The idea of "data justice" is of recent academic vintage. It has arisen over the past decade in Anglo-European research institutions as an attempt to bring together a critique of the power dynamics that underlie accelerating trends of datafication with a normative commitment to the principles of social justice-a commitment to the achievement of a society that is equitable, fair, and capable of confronting the root causes of injustice.However, despite the seeming novelty of such a data justice pedigree, this joining up of the critique of the power imbalances that have shaped the digital and "big data" revolutions with a commitment to social equity and constructive societal transformation has a deeper historical, and more geographically diverse, provenance. As the stories of the data justice initiatives, activism, and advocacy contained in this volume well evidence, practices of data justice across the globe have, in fact, largely preceded the elaboration and crystallisation of the idea of data justice in contemporary academic discourse. In telling these data justice stories, we hope to provide the reader with two interdependent tools of data justice thinking: First, we aim to provide the reader with the critical leverage needed to discern those distortions and malformations of data justice that manifest in subtle and explicit forms of power, domination, and coercion. Second, we aim to provide the reader with access to the historically effective forms of normativity and ethical insight that have been marshalled by data justice activists and advocates as tools of societal transformation-so that these forms of normativity and insight can be drawn on, in turn, as constructive resources to spur future transformative data justice practices.

Advancing Data Justice Research and Practice: An Integrated Literature Review

Apr 06, 2022

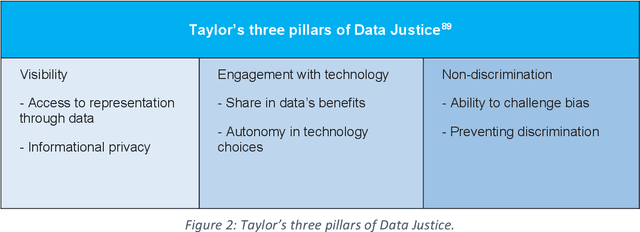

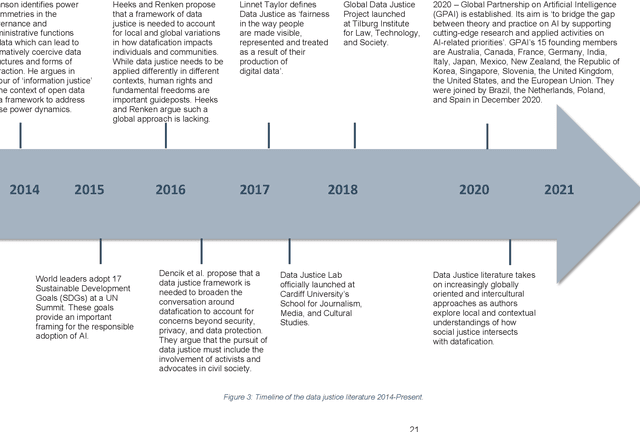

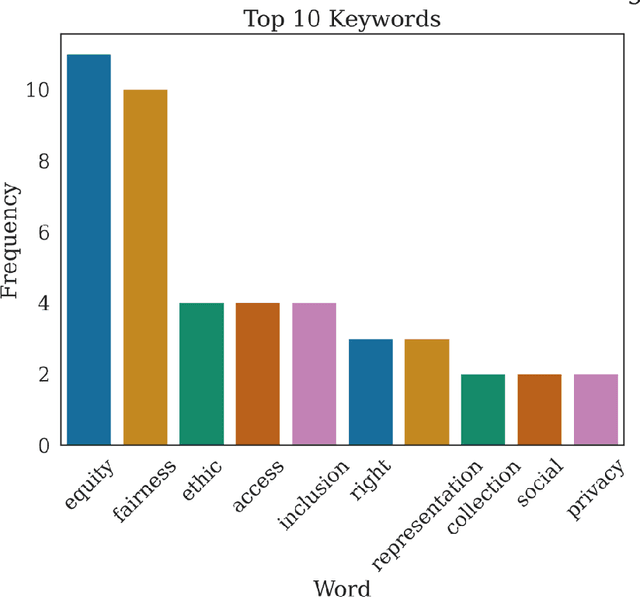

Abstract:The Advancing Data Justice Research and Practice (ADJRP) project aims to widen the lens of current thinking around data justice and to provide actionable resources that will help policymakers, practitioners, and impacted communities gain a broader understanding of what equitable, freedom-promoting, and rights-sustaining data collection, governance, and use should look like in increasingly dynamic and global data innovation ecosystems. In this integrated literature review we hope to lay the conceptual groundwork needed to support this aspiration. The introduction motivates the broadening of data justice that is undertaken by the literature review which follows. First, we address how certain limitations of the current study of data justice drive the need for a re-location of data justice research and practice. We map out the strengths and shortcomings of the contemporary state of the art and then elaborate on the challenges faced by our own effort to broaden the data justice perspective in the decolonial context. The body of the literature review covers seven thematic areas. For each theme, the ADJRP team has systematically collected and analysed key texts in order to tell the critical empirical story of how existing social structures and power dynamics present challenges to data justice and related justice fields. In each case, this critical empirical story is also supplemented by the transformational story of how activists, policymakers, and academics are challenging longstanding structures of inequity to advance social justice in data innovation ecosystems and adjacent areas of technological practice.

Human rights, democracy, and the rule of law assurance framework for AI systems: A proposal

Feb 06, 2022Abstract:Following on from the publication of its Feasibility Study in December 2020, the Council of Europe's Ad Hoc Committee on Artificial Intelligence (CAHAI) and its subgroups initiated efforts to formulate and draft its Possible Elements of a Legal Framework on Artificial Intelligence, based on the Council of Europe's standards on human rights, democracy, and the rule of law. This document was ultimately adopted by the CAHAI plenary in December 2021. To support this effort, The Alan Turing Institute undertook a programme of research that explored the governance processes and practical tools needed to operationalise the integration of human right due diligence with the assurance of trustworthy AI innovation practices. The resulting framework was completed and submitted to the Council of Europe in September 2021. It presents an end-to-end approach to the assurance of AI project lifecycles that integrates context-based risk analysis and appropriate stakeholder engagement with comprehensive impact assessment, and transparent risk management, impact mitigation, and innovation assurance practices. Taken together, these interlocking processes constitute a Human Rights, Democracy and the Rule of Law Assurance Framework (HUDERAF). The HUDERAF combines the procedural requirements for principles-based human rights due diligence with the governance mechanisms needed to set up technical and socio-technical guardrails for responsible and trustworthy AI innovation practices. Its purpose is to provide an accessible and user-friendly set of mechanisms for facilitating compliance with a binding legal framework on artificial intelligence, based on the Council of Europe's standards on human rights, democracy, and the rule of law, and to ensure that AI innovation projects are carried out with appropriate levels of public accountability, transparency, and democratic governance.

Artificial intelligence, human rights, democracy, and the rule of law: a primer

Apr 02, 2021Abstract:In September 2019, the Council of Europe's Committee of Ministers adopted the terms of reference for the Ad Hoc Committee on Artificial Intelligence (CAHAI). The CAHAI is charged with examining the feasibility and potential elements of a legal framework for the design, development, and deployment of AI systems that accord with Council of Europe standards across the interrelated areas of human rights, democracy, and the rule of law. As a first and necessary step in carrying out this responsibility, the CAHAI's Feasibility Study, adopted by its plenary in December 2020, has explored options for an international legal response that fills existing gaps in legislation and tailors the use of binding and non-binding legal instruments to the specific risks and opportunities presented by AI systems. The Study examines how the fundamental rights and freedoms that are already codified in international human rights law can be used as the basis for such a legal framework. The purpose of this primer is to introduce the main concepts and principles presented in the CAHAI's Feasibility Study for a general, non-technical audience. It also aims to provide some background information on the areas of AI innovation, human rights law, technology policy, and compliance mechanisms covered therein. In keeping with the Council of Europe's commitment to broad multi-stakeholder consultations, outreach, and engagement, this primer has been designed to help facilitate the meaningful and informed participation of an inclusive group of stakeholders as the CAHAI seeks feedback and guidance regarding the essential issues raised by the Feasibility Study.

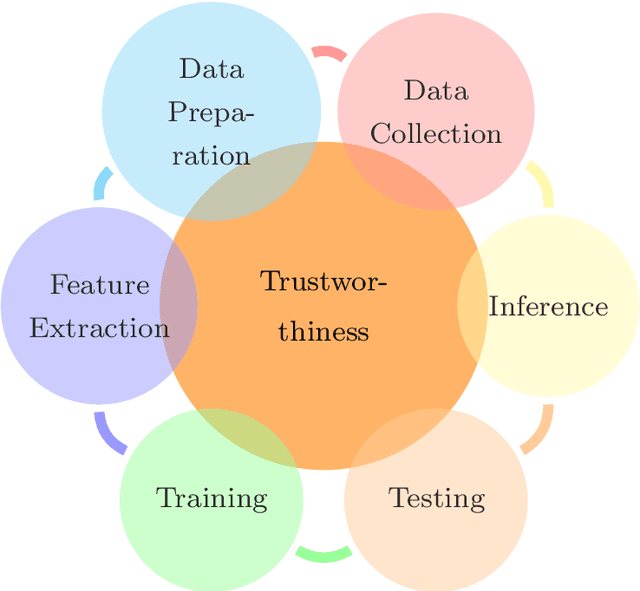

Technologies for Trustworthy Machine Learning: A Survey in a Socio-Technical Context

Jul 17, 2020

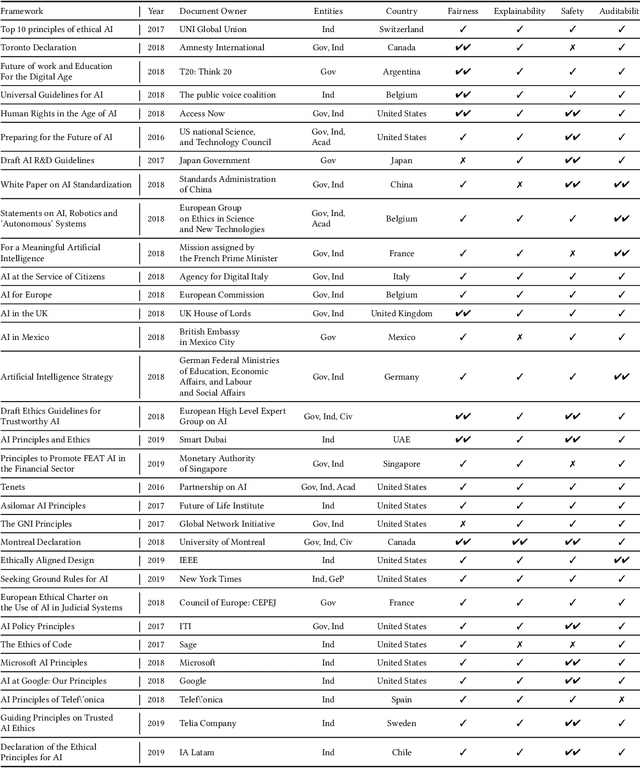

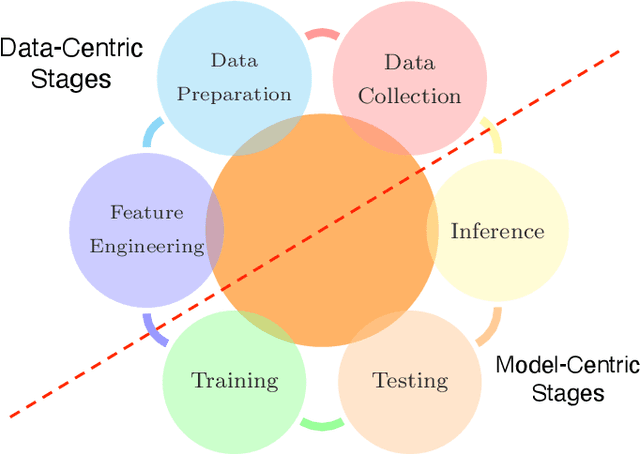

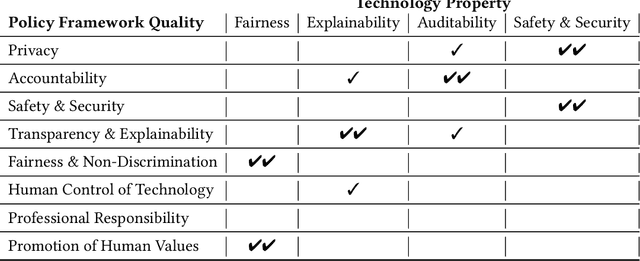

Abstract:Concerns about the societal impact of AI-based services and systems has encouraged governments and other organisations around the world to propose AI policy frameworks to address fairness, accountability, transparency and related topics. To achieve the objectives of these frameworks, the data and software engineers who build machine-learning systems require knowledge about a variety of relevant supporting tools and techniques. In this paper we provide an overview of technologies that support building trustworthy machine learning systems, i.e., systems whose properties justify that people place trust in them. We argue that four categories of system properties are instrumental in achieving the policy objectives, namely fairness, explainability, auditability and safety & security (FEAS). We discuss how these properties need to be considered across all stages of the machine learning life cycle, from data collection through run-time model inference. As a consequence, we survey in this paper the main technologies with respect to all four of the FEAS properties, for data-centric as well as model-centric stages of the machine learning system life cycle. We conclude with an identification of open research problems, with a particular focus on the connection between trustworthy machine learning technologies and their implications for individuals and society.

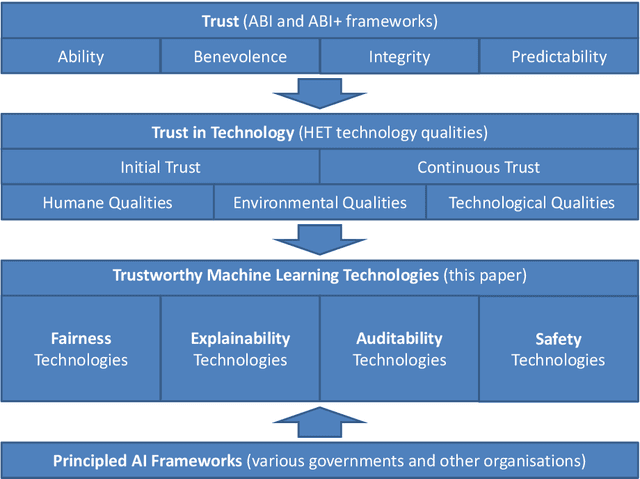

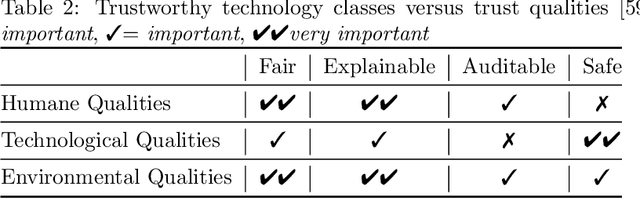

The relationship between trust in AI and trustworthy machine learning technologies

Dec 03, 2019

Abstract:To build AI-based systems that users and the public can justifiably trust one needs to understand how machine learning technologies impact trust put in these services. To guide technology developments, this paper provides a systematic approach to relate social science concepts of trust with the technologies used in AI-based services and products. We conceive trust as discussed in the ABI (Ability, Benevolence, Integrity) framework and use a recently proposed mapping of ABI on qualities of technologies. We consider four categories of machine learning technologies, namely these for Fairness, Explainability, Auditability and Safety (FEAS) and discuss if and how these possess the required qualities. Trust can be impacted throughout the life cycle of AI-based systems, and we introduce the concept of Chain of Trust to discuss technological needs for trust in different stages of the life cycle. FEAS has obvious relations with known frameworks and therefore we relate FEAS to a variety of international Principled AI policy and technology frameworks that have emerged in recent years.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge