Carlos Gonzalez Zelaya

The relationship between trust in AI and trustworthy machine learning technologies

Dec 03, 2019

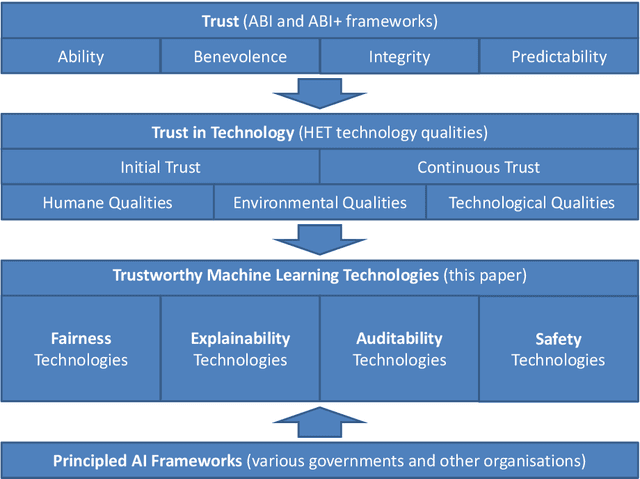

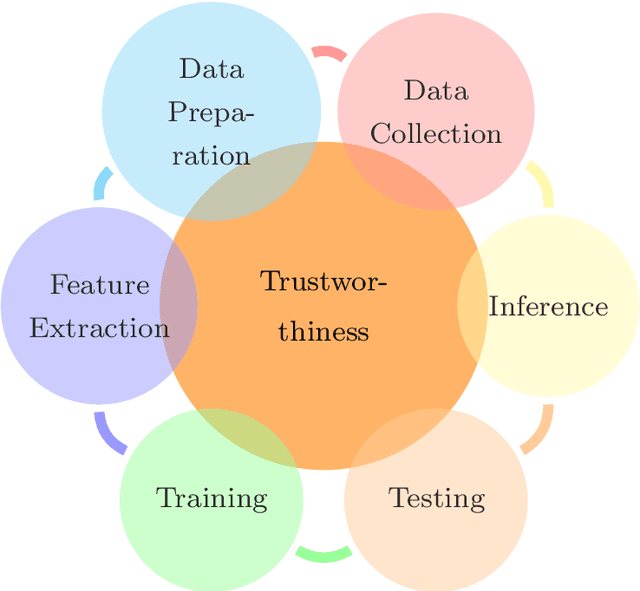

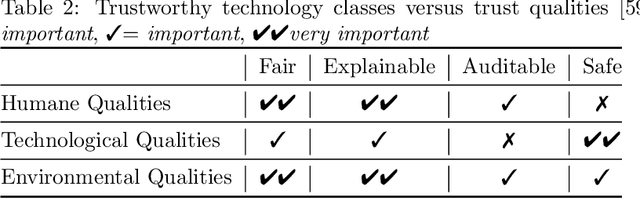

Abstract: To build AI-based systems that users and the public can justifiably trust, one needs to understand how machine learning technologies impact the trust placed in these services. To guide technology developments, this paper provides a systematic approach to relate social science concepts of trust to the technologies used in AI-based services and products. We conceive of trust as discussed in the ABI (Ability, Benevolence, Integrity) framework and use a recently proposed mapping of ABI onto qualities of technologies. We consider four categories of machine learning technologies, namely those for Fairness, Explainability, Auditability and Safety (FEAS), and discuss if and how these possess the required qualities. Trust can be impacted throughout the life cycle of AI-based systems, and we introduce the concept of Chain of Trust to discuss technological needs for trust in different stages of the life cycle. FEAS has clear relations to known frameworks, and we therefore relate it to a variety of international Principled AI policy and technology frameworks that have emerged in recent years.
