Mehrad Mortazavi

RoMu4o: A Robotic Manipulation Unit For Orchard Operations Automating Proximal Hyperspectral Leaf Sensing

Jan 18, 2025

Abstract:Driven by the need to address labor shortages and meet the demands of a rapidly growing population, robotic automation has become a critical component in precision agriculture. Leaf-level hyperspectral spectroscopy is shown to be a powerful tool for phenotyping, monitoring crop health, identifying essential nutrients within plants as well as detecting diseases and water stress. This work introduces RoMu4o, a robotic manipulation unit for orchard operations offering an automated solution for proximal hyperspectral leaf sensing. This ground robot is equipped with a 6DOF robotic arm and vision system for real-time deep learning-based image processing and motion planning. We developed robust perception and manipulation pipelines that enable the robot to successfully grasp target leaves and perform spectroscopy. These frameworks operate synergistically to identify and extract the 3D structure of leaves from an observed batch of foliage, propose 6D poses, and generate collision-free constraint-aware paths for precise leaf manipulation. The end-effector of the arm features a compact design that integrates an independent lighting source with a hyperspectral sensor, enabling high-fidelity data acquisition while streamlining the calibration process for accurate measurements. Our ground robot is engineered to operate in unstructured orchard environments. However, the performance of the system is evaluated in both indoor and outdoor plant models. The system demonstrated reliable performance for 1-LPB hyperspectral sampling, achieving 95% success rate in lab trials and 79% in field trials. Field experiments revealed an overall success rate of 70% for autonomous leaf grasping and hyperspectral measurement in a pistachio orchard. The open-source repository is available at: https://github.com/mehradmrt/UCM-AgBot-ROS2

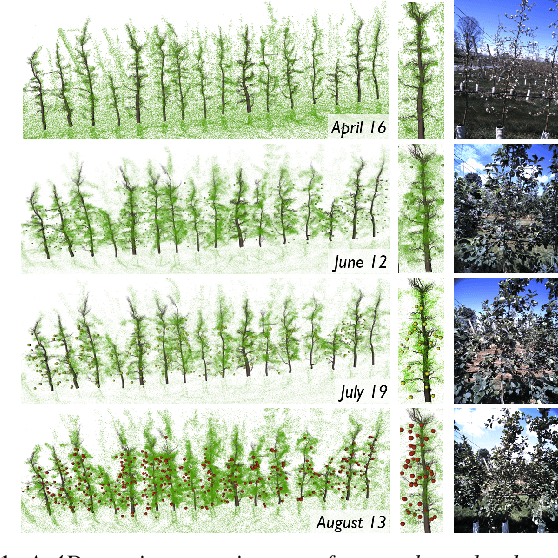

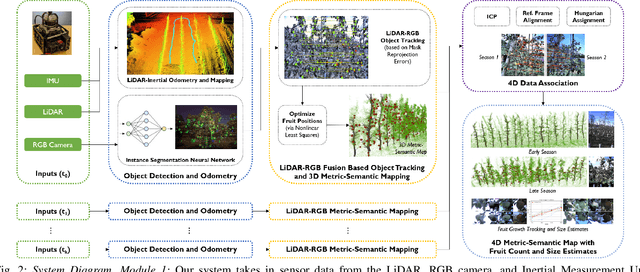

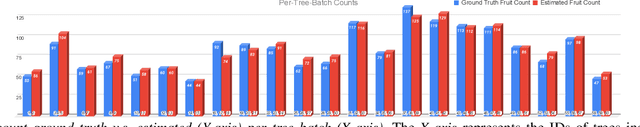

4D Metric-Semantic Mapping for Persistent Orchard Monitoring: Method and Dataset

Sep 29, 2024

Abstract:Automated persistent and fine-grained monitoring of orchards at the individual tree or fruit level helps maximize crop yield and optimize resources such as water, fertilizers, and pesticides while preventing agricultural waste. Towards this goal, we present a 4D spatio-temporal metric-semantic mapping method that fuses data from multiple sensors, including LiDAR, RGB camera, and IMU, to monitor the fruits in an orchard across their growth season. A LiDAR-RGB fusion module is designed for 3D fruit tracking and localization, which first segments fruits using a deep neural network and then tracks them using the Hungarian Assignment algorithm. Additionally, the 4D data association module aligns data from different growth stages into a common reference frame and tracks fruits spatio-temporally, providing information such as fruit counts, sizes, and positions. We demonstrate our method's accuracy in 4D metric-semantic mapping using data collected from a real orchard under natural, uncontrolled conditions with seasonal variations. We achieve a 3.1 percent error in total fruit count estimation for over 1790 fruits across 60 apple trees, along with accurate size estimation results with a mean error of 1.1 cm. The datasets, consisting of LiDAR, RGB, and IMU data of five fruit species captured across their growth seasons, along with corresponding ground truth data, will be made publicly available at: https://4d-metric-semantic-mapping.org/
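The tracking step mentioned in the abstract, which associates segmented fruits across frames with the Hungarian assignment algorithm, can be illustrated with a minimal sketch. This is not the authors' implementation: the function name `associate_fruits`, the use of Euclidean distance between 3D fruit centroids as the cost, and the `max_dist` gating threshold are all assumptions made for illustration. SciPy's `linear_sum_assignment` solves the same linear assignment problem as the Hungarian method, though SciPy documents its solver as a modified Jonker-Volgenant algorithm.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def associate_fruits(tracks, detections, max_dist=0.10):
    """Match existing 3D fruit tracks to new detections (illustrative only).

    tracks, detections: arrays of shape (N, 3) and (M, 3), in metres.
    max_dist: hypothetical gating threshold; farther pairs are rejected.
    Returns (matches, unmatched_track_ids, unmatched_detection_ids).
    """
    tracks = np.asarray(tracks, dtype=float).reshape(-1, 3)
    detections = np.asarray(detections, dtype=float).reshape(-1, 3)
    if len(tracks) == 0 or len(detections) == 0:
        return [], list(range(len(tracks))), list(range(len(detections)))

    # Pairwise Euclidean distances form the assignment cost matrix.
    cost = np.linalg.norm(tracks[:, None, :] - detections[None, :, :], axis=2)

    # Optimal one-to-one assignment minimising the total distance.
    rows, cols = linear_sum_assignment(cost)

    # Keep only assignments that pass the (assumed) distance gate.
    matches = [(int(r), int(c)) for r, c in zip(rows, cols)
               if cost[r, c] <= max_dist]
    matched_t = {r for r, _ in matches}
    matched_d = {c for _, c in matches}
    unmatched_t = [i for i in range(len(tracks)) if i not in matched_t]
    unmatched_d = [j for j in range(len(detections)) if j not in matched_d]
    return matches, unmatched_t, unmatched_d


if __name__ == "__main__":
    tracks = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]
    detections = [[1.02, 0.0, 0.0], [0.01, 0.0, 0.0], [5.0, 5.0, 5.0]]
    print(associate_fruits(tracks, detections))
```

In a tracker built along these lines, unmatched detections would typically start new fruit tracks and unmatched tracks would be kept or retired according to some policy; the abstract does not specify how the authors handle either case.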
