Md Modasshir

DeepURL: Deep Pose Estimation Framework for Underwater Relative Localization

Mar 13, 2020

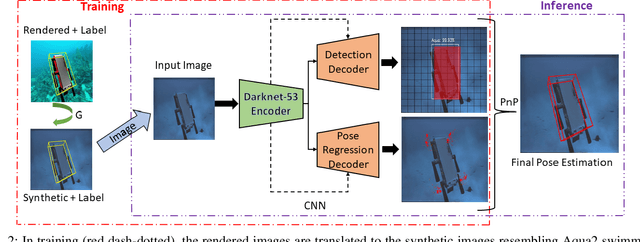

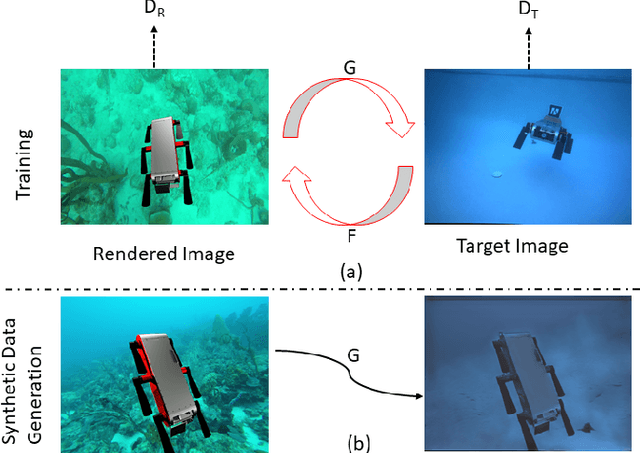

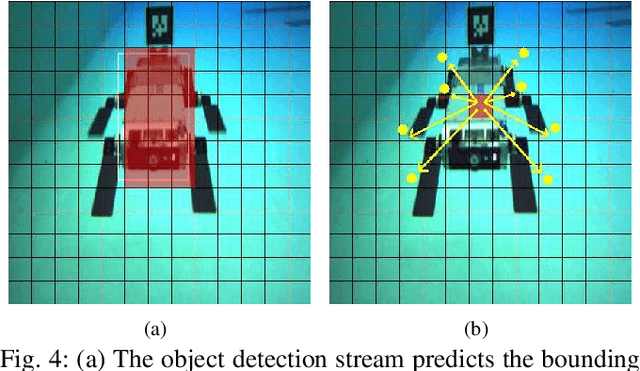

Abstract: In this paper, we propose a real-time deep-learning approach for determining the 6D relative pose of Autonomous Underwater Vehicles (AUVs) from a single image. The ability of a team of autonomous robots to localize themselves in a communication-constrained underwater environment is essential for many applications such as underwater exploration, mapping, multi-robot convoying, and other multi-robot tasks. Due to the profound difficulty of collecting ground truth images with accurate 6D poses underwater, this work utilizes rendered images from the Unreal Game Engine simulation for training. An image translation network is employed to bridge the gap between the rendered and the real images, producing synthetic images for training. The proposed method predicts the 6D pose of an AUV from a single image as 2D image keypoints representing the 8 corners of the 3D model of the AUV, and then the 6D pose in the camera coordinates is determined using RANSAC-based PnP. Experimental results in underwater environments (swimming pool and ocean) with different cameras demonstrate the robustness of the proposed technique, where the trained system decreased translation error by 75.5% and orientation error by 64.6% over the state-of-the-art methods.
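To make the keypoint representation concrete, the sketch below projects the 8 corners of a 3D bounding box into the image with a standard pinhole camera model. This is the forward mapping that a PnP solver inverts: given the 8 predicted 2D keypoints and the known 3D corners, PnP recovers the rotation and translation. The box dimensions, pose, and camera intrinsics are hypothetical values chosen for illustration, and the function names `box_corners` and `project` are not from the paper.

```python
from itertools import product


def box_corners(length, width, height):
    """Return the 8 corners of a box centred on the model origin."""
    hl, hw, hh = length / 2, width / 2, height / 2
    return [(sx * hl, sy * hw, sz * hh)
            for sx, sy, sz in product((-1, 1), repeat=3)]


def project(points, R, t, fx, fy, cx, cy):
    """Pinhole projection of model-frame points for a pose (R, t)
    expressed in the camera frame. Lens distortion is ignored."""
    pixels = []
    for X in points:
        # Camera-frame coordinates: Xc = R * X + t
        xc, yc, zc = (sum(R[i][j] * X[j] for j in range(3)) + t[i]
                      for i in range(3))
        # Perspective division followed by the intrinsic scaling and offset
        pixels.append((fx * xc / zc + cx, fy * yc / zc + cy))
    return pixels


# Hypothetical setup: a 0.6 x 0.4 x 0.2 m box, 2 m in front of the camera,
# with no rotation relative to the camera axes.
R = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0],
     [0.0, 0.0, 1.0]]
t = (0.0, 0.0, 2.0)

corners = box_corners(0.6, 0.4, 0.2)
keypoints = project(corners, R, t, fx=600.0, fy=600.0, cx=320.0, cy=240.0)

for corner, (u, v) in zip(corners, keypoints):
    print(corner, "->", (round(u, 1), round(v, 1)))
```

In the other direction, the 3D corners and the detected 2D keypoints form the 2D-3D correspondences handed to a RANSAC-based PnP solver. OpenCV's `cv2.solvePnPRansac` is one widely used implementation; whether the authors used it is not stated in the abstract.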
