Max Zwiessele

Universal Marginaliser for Deep Amortised Inference for Probabilistic Programs

Oct 16, 2019

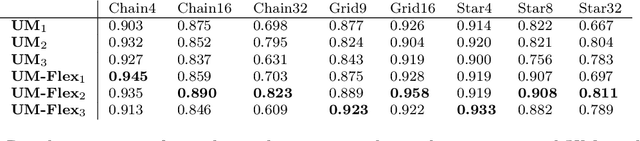

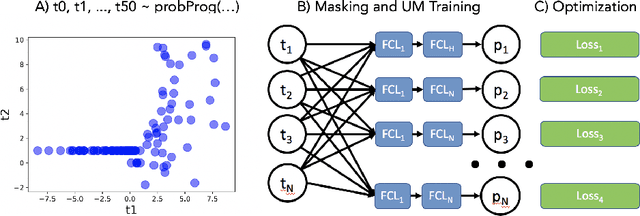

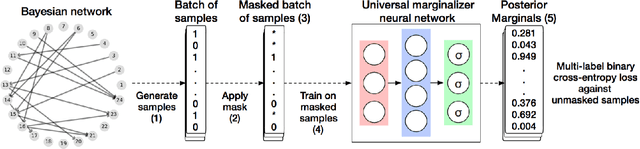

Abstract: Probabilistic programming languages (PPLs) are powerful modelling tools which allow us to formalise our knowledge about the world and reason about its inherent uncertainty. Inference methods used in PPLs can be computationally costly due to significant time and/or storage requirements, or they can lack theoretical guarantees of convergence and accuracy when applied to large-scale graphical models. To this end, we present the Universal Marginaliser (UM), a novel method for amortised inference in PPLs. We show how combining samples drawn from the original probabilistic program prior with an appropriate augmentation method allows us to train one neural network to approximate any of the corresponding conditional marginal distributions, with any separation into latent and observed variables, and thus amortise the cost of inference. Finally, we benchmark the method on multiple probabilistic programs, in Pyro, with different model structures.

Universal Marginalizer for Amortised Inference and Embedding of Generative Models

Nov 12, 2018

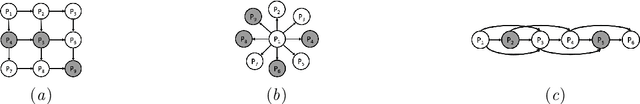

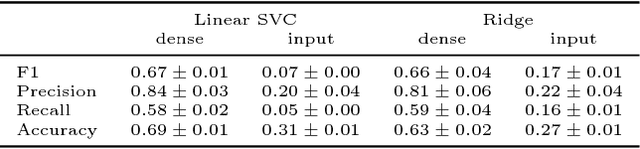

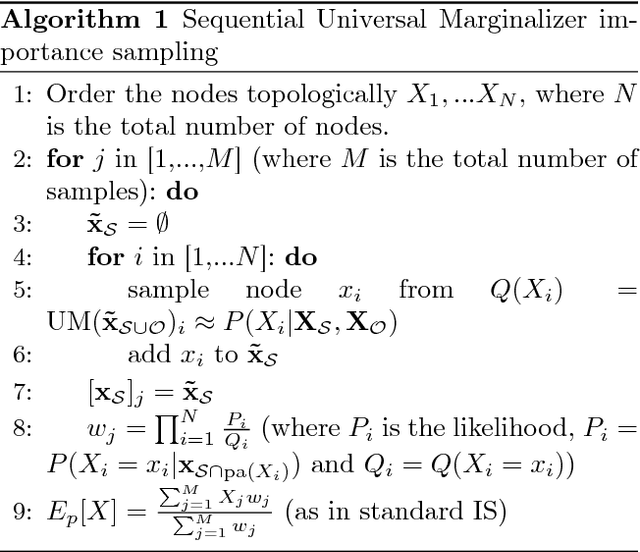

Abstract: Probabilistic graphical models are powerful tools which allow us to formalise our knowledge about the world and reason about its inherent uncertainty. There exist a considerable number of methods for performing inference in probabilistic graphical models; however, they can be computationally costly due to significant time and/or storage requirements, or they lack theoretical guarantees of convergence and accuracy when applied to large-scale graphical models. To this end, we propose the Universal Marginaliser Importance Sampler (UM-IS), a hybrid inference scheme that combines the flexibility of a deep neural network trained on samples from the model with the asymptotic guarantees of importance sampling. We show how combining samples drawn from the graphical model with an appropriate masking function allows us to train a single neural network to approximate any of the corresponding conditional marginal distributions, and thus amortise the cost of inference. We also show that the graph embeddings can be applied to tasks such as clustering, classification and interpretation of relationships between the nodes. Finally, we benchmark the method on a large graph (>1000 nodes), showing that UM-IS outperforms sampling-based methods by a large margin while being computationally efficient.
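The idea of pairing model samples with a masking function can be illustrated with a minimal sketch of the data-generation step. Everything concrete here is an assumption made for illustration and not taken from the paper: the toy three-node binary chain, its probabilities, the `MASKED` sentinel, the independent per-variable masking with probability `p_hide`, and all function names.

```python
import random

MASKED = -1  # hypothetical sentinel marking an unobserved variable


def sample_model(rng):
    """Draw one joint sample from a toy binary chain A -> B -> C (assumed model)."""
    a = int(rng.random() < 0.3)
    b = int(rng.random() < (0.8 if a else 0.1))
    c = int(rng.random() < (0.7 if b else 0.2))
    return [a, b, c]


def mask_sample(sample, rng, p_hide=0.5):
    """Hide each variable independently with probability p_hide (assumed scheme)."""
    return [MASKED if rng.random() < p_hide else v for v in sample]


def make_training_pairs(n, seed=0):
    """Return (masked_input, full_target) pairs for supervised training."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        full = sample_model(rng)
        pairs.append((mask_sample(full, rng), full))
    return pairs


pairs = make_training_pairs(1000)
```

Under these assumptions, a single network trained on such pairs with a per-node classification loss would see every pattern of observed and hidden variables, which is what lets one model stand in for many conditional marginals. The network and the importance-sampling stage are deliberately left out of this sketch.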

Differentially Private Gaussian Processes

May 30, 2017

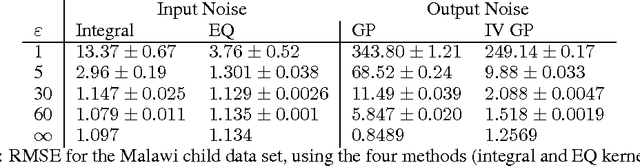

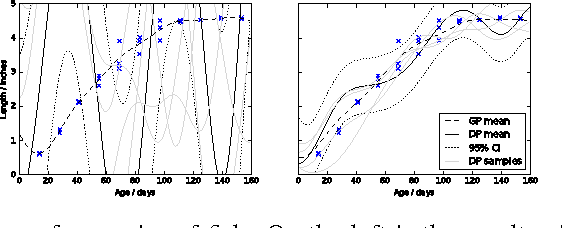

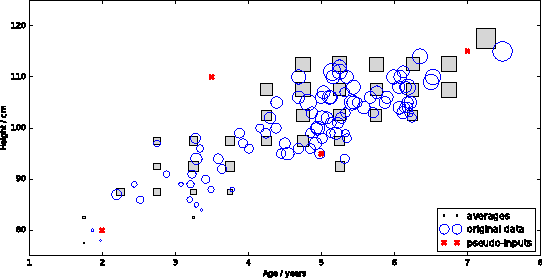

Abstract: A major challenge for machine learning is increasing the availability of data while respecting the privacy of individuals. Here we combine the provable privacy guarantees of the Differential Privacy framework with the flexibility of Gaussian processes (GPs). We propose a method using GPs to provide Differentially Private (DP) regression. We then improve this method by crafting the DP noise covariance structure to efficiently protect the training data, while minimising the scale of the added noise. We find that, for the dataset used, this cloaking method achieves the greatest accuracy, while still providing privacy guarantees, and offers practical DP for regression over multi-dimensional inputs. Together these methods provide a starter toolkit for combining differential privacy and GPs.
