Márcio Catelan

Image-Based Multi-Survey Classification of Light Curves with a Pre-Trained Vision Transformer

Jul 15, 2025

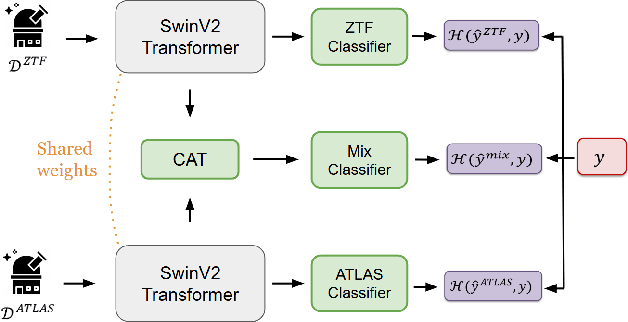

Abstract: We explore the use of Swin Transformer V2, a pre-trained vision Transformer, for photometric classification in a multi-survey setting by leveraging light curves from the Zwicky Transient Facility (ZTF) and the Asteroid Terrestrial-impact Last Alert System (ATLAS). We evaluate different strategies for integrating data from these surveys and find that a multi-survey architecture which processes them jointly achieves the best performance. These results highlight the importance of modeling survey-specific characteristics and cross-survey interactions, and provide guidance for building scalable classifiers for future time-domain astronomy.

A self-regulated convolutional neural network for classifying variable stars

May 20, 2025

Abstract: Over the last two decades, machine learning models have been widely applied and have proven effective in classifying variable stars, particularly with the adoption of deep learning architectures such as convolutional neural networks, recurrent neural networks, and transformer models. While these models have achieved high accuracy, they require high-quality, representative data and a large number of labelled samples for each star type to generalise well, which can be challenging in time-domain surveys. This challenge often leads to models learning and reinforcing biases inherent in the training data, an issue that is not easily detectable when validation is performed on subsamples from the same catalogue. The problem of biases in variable star data has been largely overlooked, and a definitive solution has yet to be established. In this paper, we propose a new approach to improve the reliability of classifiers in variable star classification by introducing a self-regulated training process. This process utilises synthetic samples generated by a physics-enhanced latent space variational autoencoder, incorporating six physical parameters from Gaia Data Release 3. Our method features a dynamic interaction between a classifier and a generative model, where the generative model produces ad-hoc synthetic light curves to reduce confusion during classifier training and populate underrepresented regions in the physical parameter space. Experiments conducted under various scenarios demonstrate that our self-regulated training approach outperforms traditional training methods for classifying variable stars on biased datasets, showing statistically significant improvements.

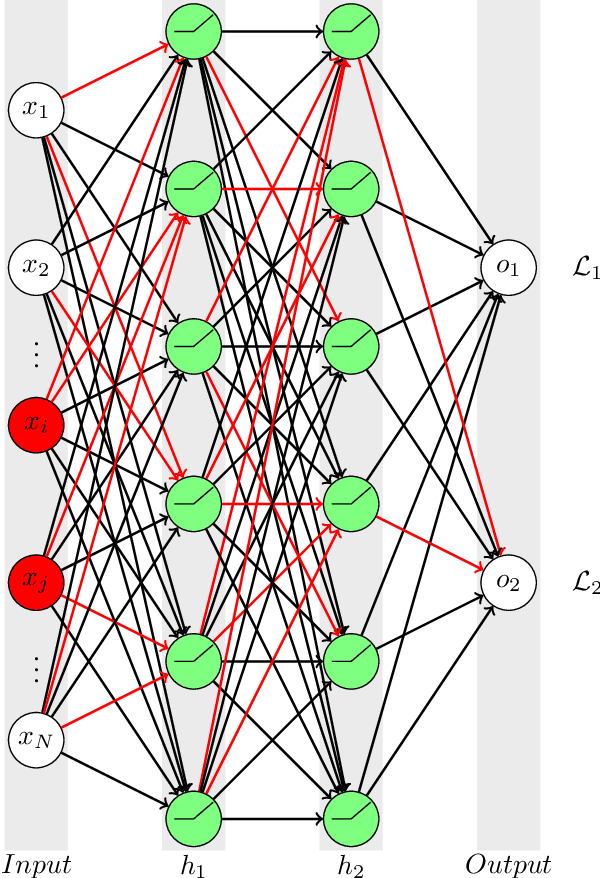

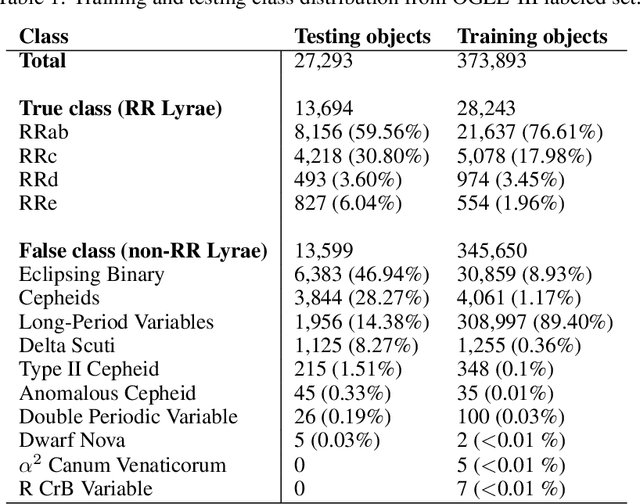

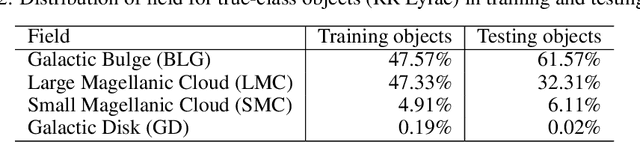

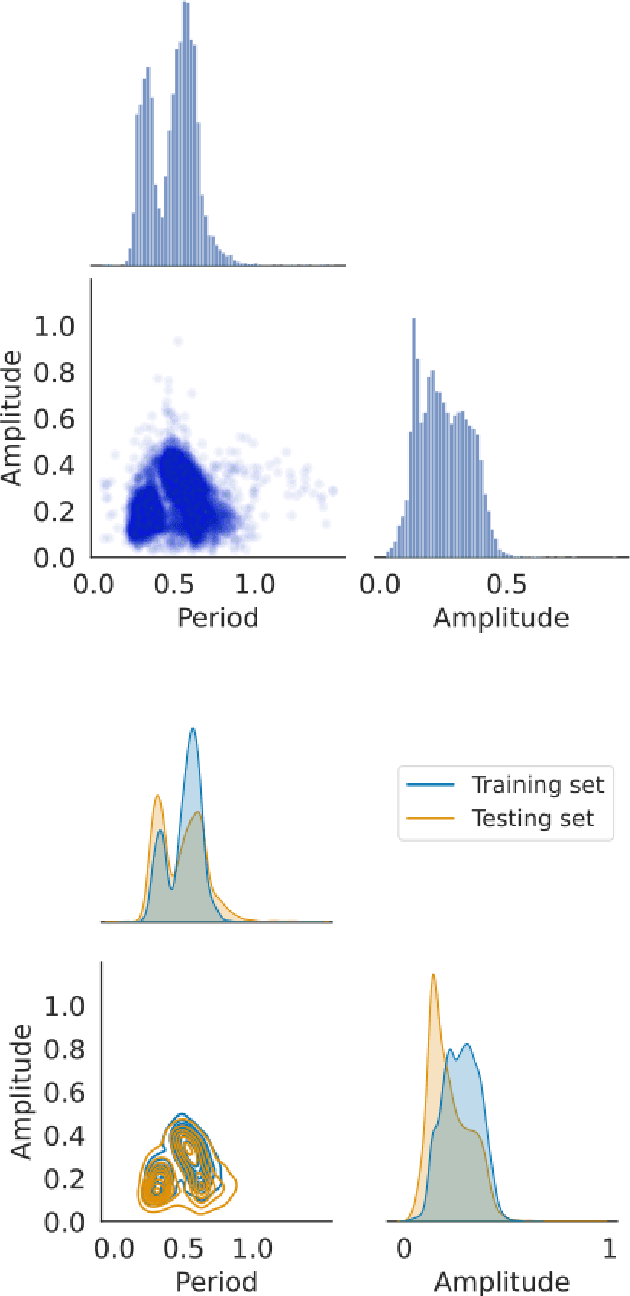

Informative regularization for a multi-layer perceptron RR Lyrae classifier under data shift

Mar 12, 2023

Abstract: In recent decades, machine learning has provided valuable models and algorithms for processing and extracting knowledge from time-series surveys. Various classifiers have been proposed and have performed very well. Nevertheless, few papers have tackled the data shift problem in labeled training sets, which occurs when there is a mismatch between the data distribution in the training set and the testing set. This mismatch can degrade prediction performance on unseen data. Consequently, we propose a scalable and easily adaptable approach based on an informative regularization and an ad-hoc training procedure to mitigate the shift problem during the training of a multi-layer perceptron for RR Lyrae classification. We collect ranges for characteristic features to construct a symbolic representation of prior knowledge, which is used to model the informative regularizer component. Simultaneously, we design a two-step back-propagation algorithm to integrate this knowledge into the neural network, whereby one step is applied in each epoch to minimize classification error, while another is applied to ensure regularization. Our algorithm defines a subset of parameters (a mask) for each loss function. This approach handles the forgetting effect, which stems from a trade-off between these loss functions (learning from data versus learning expert knowledge) during training. Experiments were conducted using recently proposed shifted benchmark sets for RR Lyrae stars, outperforming baseline models by up to 3% through a more reliable classifier. Our method provides a new path to incorporate knowledge from characteristic features into artificial neural networks to manage the underlying data shift problem.
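The two-step, masked update described in this abstract can be pictured with a toy sketch. Everything below is an assumption made for illustration: the quadratic "data" and "prior" losses, the function names `masked_step` and `train`, and the hand-picked masks are hypothetical and are not taken from the paper, which does not give these details in its abstract.

```python
def masked_step(params, grads, mask, lr):
    """Gradient step that only moves parameters whose mask entry is 1."""
    return [p - lr * g * m for p, g, m in zip(params, grads, mask)]


def train(params, grad_data, grad_prior, mask_data, mask_prior, lr=0.25, epochs=50):
    """Alternate two masked updates per epoch (toy version of a two-step scheme)."""
    for _ in range(epochs):
        # Step 1: reduce the classification-style loss on its own parameter subset.
        params = masked_step(params, grad_data(params), mask_data, lr)
        # Step 2: reduce the regularization-style loss on the other subset.
        params = masked_step(params, grad_prior(params), mask_prior, lr)
    return params


# Hypothetical toy losses: each one pulls BOTH parameters toward its own target.
def grad_data(w):
    # Gradient of (w0 - 1)^2 + (w1 - 1)^2
    return [2 * (w[0] - 1), 2 * (w[1] - 1)]


def grad_prior(w):
    # Gradient of (w0 + 2)^2 + (w1 + 2)^2
    return [2 * (w[0] + 2), 2 * (w[1] + 2)]


final = train([0.0, 0.0], grad_data, grad_prior, mask_data=[1, 0], mask_prior=[0, 1])
```

In this toy setting the masks keep each loss from overwriting what the other has learned: the first parameter settles at the data target and the second at the prior target, instead of both oscillating between the two.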

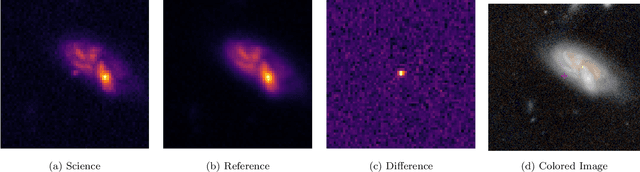

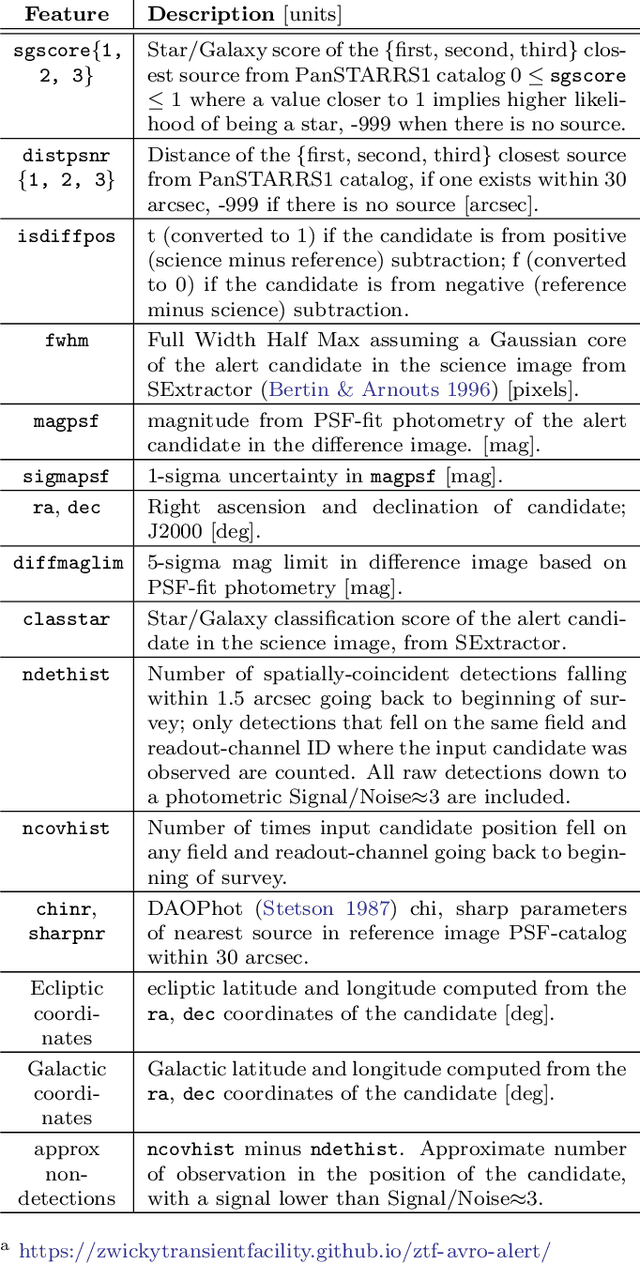

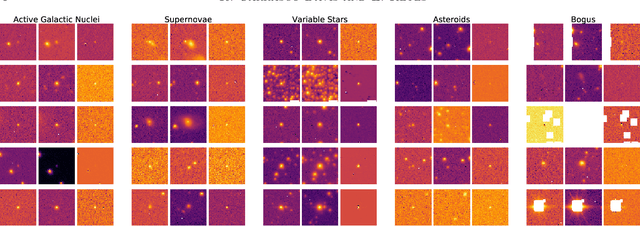

Alert Classification for the ALeRCE Broker System: The Real-time Stamp Classifier

Aug 07, 2020

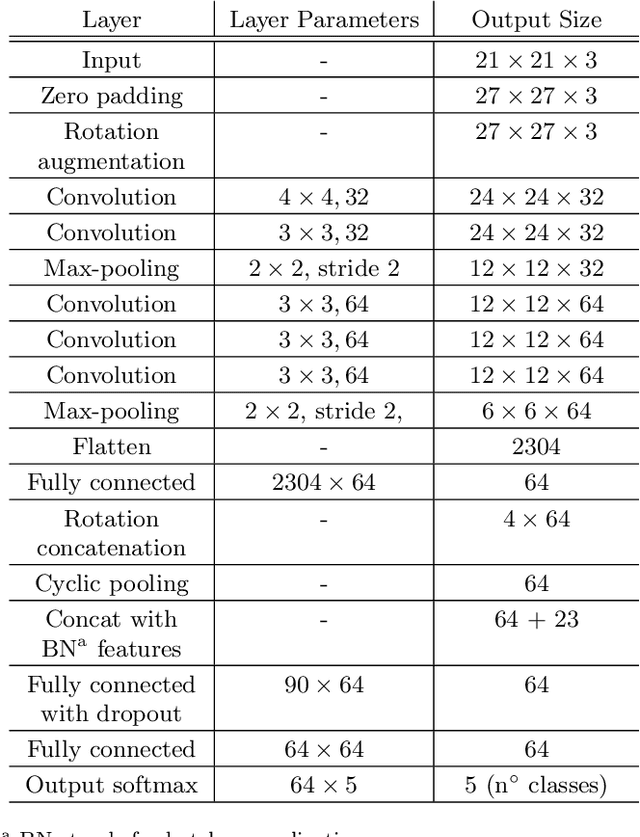

Abstract: We present a real-time stamp classifier of astronomical events for the ALeRCE (Automatic Learning for the Rapid Classification of Events) broker. The classifier is based on a convolutional neural network with an architecture designed to exploit rotational invariance of the images, and trained on alerts ingested from the Zwicky Transient Facility (ZTF). Using only the *science*, *reference* and *difference* images of the first detection as inputs, along with the metadata of the alert as features, the classifier is able to correctly classify alerts from active galactic nuclei, supernovae (SNe), variable stars, asteroids and bogus classes, with high accuracy (~94%) in a balanced test set. In order to find and analyze SN candidates selected by our classifier from the ZTF alert stream, we designed and deployed a visualization tool called SN Hunter, where relevant information about each possible SN is displayed for the experts to choose among candidates to report to the Transient Name Server database. We have reported 3060 SN candidates to date (9.2 candidates per day on average), of which 394 have been confirmed spectroscopically. Our ability to report objects using only a single detection means that 92% of the reported SNe occurred within one day after the first detection. ALeRCE has only reported candidates not otherwise detected or selected by other groups, therefore adding new early transients to the bulk of objects available for early follow-up. Our work represents an important milestone toward rapid alert classifications with the next generation of large etendue telescopes, such as the Vera C. Rubin Observatory's Legacy Survey of Space and Time.
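The abstract does not say how the architecture exploits rotational invariance. One common approach, shown here only as an assumption and not as the ALeRCE implementation, is to evaluate a scoring function on the four 90-degree rotations of a stamp and average the results. The helper names `rotate90` and `rotation_averaged` and the toy scoring function are hypothetical.

```python
def rotate90(image):
    """Rotate a 2D list-of-lists image by 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def rotation_averaged(score, image):
    """Average score(image) over the 0, 90, 180 and 270 degree rotations."""
    total = 0.0
    current = image
    for _ in range(4):
        total += score(current)
        current = rotate90(current)
    return total / 4


def top_left(image):
    """Toy, deliberately rotation-sensitive score: the top-left pixel value."""
    return image[0][0]


stamp = [[1, 2],
         [3, 4]]
averaged = rotation_averaged(top_left, stamp)
averaged_rotated = rotation_averaged(top_left, rotate90(stamp))
```

Because the average runs over the full set of 90-degree rotations, feeding in a rotated copy of the stamp gives the same averaged score, even though the underlying score depends on orientation.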

Scalable End-to-end Recurrent Neural Network for Variable star classification

Feb 03, 2020

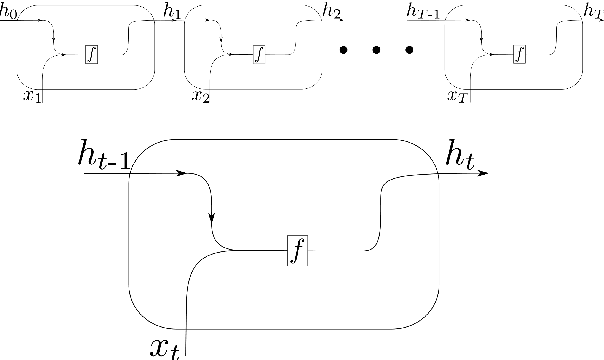

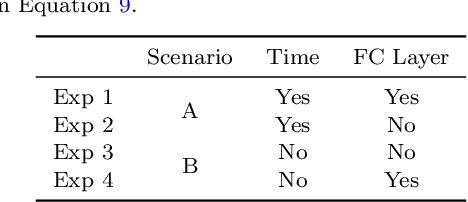

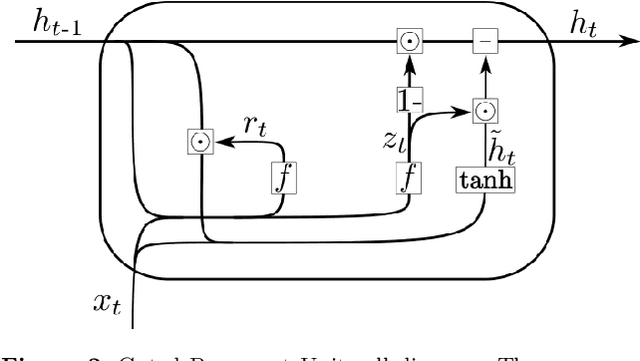

Abstract: During the last decade, considerable effort has been made to perform automatic classification of variable stars using machine learning techniques. Traditionally, light curves are represented as a vector of descriptors or features used as input for many algorithms. Some features are computationally expensive and cannot be updated quickly, and hence cannot be applied to large datasets such as those of the LSST. Previous work has been done to develop alternative unsupervised feature extraction algorithms for light curves, but the cost of doing so still remains high. In this work, we propose an end-to-end algorithm that automatically learns a representation of light curves that allows accurate automatic classification. We study a series of deep learning architectures based on Recurrent Neural Networks and test them in automated classification scenarios. Our method uses minimal data preprocessing, can be updated at low computational cost for new observations and light curves, and can scale up to massive datasets. We transform each light curve into an input matrix representation whose elements are the differences in time and magnitude, and the outputs are classification probabilities. We test our method in three surveys: OGLE-III, Gaia and WISE. We obtain accuracies of about 95% in the main classes and 75% in the majority of subclasses. We compare our results with the Random Forest classifier and obtain competitive accuracies while being faster and scalable. The analysis shows that the computational complexity of our approach grows linearly with the light curve size, while the cost of the traditional approach grows as $N \log N$.
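The input representation mentioned in this abstract, a matrix of differences in time and magnitude, can be sketched in a few lines. The function name `to_delta_matrix` and the sample values are hypothetical, and the abstract does not specify any normalization, padding or windowing, so none is applied here.

```python
def to_delta_matrix(times, mags):
    """Turn a light curve into rows of (delta_time, delta_magnitude).

    A light curve with N observations yields N - 1 rows, one per pair of
    consecutive observations.
    """
    if len(times) != len(mags):
        raise ValueError("times and mags must have the same length")
    points = list(zip(times, mags))
    return [(t1 - t0, m1 - m0) for (t0, m0), (t1, m1) in zip(points, points[1:])]


# Hypothetical observations: times in days, brightness in magnitudes.
times = [0.0, 1.0, 3.0]
mags = [10.0, 10.5, 10.25]
matrix = to_delta_matrix(times, mags)
```

Each row needs only the previous observation, so a new measurement adds one row without recomputing earlier ones, and a single pass over the light curve is linear in its length.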
