Linyun Zhou

Deep Feature Response Discriminative Calibration

Nov 16, 2024

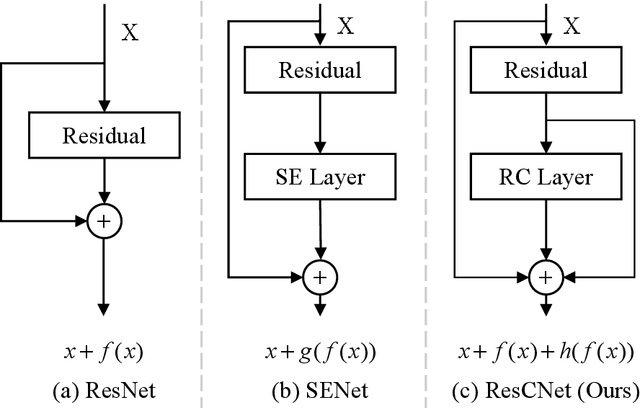

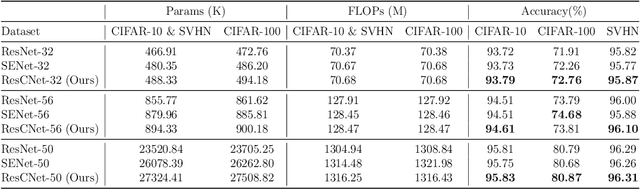

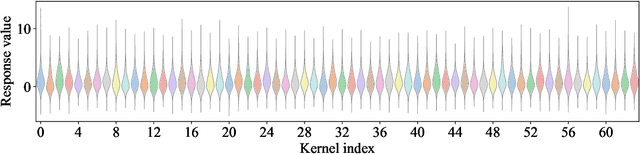

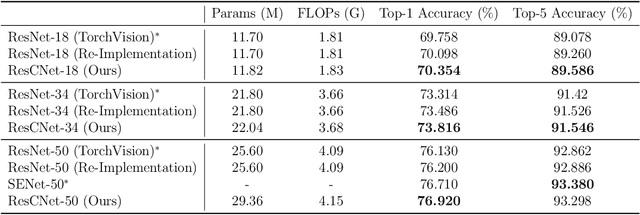

Abstract:Deep neural networks (DNNs) have numerous applications across various domains. Several optimization techniques, such as ResNet and SENet, have been proposed to improve model accuracy. These techniques improve the model performance by adjusting or calibrating feature responses according to a uniform standard. However, they lack the discriminative calibration for different features, thereby introducing limitations in the model output. Therefore, we propose a method that discriminatively calibrates feature responses. The preliminary experimental results indicate that the neural feature response follows a Gaussian distribution. Consequently, we compute confidence values by employing the Gaussian probability density function, and then integrate these values with the original response values. The objective of this integration is to improve the feature discriminability of the neural feature response. Based on the calibration values, we propose a plugin-based calibration module incorporated into a modified ResNet architecture, termed Response Calibration Networks (ResCNet). Extensive experiments on datasets like CIFAR-10, CIFAR-100, SVHN, and ImageNet demonstrate the effectiveness of the proposed approach. The developed code is publicly available at https://github.com/tcmyxc/ResCNet.

Propheter: Prophetic Teacher Guided Long-Tailed Distribution Learning

Apr 09, 2023Abstract:The problem of deep long-tailed learning, a prevalent challenge in the realm of generic visual recognition, persists in a multitude of real-world applications. To tackle the heavily-skewed dataset issue in long-tailed classification, prior efforts have sought to augment existing deep models with the elaborate class-balancing strategies, such as class rebalancing, data augmentation, and module improvement. Despite the encouraging performance, the limited class knowledge of the tailed classes in the training dataset still bottlenecks the performance of the existing deep models. In this paper, we propose an innovative long-tailed learning paradigm that breaks the bottleneck by guiding the learning of deep networks with external prior knowledge. This is specifically achieved by devising an elaborated ``prophetic'' teacher, termed as ``Propheter'', that aims to learn the potential class distributions. The target long-tailed prediction model is then optimized under the instruction of the well-trained ``Propheter'', such that the distributions of different classes are as distinguishable as possible from each other. Experiments on eight long-tailed benchmarks across three architectures demonstrate that the proposed prophetic paradigm acts as a promising solution to the challenge of limited class knowledge in long-tailed datasets. Our code and model can be found in the supplementary material.

Team DETR: Guide Queries as a Professional Team in Detection Transformers

Feb 28, 2023

Abstract:Recent proposed DETR variants have made tremendous progress in various scenarios due to their streamlined processes and remarkable performance. However, the learned queries usually explore the global context to generate the final set prediction, resulting in redundant burdens and unfaithful results. More specifically, a query is commonly responsible for objects of different scales and positions, which is a challenge for the query itself, and will cause spatial resource competition among queries. To alleviate this issue, we propose Team DETR, which leverages query collaboration and position constraints to embrace objects of interest more precisely. We also dynamically cater to each query member's prediction preference, offering the query better scale and spatial priors. In addition, the proposed Team DETR is flexible enough to be adapted to other existing DETR variants without increasing parameters and calculations. Extensive experiments on the COCO dataset show that Team DETR achieves remarkable gains, especially for small and large objects. Code is available at \url{https://github.com/horrible-dong/TeamDETR}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge