Li Duan

Birmingham City University

Detecting Misbehaviors of Large Vision-Language Models by Evidential Uncertainty Quantification

Feb 05, 2026

Abstract:Large vision-language models (LVLMs) have shown substantial advances in multimodal understanding and generation. However, when presented with incompetent or adversarial inputs, they frequently produce unreliable or even harmful content, such as fact hallucinations or dangerous instructions. This misalignment with human expectations, referred to as *misbehaviors* of LVLMs, raises serious concerns for deployment in critical applications. These misbehaviors are found to stem from epistemic uncertainty, specifically either conflicting internal knowledge or the absence of supporting information. However, existing uncertainty quantification methods, which typically capture only overall epistemic uncertainty, have shown limited effectiveness in identifying such issues. To address this gap, we propose Evidential Uncertainty Quantification (EUQ), a fine-grained method that captures both information conflict and ignorance for effective detection of LVLM misbehaviors. In particular, we interpret features from the model output head as either supporting (positive) or opposing (negative) evidence. Leveraging Evidence Theory, we model and aggregate this evidence to quantify internal conflict and knowledge gaps within a single forward pass. We extensively evaluate our method across four categories of misbehavior, including hallucinations, jailbreaks, adversarial vulnerabilities, and out-of-distribution (OOD) failures, using state-of-the-art LVLMs, and find that EUQ consistently outperforms strong baselines, showing that hallucinations correspond to high internal conflict and OOD failures to high ignorance. Furthermore, layer-wise evidential uncertainty dynamics analysis helps interpret the evolution of internal representations from a new perspective. The source code is available at https://github.com/HT86159/EUQ.
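To make the distinction between conflict and ignorance concrete, here is a minimal sketch of Dempster's rule of combination from Evidence Theory on a two-class frame. It is a textbook illustration, not the EUQ method itself: the class names, the mass values, and the `combine` helper are all assumptions chosen for the example, and the abstract does not specify how EUQ builds its evidence from the output head.

```python
from itertools import product

def combine(m1, m2):
    """Combine two mass functions with Dempster's rule.

    Each mass function maps a frozenset of classes to a mass in [0, 1].
    Returns the normalised combined masses and the conflict K, i.e. the
    total mass assigned to pairs of incompatible (disjoint) hypotheses.
    """
    joint = {}
    conflict = 0.0
    for (a, wa), (b, wb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            joint[inter] = joint.get(inter, 0.0) + wa * wb
        else:
            conflict += wa * wb
    if conflict >= 1.0:
        raise ValueError("sources are in total conflict")
    norm = 1.0 - conflict
    return {k: v / norm for k, v in joint.items()}, conflict

# Hypothetical frame of discernment with two classes.
THETA = frozenset({"cat", "dog"})
CAT = frozenset({"cat"})
DOG = frozenset({"dog"})

# Two confident sources that disagree: high conflict, little ignorance.
strong, k_strong = combine({CAT: 0.8, THETA: 0.2}, {DOG: 0.8, THETA: 0.2})

# Two weak sources: low conflict, most mass stays on the whole frame.
weak, k_weak = combine({CAT: 0.1, THETA: 0.9}, {DOG: 0.1, THETA: 0.9})

print(f"strong sources: conflict={k_strong:.2f}, ignorance={strong[THETA]:.2f}")
print(f"weak sources:   conflict={k_weak:.2f}, ignorance={weak[THETA]:.2f}")
```

In this sketch, conflict is the mass lost to contradictory evidence, while ignorance is the mass left on the whole frame after combination, so the two quantities can be read off separately from one aggregation step.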

Title block detection and information extraction for enhanced building drawings search

Apr 11, 2025

Abstract:The architecture, engineering, and construction (AEC) industry still heavily relies on information stored in drawings for building construction, maintenance, compliance and error checks. However, information extraction (IE) from building drawings is often time-consuming and costly, especially when dealing with historical buildings. Drawing search can be simplified by leveraging the information stored in the title block portion of the drawing, which can be seen as drawing metadata. However, title block IE can be complex, especially when dealing with historical drawings which do not follow existing standards for uniformity. This work performs a comparison of existing methods for this kind of IE task, and then proposes a novel title block detection and IE pipeline which outperforms existing methods, in particular when dealing with complex, noisy historical drawings. The pipeline combines a lightweight Convolutional Neural Network with GPT-4o: it detects building engineering title blocks with high accuracy, and then extracts structured drawing metadata from the title blocks, which can be used for drawing search, filtering and grouping. The work demonstrates high accuracy and efficiency in IE for both vector (CAD) and hand-drawn (historical) drawings. A user interface (UI) that leverages the extracted metadata for drawing search is established and deployed on real projects, which demonstrates significant time savings. Additionally, an extensible domain-expert-annotated dataset for title block detection is developed, via an efficient AEC-friendly annotation workflow that lays the foundation for future work.

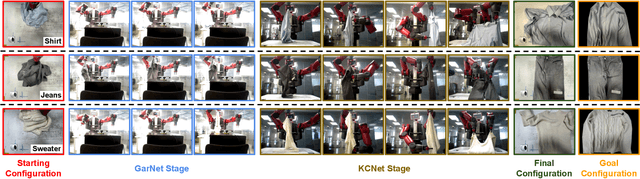

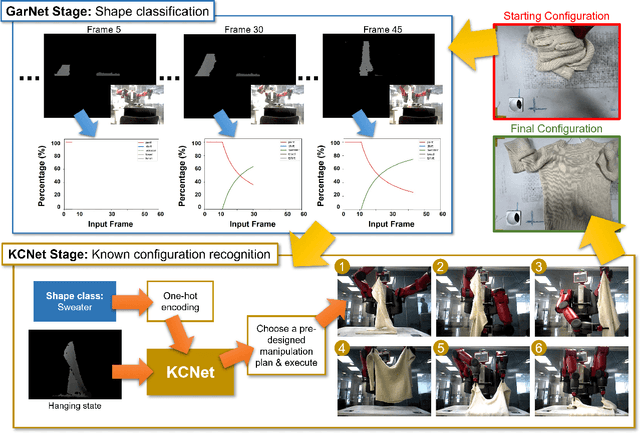

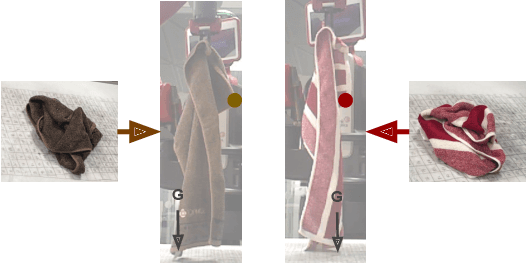

A Data-Centric Approach For Dual-Arm Robotic Garment Flattening

Aug 29, 2022

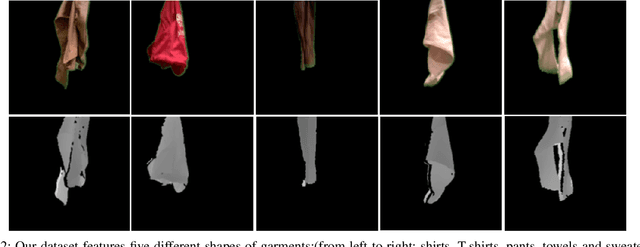

Abstract:Due to the high dimensionality of object states, a garment flattening pipeline requires recognising the configurations of garments for a robot to produce/select manipulation plans to flatten garments. In this paper, we propose a data-centric approach to identify known configurations of garments based on a known configuration network (KCNet) trained on depth images that capture the known configurations of garments and prior knowledge of garment shapes. The known configurations of garments are the configurations of garments when a robot hangs garments in mid-air. We found that it is possible to achieve 92% accuracy if we let the robot recognise the common hanging configurations (the known configurations) of garments. We also demonstrate an effective robot garment flattening pipeline with our proposed approach on a dual-arm Baxter robot. The robot achieved an average operating time of 221.6 seconds and successfully manipulated garments of five different shapes.

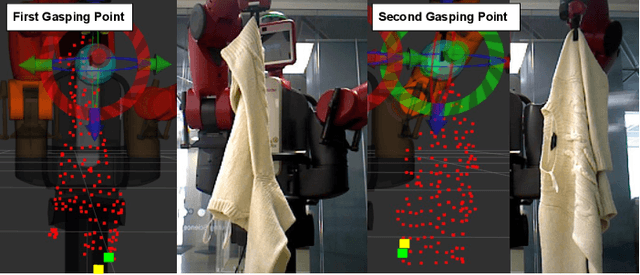

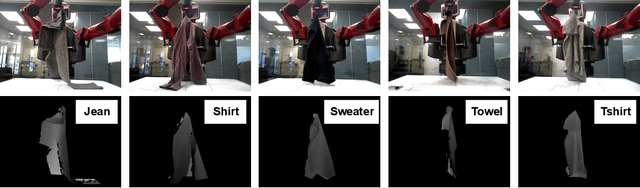

Recognising Known Configurations of Garments For Dual-Arm Robotic Flattening

Apr 30, 2022

Abstract:Robotic deformable-object manipulation is a challenge in the robotic industry because deformable objects have complex and varied object states. Predicting those object states and updating manipulation planning are time-consuming and computationally expensive. In this paper, we propose an effective robotic manipulation approach for recognising 'known configurations' of garments with a 'Known Configuration neural Network' (KCNet) and choosing pre-designed manipulation plans based on the recognised known configurations. Our robotic manipulation plan features a four-action strategy: finding two critical grasping points, stretching the garments, and lifting down the garments. We demonstrate that our approach only needs 98 seconds on average to flatten garments of five categories.

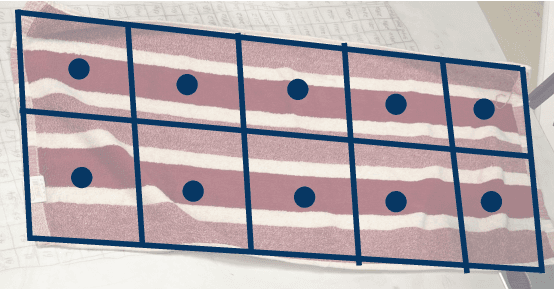

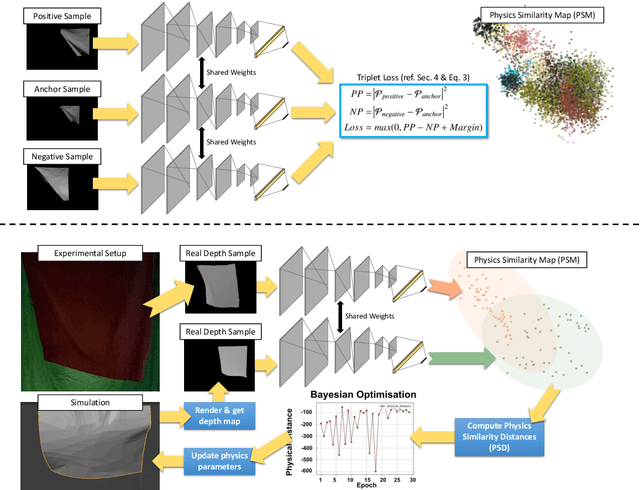

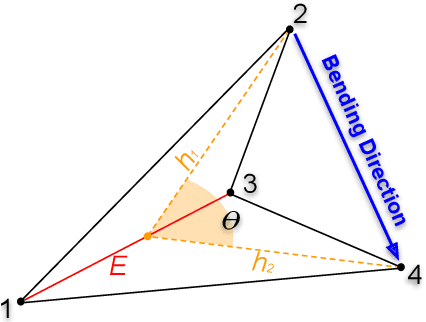

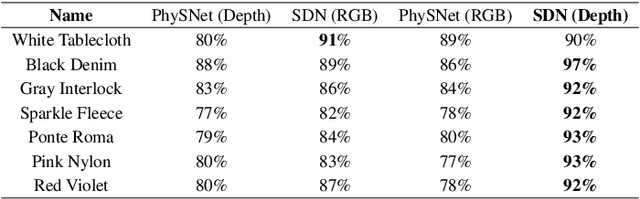

Learning Physics Properties of Fabrics and Garments with a Physics Similarity Neural Network

Dec 20, 2021

Abstract:In this paper, we propose to predict the physics parameters of real fabrics and garments by learning their physics similarities to simulated fabrics via a Physics Similarity Network (PhySNet). For this, we estimate wind speeds generated by an electric fan and the area weight to predict bending stiffness of simulated and real fabrics and garments. We found that PhySNet coupled with a Bayesian optimiser can predict physics parameters and improve on the state of the art by 34% for real fabrics and 68% for real garments.

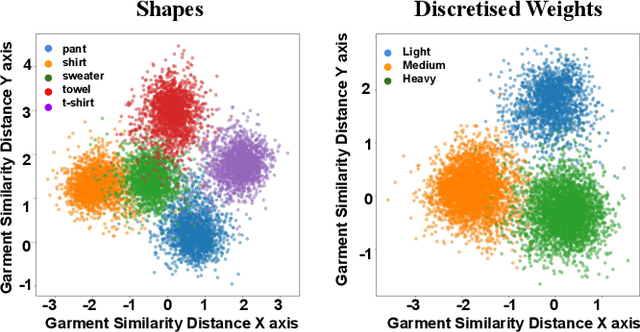

Garment Similarity Network (GarNet): A Continuous Perception Robotic Approach for Predicting Shapes and Visually Perceived Weights of Unseen Garments

Sep 16, 2021

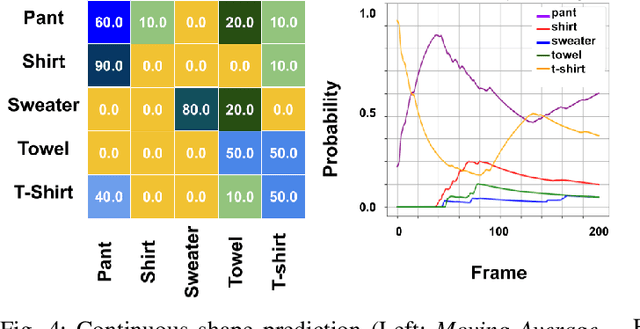

Abstract:We present in this paper a Garment Similarity Network (GarNet) that learns geometric and physical similarities between known garments by continuously observing a garment while a robot picks it up from a table. The aim is to capture and encode geometric and physical characteristics of a garment into a manifold where a decision can be carried out, such as predicting the garment's shape class and its visually perceived weight. Our approach features an early stop strategy, which means that GarNet does not need to observe the entire video sequence to make a prediction while maintaining high prediction accuracy. In our experiments, we find that GarNet achieves prediction accuracies of 98% for shape classification and 95% for predicting weights. We compare our approach with state-of-the-art methods, and we observe that our approach improves on them from 70.8% to 98% for shape classification.

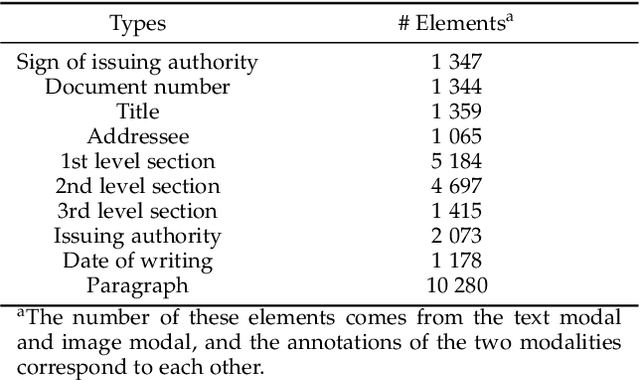

Metaknowledge Extraction Based on Multi-Modal Documents

Feb 05, 2021

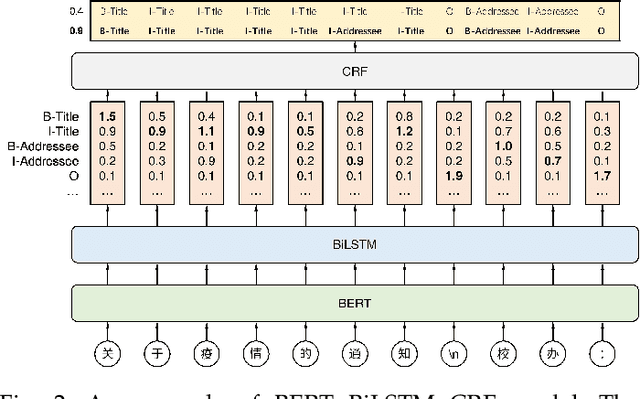

Abstract:The triple-based knowledge in large-scale knowledge bases often lacks structural logic, which makes it difficult to build a knowledge hierarchy. In this paper, we introduce the concept of metaknowledge to knowledge engineering research for the purpose of structural knowledge construction. To this end, the Metaknowledge Extraction Framework and Document Structure Tree model are presented to extract and organize metaknowledge elements (titles, authors, abstracts, sections, paragraphs, etc.), so that it is feasible to extract the structural knowledge from multi-modal documents. Experimental results demonstrate the effectiveness of metaknowledge element extraction by our framework. Meanwhile, detailed examples are given to demonstrate what exactly metaknowledge is and how to generate it. At the end of this paper, we propose and analyze the task flow of metaknowledge applications and the associations between knowledge and metaknowledge.

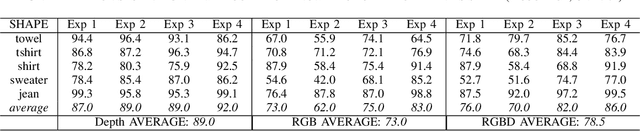

Continuous Perception for Classifying Shapes and Weights of Garments for Robotic Vision Applications

Nov 11, 2020

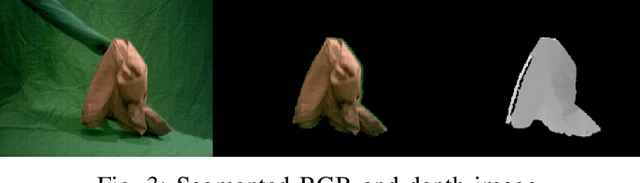

Abstract:We present an approach to continuous perception for robotic laundry tasks. Our assumption is that the visual prediction of a garment's shapes and weights is possible via a neural network that learns the dynamic changes of garments from video sequences. Continuous perception is leveraged during training by inputting consecutive frames, from which the network learns how a garment deforms. To evaluate our hypothesis, we captured a dataset of 40K RGB and 40K depth video sequences while a garment is being manipulated. We also conducted ablation studies to understand whether the neural network learns the physical and dynamic properties of garments. Our findings suggest that a modified AlexNet-LSTM architecture has the best classification performance for the garment's shape and weights. To further provide evidence that continuous perception facilitates the prediction of the garment's shapes and weights, we evaluated our network on unseen video sequences and computed the 'Moving Average' over a sequence of predictions. We found that our network has a classification accuracy of 48% and 60% for shapes and weights of garments, respectively.
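As an illustration of taking a moving average over a sequence of predictions, the sketch below smooths per-frame class probabilities with a sliding window before choosing a label. The window size, the probability values, and the function name `moving_average_predictions` are assumptions made for this example; the abstract does not state how the paper's 'Moving Average' is defined.

```python
from collections import deque

def moving_average_predictions(frame_probs, window=3):
    """Return one smoothed label per frame.

    frame_probs is a list of per-frame class-probability lists. For each
    frame, the probabilities of the most recent `window` frames are
    averaged and the class with the highest average is selected.
    """
    recent = deque(maxlen=window)
    labels = []
    for probs in frame_probs:
        recent.append(probs)
        n_classes = len(probs)
        avg = [sum(p[c] for p in recent) / len(recent) for c in range(n_classes)]
        labels.append(max(range(n_classes), key=avg.__getitem__))
    return labels

# Hypothetical per-frame outputs for two classes; frame 2 is an outlier.
frames = [[0.7, 0.3], [0.4, 0.6], [0.8, 0.2], [0.9, 0.1]]

per_frame = [max(range(len(p)), key=p.__getitem__) for p in frames]
smoothed = moving_average_predictions(frames, window=3)

print("per-frame labels:", per_frame)
print("smoothed labels: ", smoothed)
```

Averaging over a short window lets a single inconsistent frame be outvoted by its neighbours, which is one plausible reason to aggregate predictions across a video sequence rather than trusting each frame alone.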
