Continuous Perception for Classifying Shapes and Weights of Garmentsfor Robotic Vision Applications

Paper and Code

Nov 11, 2020

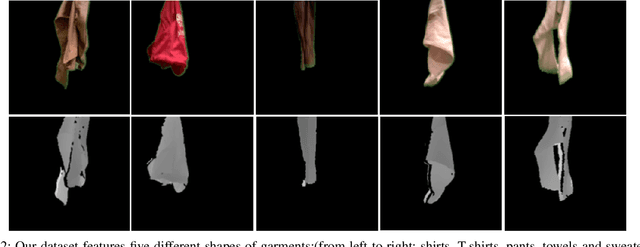

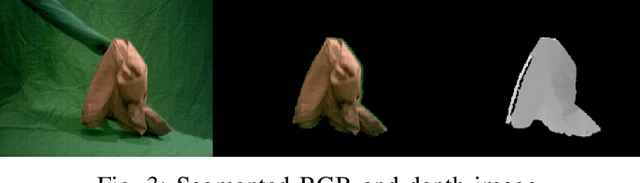

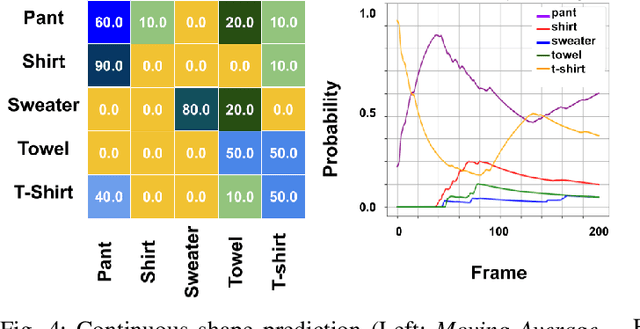

We present an approach to continuous perception for robotic laundry tasks. Our assumption is that the visual prediction of a garment's shapes and weights is possible via a neural network that learns the dynamic changes of garments from video sequences. Continuous perception is leveraged during training by inputting consecutive frames, of which the network learns how a garment deforms. To evaluate our hypothesis, we captured a dataset of 40K RGB and 40K depth video sequences while a garment is being manipulated. We also conducted ablation studies to understand whether the neural network learns the physical and dynamic properties of garments. Our findings suggest that a modified AlexNet-LSTM architecture has the best classification performance for the garment's shape and weights. To further provide evidence that continuous perception facilitates the prediction of the garment's shapes and weights, we evaluated our network on unseen video sequences and computed the 'Moving Average' over a sequence of predictions. We found that our network has a classification accuracy of 48% and 60% for shapes and weights of garments, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge