Lapo Frati

How Weight Resampling and Optimizers Shape the Dynamics of Continual Learning and Forgetting in Neural Networks

Jul 02, 2025

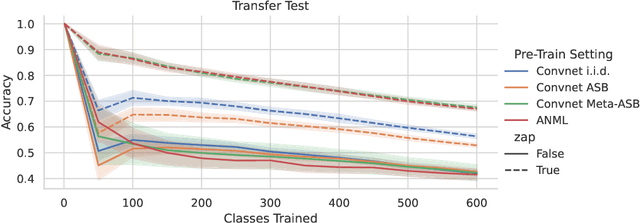

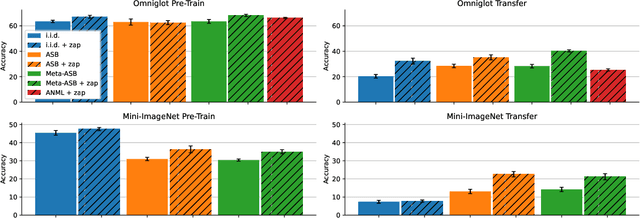

Abstract:Recent work in continual learning has highlighted the beneficial effect of resampling weights in the last layer of a neural network (``zapping"). Although empirical results demonstrate the effectiveness of this approach, the underlying mechanisms that drive these improvements remain unclear. In this work, we investigate in detail the pattern of learning and forgetting that take place inside a convolutional neural network when trained in challenging settings such as continual learning and few-shot transfer learning, with handwritten characters and natural images. Our experiments show that models that have undergone zapping during training more quickly recover from the shock of transferring to a new domain. Furthermore, to better observe the effect of continual learning in a multi-task setting we measure how each individual task is affected. This shows that, not only zapping, but the choice of optimizer can also deeply affect the dynamics of learning and forgetting, causing complex patterns of synergy/interference between tasks to emerge when the model learns sequentially at transfer time.

Reset It and Forget It: Relearning Last-Layer Weights Improves Continual and Transfer Learning

Oct 12, 2023

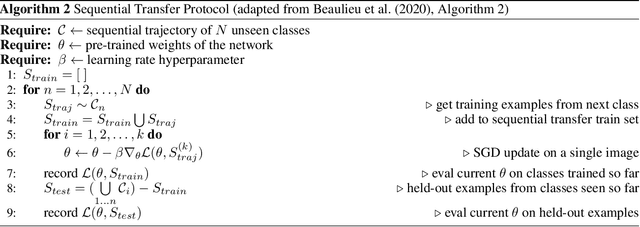

Abstract:This work identifies a simple pre-training mechanism that leads to representations exhibiting better continual and transfer learning. This mechanism -- the repeated resetting of weights in the last layer, which we nickname "zapping" -- was originally designed for a meta-continual-learning procedure, yet we show it is surprisingly applicable in many settings beyond both meta-learning and continual learning. In our experiments, we wish to transfer a pre-trained image classifier to a new set of classes, in a few shots. We show that our zapping procedure results in improved transfer accuracy and/or more rapid adaptation in both standard fine-tuning and continual learning settings, while being simple to implement and computationally efficient. In many cases, we achieve performance on par with state of the art meta-learning without needing the expensive higher-order gradients, by using a combination of zapping and sequential learning. An intuitive explanation for the effectiveness of this zapping procedure is that representations trained with repeated zapping learn features that are capable of rapidly adapting to newly initialized classifiers. Such an approach may be considered a computationally cheaper type of, or alternative to, meta-learning rapidly adaptable features with higher-order gradients. This adds to recent work on the usefulness of resetting neural network parameters during training, and invites further investigation of this mechanism.

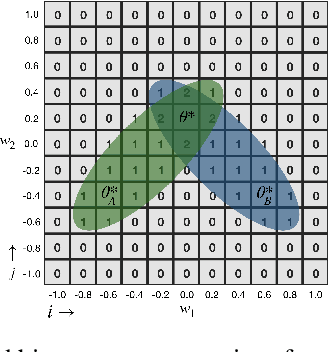

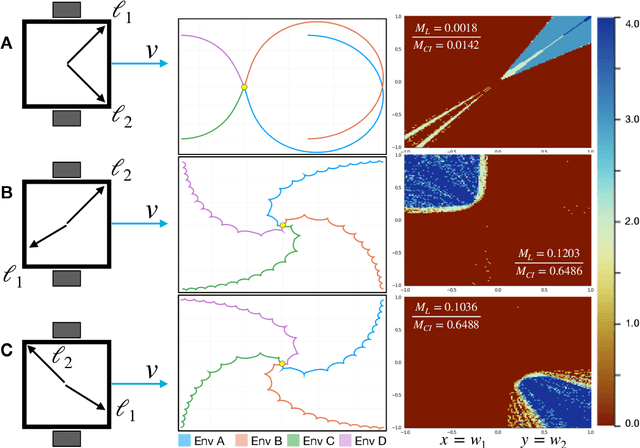

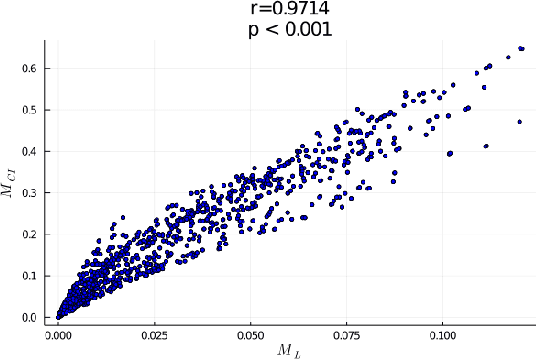

A good body is all you need: avoiding catastrophic interference via agent architecture search

Aug 20, 2021

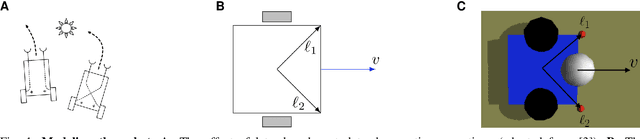

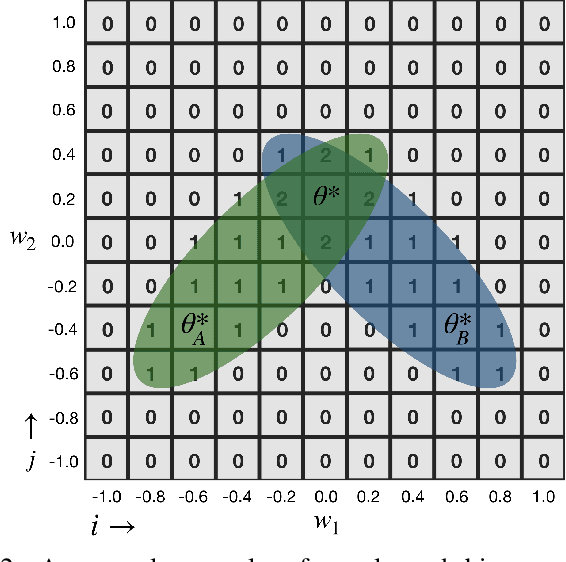

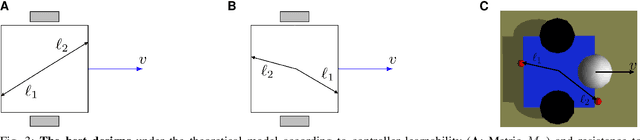

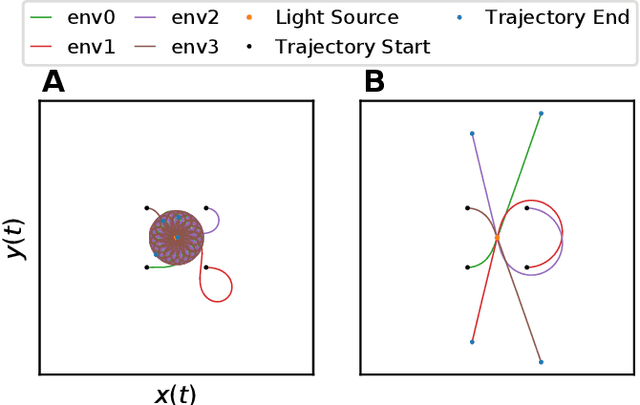

Abstract:In robotics, catastrophic interference continues to restrain policy training across environments. Efforts to combat catastrophic interference to date focus on novel neural architectures or training methods, with a recent emphasis on policies with good initial settings that facilitate training in new environments. However, none of these methods to date have taken into account how the physical architecture of the robot can obstruct or facilitate catastrophic interference, just as the choice of neural architecture can. In previous work we have shown how aspects of a robot's physical structure (specifically, sensor placement) can facilitate policy learning by increasing the fraction of optimal policies for a given physical structure. Here we show for the first time that this proxy measure of catastrophic interference correlates with sample efficiency across several search methods, proving that favorable loss landscapes can be induced by the correct choice of physical structure. We show that such structures can be found via co-optimization -- optimization of a robot's structure and control policy simultaneously -- yielding catastrophic interference resistant robot structures and policies, and that this is more efficient than control policy optimization alone. Finally, we show that such structures exhibit sensor homeostasis across environments and introduce this as the mechanism by which certain robots overcome catastrophic interference.

Learning to Continually Learn

Mar 04, 2020

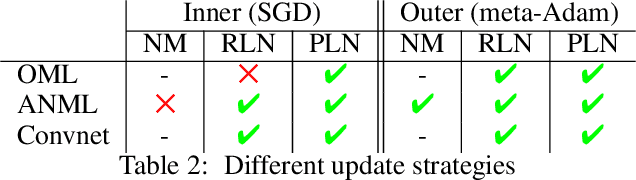

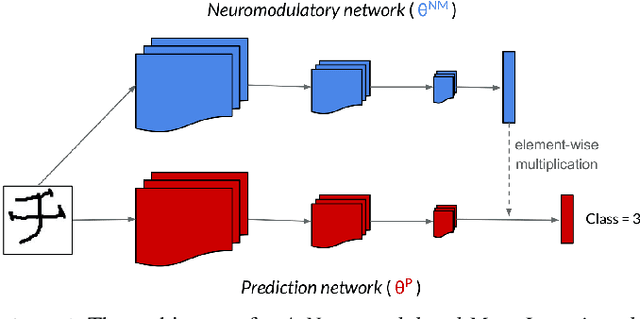

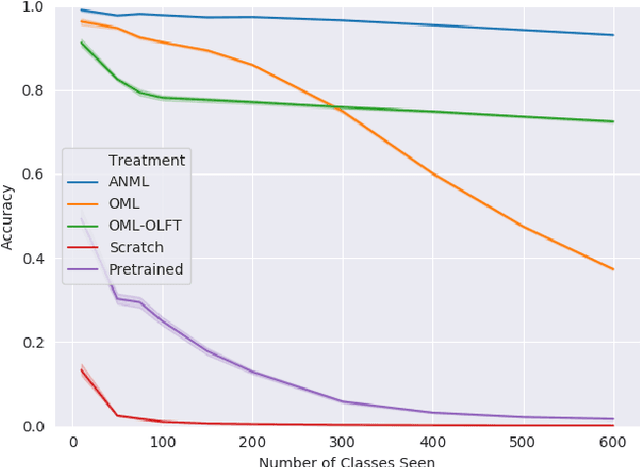

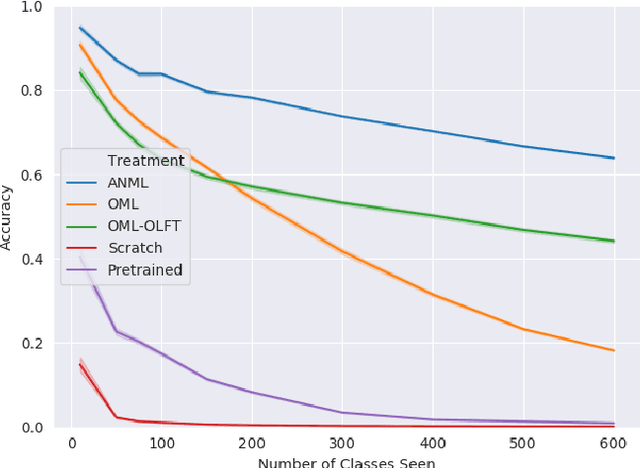

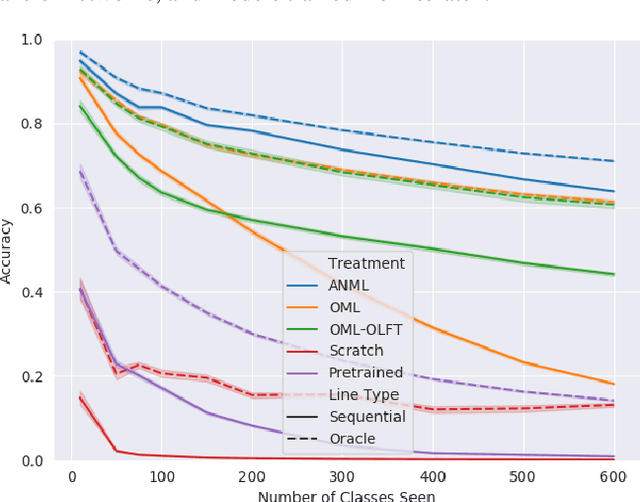

Abstract:Continual lifelong learning requires an agent or model to learn many sequentially ordered tasks, building on previous knowledge without catastrophically forgetting it. Much work has gone towards preventing the default tendency of machine learning models to catastrophically forget, yet virtually all such work involves manually-designed solutions to the problem. We instead advocate meta-learning a solution to catastrophic forgetting, allowing AI to learn to continually learn. Inspired by neuromodulatory processes in the brain, we propose A Neuromodulated Meta-Learning Algorithm (ANML). It differentiates through a sequential learning process to meta-learn an activation-gating function that enables context-dependent selective activation within a deep neural network. Specifically, a neuromodulatory (NM) neural network gates the forward pass of another (otherwise normal) neural network called the prediction learning network (PLN). The NM network also thus indirectly controls selective plasticity (i.e. the backward pass of) the PLN. ANML enables continual learning without catastrophic forgetting at scale: it produces state-of-the-art continual learning performance, sequentially learning as many as 600 classes (over 9,000 SGD updates).

Embodiment dictates learnability in neural controllers

Oct 15, 2019

Abstract:Catastrophic forgetting continues to severely restrict the learnability of controllers suitable for multiple task environments. Efforts to combat catastrophic forgetting reported in the literature to date have focused on how control systems can be updated more rapidly, hastening their adjustment from good initial settings to new environments, or more circumspectly, suppressing their ability to overfit to any one environment. When using robots, the environment includes the robot's own body, its shape and material properties, and how its actuators and sensors are distributed along its mechanical structure. Here we demonstrate for the first time how one such design decision (sensor placement) can alter the landscape of the loss function itself, either expanding or shrinking the weight manifolds containing suitable controllers for each individual task, thus increasing or decreasing their probability of overlap across tasks, and thus reducing or inducing the potential for catastrophic forgetting.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge