Kevin H. Huang

A High-dimensional Convergence Theorem for U-statistics with Applications to Kernel-based Testing

Feb 24, 2023

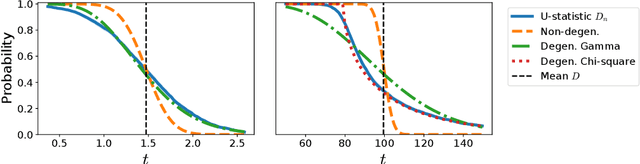

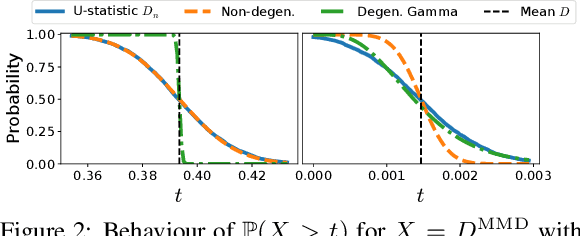

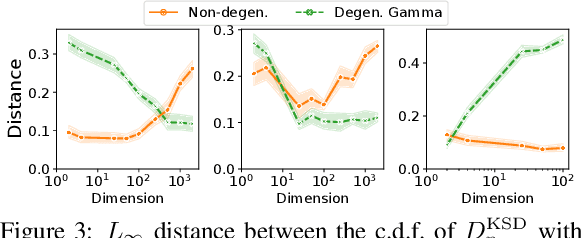

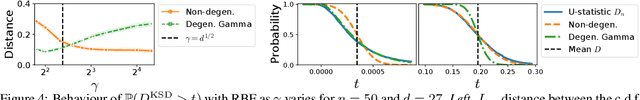

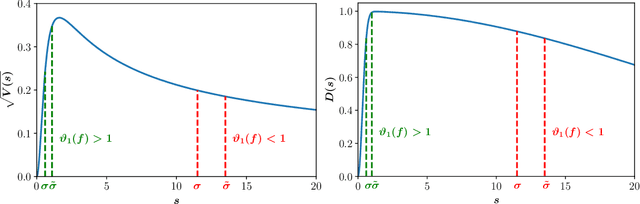

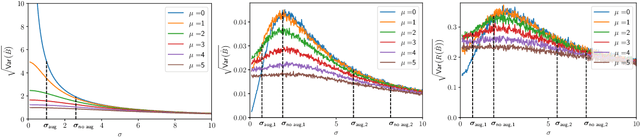

Abstract:We prove a convergence theorem for U-statistics of degree two, where the data dimension $d$ is allowed to scale with sample size $n$. We find that the limiting distribution of a U-statistic undergoes a phase transition from the non-degenerate Gaussian limit to the degenerate limit, regardless of its degeneracy and depending only on a moment ratio. A surprising consequence is that a non-degenerate U-statistic in high dimensions can have a non-Gaussian limit with a larger variance and asymmetric distribution. Our bounds are valid for any finite $n$ and $d$, independent of individual eigenvalues of the underlying function, and dimension-independent under a mild assumption. As an application, we apply our theory to two popular kernel-based distribution tests, MMD and KSD, whose high-dimensional performance has been challenging to study. In a simple empirical setting, our results correctly predict how the test power at a fixed threshold scales with $d$ and the bandwidth.

Quantifying the Effects of Data Augmentation

Feb 18, 2022

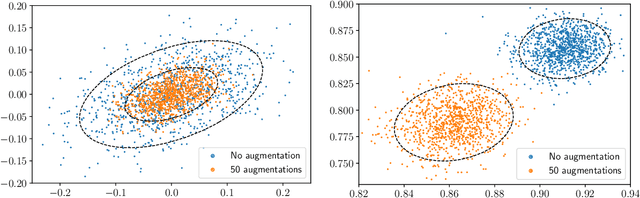

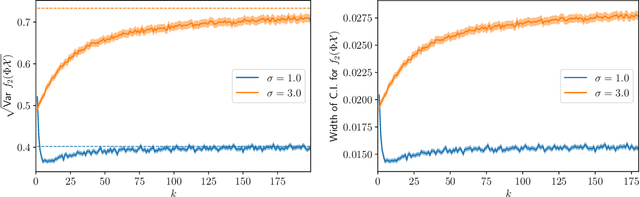

Abstract:We provide results that exactly quantify how data augmentation affects the convergence rate and variance of estimates. They lead to some unexpected findings: Contrary to common intuition, data augmentation may increase rather than decrease uncertainty of estimates, such as the empirical prediction risk. Our main theoretical tool is a limit theorem for functions of randomly transformed, high-dimensional random vectors. The proof draws on work in probability on noise stability of functions of many variables. The pathological behavior we identify is not a consequence of complex models, but can occur even in the simplest settings -- one of our examples is a linear ridge regressor with two parameters. On the other hand, our results also show that data augmentation can have real, quantifiable benefits.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge