Karan Bhukar

Scalable and Efficient Large-Scale Log Analysis with LLMs: An IT Software Support Case Study

Nov 17, 2025Abstract:IT environments typically have logging mechanisms to monitor system health and detect issues. However, the huge volume of generated logs makes manual inspection impractical, highlighting the importance of automated log analysis in IT Software Support. In this paper, we propose a log analytics tool that leverages Large Language Models (LLMs) for log data processing and issue diagnosis, enabling the generation of automated insights and summaries. We further present a novel approach for efficiently running LLMs on CPUs to process massive log volumes in minimal time without compromising output quality. We share the insights and lessons learned from deployment of the tool - in production since March 2024 - scaled across 70 software products, processing over 2000 tickets for issue diagnosis, achieving a time savings of 300+ man hours and an estimated $15,444 per month in manpower costs compared to the traditional log analysis practices.

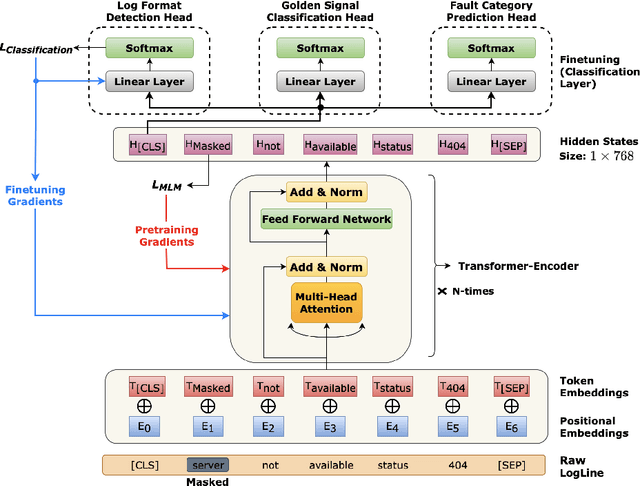

Learning Representations on Logs for AIOps

Aug 18, 2023

Abstract:AI for IT Operations (AIOps) is a powerful platform that Site Reliability Engineers (SREs) use to automate and streamline operational workflows with minimal human intervention. Automated log analysis is a critical task in AIOps as it provides key insights for SREs to identify and address ongoing faults. Tasks such as log format detection, log classification, and log parsing are key components of automated log analysis. Most of these tasks require supervised learning; however, there are multiple challenges due to limited labelled log data and the diverse nature of log data. Large Language Models (LLMs) such as BERT and GPT3 are trained using self-supervision on a vast amount of unlabeled data. These models provide generalized representations that can be effectively used for various downstream tasks with limited labelled data. Motivated by the success of LLMs in specific domains like science and biology, this paper introduces a LLM for log data which is trained on public and proprietary log data. The results of our experiments demonstrate that the proposed LLM outperforms existing models on multiple downstream tasks. In summary, AIOps powered by LLMs offers an efficient and effective solution for automating log analysis tasks and enabling SREs to focus on higher-level tasks. Our proposed LLM, trained on public and proprietary log data, offers superior performance on multiple downstream tasks, making it a valuable addition to the AIOps platform.

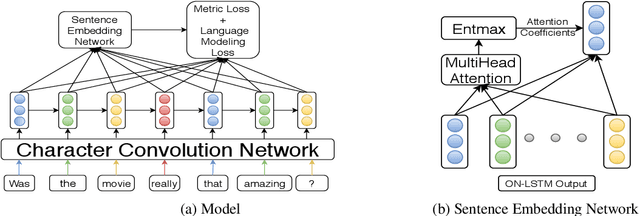

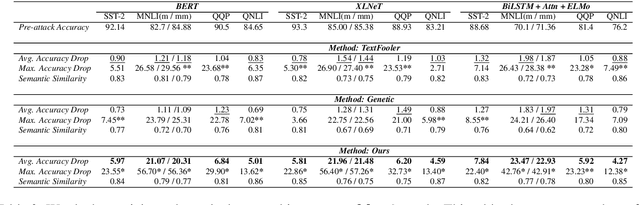

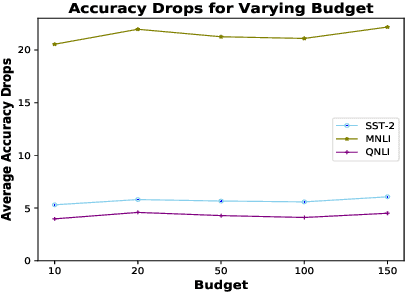

Target Model Agnostic Adversarial Attacks with Query Budgets on Language Understanding Models

Jun 13, 2021

Abstract:Despite significant improvements in natural language understanding models with the advent of models like BERT and XLNet, these neural-network based classifiers are vulnerable to blackbox adversarial attacks, where the attacker is only allowed to query the target model outputs. We add two more realistic restrictions on the attack methods, namely limiting the number of queries allowed (query budget) and crafting attacks that easily transfer across different pre-trained models (transferability), which render previous attack models impractical and ineffective. Here, we propose a target model agnostic adversarial attack method with a high degree of attack transferability across the attacked models. Our empirical studies show that in comparison to baseline methods, our method generates highly transferable adversarial sentences under the restriction of limited query budgets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge