Jung-Sun Lee

Distinct Theta Synchrony across Speech Modes: Perceived, Spoken, Whispered, and Imagined

Nov 11, 2025Abstract:Human speech production encompasses multiple modes such as perceived, overt, whispered, and imagined, each reflecting distinct neural mechanisms. Among these, theta-band synchrony has been closely associated with language processing, attentional control, and inner speech. However, previous studies have largely focused on a single mode, such as overt speech, and have rarely conducted an integrated comparison of theta synchrony across different speech modes. In this study, we analyzed differences in theta-band neural synchrony across speech modes based on connectivity metrics, focusing on region-wise variations. The results revealed that overt and whispered speech exhibited broader and stronger frontotemporal synchrony, reflecting active motor-phonological coupling during overt articulation, whereas perceived speech showed dominant posterior and temporal synchrony patterns, consistent with auditory perception and comprehension processes. In contrast, imagined speech demonstrated a more spatially confined but internally coherent synchronization pattern, primarily involving frontal and supplementary motor regions. These findings indicate that the extent and spatial distribution of theta synchrony differ substantially across modes, with overt articulation engaging widespread cortical interactions, whispered speech showing intermediate engagement, and perception relying predominantly on temporoparietal networks. Therefore, this study aims to elucidate the differences in theta-band neural synchrony across various speech modes, thereby uncovering both the shared and distinct neural dynamics underlying language perception and imagined speech.

Toward Robust EEG-based Intention Decoding during Misarticulated Speech in Aphasia

Nov 11, 2025Abstract:Aphasia severely limits verbal communication due to impaired language production, often leading to frequent misarticulations during speech attempts. Despite growing interest in brain-computer interface technologies, relatively little attention has been paid to developing EEG-based communication support systems tailored for aphasic patients. To address this gap, we recruited a single participant with expressive aphasia and conducted an Korean-based automatic speech task. EEG signals were recorded during task performance, and each trial was labeled as either correct or incorrect depending on whether the intended word was successfully spoken. Spectral analysis revealed distinct neural activation patterns between the two trial types: misarticulated trials exhibited excessive delta power across widespread channels and increased theta-alpha activity in frontal regions. Building upon these findings, we developed a soft multitask learning framework with maximum mean discrepancy regularization that focus on delta features to jointly optimize class discrimination while aligning the EEG feature distributions of correct and misarticulated trials. The proposed model achieved 58.6 % accuracy for correct and 45.5 % for misarticulated trials-outperforming the baseline by over 45 % on the latter-demonstrating robust intention decoding even under articulation errors. These results highlight the feasibility of EEG-based assistive systems capable of supporting real-world, imperfect speech conditions in aphasia patients.

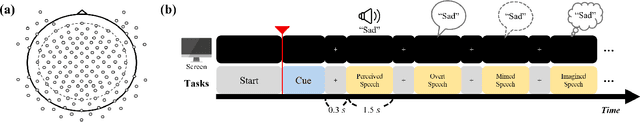

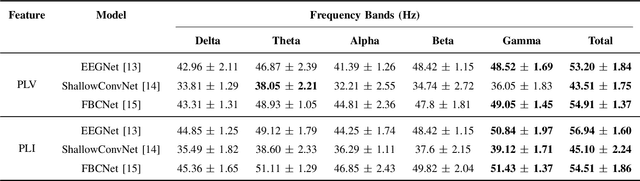

Towards Unified Neural Decoding of Perceived, Spoken and Imagined Speech from EEG Signals

Nov 14, 2024

Abstract:Brain signals accompany various information relevant to human actions and mental imagery, making them crucial to interpreting and understanding human intentions. Brain-computer interface technology leverages this brain activity to generate external commands for controlling the environment, offering critical advantages to individuals with paralysis or locked-in syndrome. Within the brain-computer interface domain, brain-to-speech research has gained attention, focusing on the direct synthesis of audible speech from brain signals. Most current studies decode speech from brain activity using invasive techniques and emphasize spoken speech data. However, humans express various speech states, and distinguishing these states through non-invasive approaches remains a significant yet challenging task. This research investigated the effectiveness of deep learning models for non-invasive-based neural signal decoding, with an emphasis on distinguishing between different speech paradigms, including perceived, overt, whispered, and imagined speech, across multiple frequency bands. The model utilizing the spatial conventional neural network module demonstrated superior performance compared to other models, especially in the gamma band. Additionally, imagined speech in the theta frequency band, where deep learning also showed strong effects, exhibited statistically significant differences compared to the other speech paradigms.

Enhanced Generative Adversarial Networks for Unseen Word Generation from EEG Signals

Nov 14, 2023Abstract:Recent advances in brain-computer interface (BCI) technology, particularly based on generative adversarial networks (GAN), have shown great promise for improving decoding performance for BCI. Within the realm of Brain-Computer Interfaces (BCI), GANs find application in addressing many areas. They serve as a valuable tool for data augmentation, which can solve the challenge of limited data availability, and synthesis, effectively expanding the dataset and creating novel data formats, thus enhancing the robustness and adaptability of BCI systems. Research in speech-related paradigms has significantly expanded, with a critical impact on the advancement of assistive technologies and communication support for individuals with speech impairments. In this study, GANs were investigated, particularly for the BCI field, and applied to generate text from EEG signals. The GANs could generalize all subjects and decode unseen words, indicating its ability to capture underlying speech patterns consistent across different individuals. The method has practical applications in neural signal-based speech recognition systems and communication aids for individuals with speech difficulties.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge