Josué Page Vizcaíno

Sparsity-based background removal for STORM super-resolution images

Jan 15, 2024

Abstract: Single-molecule localization microscopy techniques, like stochastic optical reconstruction microscopy (STORM), visualize biological specimens by stochastically exciting sparse blinking emitters. The raw images suffer from unwanted background fluorescence, which must be removed to achieve super-resolution. We introduce a sparsity-based background removal method by adapting a neural network (SLNet) from a different microscopy domain. The SLNet computes a low-rank representation of the images; subtracting it from the raw images yields the sparse component, which represents the frames without the background. We compared our approach with widely used background removal methods, such as median background removal and the rolling ball algorithm, on two commonly used STORM datasets: one glial cell dataset and one microtubule dataset. The SLNet delivers STORM frames with less background, leading to higher emitter localization precision and higher-resolution reconstructed images than commonly used methods. Notably, the SLNet is lightweight and easily trainable (<5 min). Since it is trained in an unsupervised manner, it requires no prior information and can be applied to any STORM dataset. We uploaded a pre-trained SLNet to the Bioimage model zoo, easily accessible through ImageJ. Our results show that our sparse decomposition method could be an essential and efficient STORM pre-processing tool.
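
The low-rank-plus-sparse idea in the abstract above can be illustrated with a minimal sketch. This is not the SLNet: it assumes a truncated SVD as a stand-in for the learned low-rank representation, and the function name `split_low_rank_sparse`, the `rank` parameter, and the synthetic frames are all hypothetical choices made for illustration.

```python
import numpy as np

def split_low_rank_sparse(frames, rank=1):
    """Split a (T, H, W) stack into a low-rank part and a sparse residual.

    Assumption: a truncated SVD stands in for the network's low-rank output.
    """
    t, h, w = frames.shape
    # Each frame becomes one row, so a static background is (close to) rank 1.
    matrix = frames.reshape(t, h * w).astype(np.float64)
    u, s, vt = np.linalg.svd(matrix, full_matrices=False)
    low_rank = (u[:, :rank] * s[:rank]) @ vt[:rank]
    # Subtract the low-rank part and keep only the positive residual.
    sparse = np.clip(matrix - low_rank, 0.0, None)
    return low_rank.reshape(t, h, w), sparse.reshape(t, h, w)

# Synthetic stack: a smooth static background plus one bright emitter per frame.
rng = np.random.default_rng(0)
background = 10.0 + np.linspace(0.0, 5.0, 16 * 16).reshape(16, 16)
frames = np.tile(background, (40, 1, 1))
for frame in frames:
    y, x = rng.integers(0, 16, size=2)
    frame[y, x] += 50.0

low_rank, sparse = split_low_rank_sparse(frames, rank=1)
print(sparse.shape)
```

In this toy setting the residual keeps the frame-specific emitters while the shared background is absorbed by the low-rank term; a real STORM stack would need a decomposition tuned to its noise and background dynamics.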

Fast light-field 3D microscopy with out-of-distribution detection and adaptation through Conditional Normalizing Flows

Jun 14, 2023

Abstract: Real-time 3D fluorescence microscopy is crucial for the spatiotemporal analysis of live organisms, such as neural activity monitoring. The eXtended field-of-view light field microscope (XLFM), also known as Fourier light field microscope, is a straightforward, single snapshot solution to achieve this. The XLFM acquires spatial-angular information in a single camera exposure. In a subsequent step, a 3D volume can be algorithmically reconstructed, making it exceptionally well-suited for real-time 3D acquisition and potential analysis. Unfortunately, traditional reconstruction methods (like deconvolution) require lengthy processing times (0.0220 Hz), hampering the speed advantages of the XLFM. Neural network architectures can overcome the speed constraints, but they lack certainty metrics, which renders them untrustworthy for the biomedical realm. This work proposes a novel architecture to perform fast 3D reconstructions of live immobilized zebrafish neural activity based on a conditional normalizing flow. It reconstructs volumes at 8 Hz spanning 512x512x96 voxels, and it can be trained in under two hours due to the small dataset requirements (10 image-volume pairs). Furthermore, normalizing flows allow for exact likelihood computation, enabling distribution monitoring, followed by out-of-distribution detection and retraining of the system when a novel sample is detected. We evaluate the proposed method using a cross-validation approach involving multiple in-distribution samples (genetically identical zebrafish) and various out-of-distribution ones.
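
The likelihood-based out-of-distribution check mentioned in the abstract above can be sketched as follows. This is only an illustration under strong assumptions: a per-dimension affine flow with a standard normal base replaces the conditional normalizing flow, and the names `AffineFlow` and `ood_threshold`, the 1% quantile, and the toy data are hypothetical.

```python
import numpy as np

class AffineFlow:
    """Toy flow z = (x - mean) / std with a standard normal base density.

    Assumption: stands in for a trained conditional normalizing flow.
    """

    def fit(self, x):
        self.mean = x.mean(axis=0)
        self.std = x.std(axis=0) + 1e-6  # avoid division by zero
        return self

    def log_likelihood(self, x):
        z = (x - self.mean) / self.std
        # Log-density of z under N(0, I), summed over dimensions.
        log_base = -0.5 * (z ** 2 + np.log(2.0 * np.pi)).sum(axis=1)
        # Change of variables: log|det dz/dx| = -sum(log std).
        log_det = -np.log(self.std).sum()
        return log_base + log_det

def ood_threshold(log_likelihoods, quantile=0.01):
    """Likelihood below which a sample is flagged as out-of-distribution."""
    return np.quantile(log_likelihoods, quantile)

rng = np.random.default_rng(0)
train = rng.normal(0.0, 1.0, size=(1000, 4))   # in-distribution samples
flow = AffineFlow().fit(train)
threshold = ood_threshold(flow.log_likelihood(train), quantile=0.01)

novel = np.full((1, 4), 10.0)                  # far from the training data
is_ood = flow.log_likelihood(novel) < threshold
print(is_ood)
```

A flagged sample would then be a candidate for retraining, as the abstract describes; how the threshold is chosen in practice is a design decision this sketch does not settle.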

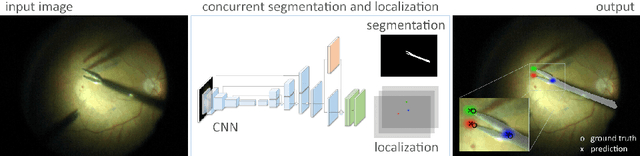

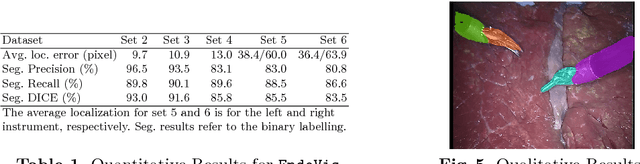

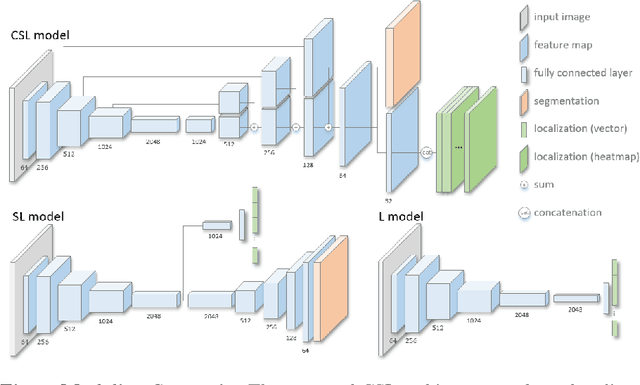

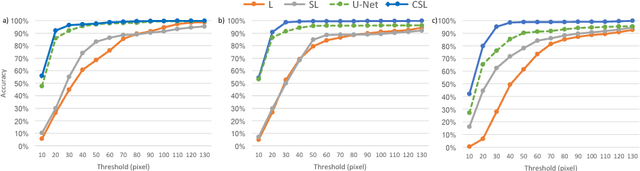

Concurrent Segmentation and Localization for Tracking of Surgical Instruments

Aug 01, 2017

Abstract: Real-time instrument tracking is a crucial requirement for various computer-assisted interventions. In order to overcome problems such as specular reflections and motion blur, we propose a novel method that takes advantage of the interdependency between localization and segmentation of the surgical tool. In particular, we reformulate the 2D instrument pose estimation as heatmap regression and thereby enable a concurrent, robust and near real-time regression of both tasks via deep learning. As demonstrated by our experimental results, this modeling leads to significantly better performance than directly regressing the tool position and allows our method to outperform the state of the art on a Retinal Microsurgery benchmark and the MICCAI EndoVis Challenge 2015.
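
Recasting 2D position estimation as heatmap regression, as described in the abstract above, can be sketched like this. The sketch covers only the target encoding and decoding, not the network; the Gaussian target, the `sigma` value, and the helper names `keypoint_to_heatmap` and `heatmap_to_keypoint` are assumptions for illustration.

```python
import numpy as np

def keypoint_to_heatmap(x, y, height, width, sigma=2.0):
    """Encode a 2D point as a Gaussian heatmap that peaks at (x, y)."""
    ys, xs = np.mgrid[0:height, 0:width]
    return np.exp(-((xs - x) ** 2 + (ys - y) ** 2) / (2.0 * sigma ** 2))

def heatmap_to_keypoint(heatmap):
    """Decode a heatmap back to (x, y) by taking its maximum."""
    row, col = np.unravel_index(np.argmax(heatmap), heatmap.shape)
    return int(col), int(row)

# A network would regress `target` pixel-wise instead of predicting (x, y) directly.
target = keypoint_to_heatmap(x=12, y=7, height=32, width=48)
print(heatmap_to_keypoint(target))
```

Because the target is a dense image, it has the same spatial layout as a segmentation mask, which is what makes a shared, concurrent prediction of both outputs natural.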
