Joris Renkens

Lazy Explanation-Based Approximation for Probabilistic Logic Programming

Jul 10, 2015

Abstract: We introduce a lazy approach to the explanation-based approximation of probabilistic logic programs. It uses only the most significant part of the program when searching for explanations. The result is a fast, anytime approximate inference algorithm that returns hard lower and upper bounds on the exact probability. We experimentally show that this method outperforms state-of-the-art approximate inference.
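To illustrate how a set of explanations can yield hard lower and upper bounds, here is a minimal Python sketch. It is not the paper's algorithm: the lazy selection of the most significant program part is not modelled, and the toy facts `a`, `b`, `c`, the query `q = a or (b and c)`, and the helper names are all assumptions made for illustration. The idea shown is only that any subset of explanations of the query bounds its probability from below, while any subset of explanations of its negation bounds it from above.

```python
from itertools import product

# Hypothetical probabilistic facts (assumed for illustration only).
weights = {"a": 0.3, "b": 0.4, "c": 0.5}

def dnf_probability(explanations, weights):
    """Probability that at least one explanation holds (brute-force enumeration)."""
    names = list(weights)
    total = 0.0
    for values in product([True, False], repeat=len(names)):
        world = dict(zip(names, values))
        # An explanation is a partial assignment; it holds if the world agrees with it.
        if any(all(world[f] == v for f, v in e.items()) for e in explanations):
            w = 1.0
            for n in names:
                w *= weights[n] if world[n] else 1.0 - weights[n]
            total += w
    return total

def bounds(pos_explanations, neg_explanations, weights):
    """Lower bound from explanations of q, upper bound from explanations of not q."""
    lower = dnf_probability(pos_explanations, weights)
    upper = 1.0 - dnf_probability(neg_explanations, weights)
    return lower, upper

# Explanations of q = a or (b and c), and of its negation.
pos = [{"a": True}, {"b": True, "c": True}]
neg = [{"a": False, "b": False}, {"a": False, "c": False}]

# Anytime behaviour: the interval tightens as more explanations are added.
partial = bounds(pos[:1], neg[:1], weights)
full = bounds(pos, neg, weights)
```

With only the first explanation on each side the interval is wide; once all explanations are included, both bounds coincide with the exact probability of the toy query.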

Inference and learning in probabilistic logic programs using weighted Boolean formulas

Apr 25, 2013

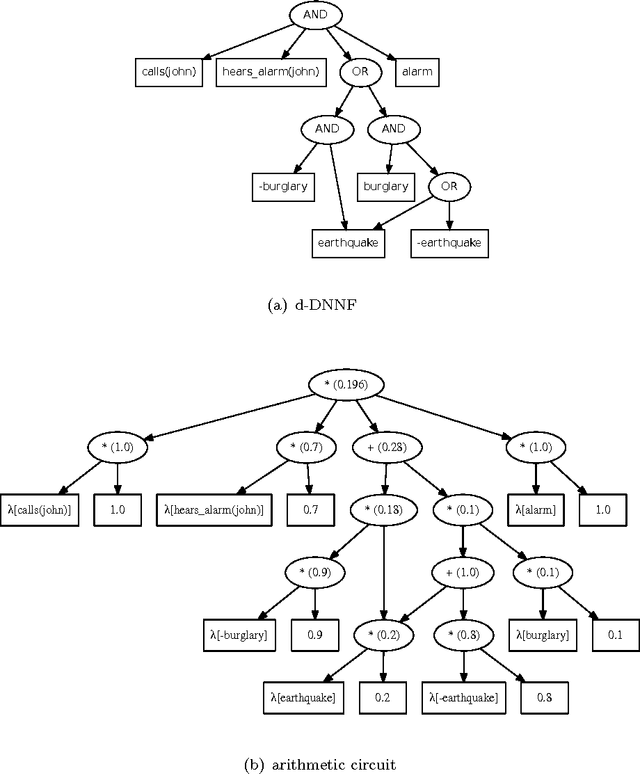

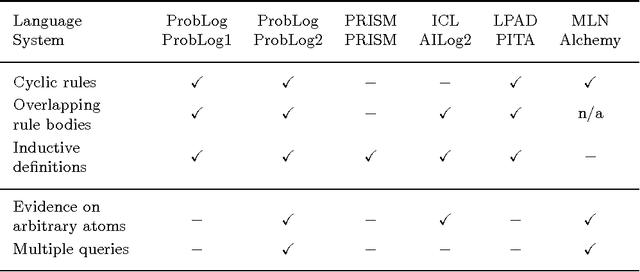

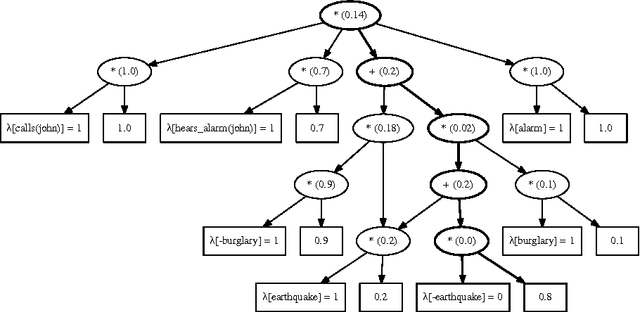

Abstract: Probabilistic logic programs are logic programs in which some of the facts are annotated with probabilities. This paper investigates how classical inference and learning tasks known from the graphical model community can be tackled for probabilistic logic programs. Several of these tasks, such as computing the marginals given evidence and learning from (partial) interpretations, have not really been addressed for probabilistic logic programs before. The first contribution of this paper is a suite of efficient algorithms for various inference tasks. The suite is based on a conversion of the program, the queries, and the evidence to a weighted Boolean formula. This allows us to reduce the inference tasks to well-studied tasks such as weighted model counting, which can be solved using state-of-the-art methods known from the graphical model and knowledge compilation literature. The second contribution is an algorithm for parameter estimation in the learning from interpretations setting. The algorithm employs expectation maximization and is built on top of the developed inference algorithms. The proposed approach is experimentally evaluated. The results show that the inference algorithms improve upon the state of the art in probabilistic logic programming and that it is indeed possible to learn the parameters of a probabilistic logic program from interpretations.

* To appear in Theory and Practice of Logic Programming (TPLP)
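The reduction to weighted model counting described in the abstract can be sketched on a toy example. The Python below is an assumption-laden illustration, not the paper's implementation: the two-fact program (`burglary`, `earthquake`, with `alarm` true when either holds), the hand-written Boolean formula, and the brute-force enumeration are all hypothetical stand-ins for the conversion and the knowledge-compilation methods the paper relies on.

```python
from itertools import product

# Hypothetical probabilistic facts: each variable's weight when true;
# the weight when false is taken to be 1 - p.
weights = {"burglary": 0.1, "earthquake": 0.2}

def alarm(world):
    # Assumed Boolean formula for the query: alarm <-> burglary or earthquake.
    return world["burglary"] or world["earthquake"]

def weighted_model_count(formula, weights):
    """Sum the weights of all truth assignments that satisfy the formula."""
    names = list(weights)
    total = 0.0
    for values in product([True, False], repeat=len(names)):
        world = dict(zip(names, values))
        if formula(world):
            w = 1.0
            for n in names:
                w *= weights[n] if world[n] else 1.0 - weights[n]
            total += w
    return total

# Marginal of the query: WMC of the query formula.
p_alarm = weighted_model_count(alarm, weights)

# Marginal given evidence: WMC(query and evidence) / WMC(evidence).
p_burglary_given_alarm = (
    weighted_model_count(lambda w: w["burglary"] and alarm(w), weights) / p_alarm
)
```

Enumeration is exponential in the number of facts, which is why it only serves as an illustration here; the abstract points to dedicated weighted model counting and knowledge compilation methods for practical inference.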
