Joanne Hoffman

2D View Aggregation for Lymph Node Detection Using a Shallow Hierarchy of Linear Classifiers

Aug 14, 2014

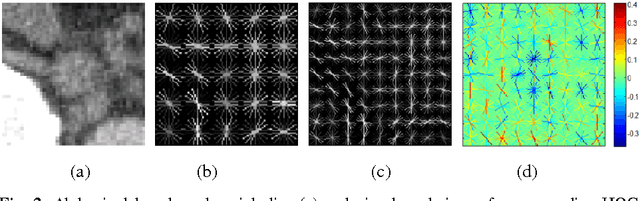

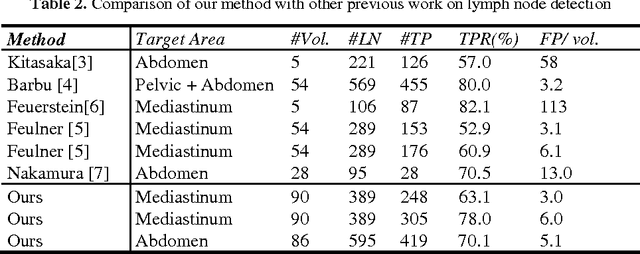

Abstract: Enlarged lymph nodes (LNs) can provide important information for cancer diagnosis, staging, and measuring treatment response, making automated detection a highly sought goal. In this paper, we propose a new representation that decomposes the LN detection problem into a set of 2D object detection subtasks on sampled CT slices, largely alleviating the curse of dimensionality. Our 2D detection can be effectively formulated as linear classification on a single image feature type, Histogram of Oriented Gradients (HOG), covering a moderate field of view of 45 by 45 voxels. We exploit both simple pooling and sparse linear fusion schemes to aggregate these 2D detection scores for the final 3D LN detection. In this manner, 2D detection is more tractable and does not need to perform perfectly at the instance level (the 2D detections act as weak hypotheses), since our aggregation process robustly harnesses collective information for LN detection. Two datasets (90 patients with 389 mediastinal LNs and 86 patients with 595 abdominal LNs) are used for validation. Cross-validation demonstrates 78.0% sensitivity at 6 false positives per volume (FP/vol.) (86.1% at 10 FP/vol.) for the mediastinal dataset and 73.1% sensitivity at 6 FP/vol. (87.2% at 10 FP/vol.) for the abdominal dataset. Our results compare favorably to previous state-of-the-art methods.
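The aggregation step described in the abstract can be illustrated with a minimal sketch. It assumes each 3D candidate already has a list of 2D detection scores, one per sampled slice. The function names, the example scores, and the fusion weights are hypothetical and are not taken from the paper; in particular, the paper's sparse linear fusion learns its weights, whereas here they are simply supplied by hand.

```python
from statistics import mean


def pool_scores(slice_scores, method="mean"):
    """Simple pooling of per-slice 2D scores for one 3D candidate.

    `slice_scores` is a hypothetical list of linear-classifier outputs,
    one per sampled 2D slice of the candidate.
    """
    if not slice_scores:
        raise ValueError("need at least one 2D score")
    if method == "mean":
        return mean(slice_scores)
    if method == "max":
        return max(slice_scores)
    raise ValueError(f"unknown pooling method: {method}")


def linear_fusion(slice_scores, weights, bias=0.0):
    """Weighted sum of 2D scores.

    A sparse fusion would have many weights equal to zero; the weights
    here are illustrative placeholders, not learned values.
    """
    if len(slice_scores) != len(weights):
        raise ValueError("scores and weights must have the same length")
    return bias + sum(w * s for w, s in zip(weights, slice_scores))


if __name__ == "__main__":
    scores = [0.2, 0.4, 0.9]  # made-up scores for three slices
    print(pool_scores(scores, "mean"))
    print(pool_scores(scores, "max"))
    print(linear_fusion(scores, [0.5, 0.0, 1.0]))
```

Because the final decision is made on the pooled or fused value, an individual slice can score poorly without causing the candidate to be missed, which is the sense in which the 2D detections act as weak hypotheses.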

A New 2.5D Representation for Lymph Node Detection using Random Sets of Deep Convolutional Neural Network Observations

Jun 06, 2014

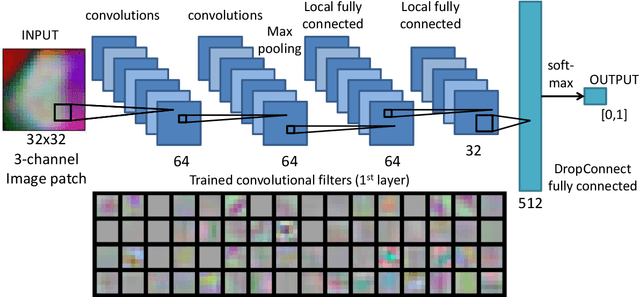

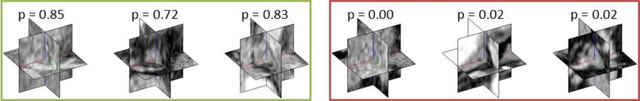

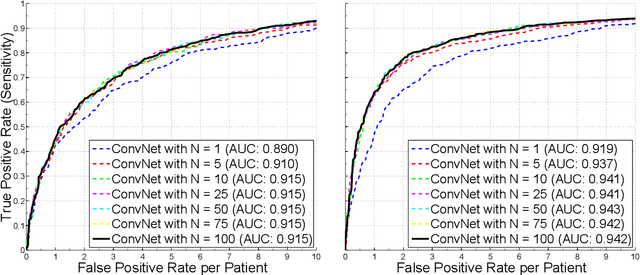

Abstract: Automated lymph node (LN) detection is an important clinical diagnostic task, but it is very challenging due to the low contrast between LNs and surrounding structures in Computed Tomography (CT) and to the varying sizes, poses, shapes, and sparsely distributed locations of LNs. State-of-the-art studies report performance in the range of 52.9% sensitivity at 3.1 false positives per volume (FP/vol.), or 60.9% at 6.1 FP/vol., for mediastinal LNs, using one-shot boosting on 3D HAAR features. In this paper, we first run a preliminary candidate generation stage that aims for close to 100% sensitivity at the cost of high FP levels (40 per patient) to harvest volumes of interest (VOIs). Our 2.5D approach then decomposes any 3D VOI by resampling 2D reformatted orthogonal views N times, via scaling, random translations, and rotations with respect to the VOI centroid coordinates. These random views are used to train a deep Convolutional Neural Network (CNN) classifier. In testing, the CNN assigns LN probabilities to all N random views, which are simply averaged (as a set) to compute the final classification probability per VOI. We validate the approach on two datasets: 90 CT volumes with 388 mediastinal LNs and 86 patients with 595 abdominal LNs. We achieve sensitivities of 70%/83% at 3 FP/vol. and 84%/90% at 6 FP/vol. in the mediastinum and abdomen respectively, which drastically improves over the previous state-of-the-art work.
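The test-time procedure in the abstract (sample N random views per VOI, score each with the CNN, average the probabilities) can be sketched roughly as follows. This is only an illustration under stated assumptions: the parameter ranges, the function names, and the example probabilities are hypothetical, and the CNN itself is not modeled.

```python
import random
from statistics import mean


def sample_view_params(n_views, max_shift=3.0, scales=(30.0, 45.0), seed=None):
    """Draw random scale, translation, and rotation settings for N views.

    All ranges are placeholder assumptions, not values from the paper.
    Translations are offsets from the VOI centroid.
    """
    rng = random.Random(seed)
    params = []
    for _ in range(n_views):
        params.append({
            "scale": rng.choice(scales),
            "shift": tuple(rng.uniform(-max_shift, max_shift) for _ in range(3)),
            "angle_deg": rng.uniform(0.0, 360.0),
        })
    return params


def voi_probability(view_probs):
    """Average the per-view LN probabilities to get one score per VOI."""
    if not view_probs:
        raise ValueError("need at least one view probability")
    return mean(view_probs)


if __name__ == "__main__":
    views = sample_view_params(n_views=5, seed=0)
    print(len(views), "sampled view settings")
    # Made-up CNN outputs for three views of one VOI.
    print(voi_probability([0.9, 0.7, 0.8]))
```

Averaging over the set of views means the final VOI score does not depend on any single view being classified correctly.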

* This article will be presented at MICCAI (Medical Image Computing and Computer-Assisted Intervention) 2014
