João Barbosa Breda

Automatic Segmentation of the Optic Nerve Head Region in Optical Coherence Tomography: A Methodological Review

Sep 06, 2021

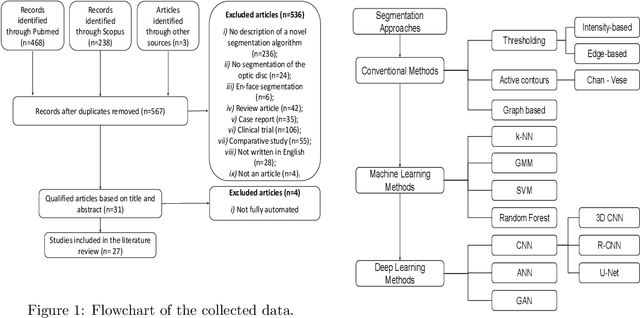

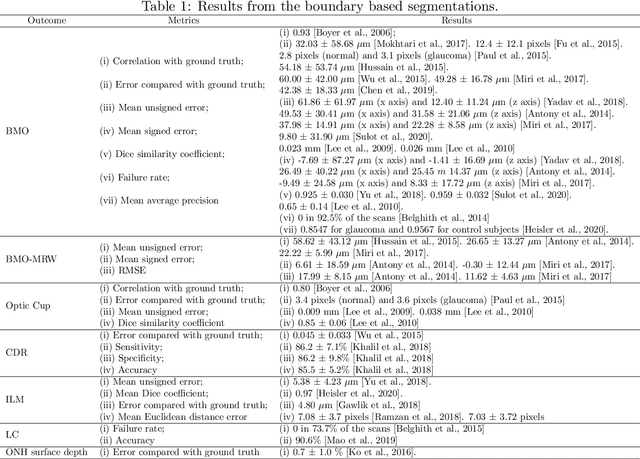

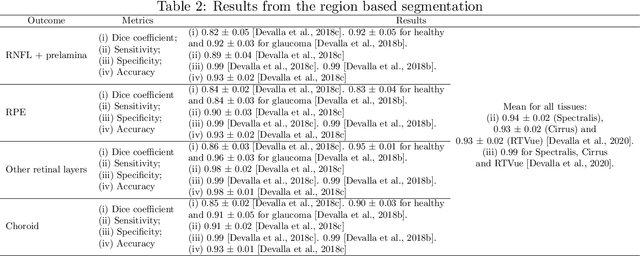

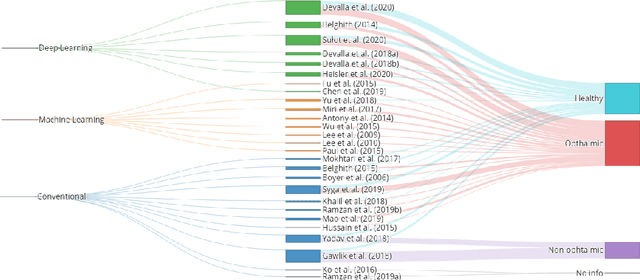

Abstract: The optic nerve head (ONH) is the intraocular section of the optic nerve and is prone to damage by intraocular pressure. The advent of optical coherence tomography (OCT) has enabled the evaluation of novel ONH parameters, namely the depth and curvature of the lamina cribrosa (LC). Together with the Bruch's membrane opening minimum rim width, these appear to be promising ONH parameters for the diagnosis and monitoring of retinal diseases such as glaucoma. Nonetheless, these OCT-derived biomarkers are mostly extracted through manual segmentation, which is time-consuming and prone to bias, limiting their usability in clinical practice. Automatic segmentation of the ONH in OCT scans could further improve the current clinical management of glaucoma and other diseases. This review summarizes the current state of the art in automatic segmentation of the ONH in OCT. A systematic review was performed using PubMed and Scopus. Additional works from other databases (IEEE, Google Scholar and ARVO IOVS) were also included, resulting in a total of 27 reviewed studies. For each algorithm, the methods, the size and type of dataset used for validation, and the respective results were carefully analyzed. The results show that deep learning-based algorithms provide the highest accuracy, sensitivity and specificity for segmenting the different structures of the ONH, including the LC. However, a lack of consensus regarding the definition of segmented regions, extracted parameters and validation approaches was observed, highlighting the need for standardized methodologies for ONH segmentation.
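The accuracy, sensitivity and specificity mentioned in the abstract are commonly computed pixel-wise from a predicted and a reference binary mask. The following is a minimal sketch of that calculation, not the evaluation protocol of any reviewed study; the function name `segmentation_metrics` is hypothetical, and it assumes that both foreground and background pixels are present in the reference mask.

```python
import numpy as np

def segmentation_metrics(pred, truth):
    """Pixel-wise accuracy, sensitivity and specificity for binary masks.

    Assumption: `truth` contains at least one foreground and one
    background pixel, so neither denominator is zero.
    """
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)

    tp = np.sum(pred & truth)      # foreground predicted as foreground
    tn = np.sum(~pred & ~truth)    # background predicted as background
    fp = np.sum(pred & ~truth)     # background predicted as foreground
    fn = np.sum(~pred & truth)     # foreground predicted as background

    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }

# Toy 2x2 example (hypothetical values, not data from any study).
metrics = segmentation_metrics([[1, 1], [0, 0]], [[1, 0], [0, 0]])
print(metrics)
```

Because the reviewed studies differ in how they define segmented regions, numbers computed this way are only comparable when the reference masks follow the same definition.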

Pointwise visual field estimation from optical coherence tomography in glaucoma: a structure-function analysis using deep learning

Jun 07, 2021

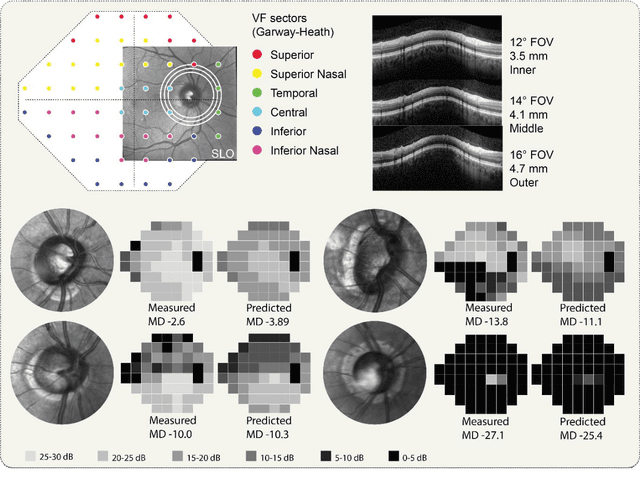

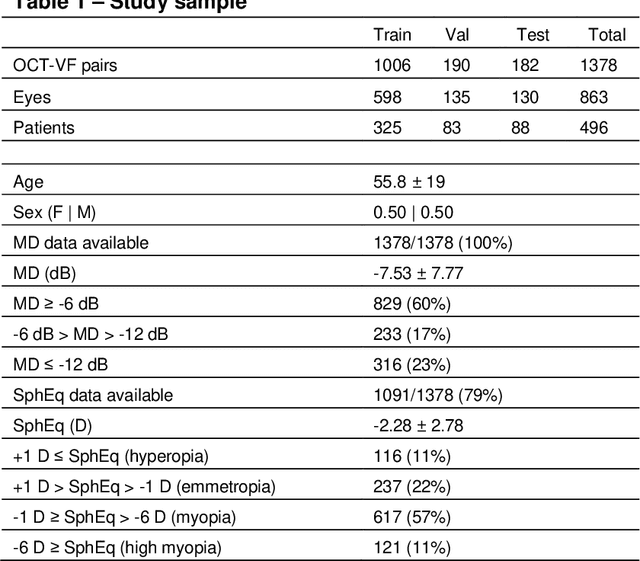

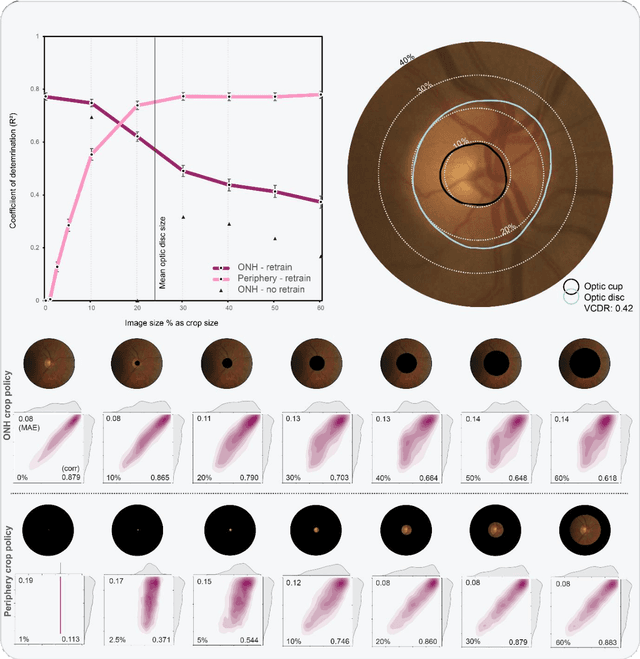

Abstract: Background/Aims: Standard Automated Perimetry (SAP) is the gold standard for monitoring visual field (VF) loss in glaucoma management, but it is prone to intra-subject variability. We developed and validated a deep learning (DL) regression model that estimates pointwise and overall VF loss from unsegmented optical coherence tomography (OCT) scans. Methods: Eight DL regression models were trained with various retinal imaging modalities: circumpapillary OCT at 3.5 mm, 4.1 mm and 4.7 mm diameter, and scanning laser ophthalmoscopy (SLO) en face images, to estimate mean deviation (MD) and 52 threshold values. This retrospective study used data from patients who underwent a complete glaucoma examination, including a reliable Humphrey Field Analyzer (HFA) 24-2 SITA Standard VF exam and a SPECTRALIS OCT scan using the Glaucoma Module Premium Edition. Results: A total of 1378 matched OCT-VF pairs from 496 patients (863 eyes) were included for training and evaluation of the DL models. Average sample MD was -7.53 dB (range -33.8 dB to +2.0 dB). For estimation of the 52 VF threshold values, the circumpapillary OCT scan with the largest diameter (4.7 mm) achieved the best performance among all individual models (Pearson r=0.77, 95% CI=[0.72-0.82]). For MD, prediction averaging of OCT-trained models (3.5 mm, 4.1 mm, 4.7 mm) resulted in a Pearson r of 0.78 [0.73-0.83] on the validation set and comparable performance on the test set (Pearson r=0.79 [0.75-0.82]). Conclusion: DL on unsegmented OCT scans accurately predicts pointwise threshold values and mean deviation of the 24-2 VF in glaucoma patients. Automated VF estimation from unsegmented OCT could be a solution for patients unable to produce reliable perimetry results.
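The prediction averaging and Pearson correlation reported above can be illustrated with a short sketch. It assumes that each model outputs one MD estimate per eye and that the ensemble is a plain unweighted mean; the abstract does not state the weighting. The function names and the numbers are hypothetical and are not data from the study.

```python
import math

def average_predictions(per_model_predictions):
    """Unweighted element-wise mean over models.

    `per_model_predictions` is a list with one list of predictions per
    model; all inner lists must have the same length (one value per eye).
    """
    n_models = len(per_model_predictions)
    return [sum(values) / n_models for values in zip(*per_model_predictions)]

def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical MD estimates (dB) from three models for four eyes.
predictions = [
    [-2.0, -8.5, -15.0, -1.0],   # e.g. a model trained on 3.5 mm scans
    [-2.5, -7.5, -14.0, -0.5],   # e.g. a model trained on 4.1 mm scans
    [-1.5, -9.0, -16.0, -1.5],   # e.g. a model trained on 4.7 mm scans
]
measured_md = [-1.8, -9.2, -13.5, -0.3]  # hypothetical perimetry values

ensemble = average_predictions(predictions)
print(ensemble, pearson_r(ensemble, measured_md))
```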

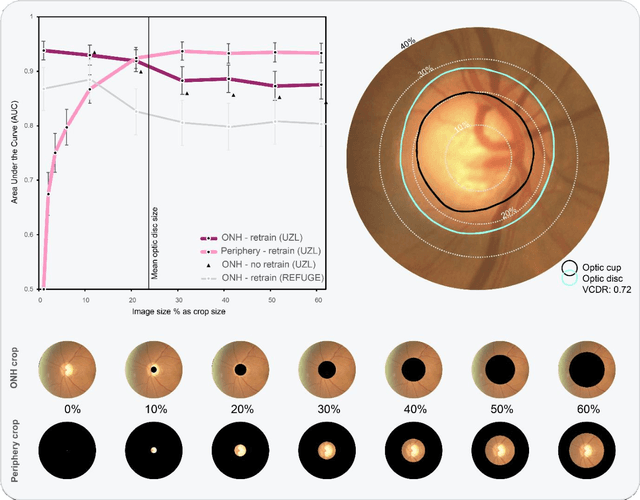

Glaucoma detection beyond the optic disc: The importance of the peripapillary region using explainable deep learning

Mar 22, 2021

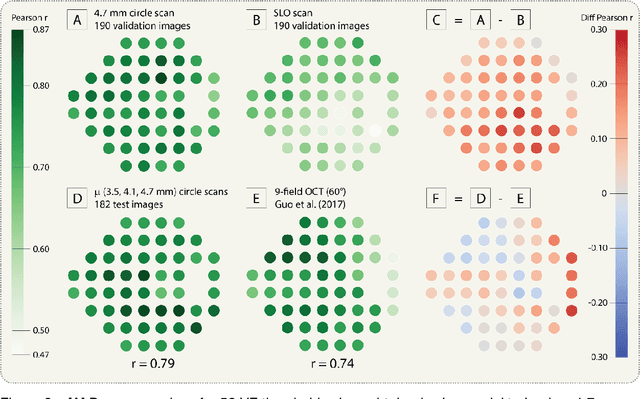

Abstract: Today, a large number of glaucoma cases remain undetected, resulting in irreversible blindness. In a quest for cost-effective screening, deep learning-based methods are being evaluated to detect glaucoma from color fundus images. Although unprecedented sensitivity and specificity values are reported, recent deep learning models for glaucoma detection lack decision transparency. Here, we propose a methodology that advances explainable deep learning in the field of glaucoma detection and estimation of the vertical cup-disc ratio (VCDR), an important risk factor. We trained and evaluated a total of 64 deep learning models using fundus images subjected to a defined cropping policy. We defined the circular crop radius as a percentage of image size, centered on the optic nerve head (ONH), with an equidistantly spaced range from 10% to 60% (ONH crop policy). The inverse of the cropping mask was also applied to quantify the performance of models trained on ONH information exclusively (periphery crop policy). Models evaluated on original images achieved an area under the curve (AUC) of 0.94 [95% CI: 0.92-0.96] for glaucoma detection and a coefficient of determination (R^2) of 77% [95% CI: 0.77-0.79] for VCDR estimation. Models trained on images in which the ONH is absent still obtain significant performance (AUC of 0.88 [95% CI: 0.85-0.90] for glaucoma detection and R^2 of 37% [95% CI: 0.35-0.40] for VCDR estimation in the most extreme setup of 60% ONH crop). We validated our glaucoma detection models on a recent public data set (REFUGE) that contains images captured with a different camera, still achieving an AUC of 0.80 [95% CI: 0.76-0.84] when the ONH crop policy of 60% image size was applied. Our findings provide the first irrefutable evidence that deep learning can detect glaucoma from fundus image regions outside the ONH.
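The two cropping policies can be sketched with a circular mask. This is an illustration of one reading of the abstract, not the authors' code: it assumes that the ONH crop policy blacks out a disc centered on the ONH, that the periphery crop policy blacks out everything outside that disc, and that "image size" means image width. The function names and the ONH center coordinates are hypothetical.

```python
import numpy as np

def circular_mask(shape, center, radius_pct):
    """Boolean mask that is True inside a circle around `center`.

    Assumption: the radius is `radius_pct` percent of the image width;
    the abstract only says "a percentage of image size".
    """
    height, width = shape
    cy, cx = center
    radius = radius_pct / 100.0 * width
    yy, xx = np.ogrid[:height, :width]
    return (yy - cy) ** 2 + (xx - cx) ** 2 <= radius ** 2

def apply_crop(image, center, radius_pct, policy):
    """Return a copy of `image` with one region set to zero.

    policy="onh":       remove the disc around the ONH, keep the periphery.
    policy="periphery": remove the periphery, keep the disc around the ONH.
    """
    inside = circular_mask(image.shape[:2], center, radius_pct)
    if policy == "onh":
        removed = inside
    elif policy == "periphery":
        removed = ~inside
    else:
        raise ValueError(f"unknown policy: {policy!r}")
    out = image.copy()
    out[removed] = 0  # a 2D mask zeroes all channels of the selected pixels
    return out

# Synthetic 100x100 RGB image with a hypothetical ONH center at (50, 50).
image = np.ones((100, 100, 3))
onh_removed = apply_crop(image, (50, 50), 10, "onh")
periphery_removed = apply_crop(image, (50, 50), 10, "periphery")
```

Since the two masks are complementary, every pixel survives in exactly one of the two outputs, which is what allows the contribution of each region to be assessed separately.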

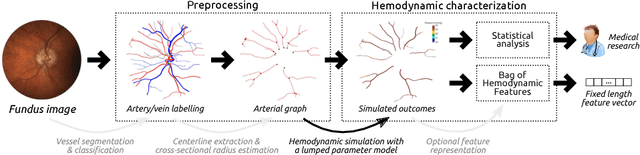

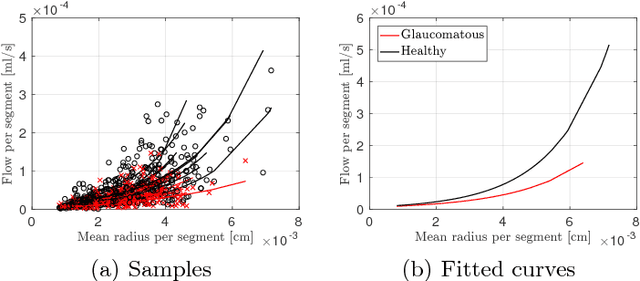

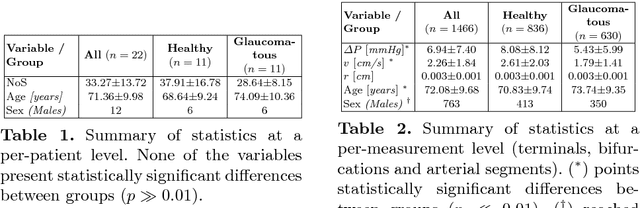

Towards a glaucoma risk index based on simulated hemodynamics from fundus images

Oct 25, 2018

Abstract: Glaucoma is the leading cause of irreversible but preventable blindness in the world. Its major treatable risk factor is intraocular pressure, although other biomarkers are being explored to improve the understanding of the pathophysiology of the disease. It has recently been observed that glaucoma induces changes in ocular hemodynamics. However, its effects on the functional behavior of the retinal arterioles have not yet been studied. In this paper we propose a first approach for characterizing those changes using computational hemodynamics. The retinal blood flow is simulated using a 0D model for a steady, incompressible, non-Newtonian fluid in rigid domains. The simulation is performed on patient-specific arterial trees extracted from fundus images. We also propose a novel feature representation technique that condenses the outcomes of the simulation stage into a fixed-length feature vector that can be used for classification studies. Our experiments on a new database of fundus images show that our approach is able to capture representative changes in the hemodynamics of glaucomatous patients. Code and data are publicly available at https://ignaciorlando.github.io.
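To give a sense of what a 0D (lumped-parameter) description of steady flow in rigid vessels involves, the sketch below uses Poiseuille's law with a constant viscosity. This is a deliberate Newtonian simplification and does not reproduce the non-Newtonian model of the paper; all function names and numeric values are hypothetical.

```python
import math

def poiseuille_resistance(length, radius, viscosity):
    """Hydraulic resistance of a rigid cylindrical segment (Poiseuille's law).

    Assumption: constant viscosity, i.e. a Newtonian fluid. The paper
    describes a non-Newtonian fluid, which is not modeled here.
    """
    return 8.0 * viscosity * length / (math.pi * radius ** 4)

def series(*resistances):
    """Equivalent resistance of segments connected end to end."""
    return sum(resistances)

def parallel(*resistances):
    """Equivalent resistance of branches sharing inlet and outlet."""
    return 1.0 / sum(1.0 / r for r in resistances)

def flow(pressure_drop, resistance):
    """Steady flow rate through a resistance for a given pressure drop."""
    return pressure_drop / resistance

# Hypothetical bifurcation: one parent segment feeding two daughters.
mu = 0.004                                        # Pa*s, assumed viscosity
parent = poiseuille_resistance(1e-3, 60e-6, mu)   # 1 mm long, 60 um radius
left = poiseuille_resistance(1e-3, 45e-6, mu)
right = poiseuille_resistance(1e-3, 40e-6, mu)

total = series(parent, parallel(left, right))
print(flow(1000.0, total))                        # m^3/s for a 1000 Pa drop
```

Because resistance scales with the inverse fourth power of the radius, small differences in vessel caliber measured on a fundus image translate into large differences in simulated flow.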
