Jingping Shi

Layer-Wise Adaptive Updating for Few-Shot Image Classification

Jul 16, 2020

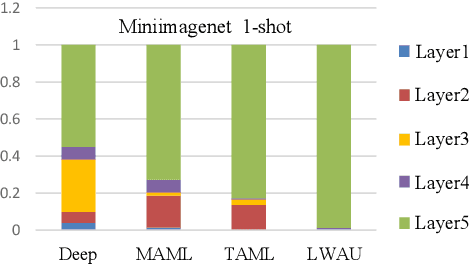

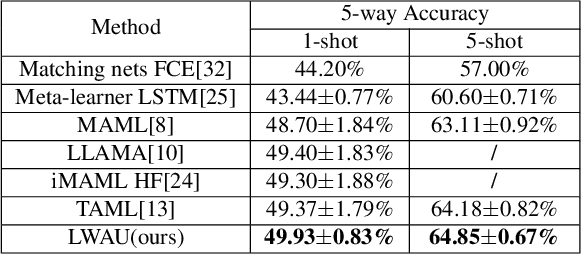

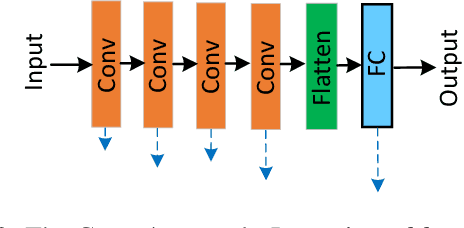

Abstract: Few-shot image classification (FSIC), which requires a model to recognize new categories by learning from only a few images of those categories, has attracted considerable attention. Recently, meta-learning based methods have emerged as a promising direction for FSIC. Commonly, they train a meta-learner (meta-learning model) to learn weights that are easy to fine-tune, so that when solving an FSIC task, the meta-learner efficiently fine-tunes itself into a task-specific model by updating on the few images of that task. In this paper, we propose a novel meta-learning based layer-wise adaptive updating (LWAU) method for FSIC. LWAU is inspired by an interesting finding: compared with common deep models, the meta-learner pays much more attention to updating its top layer when learning from few images. Based on this finding, we assume that the meta-learner may greatly prefer updating its top layer over updating its bottom layers for better FSIC performance. Therefore, in LWAU, the meta-learner is trained to learn not only an easy-to-fine-tune model but also its preferred layer-wise adaptive updating rule, which improves its learning efficiency. Extensive experiments show that, with the layer-wise adaptive updating rule, the proposed LWAU: 1) outperforms existing few-shot classification methods by a clear margin; 2) learns from few images at least 5 times more efficiently than existing meta-learners when solving FSIC.
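As a rough illustration of what a layer-wise updating rule could look like, the sketch below applies one fine-tuning step with a separate step size per layer. It is one plausible reading of the abstract, not the paper's implementation: the function name `layerwise_update`, the layer names, and all numeric values are hypothetical, and in LWAU the per-layer rule would be learned during meta-training rather than set by hand.

```python
from typing import Dict, List

Params = Dict[str, List[float]]


def layerwise_update(params: Params, grads: Params,
                     layer_lrs: Dict[str, float]) -> Params:
    """One gradient step that uses a separate step size for each layer."""
    return {
        name: [w - layer_lrs[name] * g for w, g in zip(weights, grads[name])]
        for name, weights in params.items()
    }


# Hypothetical two-layer model; weights and gradients are made-up numbers.
params = {"bottom": [1.0, 2.0], "top": [1.0, 2.0]}
grads = {"bottom": [0.5, 0.5], "top": [0.5, 0.5]}

# Assumed step sizes that favor the top layer, mirroring the abstract's
# finding that the meta-learner prefers to update its top layer.
layer_lrs = {"bottom": 0.0, "top": 1.0}

adapted = layerwise_update(params, grads, layer_lrs)
```

With these assumed step sizes, the bottom layer is left unchanged while the top layer moves along its gradient, which is the kind of asymmetric update the abstract describes.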

Rethink and Redesign Meta learning

Dec 24, 2018

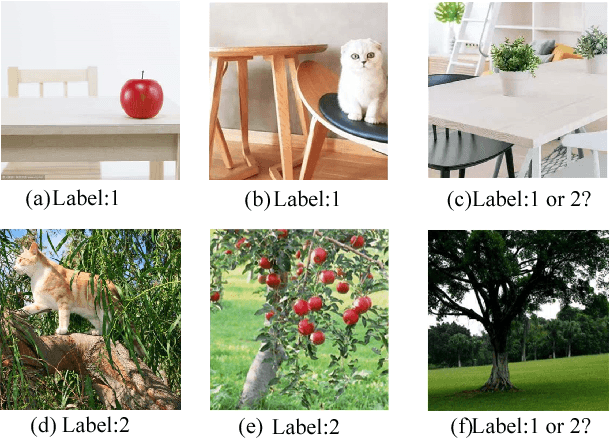

Abstract: Recently, meta-learning has been shown to be a promising way to improve the ability to learn from few data in many computer vision tasks. However, existing meta-learning approaches still fall far behind humans, and like many deep learning algorithms, they also suffer from overfitting. We name this the Task-Over-Fitting (TOF) problem: the meta-learner over-fits to the training tasks rather than to the training data. Humans can learn from few data mainly because we leverage past knowledge to rapidly understand images of new categories. Furthermore, benefiting from our flexible attention mechanism, we can accurately extract and select key features from images and thus solve few-shot learning tasks with excellent performance. In this paper, we rethink the meta-learning algorithm and find that existing meta-learning approaches fail to consider the attention mechanism and past knowledge. To this end, we present a novel meta-learning paradigm with three developments that introduce the attention mechanism and past knowledge step by step. In this way, we narrow the problem space and improve the performance of meta-learning, and the TOF problem is also significantly reduced. Extensive experiments demonstrate the effectiveness of our design and methods, with state-of-the-art performance not only on several few-shot learning benchmarks but also on the Cross-Entropy across Tasks (CET) metric.
