Jianfeng Deng

A Novel Generative Model with Causality Constraint for Mitigating Biases in Recommender Systems

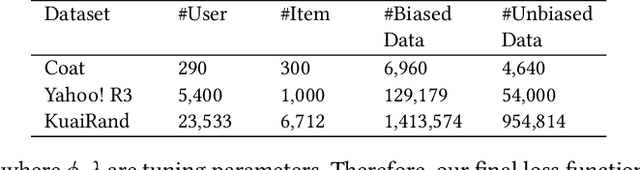

May 22, 2025

Abstract: Accurately predicting counterfactual user feedback is essential for building effective recommender systems. However, latent confounding bias can obscure the true causal relationship between user feedback and item exposure, ultimately degrading recommendation performance. Existing causal debiasing approaches often rely on strong assumptions, such as the availability of instrumental variables (IVs) or strong correlations between latent confounders and proxy variables, that are rarely satisfied in real-world scenarios. To address these limitations, we propose a novel generative framework called Latent Causality Constraints for Debiasing representation learning in Recommender Systems (LCDR). Specifically, LCDR leverages an identifiable Variational Autoencoder (iVAE) as a causal constraint to align the latent representations learned by a standard Variational Autoencoder (VAE) through a unified loss function. This alignment allows the model to leverage even weak or noisy proxy variables to recover latent confounders effectively. The resulting representations are then used to improve recommendation performance. Extensive experiments on three real-world datasets demonstrate that LCDR consistently outperforms existing methods in both mitigating bias and improving recommendation accuracy.

Mitigating Dual Latent Confounding Biases in Recommender Systems

Oct 16, 2024

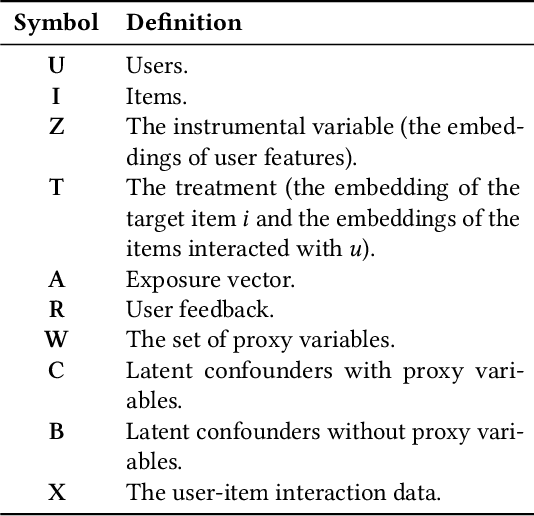

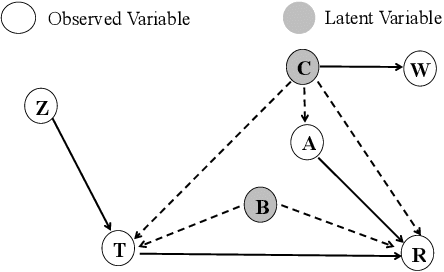

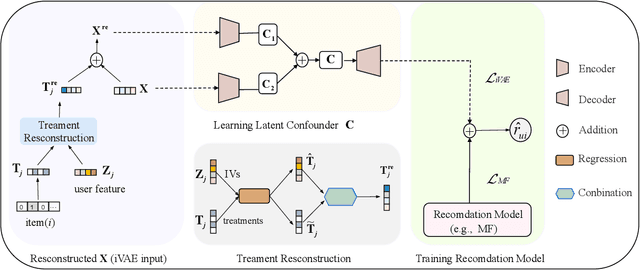

Abstract: Recommender systems are extensively utilised across various areas to predict user preferences for personalised experiences and enhanced user engagement and satisfaction. Traditional recommender systems, however, are complicated by confounding bias, particularly in the presence of latent confounders that affect both item exposure and user feedback. Existing debiasing methods often fail to capture the complex interactions caused by latent confounders in interaction data, especially when dual latent confounders affect both the user and item sides. To address this, we propose a novel debiasing method that jointly integrates the Instrumental Variables (IV) approach and identifiable Variational Auto-Encoder (iVAE) for Debiased representation learning in Recommendation systems, referred to as IViDR. Specifically, IViDR leverages the embeddings of user features as IVs to address confounding bias caused by latent confounders between items and user feedback, and reconstructs the embedding of items to obtain debiased interaction data. Moreover, IViDR employs an Identifiable Variational Auto-Encoder (iVAE) to infer identifiable representations of latent confounders between item exposure and user feedback from both the original and debiased interaction data. Additionally, we provide theoretical analyses of the soundness of using IV and the identifiability of the latent representations. Extensive experiments on both synthetic and real-world datasets demonstrate that IViDR outperforms state-of-the-art models in reducing bias and providing reliable recommendations.

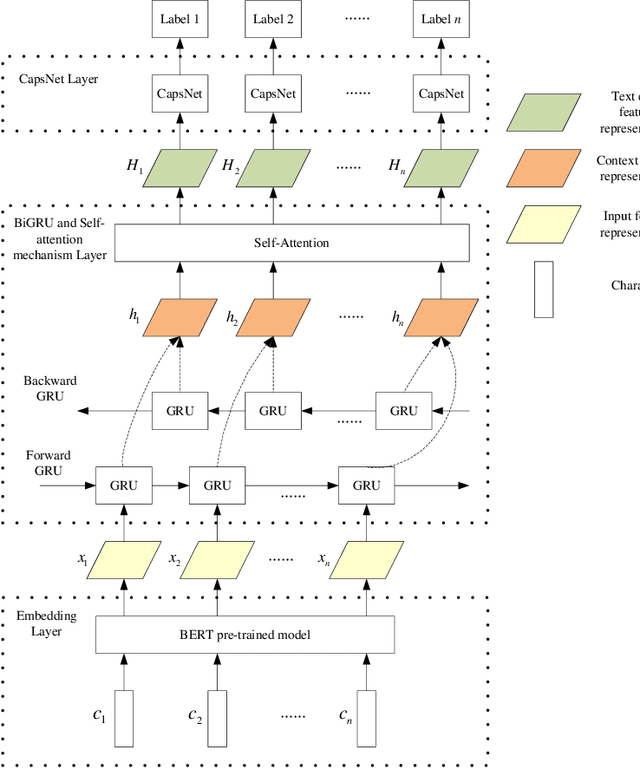

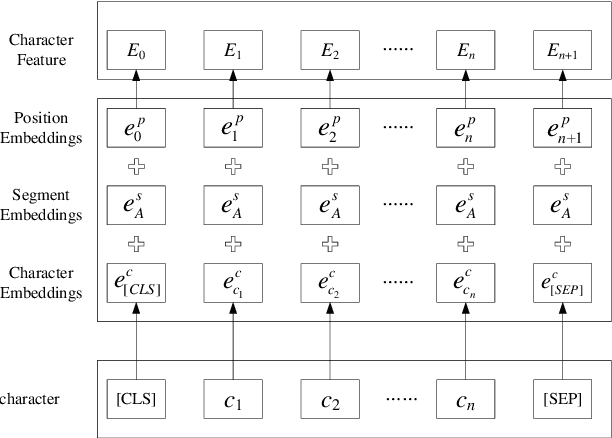

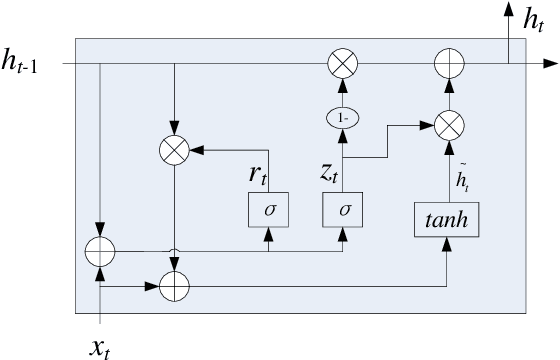

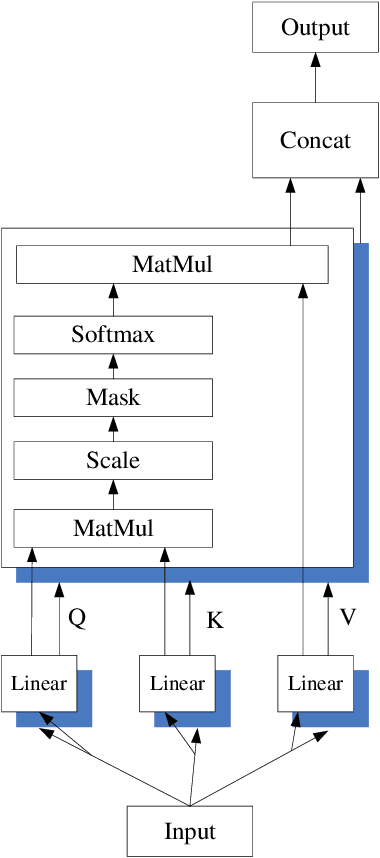

Self-attention-based BiGRU and capsule network for named entity recognition

Jan 30, 2020

Abstract: Named entity recognition (NER) is one of the tasks of natural language processing (NLP). To address the weak representation ability of traditional character representations and the inability of neural network methods to capture important sequence information, a self-attention-based bidirectional gated recurrent unit (BiGRU) and capsule network (CapsNet) model for NER is proposed. This model generates character vectors through the Bidirectional Encoder Representations from Transformers (BERT) pre-trained model. BiGRU is used to capture sequence context features, and a self-attention mechanism is applied to weight the information captured by the hidden layer of the BiGRU. Finally, CapsNet is used for entity recognition. We evaluated the recognition performance of the model on two datasets. Experimental results show that the model has better performance without relying on external dictionary information.
