Jiafan Lu

IESR: Efficient MCTS-Based Modular Reasoning for Text-to-SQL with Large Language Models

Feb 05, 2026

Abstract:Text-to-SQL is a key natural language processing task that maps natural language questions to SQL queries, enabling intuitive interaction with web-based databases. Although current methods perform well on benchmarks like BIRD and Spider, they struggle with complex reasoning, domain knowledge, and hypothetical queries, and remain costly in enterprise deployment. To address these issues, we propose IESR (Information Enhanced Structured Reasoning), a framework for lightweight large language models that (i) leverages LLMs for key information understanding and schema linking while decoupling mathematical computation from SQL generation, (ii) integrates a multi-path reasoning mechanism based on Monte Carlo Tree Search (MCTS) with majority voting, and (iii) introduces a trajectory consistency verification module with a discriminator model to ensure accuracy and consistency. Experimental results demonstrate that IESR achieves state-of-the-art performance on the complex reasoning benchmark LogicCat (24.28 EX) and the Archer dataset (37.28 EX) using only compact models without fine-tuning. Furthermore, our analysis reveals that current coder models exhibit notable biases and deficiencies in physical knowledge, mathematical computation, and common-sense reasoning, highlighting important directions for future research. The code is released at https://github.com/Ffunkytao/IESR-SLM.
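The majority-voting step mentioned in the abstract can be illustrated with a small sketch. This is not the IESR implementation: it assumes the candidate queries have already been produced by some multi-path search, and it votes on execution results rather than on SQL text. The function names `execute` and `majority_vote`, the `items` table, and the candidate queries are all hypothetical.

```python
import sqlite3
from collections import Counter

def execute(conn, sql):
    """Run a query; return a hashable, order-insensitive result, or None on error."""
    try:
        rows = conn.execute(sql).fetchall()
    except sqlite3.Error:
        return None
    return tuple(sorted(rows, key=repr))

def majority_vote(conn, candidates):
    """Return the first candidate whose execution result is the most common one.

    Candidates that fail to execute do not vote. Returns None if none execute.
    """
    results = [execute(conn, sql) for sql in candidates]
    counts = Counter(r for r in results if r is not None)
    if not counts:
        return None
    winner, _ = counts.most_common(1)[0]
    for sql, result in zip(candidates, results):
        if result == winner:
            return sql

# Hypothetical toy database and candidate queries, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE items (id INTEGER, weight REAL)")
conn.executemany("INSERT INTO items VALUES (?, ?)", [(1, 1.5), (2, 2.5), (3, 3.0)])

candidates = [
    "SELECT COUNT(*) FROM items WHERE weight > 2",
    "SELECT COUNT(id) FROM items WHERE weight > 2.0",
    "SELECT COUNT(*) FROM items WHERE weight >= 1.5",
    "SELECT COUNT(*) FROM item",  # invalid table name, so it casts no vote
]
chosen = majority_vote(conn, candidates)
print(chosen)
```

Voting on execution results lets syntactically different but equivalent queries support each other, which is one common way to apply self-consistency to Text-to-SQL; whether IESR votes this way is not stated in the abstract.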

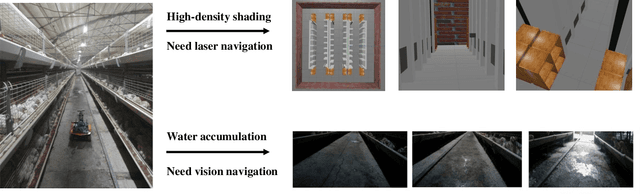

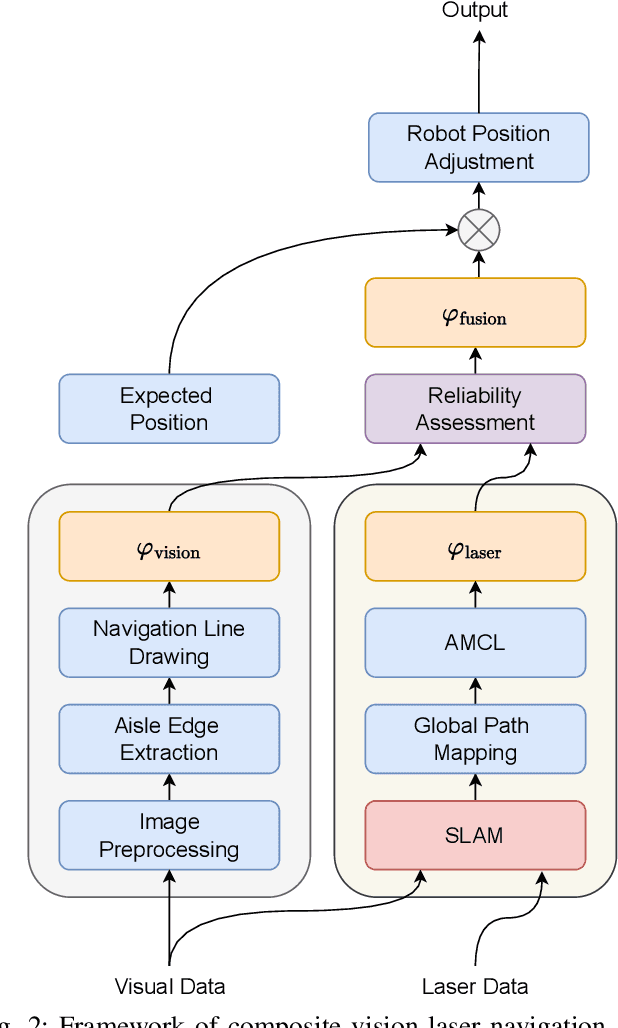

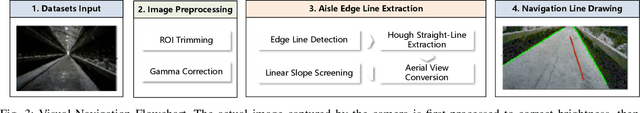

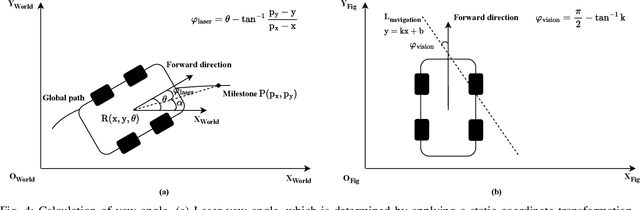

The Composite Visual-Laser Navigation Method Applied in Indoor Poultry Farming Environments

Apr 11, 2025

Abstract:Indoor poultry farms require inspection robots to maintain precise environmental control, which is crucial for preventing the rapid spread of disease and large-scale bird mortality. However, the complex conditions within these facilities, characterized by areas of intense illumination and water accumulation, pose significant challenges. Traditional navigation methods that rely on a single sensor often perform poorly in such environments, resulting in issues like laser drift and inaccuracies in visual navigation line extraction. To overcome these limitations, we propose a novel composite navigation method that integrates both laser and vision technologies. This approach dynamically computes a fused yaw angle based on the real-time reliability of each sensor modality, thereby eliminating the need for physical navigation lines. Experimental validation in actual poultry house environments demonstrates that our method not only resolves the inherent drawbacks of single-sensor systems, but also significantly enhances navigation precision and operational efficiency. As such, it presents a promising solution for improving the performance of inspection robots in complex indoor poultry farming settings.
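As a rough illustration of fusing a yaw angle from two sensors according to their reliability, the sketch below uses a reliability-weighted circular mean. The abstract does not give the paper's actual fusion rule, so this is only one plausible form; the function `fuse_yaw` and the reliability scores `rel_laser` and `rel_vision` (non-negative numbers supplied by the caller) are assumptions.

```python
import math

def fuse_yaw(yaw_laser, yaw_vision, rel_laser, rel_vision):
    """Fuse two yaw estimates (radians) using a reliability-weighted circular mean.

    rel_laser and rel_vision are hypothetical non-negative reliability scores.
    Averaging on the unit circle avoids errors near the +/- pi wrap-around.
    """
    total = rel_laser + rel_vision
    if total <= 0:
        raise ValueError("at least one sensor must have positive reliability")
    w_laser = rel_laser / total
    w_vision = rel_vision / total
    # Weighted sum of unit vectors, then convert back to an angle.
    s = w_laser * math.sin(yaw_laser) + w_vision * math.sin(yaw_vision)
    c = w_laser * math.cos(yaw_laser) + w_vision * math.cos(yaw_vision)
    return math.atan2(s, c)

# Equal trust in both sensors gives the midpoint of the two headings.
print(fuse_yaw(0.1, 0.3, 1.0, 1.0))
# A fully unreliable vision estimate leaves the laser heading unchanged.
print(fuse_yaw(0.1, 0.3, 1.0, 0.0))
```

When one modality degrades, for example vision under intense illumination or laser over standing water, lowering its score shifts the fused heading toward the other sensor without a hard switch.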
