Jesse Murray

RSVQA: Visual Question Answering for Remote Sensing Data

Mar 16, 2020

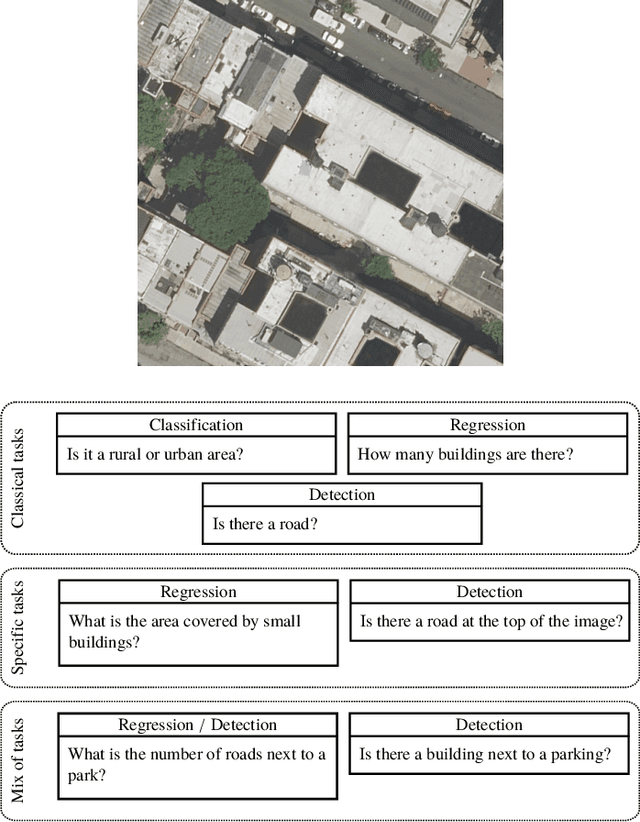

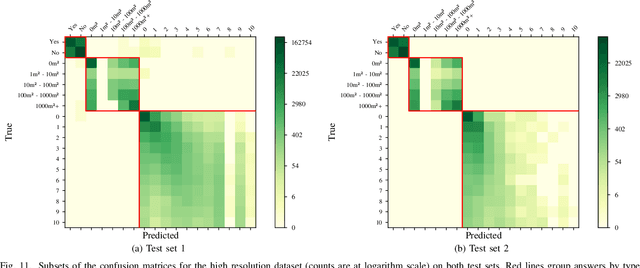

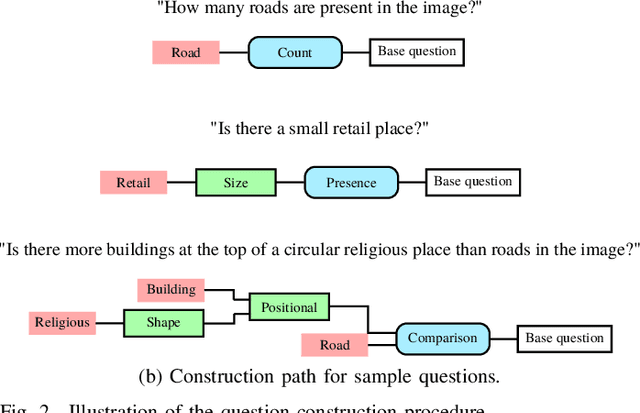

Abstract: This paper introduces the task of visual question answering for remote sensing data (RSVQA). Remote sensing images contain a wealth of information which can be useful for a wide range of tasks including land cover classification, object counting or detection. However, most of the available methodologies are task-specific, thus inhibiting generic and easy access to the information contained in remote sensing data. As a consequence, accurate remote sensing product generation still requires expert knowledge. With RSVQA, we propose a system to extract information from remote sensing data that is accessible to every user: we use questions formulated in natural language and use them to interact with the images. With the system, images can be queried to obtain high level information specific to the image content or relational dependencies between objects visible in the images. Using an automatic method introduced in this article, we built two datasets (using low and high resolution data) of image/question/answer triplets. The information required to build the questions and answers is queried from OpenStreetMap (OSM). The datasets can be used to train (when using supervised methods) and evaluate models to solve the RSVQA task. We report the results obtained by applying a model based on Convolutional Neural Networks (CNNs) for the visual part and on a Recurrent Neural Network (RNN) for the natural language part to this task. The model is trained on the two datasets, yielding promising results in both cases.

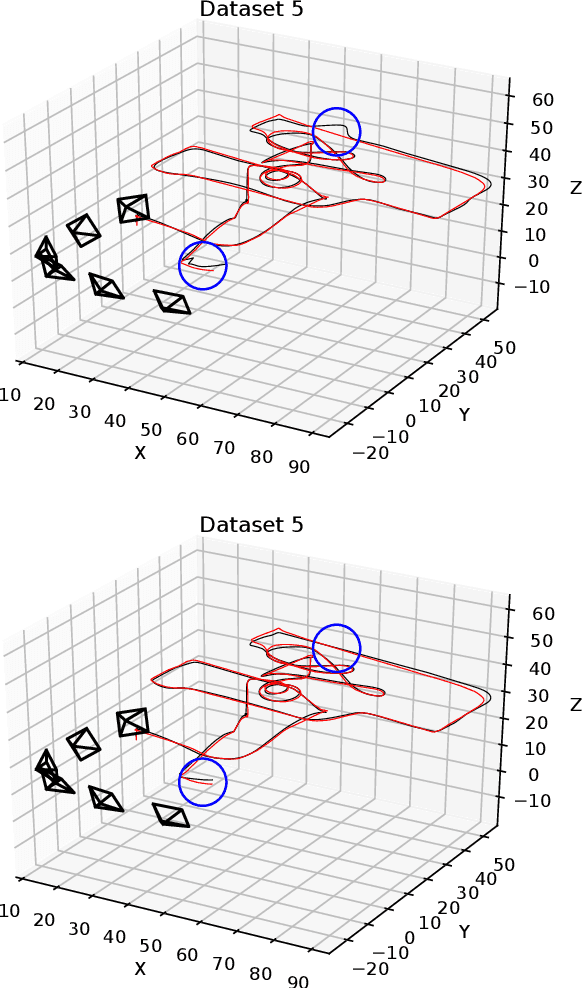

Reconstruction of 3D flight trajectories from ad-hoc camera networks

Mar 10, 2020

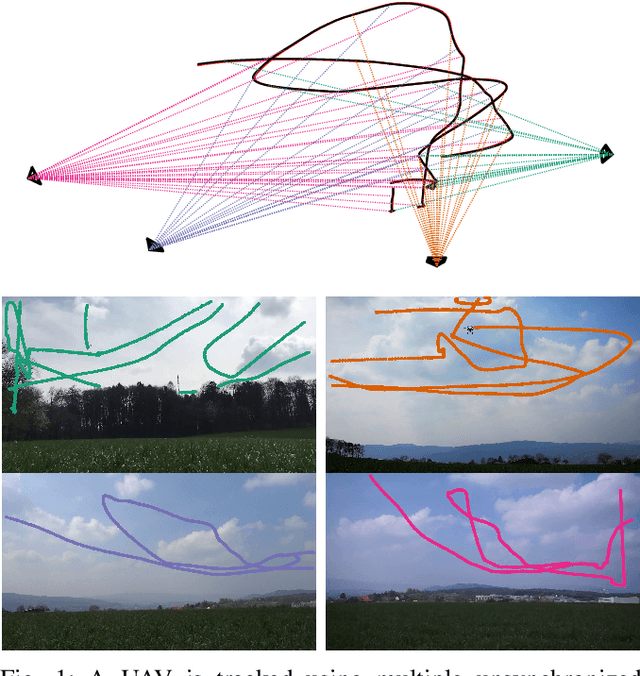

Abstract: We present a method to reconstruct the 3D trajectory of an airborne robotic system only from videos recorded with cameras that are unsynchronized, may feature rolling shutter distortion, and whose viewpoints are unknown. Our approach enables robust and accurate outside-in tracking of dynamically flying targets, with cheap and easy-to-deploy equipment. We show that, in spite of the weakly constrained setting, recent developments in computer vision make it possible to reconstruct trajectories in 3D from unsynchronized, uncalibrated networks of consumer cameras, and validate the proposed method in a realistic field experiment. We make our code available along with the data, including cm-accurate ground-truth from differential GNSS navigation.
