Jeny Rajan

Attention-effective multiple instance learning on weakly stem cell colony segmentation

Mar 09, 2022

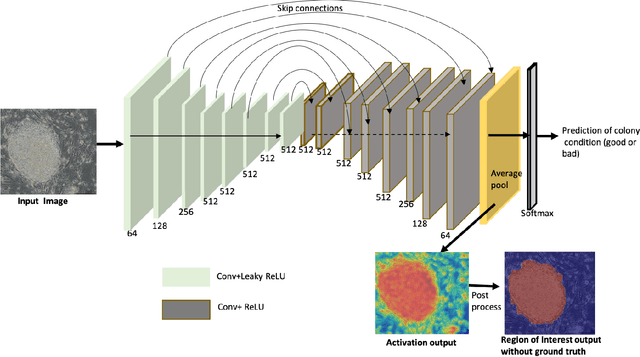

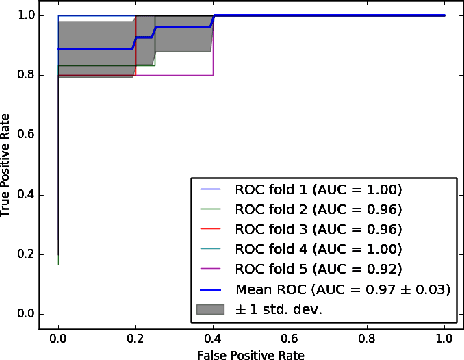

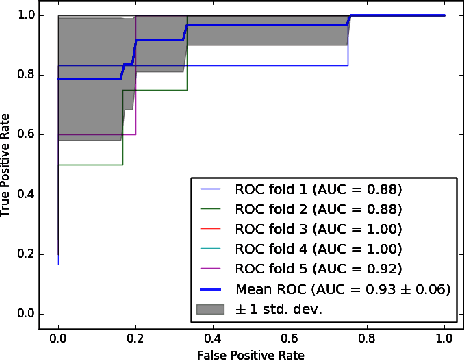

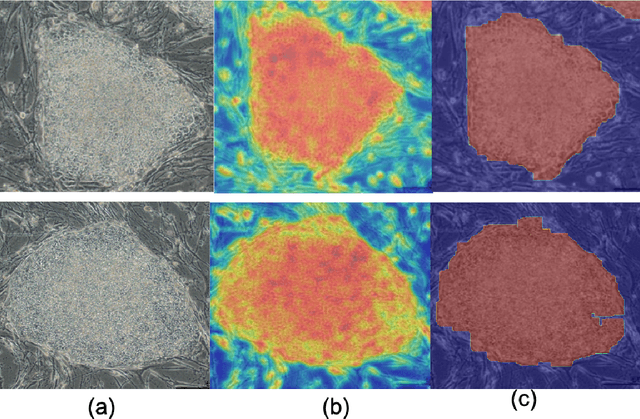

Abstract: The detection of induced pluripotent stem cell (iPSC) colonies often requires precise extraction of colony features. However, existing computerized systems that rely on contour segmentation by preprocessing to classify colony conditions are task-intensive. To maximize efficiency in categorizing colony conditions, we propose a multiple instance learning (MIL) approach in weakly supervised settings. It is designed as a single model that produces weak segmentation and classification of colonies without using finely labeled samples. As the single model, we employ a U-net-like convolutional neural network (CNN) trained on binary image-level labels for MIL colony classification. Furthermore, to specify the object of interest, we use a simple post-processing method. The proposed approach is compared with conventional methods using five-fold cross-validation and receiver operating characteristic (ROC) curves. The maximum accuracy of the MIL-net is 95%, which is 15% higher than that of the conventional methods. Furthermore, the ability to interpret the location of iPSC colonies from the image-level label, without a pixel-wise ground truth image, makes the approach more appealing and cost-effective for colony condition recognition.
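The MIL idea in this abstract can be illustrated with a minimal sketch. It assumes max pooling over per-location instance scores and a fixed threshold of 0.5 as the post-processing step; the abstract does not state which pooling or post-processing the authors use, so the function names `bag_score` and `weak_mask` and the toy score grid are hypothetical.

```python
# Minimal MIL sketch (assumed max pooling; not the paper's exact method).
# Each entry of `scores` is a hypothetical instance score in [0, 1],
# e.g. one value per spatial location of a network's output map.

def bag_score(instance_scores):
    """Pool instance scores into one image-level (bag) score via max."""
    return max(max(row) for row in instance_scores)

def weak_mask(instance_scores, threshold=0.5):
    """Threshold instance scores to obtain a coarse binary mask."""
    return [[1 if s >= threshold else 0 for s in row]
            for row in instance_scores]

# Toy 3x3 score map, invented for illustration only.
scores = [
    [0.1, 0.2, 0.1],
    [0.2, 0.9, 0.7],
    [0.1, 0.6, 0.3],
]

bag_label = int(bag_score(scores) >= 0.5)  # image-level prediction
mask = weak_mask(scores)                   # weak localization of the object

print(bag_label)
print(mask)
```

Only the image-level label would be needed for training in such a setup; the mask falls out of the instance scores as a by-product, which is what makes the weakly supervised setting attractive.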

MobileCaps: A Lightweight Model for Screening and Severity Analysis of COVID-19 Chest X-Ray Images

Aug 19, 2021

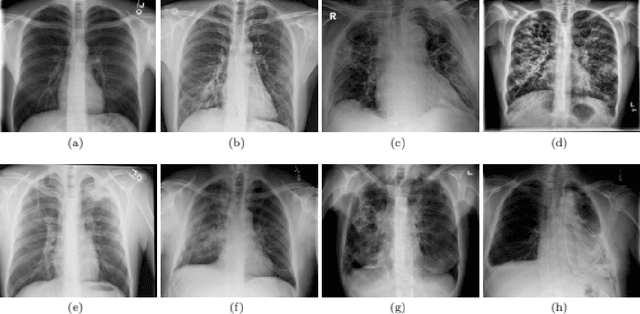

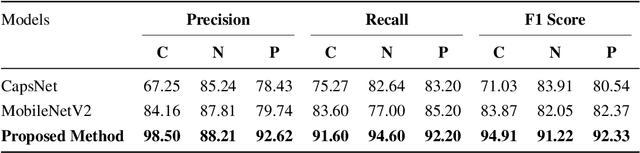

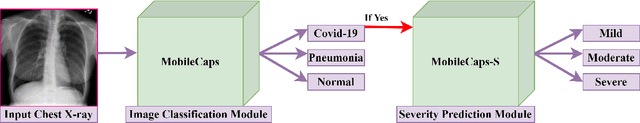

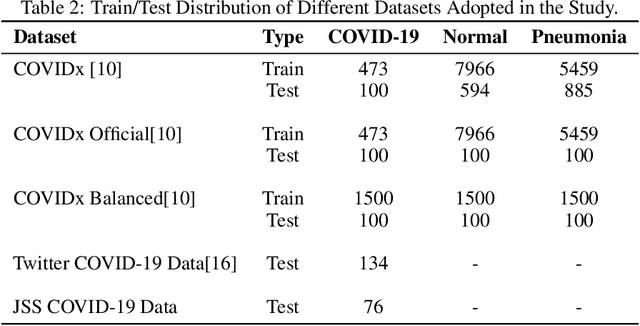

Abstract: The world is going through a challenging phase due to the disastrous effect of the COVID-19 pandemic on the healthcare system and the economy. The rate of spread, post-COVID-19 symptoms, and the emergence of new strains of COVID-19 have disrupted healthcare systems across the globe. As a result, accurately screening COVID-19 cases has become a top priority. Since the virus infects the respiratory system, chest X-ray (CXR) is an imaging modality that is adopted extensively for initial screening. We have performed a comprehensive study that uses CXR images to identify COVID-19 cases and recognized the need for a more generalizable model. We use the MobileNetV2 architecture as the feature extractor and integrate it into Capsule Networks to construct a fully automated and lightweight model termed MobileCaps. MobileCaps is trained and evaluated on a publicly available dataset with model ensembling and Bayesian optimization strategies to efficiently distinguish CXR images of patients with COVID-19 from those of non-COVID-19 pneumonia and healthy cases. The proposed model is further evaluated on two additional RT-PCR confirmed datasets to demonstrate its generalizability. We also introduce MobileCaps-S and use it to assess the severity of COVID-19 in CXR images based on the Radiographic Assessment of Lung Edema (RALE) scoring technique. Our classification model achieved an overall recall of 91.60, 94.60, 92.20, and a precision of 98.50, 88.21, 92.62 for COVID-19, non-COVID-19 pneumonia, and healthy cases, respectively. Further, the severity assessment model attained an R$^2$ coefficient of 70.51. Because the proposed models have fewer trainable parameters than the state-of-the-art models reported in the literature, we believe our models will go a long way in aiding healthcare systems in the battle against the pandemic.
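For readers unfamiliar with how per-class recall and precision figures like those above are derived, here is a minimal sketch that computes them from a confusion matrix. The helper name `per_class_metrics`, the class labels, and the toy counts are assumptions made for illustration; they are not the paper's data and do not reproduce its reported numbers.

```python
# Per-class recall and precision from a confusion matrix.
# Rows are true classes, columns are predicted classes.
# The counts below are invented for illustration only.

def per_class_metrics(confusion, labels):
    """Return {label: {"recall": r, "precision": p}} for each class."""
    metrics = {}
    for i, label in enumerate(labels):
        true_positive = confusion[i][i]
        actual = sum(confusion[i])                    # all samples of class i
        predicted = sum(row[i] for row in confusion)  # all predictions of class i
        metrics[label] = {
            "recall": true_positive / actual if actual else 0.0,
            "precision": true_positive / predicted if predicted else 0.0,
        }
    return metrics

labels = ["covid", "pneumonia", "healthy"]
confusion = [
    [8, 1, 1],  # true covid
    [1, 9, 0],  # true pneumonia
    [1, 0, 9],  # true healthy
]

results = per_class_metrics(confusion, labels)
for label in labels:
    print(label, results[label])
```

Recall answers "of the true cases in this class, how many were found", while precision answers "of the cases predicted as this class, how many were correct", which is why the abstract reports both per class.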

Medical Image Segmentation using 3D Convolutional Neural Networks: A Review

Aug 19, 2021

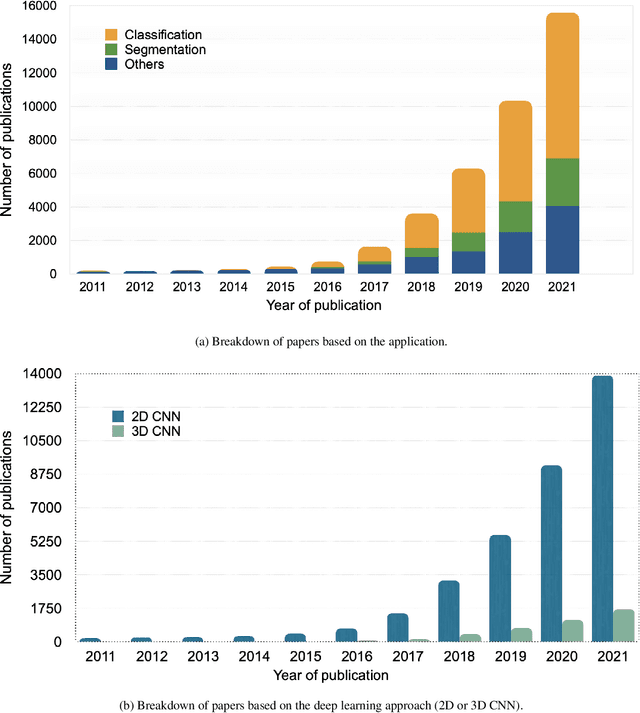

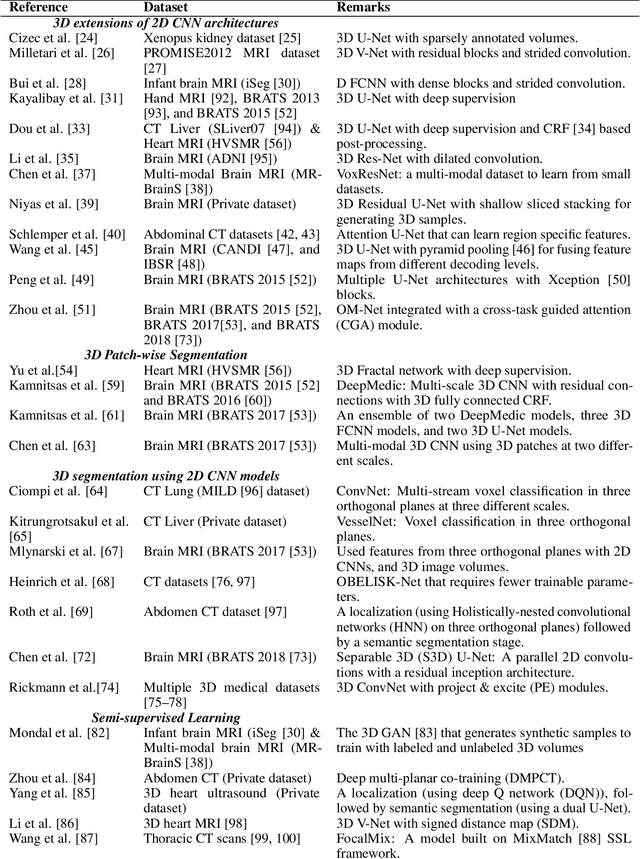

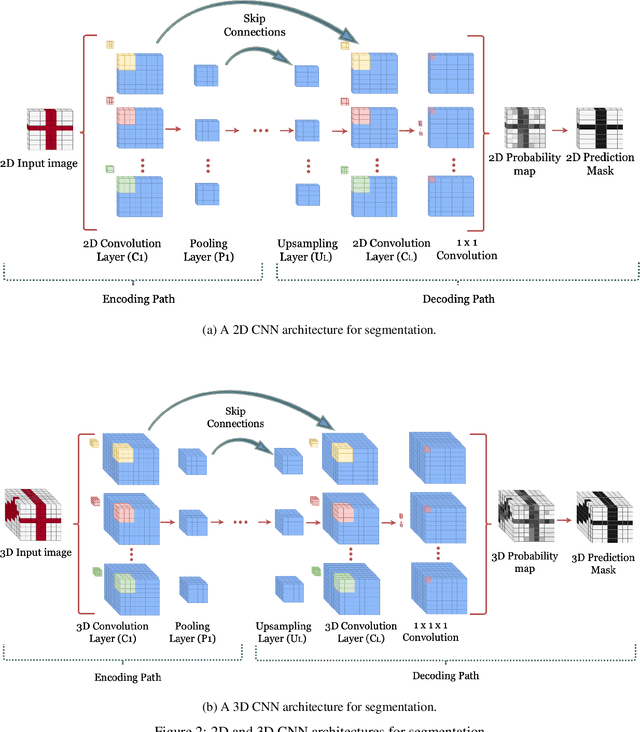

Abstract: Computer-aided medical image analysis plays a significant role in assisting medical practitioners with expert clinical diagnosis and in deciding the optimal treatment plan. At present, convolutional neural networks (CNNs) are the preferred choice for medical image analysis. In addition, with the rapid advancements in three-dimensional (3D) imaging systems and the availability of excellent hardware and software support to process large volumes of data, 3D deep learning methods are gaining popularity in medical image analysis. Here, we present an extensive review of recently developed 3D deep learning methods in medical image segmentation. Furthermore, the research gaps and future directions in 3D medical image segmentation are discussed.

WideCaps: A Wide Attention based Capsule Network for Image Classification

Aug 14, 2021

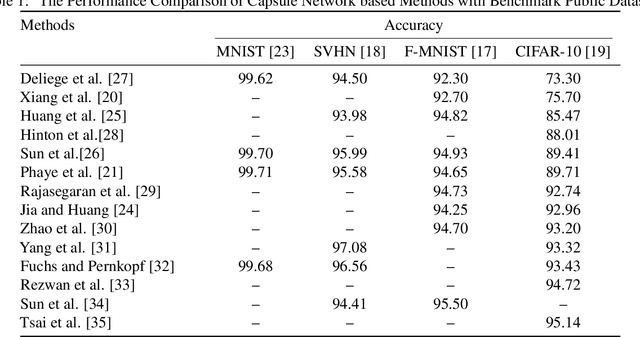

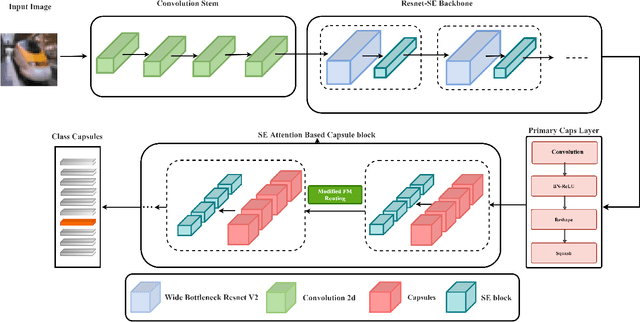

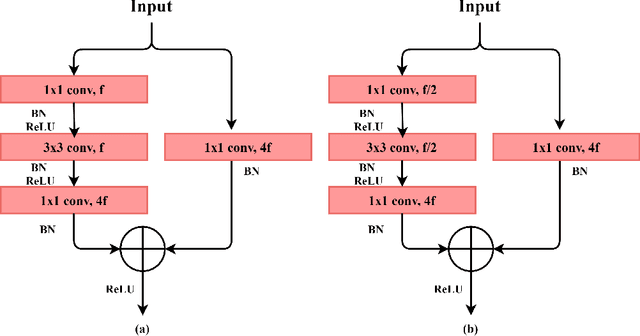

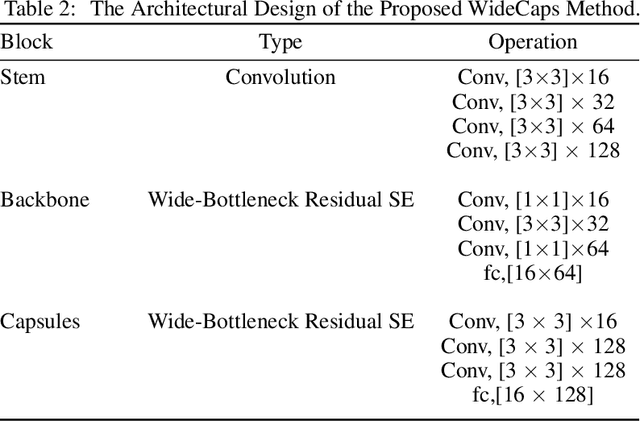

Abstract: The capsule network is a distinct and promising segment of the neural network family that drew attention due to its unique ability to maintain the equivariance property by preserving the spatial relationships among features. The capsule network has attained unprecedented success on image classification tasks with datasets such as MNIST and affNIST by encoding characteristic features into capsules and building a parse-tree structure. However, on datasets involving complex foreground and background regions, such as CIFAR-10, the performance of the capsule network is sub-optimal due to its naive data routing policy and its inability to extract complex features. This paper proposes a new design strategy for capsule network architecture for efficiently dealing with complex images. The proposed method incorporates wide bottleneck residual modules and Squeeze and Excitation attention blocks, supported by a modified FM routing algorithm, to address this problem. A wide bottleneck residual module facilitates the extraction of complex features and is followed by a squeeze and excitation attention block that enables channel-wise attention by suppressing trivial features. This setup models channel inter-dependencies at almost no computational cost, thereby enhancing the representation ability of capsules on complex images. We extensively evaluate the proposed model on three publicly available datasets, namely CIFAR-10, Fashion MNIST, and SVHN; it outperforms the top-5 performance on CIFAR-10 and Fashion MNIST and achieves highly competitive performance on the SVHN dataset.
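The channel-wise attention described above can be sketched in plain Python. This is a generic squeeze and excitation block under stated assumptions, not the WideCaps implementation: the function names, the hand-picked weights, and the tiny 2-channel feature map are all hypothetical, and a real model would learn the weights and operate on tensors.

```python
import math

# Generic squeeze-and-excitation sketch (illustrative, not WideCaps itself).
# A feature map is a list of channels; each channel is a 2D list (H x W).

def squeeze(feature_map):
    """Global average pooling: one scalar per channel."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
            for ch in feature_map]

def dense(vec, weights, bias):
    """Fully connected layer: weights has one row per output unit."""
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]

def excite(pooled, w1, b1, w2, b2):
    """Bottleneck MLP: ReLU on the hidden layer, sigmoid on the gates."""
    hidden = [max(0.0, h) for h in dense(pooled, w1, b1)]
    return [1.0 / (1.0 + math.exp(-g)) for g in dense(hidden, w2, b2)]

def se_block(feature_map, w1, b1, w2, b2):
    """Rescale every channel by its gate in (0, 1)."""
    gates = excite(squeeze(feature_map), w1, b1, w2, b2)
    scaled = [[[g * x for x in row] for row in ch]
              for g, ch in zip(gates, feature_map)]
    return scaled, gates

# Toy input: 2 channels of size 2x2, with hand-picked (not learned) weights.
features = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[2.0, 2.0], [2.0, 2.0]],
]
w1, b1 = [[1.0, 0.0]], [0.0]               # 2 channels -> 1 hidden unit
w2, b2 = [[2.0], [-2.0]], [0.0, 0.0]       # 1 hidden unit -> 2 gates

scaled, gates = se_block(features, w1, b1, w2, b2)
print(gates)
```

With these weights the first channel receives a gate above 0.5 and the second a gate below 0.5, so the second channel is suppressed relative to the first. The extra cost is one pooling pass and two small dense layers, which is consistent with the abstract's remark about low computational overhead.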
