Jedrek Wosik

Evaluating the Performance of ChatGPT for Spam Email Detection

Feb 23, 2024Abstract:Email continues to be a pivotal and extensively utilized communication medium within professional and commercial domains. Nonetheless, the prevalence of spam emails poses a significant challenge for users, disrupting their daily routines and diminishing productivity. Consequently, accurately identifying and filtering spam based on content has become crucial for cybersecurity. Recent advancements in natural language processing, particularly with large language models like ChatGPT, have shown remarkable performance in tasks such as question answering and text generation. However, its potential in spam identification remains underexplored. To fill in the gap, this study attempts to evaluate ChatGPT's capabilities for spam identification in both English and Chinese email datasets. We employ ChatGPT for spam email detection using in-context learning, which requires a prompt instruction and a few demonstrations. We also investigate how the training example size affects the performance of ChatGPT. For comparison, we also implement five popular benchmark methods, including naive Bayes, support vector machines (SVM), logistic regression (LR), feedforward dense neural networks (DNN), and BERT classifiers. Though extensive experiments, the performance of ChatGPT is significantly worse than deep supervised learning methods in the large English dataset, while it presents superior performance on the low-resourced Chinese dataset, even outperforming BERT in this case.

Students Need More Attention: BERT-based AttentionModel for Small Data with Application to AutomaticPatient Message Triage

Jun 22, 2020

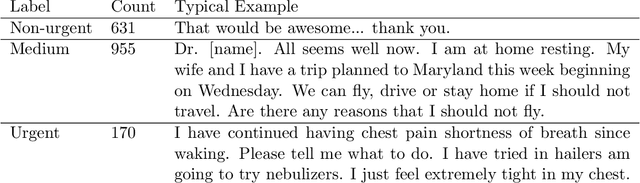

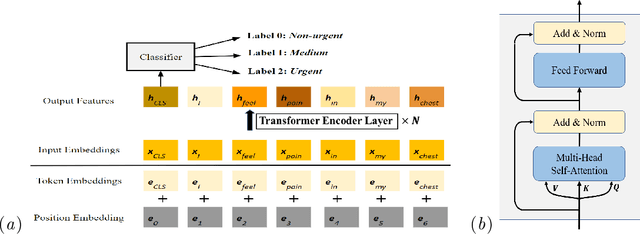

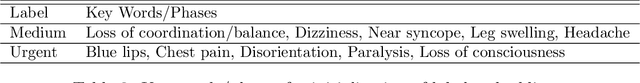

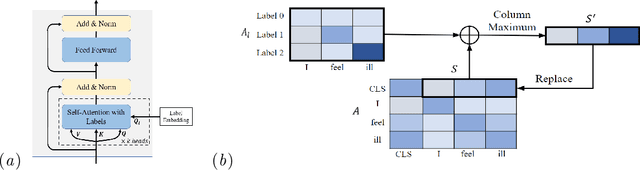

Abstract:Small and imbalanced datasets commonly seen in healthcare represent a challenge when training classifiers based on deep learning models. So motivated, we propose a novel framework based on BioBERT (Bidirectional Encoder Representations from Transformers forBiomedical TextMining). Specifically, (i) we introduce Label Embeddings for Self-Attention in each layer of BERT, which we call LESA-BERT, and (ii) by distilling LESA-BERT to smaller variants, we aim to reduce overfitting and model size when working on small datasets. As an application, our framework is utilized to build a model for patient portal message triage that classifies the urgency of a message into three categories: non-urgent, medium and urgent. Experiments demonstrate that our approach can outperform several strong baseline classifiers by a significant margin of 4.3% in terms of macro F1 score. The code for this project is publicly available at \url{https://github.com/shijing001/text_classifiers}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge